Clear Sky Science

Design and implementation of a 6-DoF robot arm control with object detection based on machine learning using mini microcontroller

Smarter Robot Arms on a Smaller Budget

Imagine a factory robot arm that can spot an object, decide how to grab it, and move smoothly into place—all without relying on a bulky computer or cloud connection. This paper describes exactly that: a six-jointed (6-DoF) robotic arm that sees and thinks using a tiny, low-cost microcontroller. For readers interested in how artificial intelligence is escaping the data center and moving into everyday machines, this work shows how advanced vision and control can run on hardware not much bigger than a USB stick.

Why Shrinking Robot Brains Matters

Traditional industrial robots often depend on powerful external computers to recognize objects and plan motions. That adds cost, wiring, and technical complexity, which can be a barrier for small and medium-sized businesses. The authors set out to prove that a single inexpensive microcontroller could do almost everything: see objects, decide where they are in 3D space, plan how the arm should move, and drive the motors that carry out the task. By keeping processing on-board, the system becomes more portable, more energy-efficient, and easier to deploy in cramped or changing environments, from small factories to teaching labs.

A Lightweight Arm Built from Scratch

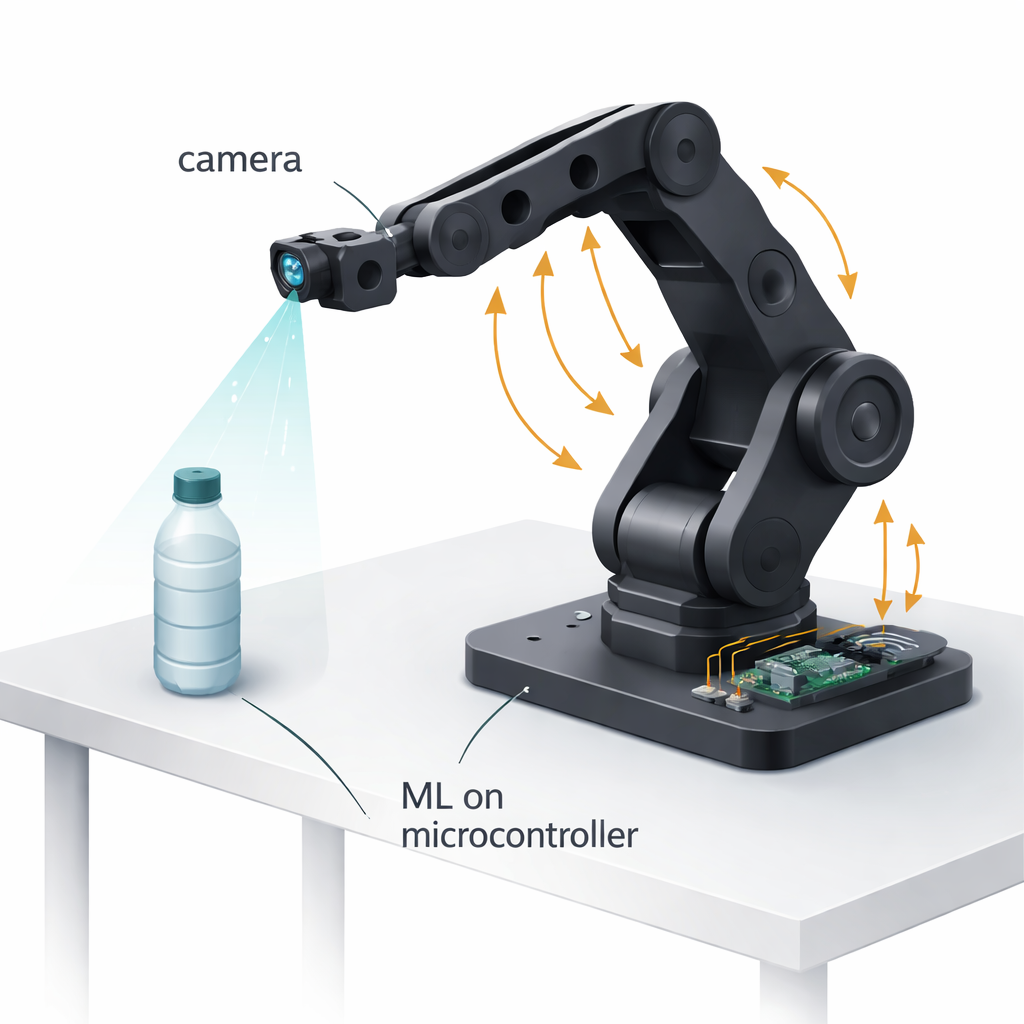

The team designed a six-jointed robotic arm from the ground up using mostly 3D-printed plastic parts to keep it light and affordable. High-torque hobby-style servo motors move each joint, and the researchers modified these motors to report their actual angles in real time, enabling precise closed-loop control. A custom two-finger gripper at the tip includes a simple current sensor: by watching how much electrical current the gripper motor draws, the system can infer how hard it is squeezing and automatically adjust its grip to hold objects firmly without crushing or dropping them. Mounted on the arm’s “wrist” is a small full-HD camera that moves with the arm, giving the system a first-person view of the scene in front of the gripper.
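The current-sensing grip can be pictured as a simple feedback loop: close the fingers in small steps and stop as soon as the motor current jumps, because a jump means the fingers are pressing on something. The Python sketch below illustrates only that idea. The callbacks `read_current_ma` and `step_gripper`, the `SimulatedGripper` test double, and every numeric default (2-degree step, 350 mA threshold) are hypothetical placeholders, not code or values from the paper.

```python
from typing import Callable


def close_until_contact(
    read_current_ma: Callable[[], float],
    step_gripper: Callable[[float], None],
    start_angle: float = 0.0,
    max_angle: float = 90.0,
    step_deg: float = 2.0,
    contact_threshold_ma: float = 350.0,
) -> tuple[float, bool]:
    """Close the gripper stepwise until the motor current crosses a threshold.

    Returns (final_angle, contact_detected). All defaults are hypothetical.
    """
    angle = start_angle
    while angle < max_angle:
        angle = min(angle + step_deg, max_angle)
        step_gripper(angle)  # command the gripper servo to the new angle
        if read_current_ma() >= contact_threshold_ma:
            # A current rise means the fingers are pushing on an object:
            # stop here so the grip is firm but does not keep squeezing.
            return angle, True
    return angle, False  # fully closed without contact: nothing grasped


class SimulatedGripper:
    """Hypothetical stand-in: current rises once the fingers reach the object."""

    def __init__(self, contact_angle: float) -> None:
        self.contact_angle = contact_angle
        self.angle = 0.0

    def step(self, angle: float) -> None:
        self.angle = angle

    def current_ma(self) -> float:
        return 400.0 if self.angle >= self.contact_angle else 120.0


sim = SimulatedGripper(contact_angle=30.0)
print(close_until_contact(sim.current_ma, sim.step))  # prints (30.0, True)
```

On real hardware the current signal is noisy, so a practical version would likely filter or debounce the readings before comparing them with the threshold.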

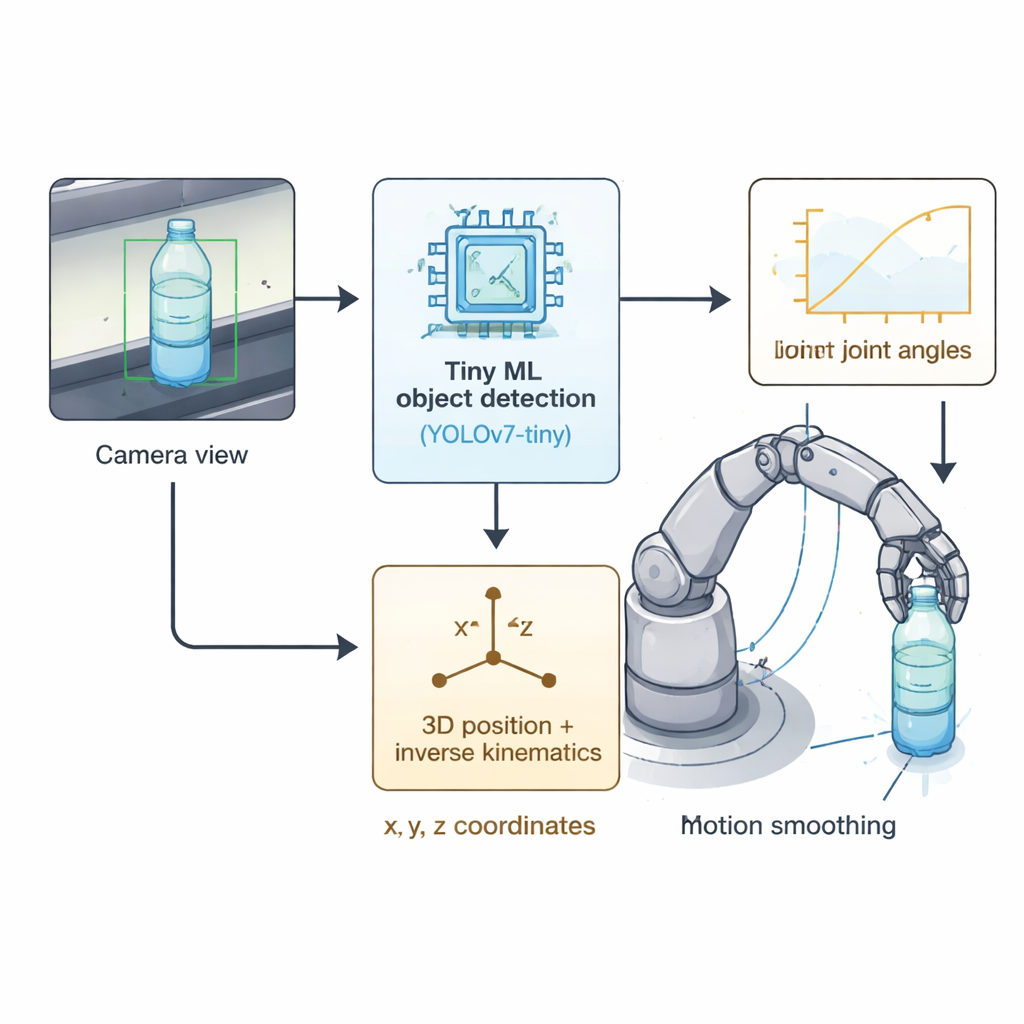

Teaching a Tiny Chip to See Bottles

At the heart of the system’s vision is YOLOv7-tiny, a compact version of the popular YOLOv7 object-detection network. The researchers trained this model on more than 2,500 images of plastic water bottles captured under varied lighting, viewing angles, and distances, including partially occluded bottles. After training on a powerful graphics card, they quantized the model to 8-bit integers, a standard "tiny ML" compression step, so that it could fit into the limited memory and processing power of the AMB82-Mini microcontroller. Even in this slimmed-down form, the model spotted bottles with about 88% accuracy and ran at around 7–8 frames per second, fast enough for real-time pick-and-place work directly on the microcontroller, without help from a PC or the cloud.
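The summary does not say which toolchain or scheme the authors used for the 8-bit conversion. A common approach, assumed here, is per-tensor affine quantization: a real value v is stored as the integer q = round(v / scale) + zero_point, clipped to [-128, 127], and recovered as (q - zero_point) * scale. This pure-Python sketch shows only that arithmetic; the helper names are hypothetical, and production converters also calibrate activation ranges and often quantize weights per channel.

```python
def int8_params(values: list[float]) -> tuple[float, int]:
    """Per-tensor scale and zero point for asymmetric int8 quantization."""
    qmin, qmax = -128, 127
    lo = min(min(values), 0.0)  # include 0.0 so zero maps exactly to an integer
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) if hi > lo else 1.0
    zero_point = round(qmin - lo / scale)
    return scale, max(qmin, min(qmax, zero_point))


def quantize(values: list[float], scale: float, zero_point: int) -> list[int]:
    """Map floats to int8 codes, clipping to the representable range."""
    return [max(-128, min(127, round(v / scale) + zero_point)) for v in values]


def dequantize(codes: list[int], scale: float, zero_point: int) -> list[float]:
    """Recover approximate floats from int8 codes."""
    return [(q - zero_point) * scale for q in codes]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
scale, zp = int8_params(weights)
codes = quantize(weights, scale, zp)
print(codes, dequantize(codes, scale, zp))
```

Each recovered value is within half a quantization step of the original, and storing 1 byte per value instead of a 4-byte float cuts weight storage roughly fourfold.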

From Pixels to Precise Motion

Once the camera spots a bottle, the system calculates the center of the detection box in the image and estimates how far away the object is from how large it appears in pixels. Together these give the object’s x, y, and z coordinates in space. A custom inverse-kinematics solver then converts those coordinates into the six joint angles the arm must reach; it relies on standard robot geometry but is optimized to run quickly on the tiny processor. The control software, organized as a simple state machine, cycles through finding an object, moving to it, closing the gripper while using the current sensor as a feeler, and then carrying the object to a fixed drop-off point. To keep movements smooth and avoid shaking, the team uses carefully shaped motion “easing” curves and a special voltage modulation method that cuts down on motor chatter and overshoot.
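Estimating distance from apparent size follows from the pinhole camera model: an object of known real width W that spans w pixels lies at a depth of roughly Z = fx * W / w, where fx is the focal length in pixels, and the box center (u, v) then back-projects to X = (u - cx) * Z / fx and Y = (v - cy) * Z / fy. The sketch below assumes calibrated camera intrinsics and a known bottle width; the `Intrinsics` values and the 65 mm width are hypothetical, and the paper's exact formula may differ.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Hypothetical pinhole-camera intrinsics, all in pixels."""
    fx: float  # horizontal focal length
    fy: float  # vertical focal length
    cx: float  # principal point, x
    cy: float  # principal point, y


def box_to_camera_xyz(box, k: Intrinsics, real_width_mm: float):
    """Convert a detection box (x_min, y_min, x_max, y_max) in pixels into
    (X, Y, Z) in millimetres in the camera frame, assuming the object's
    real width is known."""
    x_min, y_min, x_max, y_max = box
    width_px = x_max - x_min
    if width_px <= 0:
        raise ValueError("bounding box must have positive width")
    u = (x_min + x_max) / 2.0  # box center, pixels
    v = (y_min + y_max) / 2.0
    z = k.fx * real_width_mm / width_px  # wider box means closer object
    x = (u - k.cx) * z / k.fx
    y = (v - k.cy) * z / k.fy
    return x, y, z


cam = Intrinsics(fx=1000.0, fy=1000.0, cx=960.0, cy=540.0)  # 1920x1080 image
print(box_to_camera_xyz((910, 300, 1040, 700), cam, real_width_mm=65.0))
# prints (7.5, -20.0, 500.0)
```

The result is expressed in the camera's frame, so because the camera rides on the wrist, a real system would still transform it into the arm's base frame using the current joint angles.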
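Converting a target point into joint angles is the inverse-kinematics problem. A full 6-DoF solver does not fit in a short example, so this hedged sketch solves only the classic planar two-link case in closed form with the law of cosines. For many arms with a spherical wrist, the positioning part of the problem reduces to this case once the base is rotated toward the target, but that is a general observation, not a description of the authors' solver. The function names and link lengths are hypothetical, and a forward-kinematics function is included to check the answer.

```python
import math


def ik_two_link(x: float, y: float, l1: float, l2: float, branch: int = 1):
    """Closed-form inverse kinematics for a planar two-link arm.

    Returns (theta1, theta2) in radians placing the tip at (x, y).
    branch=+1 or -1 selects one of the two mirror-image elbow solutions.
    """
    if branch not in (1, -1):
        raise ValueError("branch must be +1 or -1")
    r2 = x * x + y * y
    # Law of cosines: |tip|^2 = l1^2 + l2^2 + 2*l1*l2*cos(theta2)
    c2 = (r2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if not -1.0 <= c2 <= 1.0:
        raise ValueError("target is out of reach")
    s2 = branch * math.sqrt(1.0 - c2 * c2)
    theta2 = math.atan2(s2, c2)
    # Direction to target minus the angle the forearm adds at the elbow.
    theta1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
    return theta1, theta2


def fk_two_link(theta1: float, theta2: float, l1: float, l2: float):
    """Forward kinematics: tip position for the given joint angles."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


t1, t2 = ik_two_link(150.0, 100.0, l1=120.0, l2=100.0)
print(math.degrees(t1), math.degrees(t2))
print(fk_two_link(t1, t2, 120.0, 100.0))  # approximately (150.0, 100.0)
```

Closed-form solutions like this avoid iterative numerical search, which is one reason geometric solvers suit small processors.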
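The find, approach, grasp, and transport cycle maps naturally onto an enum-driven state machine. In the hypothetical sketch below, the first four states follow the description above, while the RELEASE state, the `Inputs` flags, and the fall-back to SEARCH when a grasp fails are illustrative assumptions rather than the authors' design. Keeping `next_state` a pure function of the current state and the sensor flags makes every transition easy to test away from the robot.

```python
from dataclasses import dataclass
from enum import Enum, auto


class State(Enum):
    SEARCH = auto()     # look for a bottle in the camera view
    APPROACH = auto()   # move the arm to the computed joint angles
    GRASP = auto()      # close the gripper, using current as a feeler
    TRANSPORT = auto()  # carry the object to the drop-off point
    RELEASE = auto()    # hypothetical: open the gripper at the drop-off


@dataclass
class Inputs:
    """Hypothetical sensor flags sampled once per control cycle."""
    object_detected: bool = False
    at_target: bool = False
    grip_confirmed: bool = False
    grip_failed: bool = False
    at_drop_off: bool = False
    released: bool = False


def next_state(state: State, inp: Inputs) -> State:
    """Return the next state given the current state and sensor flags."""
    if state is State.SEARCH:
        return State.APPROACH if inp.object_detected else State.SEARCH
    if state is State.APPROACH:
        return State.GRASP if inp.at_target else State.APPROACH
    if state is State.GRASP:
        if inp.grip_confirmed:  # current sensor reports contact
            return State.TRANSPORT
        return State.SEARCH if inp.grip_failed else State.GRASP
    if state is State.TRANSPORT:
        return State.RELEASE if inp.at_drop_off else State.TRANSPORT
    if state is State.RELEASE:
        return State.SEARCH if inp.released else State.RELEASE
    raise ValueError(f"unknown state {state}")


state = State.SEARCH
for flags in (Inputs(object_detected=True), Inputs(at_target=True),
              Inputs(grip_confirmed=True), Inputs(at_drop_off=True),
              Inputs(released=True)):
    state = next_state(state, flags)
    print(state.name)
```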
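Easing replaces an abrupt jump to a target angle with a profile whose speed starts and ends near zero. The summary does not name the authors' curves; the sketch below assumes the common cubic smoothstep s(t) = 3t^2 - 2t^3 and uses it to generate intermediate servo set-points between two joint angles. The `smoothstep` and `eased_trajectory` helpers are hypothetical.

```python
def smoothstep(t: float) -> float:
    """Cubic easing: s(0)=0, s(1)=1, with zero slope at both ends."""
    t = min(max(t, 0.0), 1.0)  # clamp progress to [0, 1]
    return t * t * (3.0 - 2.0 * t)


def eased_trajectory(start_deg: float, end_deg: float, steps: int) -> list[float]:
    """Return steps + 1 joint set-points from start to end along the curve."""
    if steps < 1:
        raise ValueError("steps must be >= 1")
    return [start_deg + (end_deg - start_deg) * smoothstep(i / steps)
            for i in range(steps + 1)]


print(eased_trajectory(0.0, 90.0, 4))
# prints [0.0, 14.0625, 45.0, 75.9375, 90.0]
```

The increments are small at both ends and largest in the middle, so the joint accelerates and decelerates gently instead of lurching; in practice the set-points would be sent to the servo at a fixed control rate.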

Real-World Tests and What They Show

In repeated experiments, the integrated system demonstrated that it could reliably pick up and place plastic bottles weighing up to 300 grams with a 100% success rate, and still perform well at heavier loads up to 500 grams. Simulations and measurements showed that positioning errors were typically around one millimeter—good enough for many sorting and packaging tasks. Compared with more basic control schemes, the authors’ motor-control strategy reduced vibration, shortened the time it took the arm to settle in place, and eliminated jerky behavior. While the tests focused on one object type and fixed distances in controlled indoor conditions, they provide convincing evidence that serious robot perception and control can be packed into a single, very modest embedded board.

What This Means for Everyday Automation

For non-specialists, the main takeaway is that advanced "seeing" and "moving" abilities are no longer limited to expensive, high-powered robots. By combining tiny machine-learning models, smart geometry, and careful motor control on a low-cost microcontroller, this work shows a path toward compact, self-contained robot arms that small manufacturers, schools, and hobbyists could realistically adopt. As future versions expand to more object types and more varied environments, similar embedded systems could quietly automate countless simple pick-and-place jobs, bringing intelligent robotics within reach of many more people and places.

Citation: Almaliki, H.H., Mazinan, A.H. & Modaresi, S.M. Design and implementation of a 6-DoF robot arm control with object detection based on machine learning using mini microcontroller. Sci Rep 16, 6842 (2026). https://doi.org/10.1038/s41598-026-35508-3

Keywords: embedded robotics, tiny machine learning, robot arm control, object detection, industrial automation