Clear Sky Science

Intent classification for university administrative services using a bidirectional recurrent neural network modified by a developed Kepler optimization algorithm

Smarter digital help for everyday campus questions

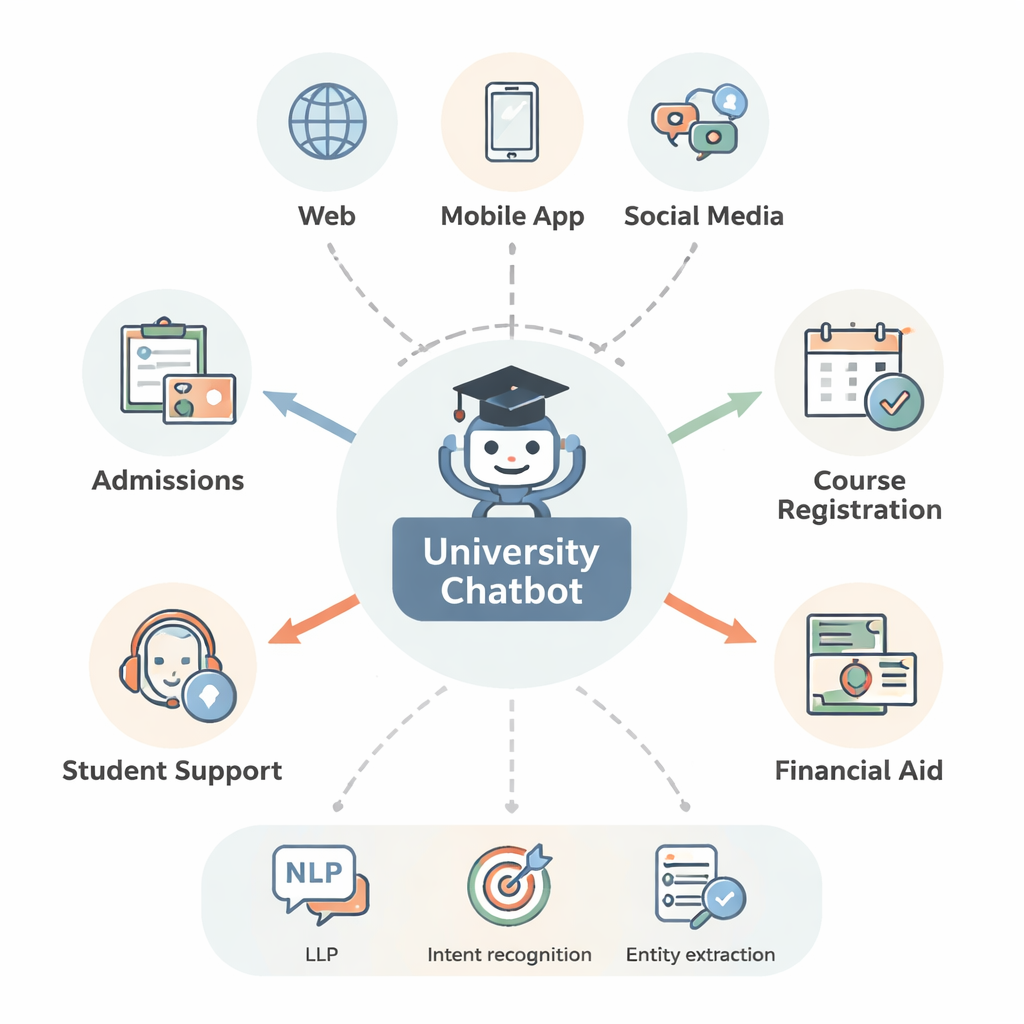

University students now expect quick, accurate answers at any hour—whether they are applying for admission, registering for classes, or asking about financial aid. This paper explores a new kind of AI-powered chatbot designed specifically for university administrative services, with a focus on handling both English and Greek. By teaching a single system to better understand what students mean and which details matter, the authors aim to make digital help desks faster, more reliable, and easier to run.

Why today’s chatbots still get confused

Most modern chatbots rely on a field called natural language understanding, which breaks a student’s question into two main parts. First is intent: what the student wants to do, such as “register for a course” or “ask about a deadline.” Second are entities: the concrete pieces of information inside the question, like a course code, semester, or program name. Traditional systems use separate models for these two tasks. That separation wastes memory and computing power and can lead to inconsistent answers—for example, correctly spotting a course code but failing to link it to the right action. These problems become worse in multilingual settings, where the same idea can be expressed in many forms across languages.
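To make the intent/entity split concrete, here is a minimal sketch of how one question might be represented once it has been parsed. The `NLUResult` class, the label names (`register_course`, `course`, `semester`), and the use of BIO tagging are illustrative assumptions, not details taken from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class NLUResult:
    """Hypothetical container for one parsed student question."""
    intent: str
    entities: dict = field(default_factory=dict)


def decode_bio(tokens, tags):
    """Group BIO-tagged tokens into (entity_type, text) pairs."""
    spans, current_type, current_words = [], None, []
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # A new entity begins; close any entity that was still open.
            if current_type is not None:
                spans.append((current_type, " ".join(current_words)))
            current_type, current_words = tag[2:], [token]
        elif tag.startswith("I-") and current_type == tag[2:]:
            # Continuation of the entity that is currently open.
            current_words.append(token)
        else:
            # "O" or a mismatched "I-" tag: close the open entity, if any.
            if current_type is not None:
                spans.append((current_type, " ".join(current_words)))
            current_type, current_words = None, []
    if current_type is not None:
        spans.append((current_type, " ".join(current_words)))
    return spans


tokens = ["Register", "me", "for", "CS", "101", "in", "spring", "2025"]
tags = ["O", "O", "O", "B-course", "I-course", "O", "B-semester", "I-semester"]

result = NLUResult(intent="register_course",
                   entities=dict(decode_bio(tokens, tags)))
print(result)
```

In this picture the intent is a single label for the whole question, while the entities are recovered from one tag per word. A system with two separate models would produce those two outputs independently, which is how they can end up disagreeing.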

One brain instead of two

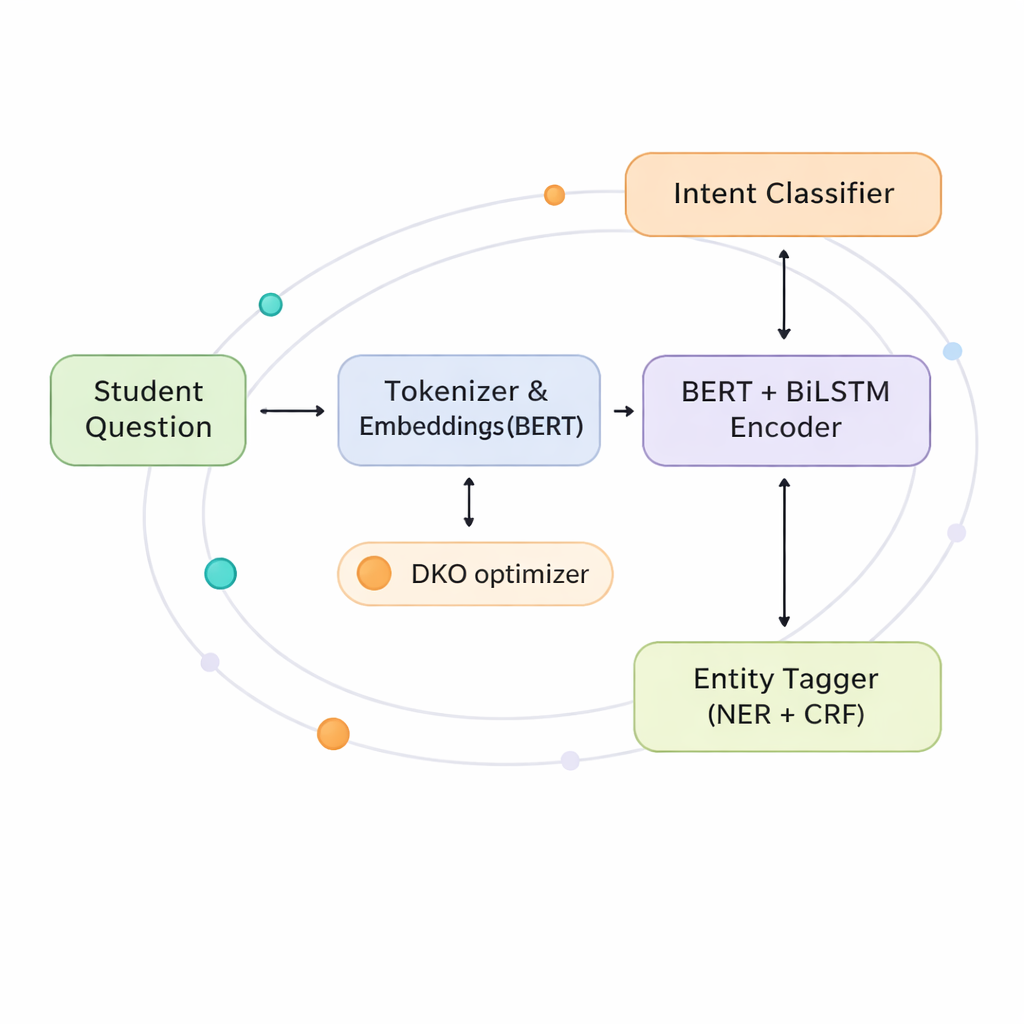

The authors propose a joint model that learns to recognize both intents and entities at the same time, using a shared “brain” instead of two separate ones. At its core is a combination of two powerful techniques. The first, BERT, looks at an entire sentence at once to capture its overall meaning. The second, a bidirectional LSTM network, reads the words in order both left to right and right to left, which helps it track nearby relationships such as which course goes with which semester. On top of this shared understanding, the system branches into two heads: one predicts the student’s intent, and the other labels each word as either part of an entity or not.
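The shape of such a shared encoder with two heads can be sketched in plain Python. This is a toy under stated assumptions, not the authors' network: random vectors stand in for BERT outputs, a simple tanh recurrence stands in for the LSTM cell, and every name and size (`scan`, `joint_predict`, `DIM`, and so on) is hypothetical.

```python
import math
import random


def scan(vectors, weights, reverse=False):
    """Toy tanh recurrence in one direction (a stand-in for an LSTM)."""
    order = vectors[::-1] if reverse else vectors
    state, states = [0.0] * len(weights), []
    for x in order:
        state = [math.tanh(w * (xi + si))
                 for w, xi, si in zip(weights, x, state)]
        states.append(state)
    # Re-align backward states with the original token order.
    return states[::-1] if reverse else states


def encode(vectors, params):
    """Concatenate forward and backward states for every token."""
    fwd = scan(vectors, params["w_fwd"])
    bwd = scan(vectors, params["w_bwd"], reverse=True)
    return [f + b for f, b in zip(fwd, bwd)]


def linear(vector, matrix):
    """Multiply a weight matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def argmax(scores):
    return max(range(len(scores)), key=scores.__getitem__)


def joint_predict(vectors, params):
    hidden = encode(vectors, params)  # shared by both heads
    # Intent head: one label for the whole sentence (mean-pooled states).
    pooled = [sum(col) / len(hidden) for col in zip(*hidden)]
    intent_id = argmax(linear(pooled, params["intent_head"]))
    # Entity head: one label per token.
    slot_ids = [argmax(linear(h, params["slot_head"])) for h in hidden]
    return intent_id, slot_ids


rng = random.Random(0)
DIM, N_INTENTS, N_SLOT_TAGS, N_TOKENS = 4, 3, 5, 6  # hypothetical sizes


def rand_matrix(rows, cols):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


params = {
    "w_fwd": rand_matrix(1, DIM)[0],
    "w_bwd": rand_matrix(1, DIM)[0],
    "intent_head": rand_matrix(N_INTENTS, 2 * DIM),
    "slot_head": rand_matrix(N_SLOT_TAGS, 2 * DIM),
}
token_vectors = rand_matrix(N_TOKENS, DIM)  # placeholder for BERT outputs
intent_id, slot_ids = joint_predict(token_vectors, params)
print(intent_id, slot_ids)
```

The weights are untrained, so the predictions are meaningless. What the sketch shows is the data flow: one encoding pass feeds both the sentence-level intent head and the per-word entity head.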

Letting tasks talk to each other

To make the most of this shared brain, the model includes a “co-interactive transformer” layer that lets the two tasks inform each other in real time. When the system is deciding on an intent, it can look at the entities it thinks are present; when it is labeling entities, it can lean on what intent seems most likely. This cross-talk helps resolve ambiguities, such as whether “drop” refers to leaving a course or cancelling an application, and is especially valuable in Greek, where word forms and word order are more flexible than in English. By sharing representations and attention in this way, the model cuts the number of parameters by nearly half compared with running two large models separately, making it more practical for university IT departments.
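One plausible reading of this cross-talk is a pair of attention steps in which each task's representations query the other's. The sketch below is a heavily simplified assumption: it uses a single attention head with no learned projections, and the function names and example vectors are hypothetical rather than taken from the paper.

```python
import math


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(queries, memory):
    """Scaled dot-product attention: each query averages the memory vectors."""
    scale = math.sqrt(len(memory[0]))
    attended = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, m)) / scale for m in memory]
        weights = softmax(scores)
        attended.append([sum(w * m[i] for w, m in zip(weights, memory))
                         for i in range(len(memory[0]))])
    return attended


def add(a, b):
    """Element-wise sum of two equally shaped lists of vectors."""
    return [[x + y for x, y in zip(r1, r2)] for r1, r2 in zip(a, b)]


def co_interactive_step(intent_states, slot_states):
    # Both updates are computed from the old states, then added residually.
    intent_update = cross_attend(intent_states, slot_states)
    slot_update = cross_attend(slot_states, intent_states)
    return add(intent_states, intent_update), add(slot_states, slot_update)


# Hypothetical per-token states for a three-word question.
intent_states = [[0.2, 0.1], [0.9, -0.3], [0.0, 0.5]]
slot_states = [[0.1, 0.0], [0.7, 0.7], [-0.4, 0.2]]
new_intent, new_slot = co_interactive_step(intent_states, slot_states)
print(new_intent)
print(new_slot)
```

After one step, every intent-side vector carries a weighted summary of the entity-side vectors and vice versa. That exchange is the mechanism that could let a likely intent influence how an ambiguous word such as “drop” gets labeled.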

A cosmic-inspired way to train the model

Training such a rich model is difficult: standard optimization methods can be slow and sensitive to how their settings are tuned. The authors introduce the Developed Kepler Optimization (DKO) algorithm, inspired by the way planets orbit the sun. In this analogy, different versions of the model are like planets exploring the space of possible parameter settings while being pulled toward the best-performing “sun.” DKO starts these candidates in a more diverse spread than usual and then continuously adjusts their “orbits” based on how well they perform. This approach speeds up learning by about 42 percent compared with a popular method called Adam, while also making training more stable, particularly on complex, multilingual data.
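The planet-and-sun picture can be illustrated with a generic population-based search. This is not the DKO algorithm, whose update rules and initialization scheme are not given in this summary. It is a minimal sketch that assumes a toy two-parameter objective, uniform random starting points, and a hypothetical `orbit_search` function.

```python
import random


def loss(params):
    """Toy objective standing in for a model's loss; minimum at (1, -2)."""
    return (params[0] - 1.0) ** 2 + (params[1] + 2.0) ** 2


def orbit_search(n_candidates=12, n_steps=200, seed=0):
    rng = random.Random(seed)
    # Each "planet" is one candidate parameter setting.
    planets = [[rng.uniform(-10, 10), rng.uniform(-10, 10)]
               for _ in range(n_candidates)]
    history = []
    for step in range(n_steps):
        sun = min(planets, key=loss)  # best candidate found so far
        history.append(loss(sun))
        radius = 1.0 - step / n_steps  # random wobble shrinks over time
        for i, planet in enumerate(planets):
            pull = rng.random()  # how far this planet moves toward the sun
            trial = [p + pull * (s - p) + rng.gauss(0.0, radius)
                     for p, s in zip(planet, sun)]
            if loss(trial) < loss(planet):  # keep only improving moves
                planets[i] = trial
    best = min(planets, key=loss)
    history.append(loss(best))
    return best, history


best, history = orbit_search()
print(best, history[0], history[-1])
```

Because a candidate is replaced only when its trial position is better, the best loss never gets worse from one step to the next. A real training setup would evaluate the network's loss on data instead of a two-parameter toy function, which is far more expensive per candidate.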

Real-world tests with students

The team evaluated their system on several datasets, including UniWay, a collection of English and Greek questions about university services, and xSID, a well-known benchmark for understanding short commands. Across all of them, the joint model consistently outperformed rule-based systems, older neural networks, and even strong transformer baselines. In field trials at two universities—one English-only and one bilingual—the chatbot correctly identified student intents and entities around nine times out of ten, and students rated their satisfaction at about 4.5 out of 5. Performance remained strong even when training data were reduced, suggesting the method is robust in lower-resource languages and domains.

What this means for students and universities

For a layperson, the key takeaway is that the authors have designed a more efficient and accurate “listening engine” for university chatbots. By unifying intent detection and detail extraction, and by using an orbit-inspired training method, their system can better grasp what students are asking while using less memory and training time. This could translate into faster answers, fewer misunderstandings, and round-the-clock multilingual support without overloading human staff. Although challenges remain—such as adapting to new policies, more languages, and long-term usage patterns—the work points toward campus help systems that feel more responsive, fair, and scalable.

Citation: Yang, Z., Lu, M. & Huang, S. Intent classification for university administrative services using a bidirectional recurrent neural network modified by a developed Kepler optimization algorithm. Sci Rep 16, 6263 (2026). https://doi.org/10.1038/s41598-026-35504-7

Keywords: university chatbots, intent classification, named entity recognition, multilingual AI, optimization algorithms