Clear Sky Science

A novel deep learning approach for mosquito species classification via a dual-head structure and calibration-aware fusion architecture

Why Smarter Mosquito ID Matters

Mosquitoes may be tiny, but they spread some of the world’s most dangerous diseases. Health agencies increasingly rely on smartphone photos of trapped insects to track where different species are appearing. The catch is that many mosquitoes look confusingly alike, and images taken in the field are often blurry, poorly lit, or shot with many different cameras. This study introduces a new artificial intelligence system that tells look‑alike mosquito species apart with better than 99 percent accuracy in the authors' tests, while also knowing how sure it is about each decision, an ability that is crucial when those decisions guide real-world disease control.

From Phone Photos to Reliable IDs

The researchers focus on images that resemble what a field worker or citizen scientist might capture: whole mosquitoes photographed with smartphones against messy, real-world backgrounds. Their goal is twofold. First, the system should correctly distinguish among eight categories, including several disease-carrying Aedes and Culex species and an "other/unknown" group. Second, it must indicate its confidence in each prediction in a way that matches reality, so that officials can safely set thresholds, for example deciding when to trigger a follow-up visit to a neighborhood. Poorly calibrated systems may sound certain but be frequently wrong when used in new locations or with different camera types.

Two Vision Pathways Working Together

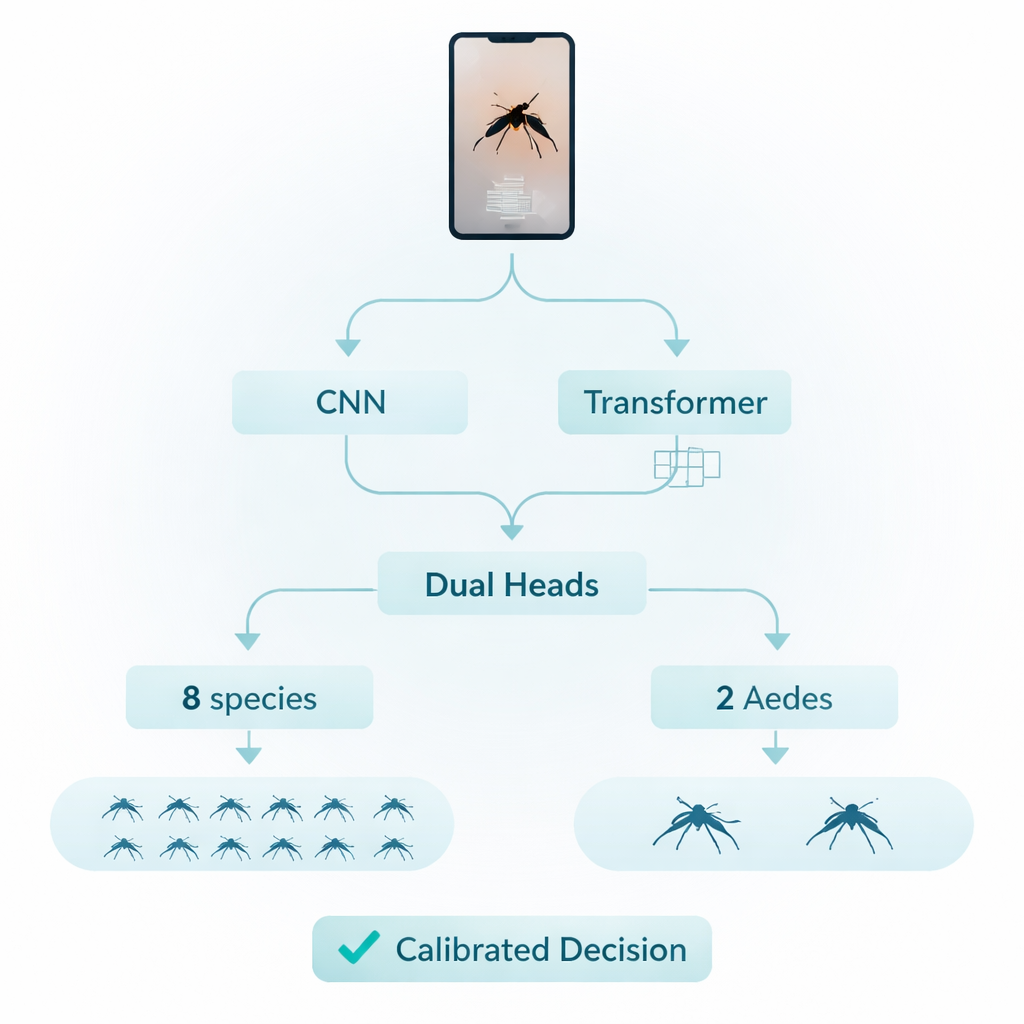

To reach these goals, the team builds an image-analysis pipeline that combines two complementary ways of "seeing". One pathway uses conventional convolutional neural networks (CNNs), which excel at picking up local textures such as body scales, stripes and wing veins. The other pathway uses a newer design called a Transformer, which is better at capturing the overall arrangement of body parts across the image, such as the proportions of wings, thorax and abdomen. Both pathways process the same mosquito photo in parallel, then feed their assessments into a shared decision module. This diverse setup helps the system stay reliable even when photos vary in pose, focus or device.

Learning Both Detailed and Coarse Labels

A key innovation lies in how the system is trained to think about species. Instead of learning just one task, it learns two at once. One "head" handles the broad task of predicting all eight categories in the main training set. A second "head" handles the fine task of telling apart two closely related Aedes species that are of particular public-health interest. By learning the broad and fine distinctions jointly, the model sharpens the boundaries between easily confused look‑alike species while still recognizing the full set. During training, the authors also deliberately rebalance the data so that rare species have more influence and the system does not simply favor the most common mosquitoes.
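For readers who think in code, the two-head idea can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the authors' implementation: the inverse-frequency weighting formula, the `pair_weight` value, the choice to apply the second head only to images of the two look-alike species, and all function names are hypothetical.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels, num_classes):
    """Assumed rebalancing rule: weight = total / (num_classes * class count)."""
    counts = Counter(labels)
    total = len(labels)
    return [total / (num_classes * counts[c]) if counts[c] else 0.0
            for c in range(num_classes)]

def softmax(logits):
    """Turn raw scores into probabilities that sum to one."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    s = sum(exps)
    return [e / s for e in exps]

def cross_entropy(logits, target, weight=1.0):
    """Penalty for assigning low probability to the true class."""
    return -weight * math.log(softmax(logits)[target])

def dual_head_loss(main_logits, pair_logits, label, class_weights,
                   pair_classes, pair_weight=0.5):
    """Loss for one image: weighted eight-class term plus an optional two-class term."""
    loss = cross_entropy(main_logits, label, class_weights[label])
    if label in pair_classes:
        # Assumption: the second head is trained only on the two look-alike species.
        loss += pair_weight * cross_entropy(pair_logits, pair_classes.index(label))
    return loss

# A rare class (2 of 8 images) gets a larger weight than a common one (6 of 8).
weights = inverse_frequency_weights([0] * 6 + [1] * 2, num_classes=2)
```

With this weighting, a mistake on a rare species costs more than the same mistake on a common one, which is one simple way to keep the model from favoring the most frequent mosquitoes.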

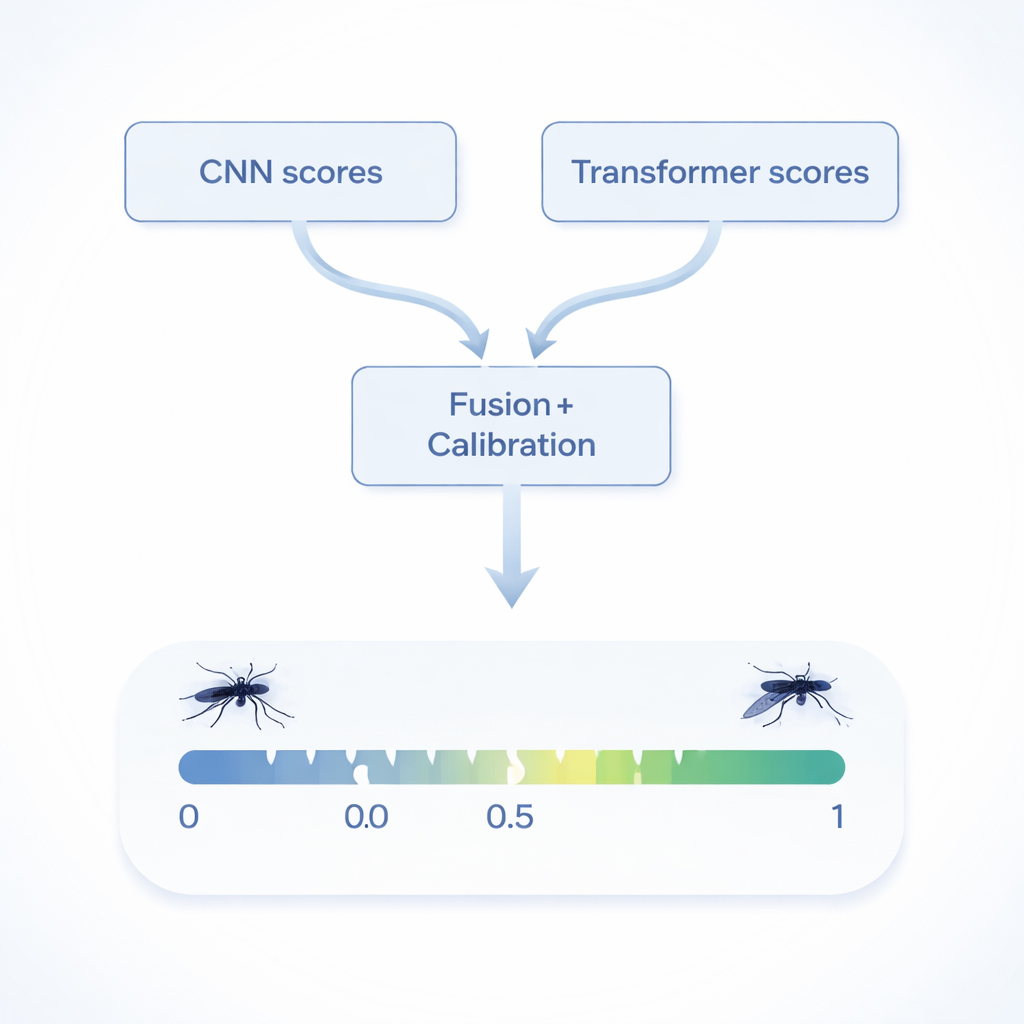

Turning Raw Scores into Trustworthy Confidence

Another central advance is how the system fuses information from its two vision pathways and two heads. Rather than simply averaging their guesses, the model learns how much to trust each internal source based on past performance, a process known as calibrated stacking. It then passes the combined score through a simple adjustment step called temperature scaling, which fine-tunes the sharpness of its confidence levels. The team also tests each image several times using slightly altered crops and flips, averaging the outcomes to reduce random variation. Together, these steps make the final confidence scores closely match the true error rates, even when the system is applied to a separate dataset it has never seen before.
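The three steps described above (weighted fusion, temperature scaling, and averaging over altered views) can be sketched as follows. This is a minimal illustration, not the paper's code: the fusion weights are taken as given rather than learned, the temperature is found by a simple grid search on held-out examples, and the order in which the steps are combined is an assumption. All function names are hypothetical.

```python
import math

def softmax(logits, temperature=1.0):
    """Probabilities from raw scores; a temperature above 1 softens them."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    s = sum(exps)
    return [e / s for e in exps]

def fuse(source_logits, weights):
    """Weighted sum of raw scores, one list per internal source (pathway or head)."""
    n = len(source_logits[0])
    return [sum(w * src[k] for w, src in zip(weights, source_logits))
            for k in range(n)]

def nll(logit_rows, labels, temperature):
    """Average negative log-likelihood of the true labels at a given temperature."""
    return -sum(math.log(softmax(row, temperature)[y])
                for row, y in zip(logit_rows, labels)) / len(labels)

def fit_temperature(logit_rows, labels, grid=None):
    """Pick the temperature on a grid (assumed 0.5 to 5.0) that minimizes the NLL."""
    grid = grid or [0.5 + 0.1 * i for i in range(46)]
    return min(grid, key=lambda t: nll(logit_rows, labels, t))

def tta_predict(view_logits, temperature):
    """Average calibrated probabilities over several altered views of one image."""
    probs = [softmax(v, temperature) for v in view_logits]
    n = len(probs[0])
    return [sum(p[k] for p in probs) / len(probs) for k in range(n)]

# Toy held-out set: the model is very confident in class 0 but wrong one time in four.
held_out_logits = [[4.0, 0.0]] * 4
held_out_labels = [0, 0, 0, 1]
temperature = fit_temperature(held_out_logits, held_out_labels)
```

In this toy case the fitted temperature comes out well above 1, which softens the overconfident scores so that the reported confidence moves toward the observed 75 percent hit rate.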

Near-Perfect Accuracy in the Lab and the Wild

To see how well this works in practice, the authors train and tune their model on a large eight-class smartphone image collection and then evaluate it on both held-out images from that set and on an entirely separate Aedes dataset used strictly for testing. On the original eight-class task, their method reaches around 99.5 percent accuracy, slightly but consistently outperforming strong single-model baselines and simple ensembles. On the unseen two-species test set, it correctly identifies over 99 percent of images. Just as important, its confidence is well calibrated: when it reports 90 percent certainty, it is wrong only about one time in ten, a property that many previous mosquito-recognition systems did not measure or guarantee.
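One common way to check whether confidence matches reality is the expected calibration error, which groups predictions by reported confidence and compares each group's average confidence with its actual hit rate. The sketch below assumes ten equal-width bins; the article does not say which calibration metric or binning the authors used, so treat this as an illustration of the idea rather than their evaluation code.

```python
def expected_calibration_error(confidences, correct, num_bins=10):
    """Weighted average gap between reported confidence and observed accuracy."""
    bins = [[] for _ in range(num_bins)]
    for conf, ok in zip(confidences, correct):
        # Confidence 1.0 falls into the last bin rather than past it.
        idx = min(int(conf * num_bins), num_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece

# Ten predictions at 90 percent confidence, nine of them right: close to zero error.
well_calibrated = expected_calibration_error([0.9] * 10, [True] * 9 + [False])
# Same confidence, but only half right: a gap of about 0.4.
overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)
```

A score near zero means the stated confidence can be read at face value; a large score signals a model that sounds more certain than its track record justifies.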

What This Means for Public Health

For non-specialists, the takeaway is that this work delivers not just a highly accurate species recognizer, but one whose self-reported certainty can be trusted. That combination allows agencies to set stable rules—such as "investigate any location where the model is at least 80 percent sure it has seen a dangerous species"—and expect those rules to behave similarly across different phones, regions and lighting conditions. While challenges remain in extreme cases like very blurry or heavily obscured insects, the proposed system offers a practical, deployment-ready baseline for large-scale mosquito surveillance and lays the groundwork for future tools that can handle new species, new devices and even additional sensing modes such as sound.

Citation: Nazari, M.Z., Zarchi, M.S., Emadi, S. et al. A novel deep learning approach for mosquito species classification via a dual-head structure and calibration-aware fusion architecture. Sci Rep 16, 7208 (2026). https://doi.org/10.1038/s41598-026-35453-1

Keywords: mosquito identification, deep learning, vector surveillance, calibrated AI, image classification