Clear Sky Science

Deep residual networks with convolutional feature extraction for short-term load forecasting

Why tomorrow’s power use matters today

Every time we flip a switch, power companies must already have the right amount of electricity ready to go. If they produce too little, lights flicker and factories stall; if they produce too much, they waste fuel and money. This article explores a new artificial‑intelligence method that helps grid operators predict, hour by hour, how much electricity people will use the next day, across climates as different as snowy New England and tropical Malaysia.

The challenge of guessing our daily energy needs

Short‑term load forecasting is the task of predicting how much electricity a region will need from the next hour up to the next week. These forecasts guide crucial decisions, such as which power plants to turn on, how to schedule maintenance, and how to plan energy trading. Even a tiny improvement can save large sums of money; for a big utility, cutting the forecasting error by just one percent can save millions of dollars in fuel costs each year. But electricity demand is shaped by many intertwined factors: time of day, day of the week, season, weather, holidays, and changing habits. Capturing all of these patterns reliably is difficult, especially as energy systems become more complex and climate conditions more variable.

Limits of earlier smart forecasting tools

Researchers have long tried to improve these forecasts with mathematical models and, more recently, with deep learning. Traditional approaches such as regression and simple neural networks struggle as the number of inputs grows, often missing subtle patterns or overfitting to past data. More advanced networks each have their own strengths and weaknesses: convolutional networks are good at spotting short‑term wiggles in the data but not long‑term trends; recurrent networks such as LSTM and GRU can follow longer sequences but are slow and harder to train; Transformer models capture complex relationships but demand heavy computing power and can become unstable as they get deeper. A popular compromise, the deep residual network, adds “shortcut” connections that carry each block's input straight through to its output, so that every block only has to learn a correction and very deep models can be trained without their learning stalling. Yet most earlier designs applied these residual connections only in the later prediction layers, not in the crucial early stage where features are first extracted from the raw data.
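The shortcut idea can be illustrated with a minimal pure-Python sketch. It is not the authors' code. A residual block returns its input plus a learned correction, y = x + F(x). Here F is assumed to be two small dense layers with a ReLU between them, and the helper names (`plain_layer`, `residual_block`, `stack`) are hypothetical. With every weight at zero, a deep stack of plain layers collapses to zeros, while a stack of residual blocks passes the input through unchanged. This gives one intuition for why very deep residual models are easier to train: each block starts close to "do nothing" and only has to learn a small adjustment.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(w: Matrix, x: Vector) -> Vector:
    """Multiply a (rows x cols) weight matrix by a vector."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def relu(x: Vector) -> Vector:
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in x]


def plain_layer(x: Vector, w1: Matrix, w2: Matrix) -> Vector:
    """F(x): two dense layers with a ReLU in between, no shortcut (hypothetical design)."""
    return matvec(w2, relu(matvec(w1, x)))


def residual_block(x: Vector, w1: Matrix, w2: Matrix) -> Vector:
    """The same transform plus a shortcut: y = x + F(x)."""
    fx = plain_layer(x, w1, w2)
    return [xi + fi for xi, fi in zip(x, fx)]


def stack(block, x: Vector, weights: List[Tuple[Matrix, Matrix]]) -> Vector:
    """Apply `block` once per (w1, w2) pair, feeding each output into the next."""
    for w1, w2 in weights:
        x = block(x, w1, w2)
    return x


if __name__ == "__main__":
    x = [1.0, 2.0, 3.0]
    zero = [[0.0] * 3 for _ in range(3)]
    untrained = [(zero, zero)] * 50  # 50 layers whose weights are all zero
    print(stack(plain_layer, x, untrained))     # [0.0, 0.0, 0.0]
    print(stack(residual_block, x, untrained))  # [1.0, 2.0, 3.0]
```

Some residual designs also apply an activation after the addition; the sketch leaves it out to keep the identity path visible.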

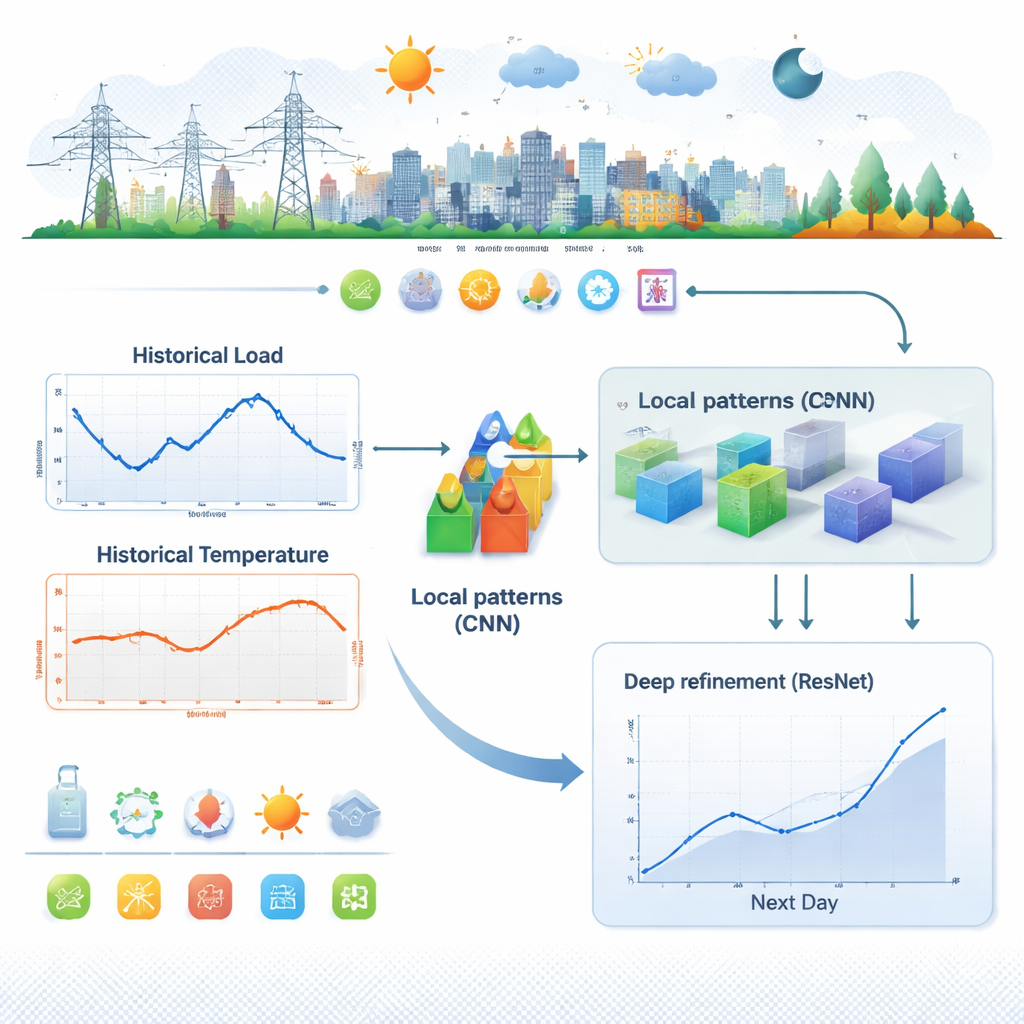

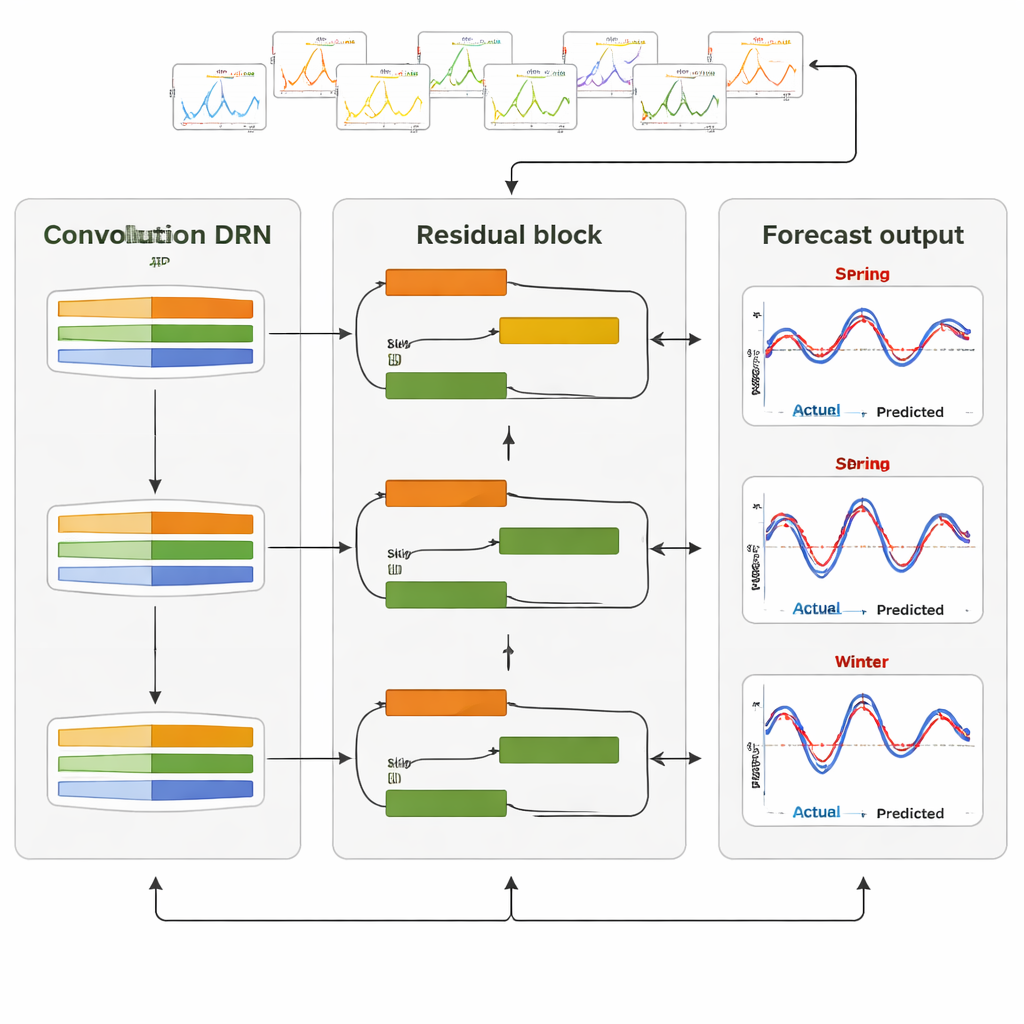

A two‑stage model that looks close and far

The authors propose a redesigned forecasting system called a CNN‑Embedded Deep Residual Network. In the first stage, the model focuses on local detail. It feeds recent load and temperature histories, ranging from the past 24 hours to the past several months, through one‑dimensional convolution blocks. These blocks act like sliding windows, scanning each time series to detect recurring shapes: morning peaks, evening surges, weekend dips, or sudden weather‑driven spikes. A pooling step then compresses the detected patterns into a compact summary, reducing noise while preserving the strongest signals. In parallel, calendar information such as season, weekday, and holiday flags is processed and merged with these features. Twenty‑four small subnetworks, one for each hour of the coming day, then turn the combined features into an initial 24‑hour forecast.
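To make the sliding-window idea concrete, here is a minimal pure-Python sketch of the first stage under simplifying assumptions. It uses one hand-picked kernel instead of learned filters, max pooling, two made-up calendar flags, and 24 linear "heads" standing in for the hourly subnetworks. The function names, the load values, and the pooling size are hypothetical; this is not the authors' implementation. The kernel `[-1, 2, -1]` responds strongly where a value stands above its neighbours, so after pooling the morning and evening peaks show up as large numbers.

```python
from typing import List, Sequence, Tuple

Head = Tuple[List[float], float]  # (weights, bias) for one hypothetical hourly subnetwork


def conv1d(series: Sequence[float], kernel: Sequence[float]) -> List[float]:
    """Slide `kernel` along `series` (stride 1, no padding), as deep-learning
    libraries do: output[i] = sum_j series[i + j] * kernel[j]."""
    k = len(kernel)
    return [sum(series[i + j] * kernel[j] for j in range(k))
            for i in range(len(series) - k + 1)]


def max_pool(x: Sequence[float], size: int) -> List[float]:
    """Keep the strongest response in each non-overlapping window;
    a shorter final window is kept rather than dropped."""
    return [max(x[i:i + size]) for i in range(0, len(x), size)]


def hourly_heads(features: Sequence[float], heads: Sequence[Head]) -> List[float]:
    """One linear stand-in 'subnetwork' per hour: w_h . features + b_h."""
    return [sum(w * f for w, f in zip(weights, features)) + bias
            for weights, bias in heads]


def first_stage(load: Sequence[float], calendar: Sequence[float],
                kernel: Sequence[float], pool_size: int,
                heads: Sequence[Head]) -> List[float]:
    """Convolve and pool the load history, append calendar flags, forecast 24 hours."""
    pooled = max_pool(conv1d(load, kernel), pool_size)
    features = pooled + list(calendar)
    return hourly_heads(features, heads)


if __name__ == "__main__":
    # Made-up 24-hour load with a morning peak (hour 7) and an evening peak (hour 18).
    load = [5, 5, 5, 5, 5, 5, 6, 9, 6, 5, 5, 5,
            5, 5, 5, 5, 5, 6, 10, 6, 5, 5, 5, 5]
    peak_kernel = [-1, 2, -1]  # hand-picked peak detector, not a learned filter
    print(max_pool(conv1d(load, peak_kernel), 6))  # [0, 6, 8, 0]
    calendar = [1.0, 0.0]  # hypothetical flags: weekday=1, holiday=0
    # Untrained heads set to a persistence forecast (bias = yesterday's value).
    heads = [([0.0] * 6, float(load[h])) for h in range(24)]
    print(first_stage(load, calendar, peak_kernel, 6, heads))
```

In a trained model the kernels and head weights would be learned from data, and there would be many kernels per layer; the sketch only shows how the pieces connect.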

Deep refinement and tests across two very different grids

In the second stage, an enhanced residual network takes this first forecast and refines it. Stacked “blocks” with shortcut connections adjust the hourly values while keeping the overall daily shape realistic and preventing training from stalling. The team trained and tuned this architecture on two real‑world datasets: one from ISO New England, which covers six U.S. states with strong seasonal swings, and one from Malaysia, where electricity use is steadier under a tropical climate. They compared their model with a wide range of alternatives, including pure convolutional networks, several recurrent networks, a Transformer, the original residual network design, and variants that added only the convolutional part or only the residual part. Performance was judged with common error measures, especially mean absolute percentage error (MAPE), and the differences between models were tested for statistical significance using extensive bootstrap resampling.
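The evaluation described above combines two standard ingredients. MAPE averages |actual - forecast| / |actual| over all test hours and reports it in percent; a paired bootstrap resamples the test hours with replacement, scores both models on each resample, and counts how often one model's advantage disappears. The sketch below is a generic version, not the paper's procedure: the function names, the 2,000 resamples, the synthetic data, and the choice to resample single hours are assumptions made here for illustration. Because hourly loads are autocorrelated, resampling whole days or blocks of hours would be a more careful choice.

```python
import random
from typing import Sequence, Tuple


def mape(actual: Sequence[float], forecast: Sequence[float]) -> float:
    """Mean absolute percentage error, in percent. Assumes no actual value is zero."""
    return 100.0 * sum(abs((a - f) / a) for a, f in zip(actual, forecast)) / len(actual)


def paired_bootstrap(actual: Sequence[float], model_a: Sequence[float],
                     model_b: Sequence[float], n_resamples: int = 2000,
                     seed: int = 0) -> Tuple[float, float]:
    """Return (observed MAPE gap B - A, share of resamples where A is not better).

    Each resample draws time points with replacement and scores both models on
    the same draw, so the comparison stays paired.
    """
    rng = random.Random(seed)
    n = len(actual)
    observed = mape(actual, model_b) - mape(actual, model_a)
    not_better = 0
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        y = [actual[i] for i in idx]
        gap = mape(y, [model_b[i] for i in idx]) - mape(y, [model_a[i] for i in idx])
        if gap <= 0:
            not_better += 1
    return observed, not_better / n_resamples


if __name__ == "__main__":
    rng = random.Random(42)
    actual = [1000.0 + 200.0 * rng.random() for _ in range(168)]  # one synthetic week, hourly
    model_a = [y * (1 + rng.gauss(0, 0.015)) for y in actual]  # smaller synthetic errors
    model_b = [y * (1 + rng.gauss(0, 0.020)) for y in actual]  # larger synthetic errors
    gap, share = paired_bootstrap(actual, model_a, model_b)
    print(f"MAPE A = {mape(actual, model_a):.2f}%, B = {mape(actual, model_b):.2f}%")
    print(f"gap = {gap:.2f} points, share of resamples with A not better = {share:.3f}")
```

The returned share works like a one-sided bootstrap p-value: a small value (for example below 0.05) suggests that model A's lower error is unlikely to be a fluke of which hours happened to be in the test set.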

What the results say about smarter grids

The CNN‑Embedded Deep Residual Network consistently delivered the most accurate forecasts. On the New England data, it reduced the average percentage error to about 1.53 percent, a relative improvement of up to roughly 11 percent over strong residual‑based competitors. In Malaysia, where load patterns are smoother and gains are harder to achieve, it still cut the error to about 5.06 percent and edged out all other models. Seasonal tests showed that the method handled spring and summer peaks, winter heating loads, and tropical rainy and dry seasons without losing accuracy. Statistical checks indicated that these improvements were significant, that is, unlikely to be due to chance. For non‑specialists, the takeaway is straightforward: by combining a “microscope” for short‑term patterns with a “backbone” that stabilizes deep learning, this approach offers grid operators a more reliable way to anticipate tomorrow’s electricity needs, saving money, reducing waste, and supporting the transition to smarter, cleaner power systems.

Citation: Liu, J., Ahmad, F.A., Samsudin, K. et al. Deep residual networks with convolutional feature extraction for short-term load forecasting. Sci Rep 16, 6382 (2026). https://doi.org/10.1038/s41598-026-35410-y

Keywords: short-term load forecasting, deep learning, power grid, convolutional neural networks, residual networks