Clear Sky Science

Bayesian reinforcement learning for adaptive control of energy recuperation in hydraulic excavator arms

Why smarter excavators matter

Hydraulic excavators are the workhorses of construction and mining, but they burn a lot of diesel and waste much of the energy they draw from the engine. Any improvement in how they use power can translate into lower fuel bills for contractors and fewer emissions for everyone. This study explores a new way to make excavator arms "think ahead" about changing ground conditions and machine wear, so they can recover and reuse energy more effectively while staying within strict safety limits.

Digging is tough on fuel

Modern excavators often run their hydraulic systems at high, fixed pressures whether they are carving through hard rock or gently smoothing loose soil. That one-size-fits-all approach keeps machines reliable but throws away energy as heat, especially during light tasks and when the boom is lowering under its own weight. Although some newer designs include devices that can capture this excess energy in hydraulic accumulators, most machines still rely on simple, fixed rules to decide when and how to use them. The result is fuel use that can be 20% higher than necessary and a larger carbon footprint on job sites worldwide.

A learning control system for the arm

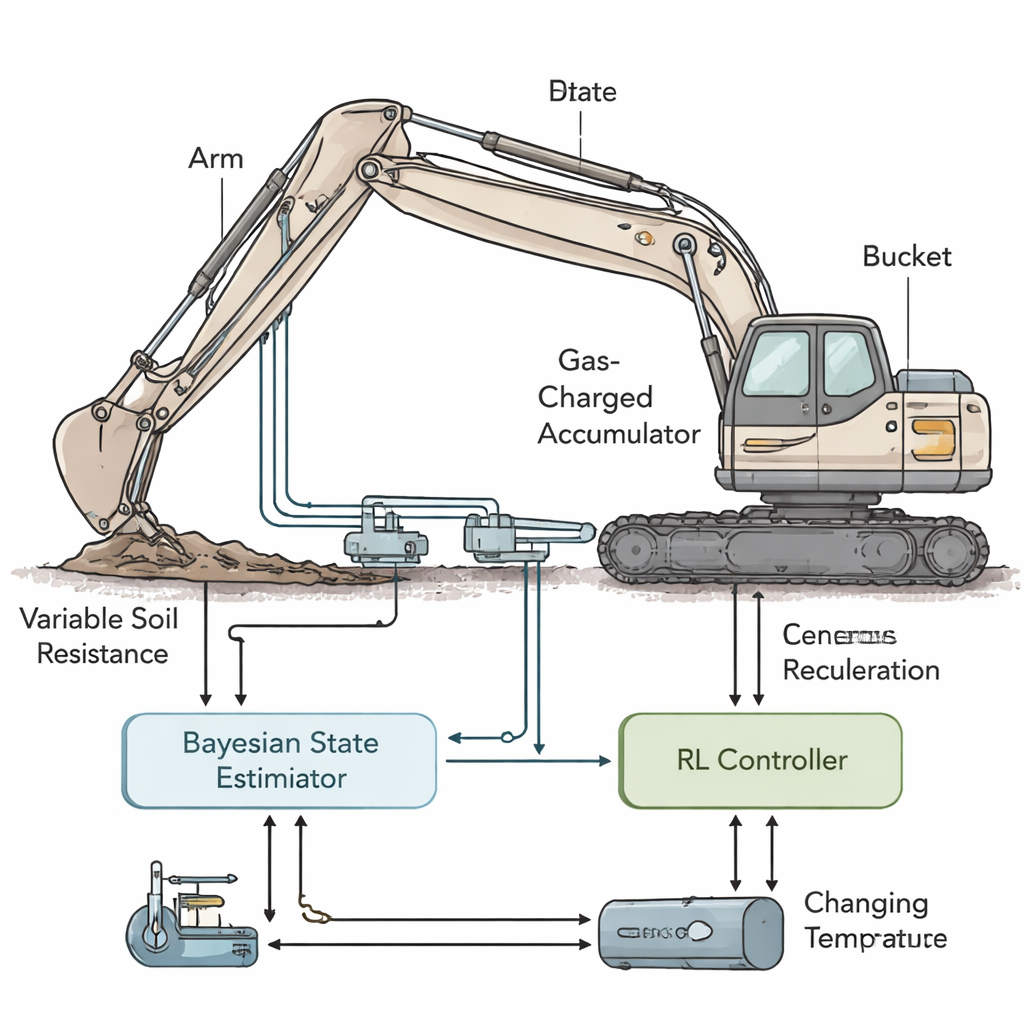

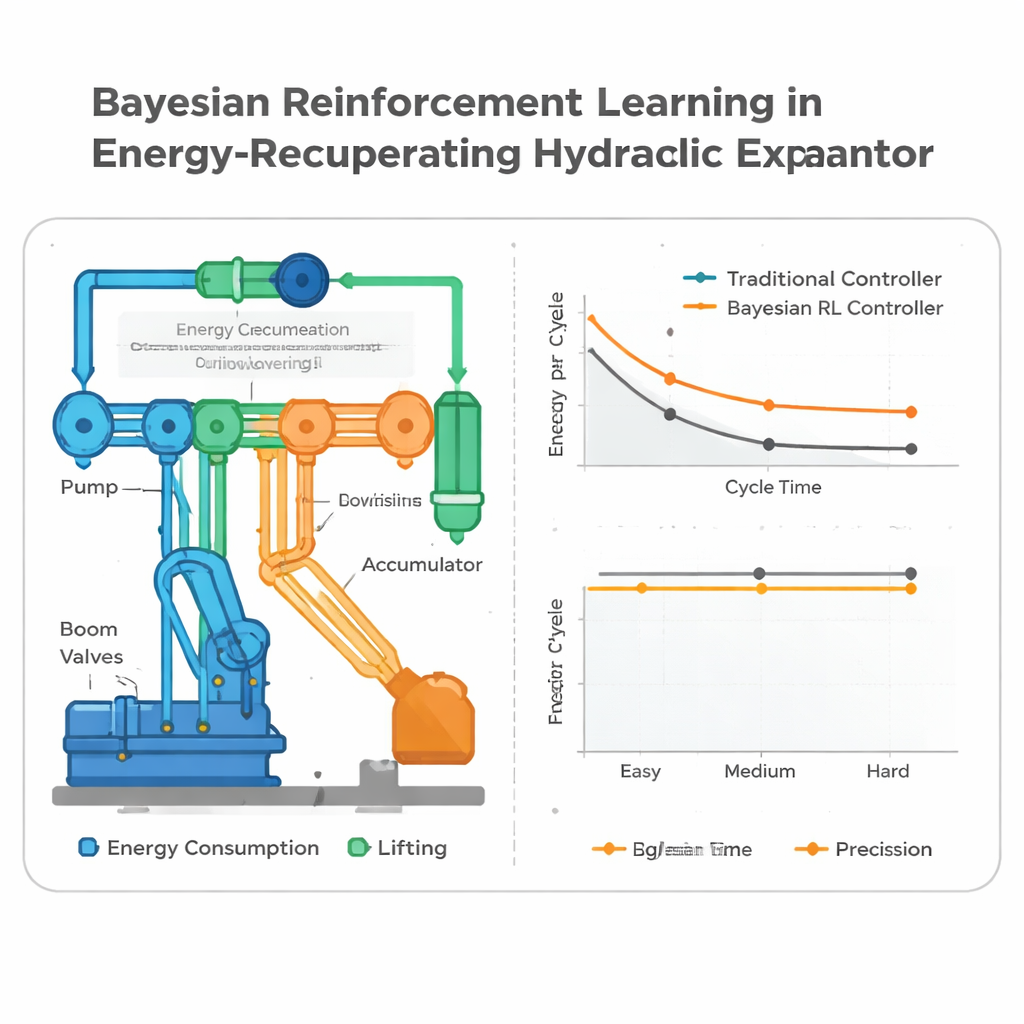

The authors design a detailed virtual model of a 21-ton excavator arm, including its cylinders, pump, valves, and a gas-filled accumulator that stores energy when the boom moves down and releases it to help lift the load later. Their controller has to cope with many real-world uncertainties: soil that suddenly gets harder or softer, oil that thickens or thins with temperature, components that slowly wear, and sensors that are noisy or slightly biased. Instead of assuming everything is known and constant, the system treats the true state of the machine as partly hidden and continually updates its best guess using Bayesian methods. A particle filter estimates hidden factors such as how resistant the soil is and whether the accumulator is slightly over- or under-charged compared with its design point.
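The summary does not give the filter's equations. As a rough illustration only, the sketch below runs a generic bootstrap particle filter on one hidden quantity, a soil-resistance coefficient. It assumes a made-up linear force model (measured force = coefficient × bucket depth + Gaussian noise). `particle_filter_step`, `run_demo`, and every numeric value are hypothetical and are not the authors' model. The idea it shows is the one described above: keep many guesses, reweight them by how well they explain each noisy reading, and let them drift so the estimate can follow sudden changes in the soil.

```python
import math
import random


def particle_filter_step(particles, weights, measurement, depth,
                         process_std=0.05, meas_std=2.0, rng=random):
    """One predict-update-resample cycle for a scalar hidden soil coefficient.

    Hypothetical measurement model: force = k_soil * depth + Gaussian noise.
    """
    # Predict: random-walk drift so the filter can follow soil that changes over time.
    particles = [p + rng.gauss(0.0, process_std) for p in particles]
    # Update: weight each particle by the Gaussian likelihood of the observed force.
    new_w = []
    for p, w in zip(particles, weights):
        err = measurement - p * depth
        new_w.append(w * math.exp(-0.5 * (err / meas_std) ** 2))
    total = sum(new_w)
    if total == 0.0:
        # Every particle is implausible: fall back to uniform weights.
        new_w = [1.0 / len(particles)] * len(particles)
    else:
        new_w = [w / total for w in new_w]
    # Resample (multinomial) so particles concentrate on likely values.
    particles = rng.choices(particles, weights=new_w, k=len(particles))
    weights = [1.0 / len(particles)] * len(particles)
    return particles, weights


def estimate(particles, weights):
    """Weighted mean of the particle cloud: the filter's current best guess."""
    return sum(p * w for p, w in zip(particles, weights))


def run_demo(seed=0, n_particles=500, steps=300):
    """Hypothetical scenario: soil coefficient jumps from 1.0 to 2.0 halfway through."""
    rng = random.Random(seed)
    depth = 10.0  # hypothetical constant bucket penetration
    particles = [rng.uniform(0.5, 3.0) for _ in range(n_particles)]
    weights = [1.0 / n_particles] * n_particles
    estimates = []
    for t in range(steps):
        true_k = 1.0 if t < steps // 2 else 2.0  # soil suddenly gets harder
        force = true_k * depth + rng.gauss(0.0, 2.0)  # noisy sensor reading
        particles, weights = particle_filter_step(
            particles, weights, force, depth, process_std=0.1, rng=rng)
        estimates.append(estimate(particles, weights))
    return estimates
```

Calling `run_demo()` returns one estimate per step; plotting it would show the guess settling near 1.0 and then moving toward 2.0 within a few steps of the change. The real controller tracks several hidden factors at once (soil, accumulator precharge, and others), which in this style of sketch would mean each particle carrying a vector of values instead of a single number.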

Teaching the machine to adapt safely

On top of this state estimator, the team trains a reinforcement learning (RL) policy, an algorithm that gradually discovers good strategies by trial and error in simulation. At each instant, the policy receives the current "belief" about the machine’s condition and chooses continuous commands for pump pressure and valve openings. A dedicated safety layer then checks these suggestions and clips them to a strictly safe range: hydraulic pressures must stay between 5 and 35 megapascals overall, the accumulator must remain within its 12–28 megapascal window, and valve movements are rate-limited so they cannot jerk the system. The learning process rewards the controller for finishing each digging cycle quickly, using as little hydraulic energy as possible, and keeping the bucket on target, while penalizing actions that would push the system toward unsafe regions.
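To make the safety layer and reward concrete, here is a minimal sketch under stated assumptions. Only the pressure limits (5–35 MPa overall, 12–28 MPa for the accumulator) come from the summary. The `Action` fields, the accumulator charge/discharge command, the 0.05-per-tick valve rate limit (assuming a 10 ms control tick), and all reward weights are hypothetical. Penalizing the size of the safety correction is just one plausible way to discourage proposals that head toward unsafe regions; the paper's exact formulation may differ.

```python
from dataclasses import dataclass

# Limits quoted in the summary; everything else below is a hypothetical illustration.
P_MIN_MPA, P_MAX_MPA = 5.0, 35.0        # overall hydraulic pressure window
ACC_MIN_MPA, ACC_MAX_MPA = 12.0, 28.0   # accumulator operating window
MAX_VALVE_STEP = 0.05                   # hypothetical max valve change per 10 ms tick


@dataclass
class Action:
    pump_pressure_mpa: float
    valve_openings: list[float]   # 0.0 = closed, 1.0 = fully open
    accumulator_flow: float       # hypothetical: >0 charge, <0 discharge, in [-1, 1]


def clamp(x, lo, hi):
    return max(lo, min(hi, x))


def safety_filter(proposed, prev_valves, acc_pressure_mpa):
    """Move the policy's proposed action into the safe set; report how far it was moved."""
    pump = clamp(proposed.pump_pressure_mpa, P_MIN_MPA, P_MAX_MPA)
    valves = []
    for new, old in zip(proposed.valve_openings, prev_valves):
        # Rate-limit each valve so it cannot jump abruptly, then keep it in [0, 1].
        step = clamp(new - old, -MAX_VALVE_STEP, MAX_VALVE_STEP)
        valves.append(clamp(old + step, 0.0, 1.0))
    flow = clamp(proposed.accumulator_flow, -1.0, 1.0)
    if acc_pressure_mpa >= ACC_MAX_MPA:
        flow = min(flow, 0.0)  # accumulator at its upper limit: block further charging
    if acc_pressure_mpa <= ACC_MIN_MPA:
        flow = max(flow, 0.0)  # accumulator at its lower limit: block further discharging
    # Total size of the correction, later used as a safety penalty in the reward.
    correction = (abs(pump - proposed.pump_pressure_mpa)
                  + sum(abs(v - n) for v, n in zip(valves, proposed.valve_openings))
                  + abs(flow - proposed.accumulator_flow))
    return Action(pump, valves, flow), correction


def reward(cycle_time_s, energy_kj, position_error_m, correction,
           w_time=1.0, w_energy=0.01, w_pos=10.0, w_safety=5.0):
    """Hypothetical weighted reward: faster, cheaper, more accurate is better; clipping costs."""
    return -(w_time * cycle_time_s + w_energy * energy_kj
             + w_pos * position_error_m + w_safety * correction)
```

For example, a proposal of 40 MPa pump pressure with the accumulator already at 28.5 MPa would come back as 35 MPa with charging blocked, and the nonzero `correction` would lower the reward for that step, nudging the policy to stop proposing it.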

How well the smart controller performs

The researchers run extensive co-simulations at 100 updates per second, comparing their Bayesian RL controller with two more traditional setups: one with fixed parameters and one that mimics a skilled operator making simple manual adjustments to pressure and speed. All controllers face the same scripted 20-minute job, in which soil difficulty changes in several stages and the oil warms up from cool to hot. The learning-based controller tracks changes in soil within seconds, raising pressure when the ground gets tougher to keep cycle times steady, and lowering it when digging becomes easier to save energy and create more chances to charge the accumulator. Across many randomized runs, it cuts energy use per digging cycle by about 20–22% compared with the fixed controller and by roughly 14–18% compared with the operator-adjusted one, while also shortening cycle times and improving how accurately the bucket meets position targets. Safety events, such as pressures trying to exceed limits or relief valves opening for long periods, become rarer even though the system is pushing performance harder.

What this means for real machines

To a lay reader, the key message is that the excavator arm can learn to be both careful and frugal. By constantly updating its understanding of how hard it is to dig, how healthy its components are, and how much energy is stored in its accumulator, the controller decides in real time when to save energy and when to spend a little extra to maintain productivity. The study is still based on simulation rather than field trials, and it focuses on a single size of machine, but it shows that combining probabilistic reasoning with learning-based control can make heavy equipment significantly more efficient without sacrificing safety or speed. If similar approaches are transferred to real excavators, they could help contractors move the same amount of earth with less fuel, less wear, and lower emissions.

Citation: Hu, P., Wen, T., Zhang, D. et al. Bayesian reinforcement learning for adaptive control of energy recuperation in hydraulic excavator arms. Sci Rep 16, 6195 (2026). https://doi.org/10.1038/s41598-026-35391-y

Keywords: hydraulic excavator, energy recuperation, reinforcement learning, Bayesian control, heavy machinery efficiency