Clear Sky Science

TempReasoner: neural temporal graph networks for event timeline construction

Why timelines matter in a world of constant events

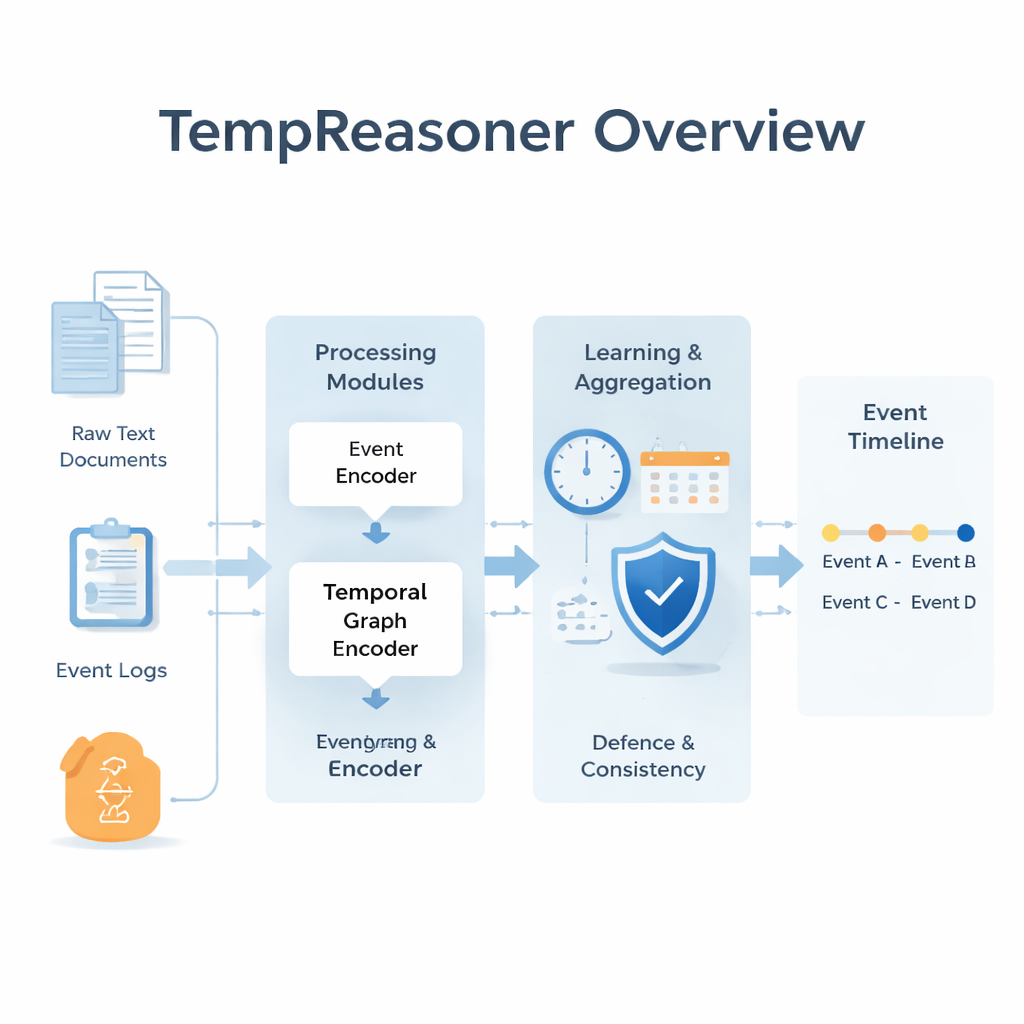

Every day, organisations drown in time-stamped information: news alerts, medical records, legal documents, sensor logs and more. Making sense of what happened, in what order, and what caused what is surprisingly hard, especially when clues are scattered across many sources. This paper presents TempReasoner, an artificial intelligence system designed to automatically turn messy, time-related data into clear, coherent event timelines that humans can trust.

From scattered data to a story of what happened

Most real-world data does not arrive as a neat chronology. A court case may span years of filings, emails and witness statements; a disease outbreak may be recorded in lab results, hospital notes and news reports. TempReasoner tackles this by treating each mention of an event as a node in a graph, connected by relationships that capture when events occur and how they relate. It reads raw text or structured records, extracts descriptions of events and their time markers, and embeds them into numerical vectors that capture both meaning (what happened) and timing (when it happened). The system is designed to work across many domains, from politics and law to medicine and engineering, without being rewritten for each field.
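The idea of treating event mentions as graph nodes linked by temporal relations can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `Event` schema, the three relation labels and the `build_event_graph` helper are all assumptions, and it uses exact timestamps where the real system works with learned embeddings.

```python
from dataclasses import dataclass
from datetime import datetime
from itertools import combinations


@dataclass(frozen=True)
class Event:
    """One event mention: what happened and when (hypothetical schema)."""
    event_id: str
    description: str
    timestamp: datetime


def temporal_relation(a: Event, b: Event) -> str:
    """Label the relation from a to b using timestamps alone."""
    if a.timestamp < b.timestamp:
        return "before"
    if a.timestamp > b.timestamp:
        return "after"
    return "simultaneous"


def build_event_graph(events):
    """Return {(id_a, id_b): relation} for every unordered pair of events."""
    return {
        (a.event_id, b.event_id): temporal_relation(a, b)
        for a, b in combinations(events, 2)
    }


# Illustrative events from an invented court case.
events = [
    Event("e1", "complaint filed", datetime(2021, 3, 1)),
    Event("e2", "witness statement taken", datetime(2021, 6, 15)),
    Event("e3", "hearing held", datetime(2021, 6, 15)),
]
graph = build_event_graph(events)
timeline = sorted(events, key=lambda e: e.timestamp)
```

Here the edges are derived directly from known timestamps; in the setting the paper describes, many timestamps are missing or vague, so the relations have to be predicted rather than computed.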

Looking at time from many angles at once

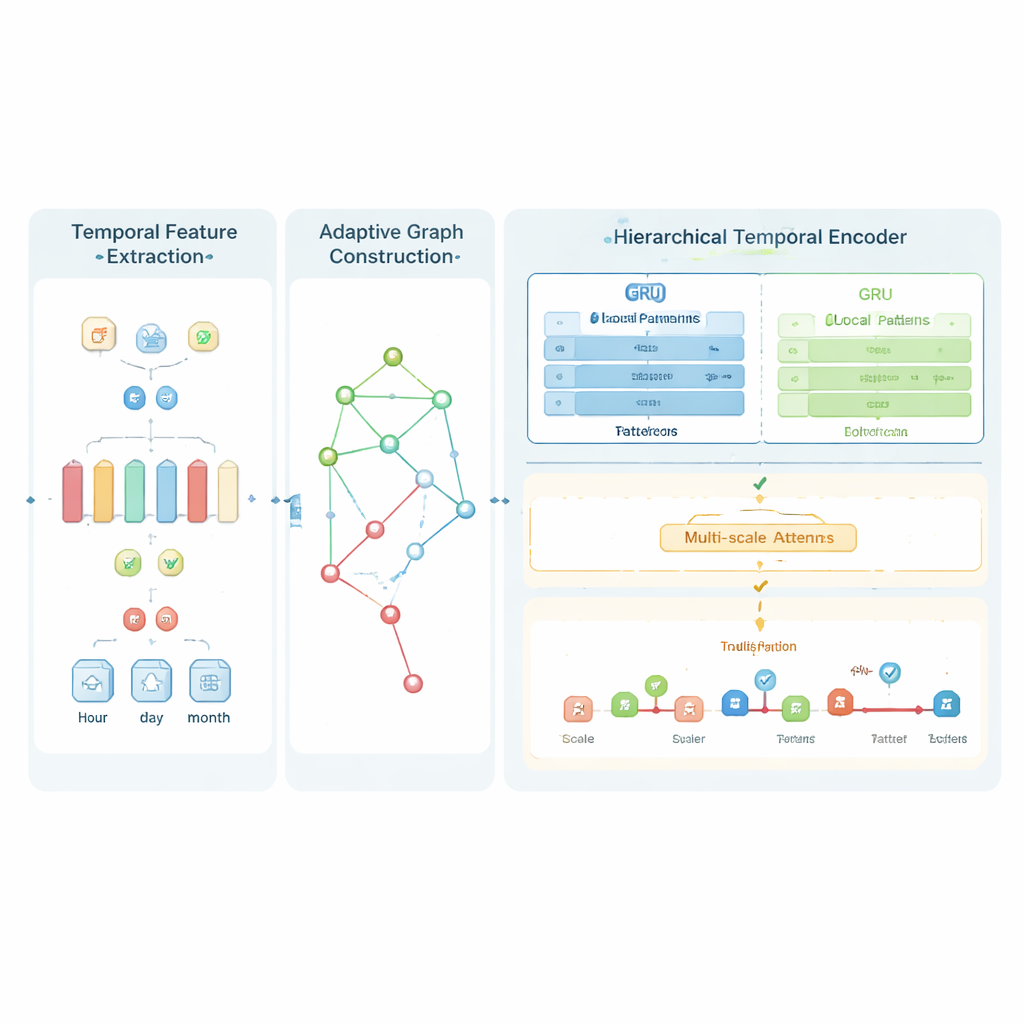

A central idea in TempReasoner is that time is not one-size-fits-all. Some questions hinge on minutes or hours, such as whether a drug dose was given before a reaction, while others depend on months or years, like the build-up to a diplomatic crisis. TempReasoner uses “multi-scale” temporal attention to detect patterns at several time resolutions at once. It separately encodes fine-grained signals (for example, at the minute or day level) and coarse trends (over months or years), then fuses them so the model can weigh short-term twists against long-term arcs. In practice, this means the system can track rapid developments, like a sequence of trades in a financial market, while still following broader storylines, such as the gradual escalation of tensions between countries.
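To make the multi-scale idea concrete, the sketch below computes attention weights over neighbouring events at several time resolutions and then blends them. It is an assumption-laden toy: the four scales in `SCALES_HOURS`, the distance-based scoring and the `scale_logits` mixing weights are hypothetical stand-ins for quantities that a trained model would learn.

```python
import math

# Hypothetical time scales, in hours: hour, day, month (~30 days), year.
SCALES_HOURS = [1.0, 24.0, 24.0 * 30, 24.0 * 365]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_at_scale(query_time, other_times, scale):
    """Attention over other events; time gaps are measured in units of `scale`."""
    scores = [-abs(query_time - t) / scale for t in other_times]
    return softmax(scores)


def multi_scale_attention(query_time, other_times, scale_logits):
    """Fuse the per-scale attention maps with a softmax over scale_logits."""
    mix = softmax(scale_logits)
    fused = [0.0] * len(other_times)
    for weight, scale in zip(mix, SCALES_HOURS):
        per_scale = attention_at_scale(query_time, other_times, scale)
        fused = [f + weight * p for f, p in zip(fused, per_scale)]
    return fused


# Event times in hours from the query event: 2 hours, 3 days and 1 year away.
other_times = [2.0, 72.0, 8760.0]
fine = attention_at_scale(0.0, other_times, SCALES_HOURS[0])
coarse = attention_at_scale(0.0, other_times, SCALES_HOURS[-1])
fused = multi_scale_attention(0.0, other_times, [0.0, 0.0, 0.0, 0.0])
```

At the hourly scale almost all attention goes to the event two hours away, whereas at the yearly scale the event a year away still receives a noticeable share. Equal `scale_logits` simply average the four views; learned values would let the model favour whichever resolution suits the question.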

Building and refining a living map of events

Instead of relying on a fixed set of links between events, TempReasoner continually learns and updates how events should be connected. Its adaptive graph construction module estimates how similar two events are in meaning and how close they are in time, then decides how strongly to link them. On top of this evolving network, a hierarchical encoder combines two components: a recurrent network that excels at following step-by-step sequences, and a transformer-style attention mechanism that can jump across long stretches of time to connect distant but related events. A specialised “consistency loss” penalises obvious contradictions, such as placing an event that is known to happen later ahead of an earlier one on the timeline, while still allowing for uncertainty when the data is vague or conflicting.
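Two of the ingredients described above, link strength from meaning plus timing and a penalty for ordering contradictions, can be illustrated as follows. This is a sketch under stated assumptions rather than the paper's formulation: the product of cosine similarity and exponential time decay, the decay constant `tau` and the hinge-style `consistency_loss` are all hypothetical choices.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def edge_weight(emb_a, emb_b, time_a, time_b, tau=24.0):
    """Link strength = semantic similarity (clipped at 0) x temporal closeness.

    tau is a hypothetical decay constant in hours.
    """
    semantic = max(0.0, cosine_similarity(emb_a, emb_b))
    temporal = math.exp(-abs(time_a - time_b) / tau)
    return semantic * temporal


def consistency_loss(predicted_times, known_before, margin=0.0):
    """Hinge penalty for each known 'a before b' pair the prediction violates."""
    loss = 0.0
    for a, b in known_before:
        loss += max(0.0, predicted_times[a] - predicted_times[b] + margin)
    return loss


# Same meaning, 1 hour apart versus 10 days apart.
w_near = edge_weight([1.0, 0.0], [1.0, 0.0], 0.0, 1.0)
w_far = edge_weight([1.0, 0.0], [1.0, 0.0], 0.0, 240.0)

# The dose is known to precede the reaction.
ok = consistency_loss({"dose": 1.0, "reaction": 3.0}, [("dose", "reaction")])
bad = consistency_loss({"dose": 5.0, "reaction": 3.0}, [("dose", "reaction")])
```

Because the penalty grows with the size of the violation instead of forbidding it outright, a model trained with such a term can still express uncertain orderings, which matches the soft behaviour the summary describes.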

Teaching the system to resolve confusing situations

Real data is messy: time expressions like “shortly after” or “around the same time” are ambiguous, and different sources may disagree. To handle this, TempReasoner adds a reinforcement learning layer that acts like a decision-making agent. After the main model proposes a draft timeline, this agent experiments with slight changes (reordering events, inserting missing links, or adjusting relationships) and is rewarded when the final timeline is more accurate and logically consistent. Over many such trials, it learns strategies for untangling difficult cases, such as reconstructing the order of medical procedures from partial notes or aligning conflicting news reports about fast-moving crises.
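The propose-edit-and-score loop can be illustrated with a much simpler stand-in. The sketch below is not reinforcement learning: it replaces the learned agent with a plain greedy search over adjacent swaps, and its `reward` simply counts satisfied ordering constraints. The event names and constraints are invented for illustration.

```python
def reward(timeline, constraints):
    """Count the (earlier, later) constraints that the timeline satisfies."""
    position = {event: i for i, event in enumerate(timeline)}
    return sum(1 for a, b in constraints if position[a] < position[b])


def refine(timeline, constraints, max_rounds=100):
    """Greedily apply adjacent swaps while they strictly increase the reward."""
    current = list(timeline)
    best = reward(current, constraints)
    for _ in range(max_rounds):
        improved = False
        for i in range(len(current) - 1):
            candidate = current[:]
            candidate[i], candidate[i + 1] = candidate[i + 1], candidate[i]
            score = reward(candidate, constraints)
            if score > best:
                current, best = candidate, score
                improved = True
        if not improved:
            break
    return current, best


# Draft timeline with one pair out of order, plus known ordering constraints.
draft = ["admission", "surgery", "scan", "discharge"]
constraints = [("admission", "scan"), ("scan", "surgery"), ("surgery", "discharge")]
final, score = refine(draft, constraints)
# final == ["admission", "scan", "surgery", "discharge"], score == 3
```

A greedy search like this stalls whenever no single swap improves the score, even if a sequence of swaps would. That limitation is one plausible motivation for a learned policy that can plan several edits ahead.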

How well it works and where it can be used

The authors tested TempReasoner on five well-known datasets that cover political events, news, and linguistically annotated timelines. The system achieved 94.3% accuracy in ordering events, surpassing a range of specialised competitors, while still running quickly enough for near real-time use, at about 127 milliseconds per event sequence. It also generalised well across domains: a model trained on legal data could adapt to biomedical records or news with only modest fine-tuning. For lighter-weight settings, such as edge devices or smaller servers, a pared-down version called TempReasoner-Lite retains most of the accuracy at a substantially lower computational cost.
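This summary does not say how ordering accuracy is computed. One common choice, assumed here purely for illustration, is the fraction of event pairs whose relative order matches the reference timeline. The sketch also converts the reported latency into a rough throughput figure, assuming sequences are processed one at a time.

```python
from itertools import combinations


def pairwise_ordering_accuracy(predicted, gold):
    """Fraction of event pairs whose relative order in `predicted` matches `gold`."""
    pred_pos = {e: i for i, e in enumerate(predicted)}
    gold_pos = {e: i for i, e in enumerate(gold)}
    pairs = list(combinations(gold, 2))
    correct = sum(
        1 for a, b in pairs
        if (pred_pos[a] < pred_pos[b]) == (gold_pos[a] < gold_pos[b])
    )
    return correct / len(pairs)


gold = ["a", "b", "c", "d"]
predicted = ["a", "c", "b", "d"]
accuracy = pairwise_ordering_accuracy(predicted, gold)  # 5 of 6 pairs agree

# Throughput implied by about 127 ms per sequence, processed sequentially.
sequences_per_second = 1000 / 127  # roughly 7.9
```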

What this means for everyday applications

In simple terms, TempReasoner is a tool for turning piles of time-stamped facts into readable, reliable stories about what happened and why. In law, it could help investigators piece together case timelines from thousands of documents. In healthcare, it could clarify the sequence of treatments and outcomes in complex patient histories. For journalists and analysts, it could support fact-checking by aligning reports and highlighting inconsistencies. The authors note that the system still struggles with extremely vague language and very long timelines, and they emphasise the need for human oversight, especially in high-stakes settings. Even so, TempReasoner represents a significant step toward AI that not only recognises events, but also understands how they unfold over time in a way that aligns with human reasoning.

Citation: Aldawsari, M. TempReasoner: neural temporal graph networks for event timeline construction. Sci Rep 16, 4985 (2026). https://doi.org/10.1038/s41598-026-35385-w

Keywords: temporal reasoning, event timelines, graph neural networks, knowledge graphs, deep learning