Clear Sky Science · en

The role of diagnosticity in judging robot competence

Why robot slip-ups matter to all of us

Robots and AI systems are rapidly moving from labs into factories, hospitals, and our streets. As we start to rely on them, one question becomes crucial: how do people decide whether a robot is truly competent? This paper explores how we judge a robot that usually performs well but occasionally makes a surprising mistake—or pulls off an unexpected success. The findings help explain why our "gut feelings" about machines can differ from what we say out loud, and why a single error can either be shrugged off or permanently change our trust.

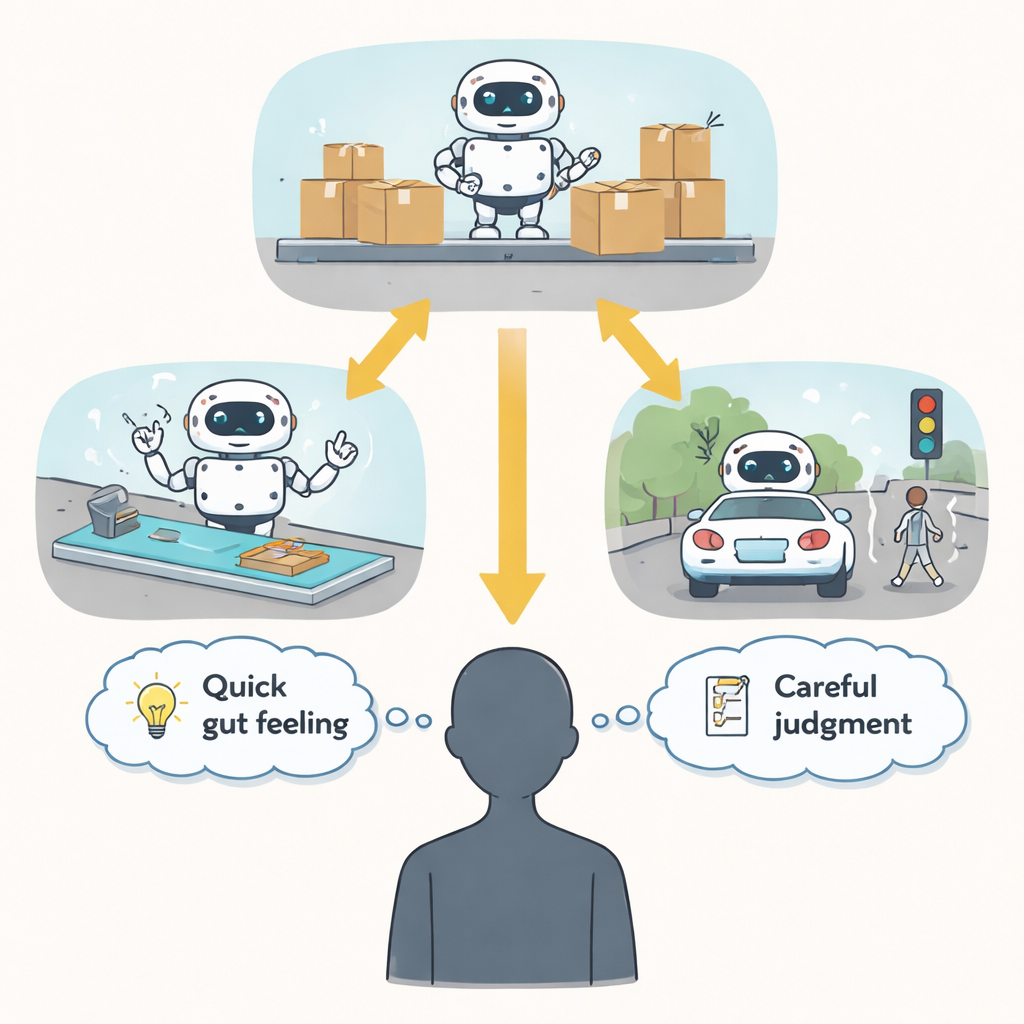

Two ways our minds judge machines

Psychologists distinguish between two kinds of impressions. Explicit impressions are the judgments we can report directly when asked, such as rating a robot on a 1–7 competence scale. Implicit impressions are more automatic, reflected in quick responses that people make without focusing on evaluation. Earlier work suggested that explicit impressions change quickly when we see new behavior, while implicit impressions are slower to move. This study asks whether that gap is really about different mental systems—or whether it depends on how informative, or diagnostic, the new behavior seems about the robot’s true abilities.

From factory floors to operating rooms and highways

Across nine online experiments with more than 3,700 participants, the researchers showed people different robots working in realistic settings: an industrial robot navigating a crowded warehouse, a surgical robot tracing precise paths, and a self-driving car reacting to obstacles in the road. Sometimes the robot was consistently skilled or consistently clumsy. In other cases, it was mostly competent but made a single clear mistake, or mostly incompetent but had one standout success. After watching short sequences of these performances, participants’ impressions were measured in two ways: direct self-report questions and indirect tasks that picked up quick, automatic reactions.

When one odd performance looms large

In these realistic scenarios, people’s explicit ratings of the robots tracked both the overall pattern of performance and the rare “oddball” trial. A lone mistake among many successes could noticeably drag down explicit ratings, and a lone success among many failures could boost them. Implicit impressions, however, told a different story. They responded strongly to the robot’s typical behavior—whether it was usually competent or not—but largely ignored the single oddball. This dissociation remained even when the researchers carefully redesigned their measures so that the “explicit” and “implicit” tasks looked and felt as similar as possible, differing mainly in whether participants were told to judge the robot on purpose.

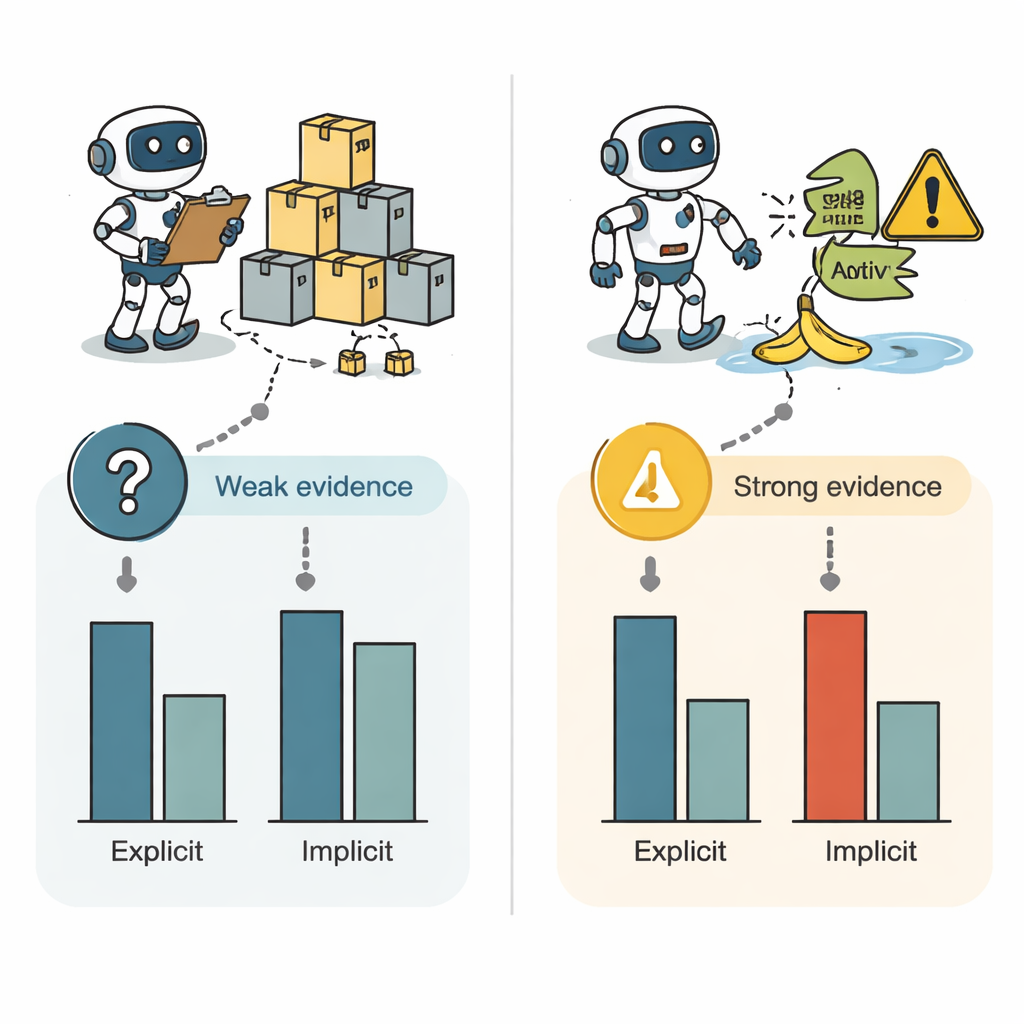

What makes a robot’s error truly telling

The team then tested a key idea: that the power of an oddball event depends on how diagnostic it seems—whether people think it really reveals something lasting about the robot. In new studies, participants first saw a robot perform several flawless trials, then later saw one or more failures. When the later failures were framed as important, recent tests, or when several failures accumulated to match the amount of earlier success, both explicit and implicit impressions shifted. Crucially, under these high-diagnostic conditions even a single error was enough to nudge automatic, implicit impressions. When the same kind of misstep was described as outdated, unimportant, or a one-off fluke, implicit impressions barely budged, even though explicit ratings still moved.

Why this matters for everyday trust in AI

Taken together, the findings suggest that our quick, automatic impressions of robot competence are not blind to new information, but they demand stronger or more clearly meaningful evidence before they change. Our spoken ratings, in contrast, are highly responsive even to weak or ambiguous events, partly because we feel pressure to use whatever information we have when someone asks for a judgment. For designers, engineers, and policymakers, this means that managing trust in robots is not only about reducing errors, but also about how those errors are framed and understood. A single highly telling failure—like a car not stopping when it obviously should—can reshape both our gut feelings and our stated opinions, with direct consequences for how willing we are to adopt and rely on intelligent machines.

Citation: Surdel, N., Ferguson, M.J. The role of diagnosticity in judging robot competence. Sci Rep 16, 7578 (2026). https://doi.org/10.1038/s41598-026-35375-y

Keywords: robot competence, human-robot interaction, implicit bias, trust in AI, diagnostic information