Clear Sky Science

Multi-class eye disease classification using deep learning EfficientNetB0 fusion techniques

Why early eye checks matter

Loss of sight often creeps in quietly. Common eye problems like cataracts, glaucoma and diabetic damage to the retina can steal vision long before symptoms are obvious. Around the world, there are not enough eye specialists to check everyone in time, especially in rural or low‑income areas. This study explores how a smart computer system that reads photographs of the back of the eye could help doctors spot several major eye diseases early and reliably, using the same kind of artificial intelligence that powers modern image search and face recognition.

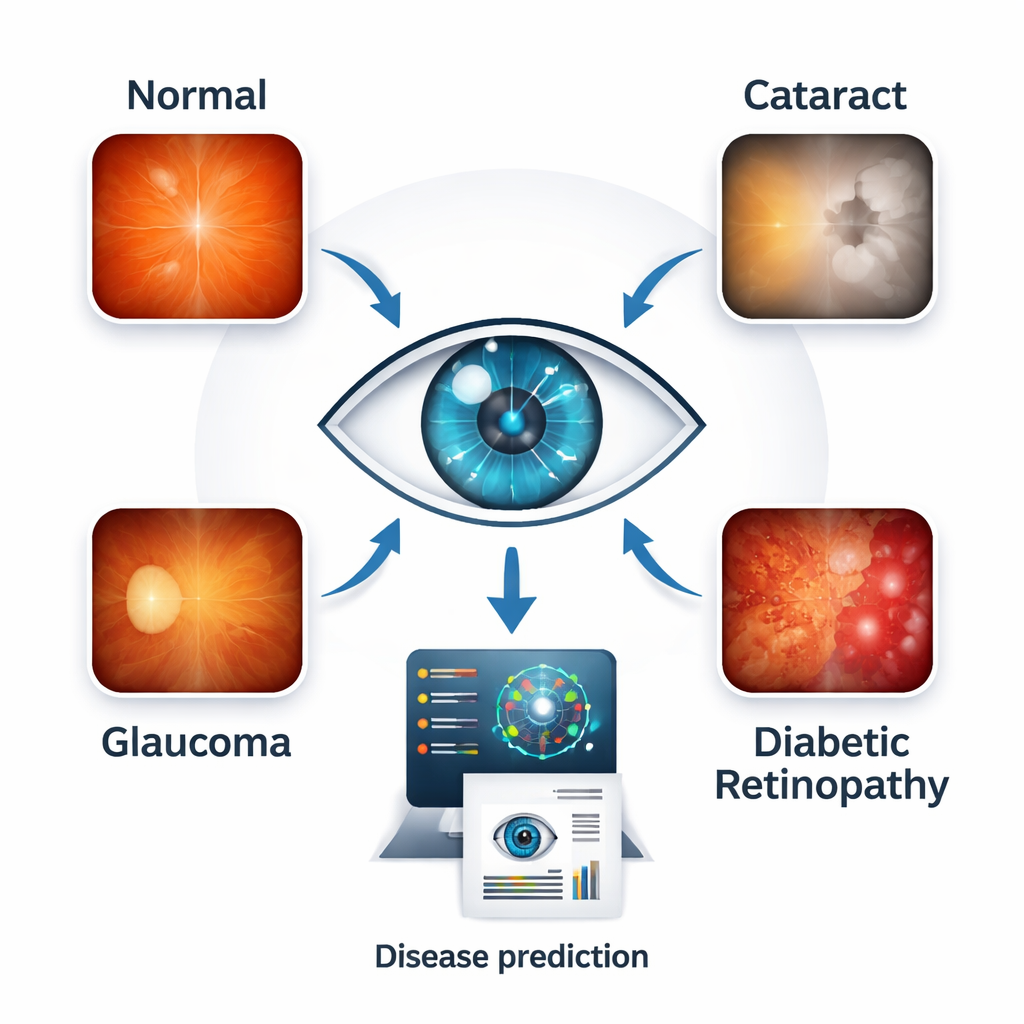

Seeing disease in a single snapshot

Eye doctors already use color photographs of the retina, the light‑sensitive layer at the back of the eye, to look for disease. In these images, cataracts show up as an overall haze, because the clouded lens blurs the view of the retina; glaucoma alters the shape of the optic nerve head; and diabetic retinopathy sprinkles the retina with tiny leaks and scars. The researchers gathered 4,217 high‑resolution retinal images, neatly balanced across four groups: healthy eyes, cataract, glaucoma and diabetic retinopathy. Drawing this balanced collection from several public sources reduced the risk that the computer would learn shortcuts, such as cues tied to one hospital or camera, or a tendency to favor whichever disease happened to be most common, rather than the real signs of illness.
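
Balance only protects the evaluation if the held‑out images keep the same class mix. One common way to guarantee that is a stratified split, sketched below in plain Python. The 20% test fraction, the class names and the 100‑images‑per‑class toy labels are illustrative assumptions; the summary does not say how the study divided its data.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) so each class keeps roughly the same share in both parts."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train_idx, test_idx = [], []
    for label, idxs in sorted(by_class.items()):
        rng.shuffle(idxs)  # shuffle within the class only
        n_test = round(len(idxs) * test_fraction)
        test_idx.extend(idxs[:n_test])
        train_idx.extend(idxs[n_test:])
    return sorted(train_idx), sorted(test_idx)


# Hypothetical toy labels: 100 images per class, not the study's real counts.
classes = ["normal", "cataract", "glaucoma", "diabetic_retinopathy"]
labels = [c for c in classes for _ in range(100)]
train_idx, test_idx = stratified_split(labels, test_fraction=0.2)
print(Counter(labels[i] for i in test_idx))
```

Splitting class by class, rather than shuffling the whole pool at once, keeps the test set exactly as balanced as the full collection.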

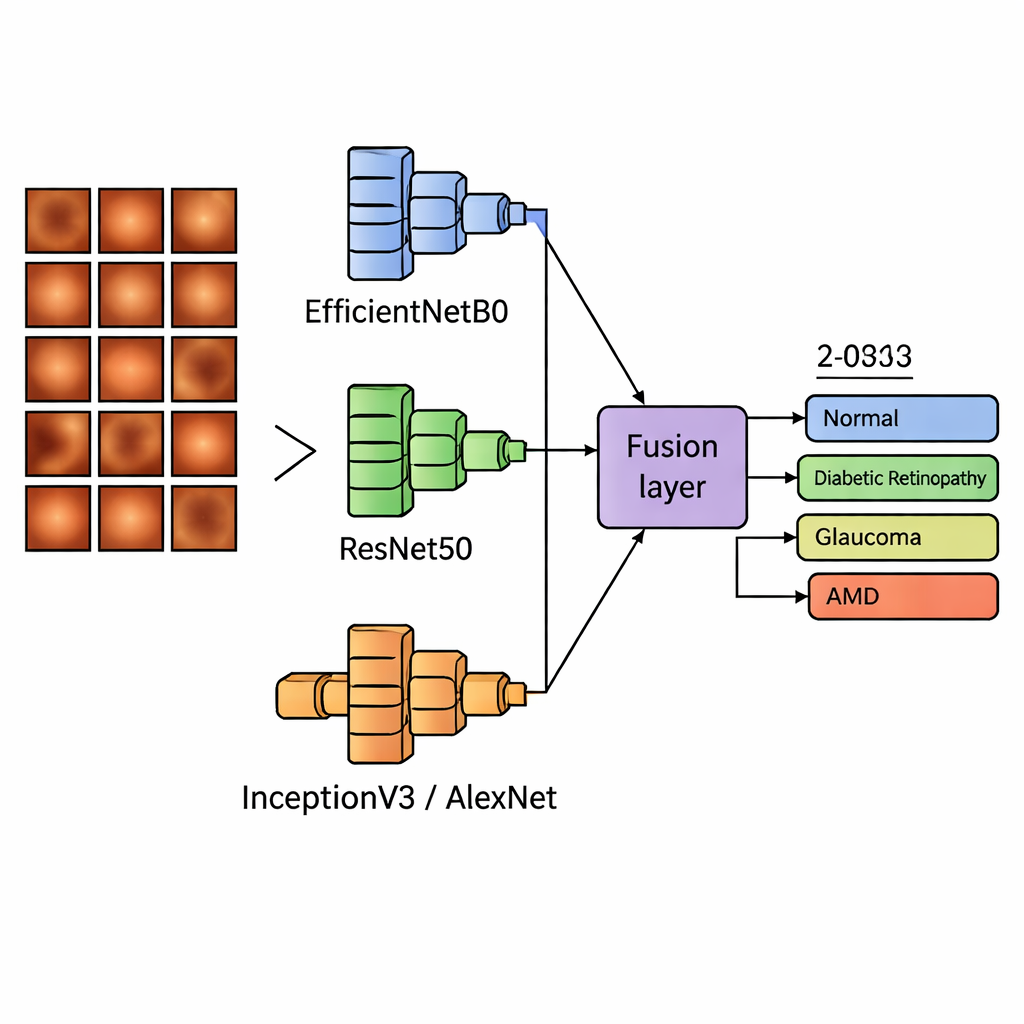

Letting two brains work together

Modern image‑reading programs, called deep learning models, are very good at spotting patterns, but each has its own strengths and blind spots. Instead of relying on a single model, the team built “dual‑backbone” systems that run two well‑known image networks in parallel and then blend what they see. One of these networks, EfficientNetB0, is a compact, efficient model that captures general structure in images; it served as the base in every system. It was paired in turn with three other models: ResNet50, which is built for depth; InceptionV3, which looks for patterns at several scales at once; and AlexNet, an older, shallower and computationally lighter network. The systems then combined the two sets of features in four ways: by simply joining them, adding them, weighting them differently, or letting each model vote on the final answer.
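
The four fusion strategies can be illustrated on toy feature vectors. In a real dual‑backbone model, each network's pooled features (1,280 values for EfficientNetB0, 2,048 for ResNet50) would feed a classifier head. Element‑wise addition and weighting only work once both vectors share a length, so this sketch assumes they have already been projected to a common size. The vectors, the 0.6 weight and the use of soft voting (averaging class probabilities) are hypothetical choices, not details reported in the summary.

```python
from typing import List

Vector = List[float]


def concat_fusion(a: Vector, b: Vector) -> Vector:
    """Join the two feature vectors end to end; output length is len(a) + len(b)."""
    return a + b


def add_fusion(a: Vector, b: Vector) -> Vector:
    """Element-wise sum; requires both vectors to have the same length."""
    if len(a) != len(b):
        raise ValueError("addition fusion needs equal-length features")
    return [x + y for x, y in zip(a, b)]


def weighted_fusion(a: Vector, b: Vector, w: float = 0.6) -> Vector:
    """Convex combination w*a + (1-w)*b; the default weight is an arbitrary example."""
    if len(a) != len(b):
        raise ValueError("weighted fusion needs equal-length features")
    return [w * x + (1 - w) * y for x, y in zip(a, b)]


def soft_vote(probs_a: Vector, probs_b: Vector) -> int:
    """Average the two models' class probabilities and return the winning class index."""
    avg = [(p + q) / 2 for p, q in zip(probs_a, probs_b)]
    return max(range(len(avg)), key=avg.__getitem__)


feat_eff = [0.2, 0.8, 0.1]  # hypothetical EfficientNetB0 features
feat_res = [0.5, 0.1, 0.4]  # hypothetical ResNet50 features, already projected
print(concat_fusion(feat_eff, feat_res))
print(add_fusion(feat_eff, feat_res))
print(weighted_fusion(feat_eff, feat_res))

# Class order (assumed): normal, cataract, glaucoma, diabetic retinopathy
probs_eff = [0.10, 0.20, 0.60, 0.10]
probs_res = [0.05, 0.50, 0.35, 0.10]
print(soft_vote(probs_eff, probs_res))  # 2, i.e. glaucoma
```

The first three functions fuse features before classification, while voting fuses each model's final probabilities; that difference is why joining features can exploit interactions between the two networks that voting cannot.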

Putting the system to the test

The researchers trained and tuned 12 different model combinations (each of the three pairings with each of the four fusion methods) on most of their retinal images, keeping some aside to check performance. On this internal test, the best approach joined features from EfficientNetB0 and ResNet50, reaching about 95% overall accuracy and an almost perfect score on a standard measure of diagnostic quality. Similar combinations with InceptionV3 and AlexNet also performed strongly. To see whether the system could handle real‑world variety rather than just memorizing its training set, the team then tested all models on 400 images from two independent collections taken in different hospitals with different cameras. Here, accuracy held up or even rose, ranging from about 95% to 98%, and all models maintained very high scores on how well they separated diseased from healthy eyes.
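
The summary does not name its “standard measure of diagnostic quality”; the area under the ROC curve (AUC) is a common choice for this kind of classifier, so the sketch below assumes it. For four classes, AUC is often computed one‑vs‑rest for each class and then averaged (macro‑averaged). The six toy predictions are invented for illustration, and the pairwise loop is O(n²), fine for a sketch but slow for large test sets.

```python
from typing import List, Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of images whose predicted class matches the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def binary_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the chance a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def macro_ovr_auc(y_true: Sequence[int], probs: List[List[float]], n_classes: int) -> float:
    """One-vs-rest AUC for each class, averaged with equal weight per class."""
    aucs = []
    for c in range(n_classes):
        labels = [1 if t == c else 0 for t in y_true]
        scores = [row[c] for row in probs]
        aucs.append(binary_auc(labels, scores))
    return sum(aucs) / n_classes


# Hypothetical toy outputs; class order: normal, cataract, glaucoma, diabetic retinopathy
y_true = [0, 1, 2, 3, 2, 1]
probs = [
    [0.7, 0.1, 0.1, 0.1],
    [0.1, 0.6, 0.2, 0.1],
    [0.1, 0.2, 0.6, 0.1],
    [0.1, 0.1, 0.2, 0.6],
    [0.2, 0.1, 0.4, 0.3],
    [0.3, 0.2, 0.4, 0.1],  # a cataract image the model calls glaucoma
]
y_pred = [max(range(4), key=row.__getitem__) for row in probs]
print(accuracy(y_true, y_pred))
print(macro_ovr_auc(y_true, probs, 4))
```

In this toy data one cataract image is mistaken for glaucoma, so accuracy is 5/6 while the macro AUC stays near 0.97. The two numbers measure different things: accuracy counts final decisions, while AUC rewards ranking each class's true cases above the rest.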

Looking inside the black box

Doctors and regulators increasingly ask not only “How accurate is it?” but also “Why does it decide that way?” To answer this, the authors used visualization tools such as Score‑CAM and LIME. These tools highlight which parts of an image most influence the model’s verdict, turning the system’s “attention” into heatmaps overlaid on the retina. For diabetic retinopathy, the highlighted areas lined up with leaking blood vessels and spots near the macula, the sharp‑vision center. For glaucoma, the focus was on the optic nerve head and surrounding tissue, where damage occurs. Cataract‑related decisions emphasized diffuse clouding along the visual path. Crucially, normal eyes did not show strong, misplaced hotspots. This close match between the model’s focus and textbook anatomy suggests that the system is keying in on the same features that clinicians use in practice.
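
Score‑CAM, one of the two tools named above, builds its heatmap without gradients: each internal activation map is rescaled to the range 0 to 1, used as a mask over the input image, and weighted by how much the masked image raises the target class score over a blank baseline. The pure‑Python sketch below shows that idea under stated assumptions: the 4×4 “image”, the hypothetical `glaucoma_score` function and the two activation maps are toy stand‑ins, not the study's network; the maps are assumed to be already upsampled to image size; and the softmax over score gains is one common implementation choice, since details vary between implementations.

```python
import math
from typing import Callable, List

Grid = List[List[float]]


def min_max(grid: Grid) -> Grid:
    """Rescale a 2-D map to [0, 1]; a flat map becomes all zeros."""
    lo = min(min(row) for row in grid)
    hi = max(max(row) for row in grid)
    if hi == lo:
        return [[0.0] * len(row) for row in grid]
    return [[(v - lo) / (hi - lo) for v in row] for row in grid]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def score_cam(image: Grid, activations: List[Grid], score_fn: Callable[[Grid], float]) -> Grid:
    """Score-CAM style saliency for one target class (activations already at image size)."""
    baseline = [[0.0] * len(row) for row in image]
    base_score = score_fn(baseline)
    gains = []
    for act in activations:
        mask = min_max(act)
        masked = [[p * m for p, m in zip(prow, mrow)] for prow, mrow in zip(image, mask)]
        gains.append(score_fn(masked) - base_score)  # score increase over the baseline
    weights = softmax(gains)
    rows, cols = len(image), len(image[0])
    # Weighted sum of activation maps, then ReLU, then rescale for display.
    heat = [[max(0.0, sum(w * act[r][c] for w, act in zip(weights, activations)))
             for c in range(cols)] for r in range(rows)]
    return min_max(heat)


def glaucoma_score(img: Grid) -> float:
    """Hypothetical classifier: mean brightness of the top-left 2x2 patch (an 'optic disc' stand-in)."""
    return sum(img[r][c] for r in range(2) for c in range(2)) / 4


image = [[1.0] * 4 for _ in range(4)]
disc_map = [[1.0 if r < 2 and c < 2 else 0.0 for c in range(4)] for r in range(4)]
corner_map = [[1.0 if r >= 2 and c >= 2 else 0.0 for c in range(4)] for r in range(4)]
heat = score_cam(image, [disc_map, corner_map], glaucoma_score)
for row in heat:
    print([round(v, 2) for v in row])
```

Because only the map covering the “optic disc” patch raises the toy glaucoma score, that region ends up brightest in the heatmap, which mirrors how the study's glaucoma heatmaps concentrated on the optic nerve head.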

What this could mean for everyday care

For a non‑specialist, the bottom line is that a single retinal photograph, taken with a standard camera, could soon help screen for several major causes of blindness at once. The dual‑network design and smart ways of fusing their outputs gave not only high accuracy but also stable results when images came from new clinics and devices—a must for real‑world use. While more testing on larger and more varied populations is still needed, especially before the technology can guide treatment on its own, this work shows that combining different types of artificial “eyes” can produce fast, trustworthy second opinions. In busy hospitals, small clinics or mobile screening units, such tools could help flag people who most urgently need to see an eye doctor, potentially preserving sight for millions.

Citation: Sah, U.K., Chatterjee, J.M. & Sujatha, R. Multi-class eye disease classification using deep learning EfficientNetB0 fusion techniques. Sci Rep 16, 6368 (2026). https://doi.org/10.1038/s41598-026-35357-0

Keywords: eye disease, retinal imaging, deep learning, glaucoma, diabetic retinopathy