Clear Sky Science

Quantifying central canal stenosis prediction uncertainty in SpineNet with conformal prediction

Why smarter spine scans matter

Lower back pain sends millions of people to the doctor each year, and MRI scans of the spine are a key tool for figuring out who needs surgery and who can be treated more conservatively. Increasingly, computers help radiologists by automatically grading how narrowed the spinal canal is—a condition called central canal stenosis. But doctors rightly ask a critical question: how sure is the computer about its answer? This study shows how to turn a black‑box spine AI system, called SpineNet, into one that can say not just what it thinks, but also how uncertain it is—providing a more honest and clinically useful second opinion.

From single guesses to honest ranges

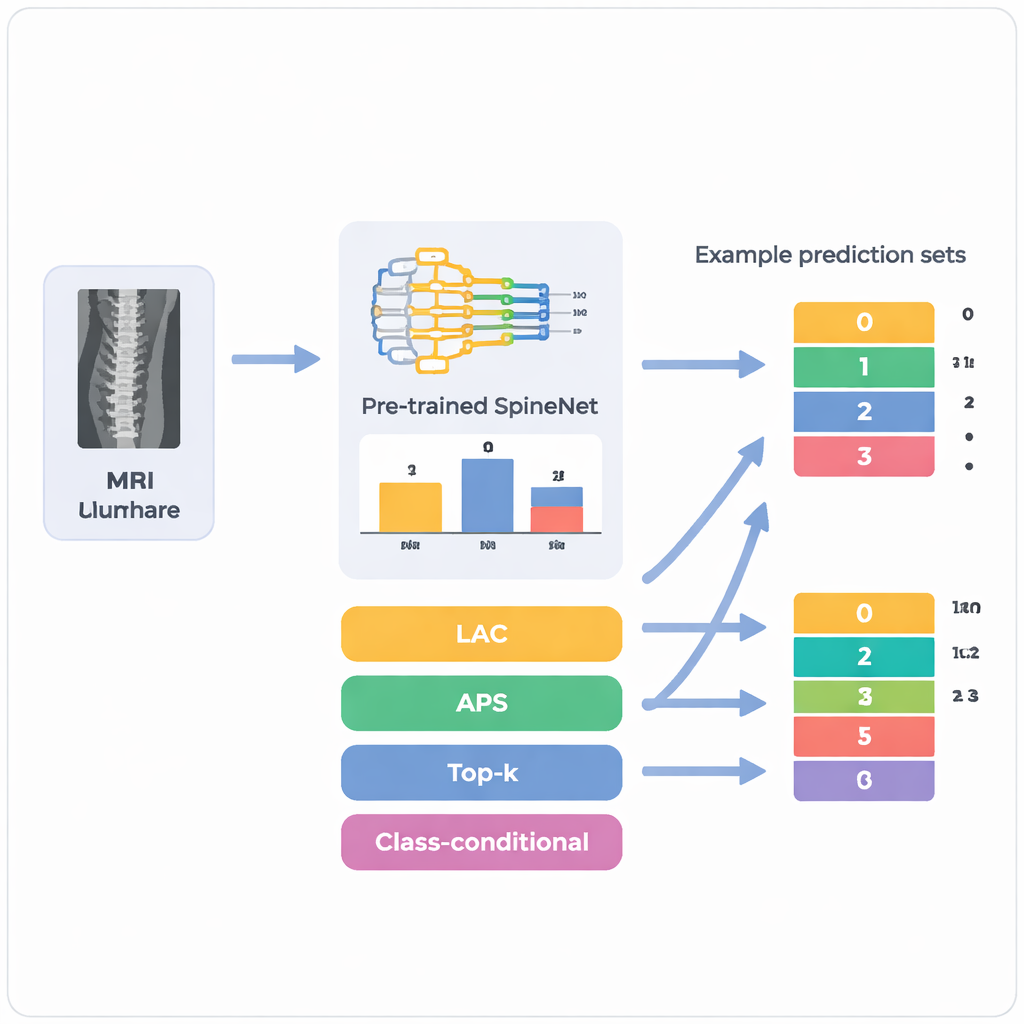

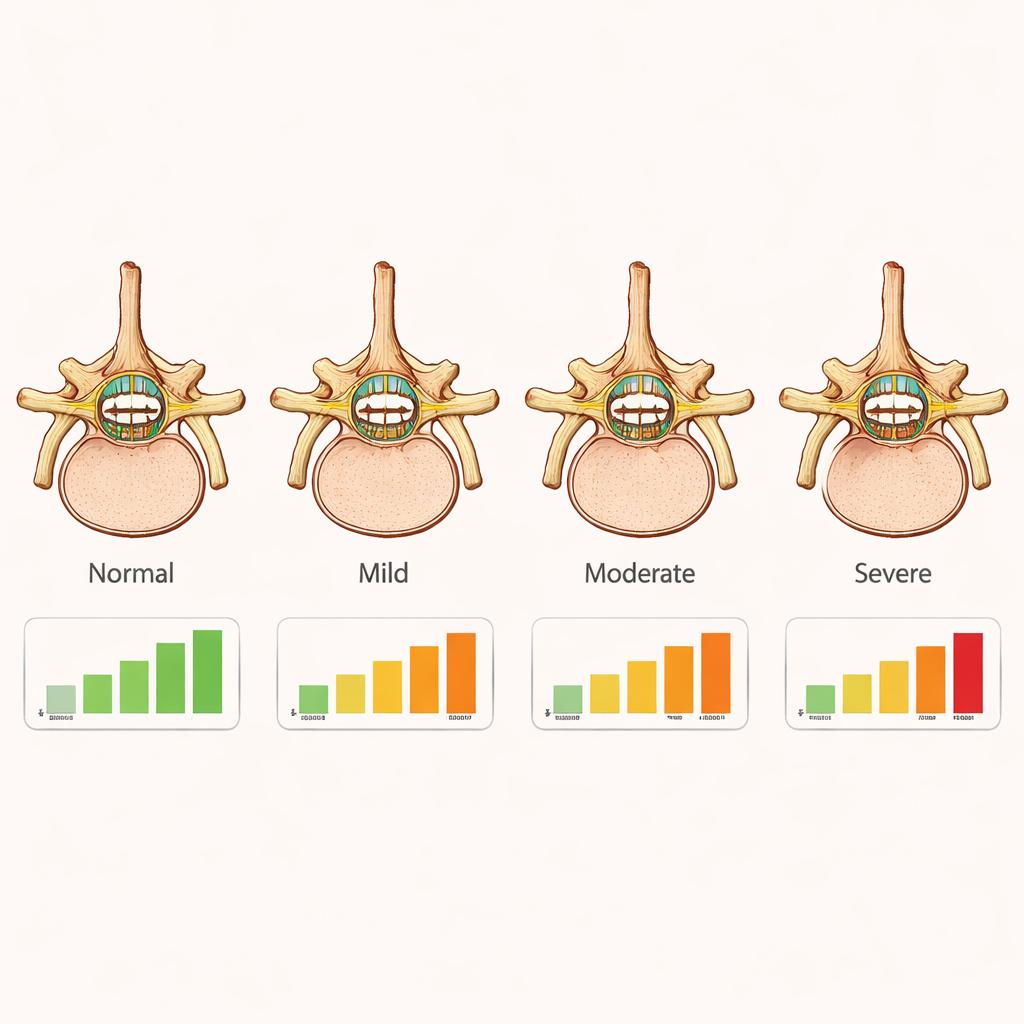

Most artificial intelligence systems used in medicine behave like very confident students who always give a single answer, even when they are unsure. SpineNet, for example, looks at lumbar spine MRI scans and assigns each disc level one of four grades: normal, mild, moderate, or severe stenosis. What it does not say is when more than one grade seems plausible. The authors apply a statistical framework called conformal prediction, which wraps around an existing model and converts its raw confidence scores into small sets of possible answers. Instead of saying “mild” full stop, the system might say “mild or moderate” and attach a guarantee that, over many cases, the true grade lies somewhere in that set at least a chosen fraction of the time. In this study that target ranged from 85% to 95%, depending on how strict clinicians choose to be.
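To make the idea concrete, here is a minimal sketch of one common form of conformal prediction, often called split conformal prediction. It is an illustration under stated assumptions, not the authors' code: the scoring rule (one minus the probability given to the true grade), the function names, and the nine calibration cases are all hypothetical, and the summary above does not say which scoring rules the study used.

```python
import math

GRADES = ["normal", "mild", "moderate", "severe"]

def conformal_threshold(cal_probs, cal_labels, alpha):
    """Return a score threshold learned from a calibration set.

    cal_probs: list of 4-element probability lists (one per disc level)
    cal_labels: list of true grade indices (0..3)
    alpha: tolerated error rate, e.g. 0.10 for a 90% coverage target
    """
    # Assumed score: 1 minus the probability the model gave the true grade.
    scores = sorted(1.0 - p[y] for p, y in zip(cal_probs, cal_labels))
    n = len(scores)
    # Finite-sample corrected rank: ceil((n + 1) * (1 - alpha)).
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        return 1.0  # too few calibration cases: every grade gets included
    return scores[rank - 1]

def prediction_set(probs, threshold):
    """List every grade whose score does not exceed the threshold."""
    return [GRADES[k] for k, p in enumerate(probs) if 1.0 - p <= threshold]

# Invented calibration data: model probabilities and the true grade index.
CAL_PROBS = [
    [0.90, 0.07, 0.02, 0.01], [0.15, 0.80, 0.04, 0.01],
    [0.05, 0.20, 0.70, 0.05], [0.02, 0.03, 0.10, 0.85],
    [0.30, 0.60, 0.08, 0.02], [0.95, 0.03, 0.01, 0.01],
    [0.05, 0.35, 0.55, 0.05], [0.10, 0.75, 0.10, 0.05],
    [0.05, 0.15, 0.40, 0.40],
]
CAL_LABELS = [0, 1, 2, 3, 1, 0, 2, 1, 3]

q = conformal_threshold(CAL_PROBS, CAL_LABELS, alpha=0.10)
print(prediction_set([0.90, 0.07, 0.02, 0.01], q))  # a confident case
print(prediction_set([0.05, 0.50, 0.42, 0.03], q))  # an ambiguous case
```

In this toy setup a confident case keeps a single grade, while a case where two neighboring grades have similar probabilities keeps both. Lowering `alpha` raises the threshold and makes the sets larger.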

Testing the approach on real patients

The team started from 340 older adults with symptoms of lumbar spinal stenosis who had undergone MRI at a Swiss hospital. SpineNet automatically graded up to five lumbar disc levels per person, producing 1,689 disc levels in total. For each level, the researchers extracted the model’s internal probabilities for the four stenosis grades and then applied four flavors of conformal prediction. They split the data into calibration and test subsets 1,000 times and varied how much error they were willing to tolerate. This intensive resampling let them see not only how often the true grade fell inside each prediction set, but also how large those sets tended to be overall and for each severity grade.
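The resampling procedure can be sketched roughly as follows. Everything here is an assumption made for illustration: the synthetic data generator, the 50/50 split, the score (one minus the true-grade probability), and the reduced number of cases and repetitions. It shows only the general pattern of measuring coverage and set size over repeated calibration/test splits, not the study's actual pipeline.

```python
import math
import random

def threshold(scores, alpha):
    """Finite-sample conformal quantile of the calibration scores."""
    ordered = sorted(scores)
    rank = math.ceil((len(ordered) + 1) * (1 - alpha))
    return 1.0 if rank > len(ordered) else ordered[rank - 1]

def synthetic_case(rng):
    """Hypothetical stand-in for one graded disc level: (probabilities, true grade)."""
    y = rng.choices(range(4), weights=[0.5, 0.3, 0.15, 0.05])[0]
    # Noisy logits with a boost for the true grade, then a softmax.
    logits = [rng.gauss(0.0, 1.0) + (2.0 if k == y else 0.0) for k in range(4)]
    z = sum(math.exp(v) for v in logits)
    return [math.exp(v) / z for v in logits], y

def evaluate_split(cases, rng, alpha, cal_fraction=0.5):
    """One random calibration/test split: return (coverage, mean set size)."""
    shuffled = cases[:]
    rng.shuffle(shuffled)
    n_cal = int(len(shuffled) * cal_fraction)
    cal, test = shuffled[:n_cal], shuffled[n_cal:]
    q = threshold([1.0 - p[y] for p, y in cal], alpha)
    hits, total_size = 0, 0
    for p, y in test:
        in_set = [1.0 - pk <= q for pk in p]
        hits += in_set[y]          # True counts as 1 when the true grade is covered
        total_size += sum(in_set)  # number of grades in this prediction set
    return hits / len(test), total_size / len(test)

rng = random.Random(0)
cases = [synthetic_case(rng) for _ in range(400)]
results = [evaluate_split(cases, rng, alpha=0.10) for _ in range(200)]
mean_coverage = sum(c for c, _ in results) / len(results)
mean_set_size = sum(s for _, s in results) / len(results)
print(f"mean coverage: {mean_coverage:.3f}, mean set size: {mean_set_size:.2f}")
```

Averaging over many splits separates the two questions the study asks: whether the coverage target is met, and how many grades the sets need to contain to meet it.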

Finding the sweet spot between safety and usefulness

All four methods could be tuned so that their overall success rate matched the desired target, but they differed sharply in how informative they were. A simple “top‑k” method achieved perfect coverage at strict settings by almost always listing all four grades, which is mathematically safe but nearly useless in practice. Another pair of methods, designed to adaptively grow the prediction set, often struggled with the tricky moderate and severe cases, missing the target coverage even when they produced large sets. The clear winner was a class‑conditional method that learns a separate uncertainty threshold for each grade. It reliably hit the requested coverage while keeping prediction sets as small as possible—often just one or two grades for common normal and mild cases, and only slightly larger sets for less frequent moderate and severe stenosis, where human experts themselves tend to disagree.
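A class-conditional approach of the kind described above can be sketched as follows. This is a hedged illustration, not the published implementation: the score (one minus the probability assigned to a grade), the function names, and the twelve calibration cases are invented for the example. The key difference from a single global threshold is that each grade is calibrated only on cases whose true grade it is.

```python
import math
from collections import defaultdict

GRADES = ["normal", "mild", "moderate", "severe"]

def class_thresholds(cal_probs, cal_labels, alpha):
    """Learn one score threshold per grade from the calibration cases of that grade."""
    by_class = defaultdict(list)
    for p, y in zip(cal_probs, cal_labels):
        by_class[y].append(1.0 - p[y])  # assumed score: 1 - P(true grade)
    thresholds = []
    for k in range(len(GRADES)):
        scores = sorted(by_class[k])
        rank = math.ceil((len(scores) + 1) * (1 - alpha))
        # With too few calibration cases for a grade, always include it.
        thresholds.append(1.0 if rank > len(scores) else scores[rank - 1])
    return thresholds

def class_conditional_set(probs, thresholds):
    """Include grade k when its score passes that grade's own threshold."""
    return [GRADES[k] for k, p in enumerate(probs) if 1.0 - p <= thresholds[k]]

# Invented calibration data, three cases per grade.
CAL_PROBS = [
    [0.90, 0.07, 0.02, 0.01], [0.85, 0.10, 0.04, 0.01], [0.80, 0.15, 0.04, 0.01],
    [0.15, 0.80, 0.04, 0.01], [0.20, 0.70, 0.08, 0.02], [0.30, 0.60, 0.08, 0.02],
    [0.05, 0.30, 0.60, 0.05], [0.05, 0.35, 0.50, 0.10], [0.05, 0.40, 0.35, 0.20],
    [0.02, 0.08, 0.20, 0.70], [0.05, 0.10, 0.40, 0.45], [0.05, 0.15, 0.50, 0.30],
]
CAL_LABELS = [0, 0, 0, 1, 1, 1, 2, 2, 2, 3, 3, 3]

per_grade = class_thresholds(CAL_PROBS, CAL_LABELS, alpha=0.25)
print(per_grade)
print(class_conditional_set([0.02, 0.61, 0.36, 0.01], per_grade))
print(class_conditional_set([0.02, 0.08, 0.40, 0.50], per_grade))
```

In this toy data the model is less sure of itself on moderate and severe cases, so those grades end up with looser thresholds and are admitted into prediction sets more readily than normal or mild.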

What the model struggles with in the spine

By looking level by level along the lumbar spine, the authors showed that the system’s uncertainty lines up with real‑world clinical difficulty. In upper lumbar levels, where cases are mostly normal or mildly narrowed, prediction sets were small and reliable. At levels like L3/L4 and L4/L5, where severe narrowing is more common but still relatively rare, the uncertainty grew: prediction sets for severe cases were larger and coverage more variable. This reflects both the uneven distribution of disease and the fact that the underlying SpineNet model is less accurate for mild and moderate grades than for clearly normal or clearly severe ones. Importantly, the conformal layer exposes that weakness instead of hiding it, flagging exactly those cases where a radiologist should be especially cautious.

What this means for patients and clinicians

For patients, the work does not replace the radiologist; it makes automated tools more trustworthy. Rather than accepting a spine AI’s verdict as all‑or‑nothing, clinicians can now see when the algorithm is on solid ground and when it is hedging between neighboring grades. That transparency makes it easier to decide when to rely on the model, when to seek another opinion, and how to weigh imaging against symptoms when discussing surgery. Because the conformal prediction layer can be added to many existing systems without retraining them, this approach offers a practical path toward AI that not only reads spine MRIs, but also knows—and clearly communicates—its own limits.

Citation: Cina, A., Monzon, M., Galbusera, F. et al. Quantifying central canal stenosis prediction uncertainty in SpineNet with conformal prediction. Sci Rep 16, 4963 (2026). https://doi.org/10.1038/s41598-026-35343-6

Keywords: spinal stenosis, medical AI, MRI imaging, uncertainty estimation, conformal prediction