Clear Sky Science

A multi-modal approach for recognizing fake news and influential nodes in spreading them using deep learning and network analysis

Why this matters to everyday life

Every day, millions of people scroll through social media, seeing posts about health, politics, money, and more. Mixed in with helpful information are rumors and fake news that can spark fear, confusion, or even real‑world harm. This study introduces a method that does two things at once: it automatically spots false or misleading posts, and it maps out who is most responsible for spreading them through an online community. The work focuses on Twitter messages about Covid‑19, but the ideas could help platforms, journalists, and the public respond faster and more precisely to harmful misinformation in many areas of life.

How rumors ripple through online crowds

Social networks such as Twitter, Facebook, or messaging apps can be thought of as huge webs of people (nodes) connected by their interactions (links). When one user posts a message and others reply or share it, that information can quickly ripple across the web. Rumors—unverified or false claims—behave much like contagious diseases: they can jump from person to person, grow rapidly, and be hard to stop. Past research has often treated two questions separately: how to tell whether a given post is a rumor, and how to find the key “spreaders” who help that rumor reach many others. The authors argue that tackling both questions together, while also paying attention to how the network is wired and how activity changes over time, gives a far clearer picture of how false stories move.

Teaching a computer to read and flag doubtful posts

The first part of the method focuses on the content of each tweet. The researchers treat every tweet as a short document and clean it up by removing clutter such as extra symbols, replacing web links and email addresses with simple tags, and stripping out common filler words that add little meaning. They then translate each word into a numerical vector using a widely used technique called GloVe, which captures how words tend to appear together across large collections of text. Averaging these word vectors turns each tweet into a compact numerical summary of its meaning. These summaries are then fed into a one‑dimensional convolutional neural network, a type of deep learning model that can detect subtle patterns, which decides whether a tweet is a genuine message or a rumor.
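For readers who want to see the cleaning and averaging steps in concrete form, here is a minimal Python sketch. It is an illustration under stated assumptions, not the authors' code: the stop-word list, the `<url>` and `<email>` tag names, and the three-dimensional `TOY_VECTORS` table are all made up for the example and stand in for real pretrained GloVe vectors. The neural network that classifies the resulting summaries is not shown.

```python
import re

# Hypothetical stop-word list; the study's actual list is not given in this summary.
STOP_WORDS = {"the", "a", "an", "is", "are", "of", "to", "and", "in", "at", "for"}

# Toy 3-dimensional vectors standing in for pretrained GloVe embeddings (assumption).
TOY_VECTORS = {
    "vaccine": [0.9, 0.1, 0.0],
    "cures": [0.2, 0.8, 0.1],
    "covid": [0.7, 0.2, 0.3],
    "<url>": [0.0, 0.0, 1.0],
}

URL_RE = re.compile(r"https?://\S+|www\.\S+")
EMAIL_RE = re.compile(r"\S+@\S+\.\S+")


def clean_tweet(text):
    """Lowercase, tag links and emails, drop symbols and stop words."""
    text = text.lower()
    text = URL_RE.sub(" <url> ", text)      # replace web links with a tag
    text = EMAIL_RE.sub(" <email> ", text)  # replace email addresses with a tag
    # Keep letters, digits, whitespace, and the angle brackets used by the tags.
    text = re.sub(r"[^a-z0-9<>\s]", " ", text)
    return [tok for tok in text.split() if tok not in STOP_WORDS]


def embed_tweet(tokens, vectors=TOY_VECTORS, dim=3):
    """Average the vectors of known tokens; unknown tokens are skipped."""
    known = [vectors[tok] for tok in tokens if tok in vectors]
    if not known:
        return [0.0] * dim  # no known words: fall back to a zero vector
    return [sum(vec[i] for vec in known) / len(known) for i in range(dim)]


tokens = clean_tweet("The vaccine CURES Covid!!! See https://example.com/x")
summary = embed_tweet(tokens)
print(tokens)
print(summary)
```

In a real pipeline the toy table would be replaced by vectors loaded from a pretrained GloVe file, and each tweet's averaged vector would become one input row for the classifier.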

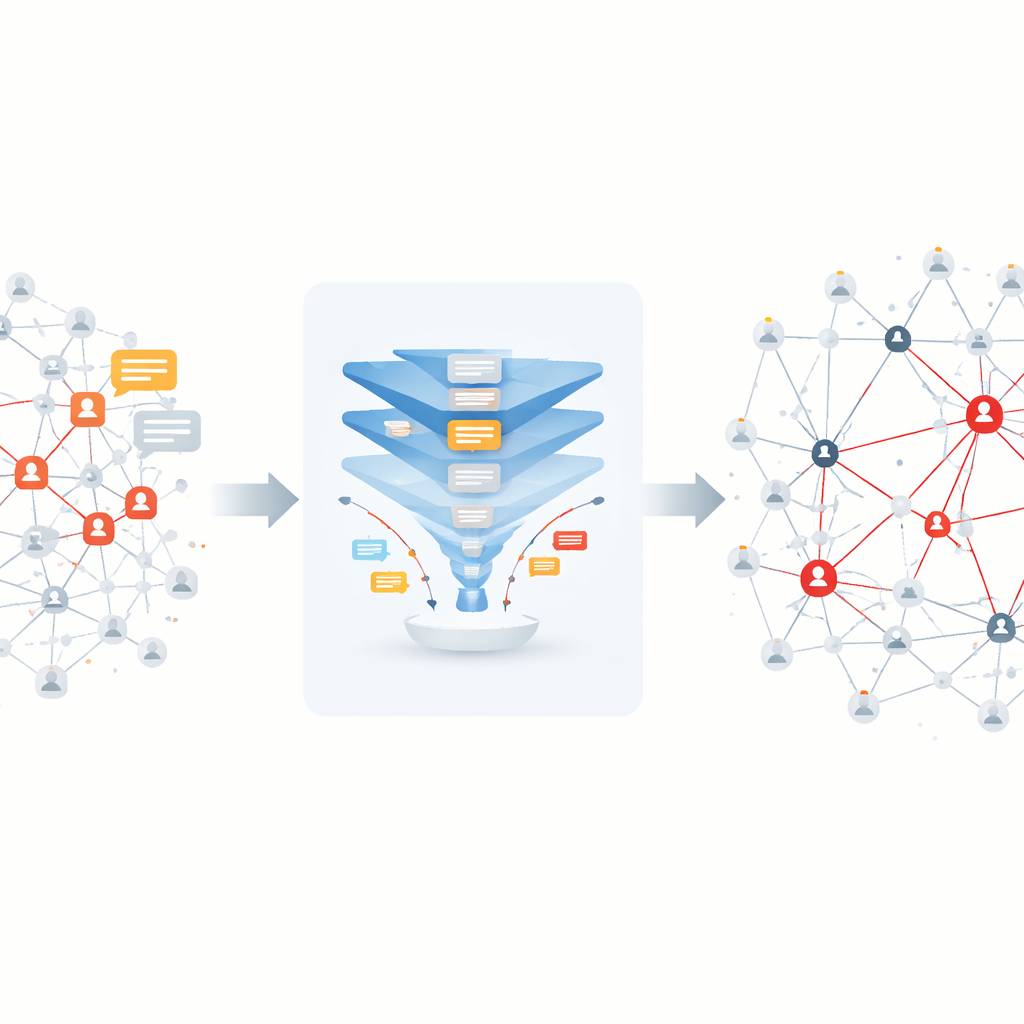

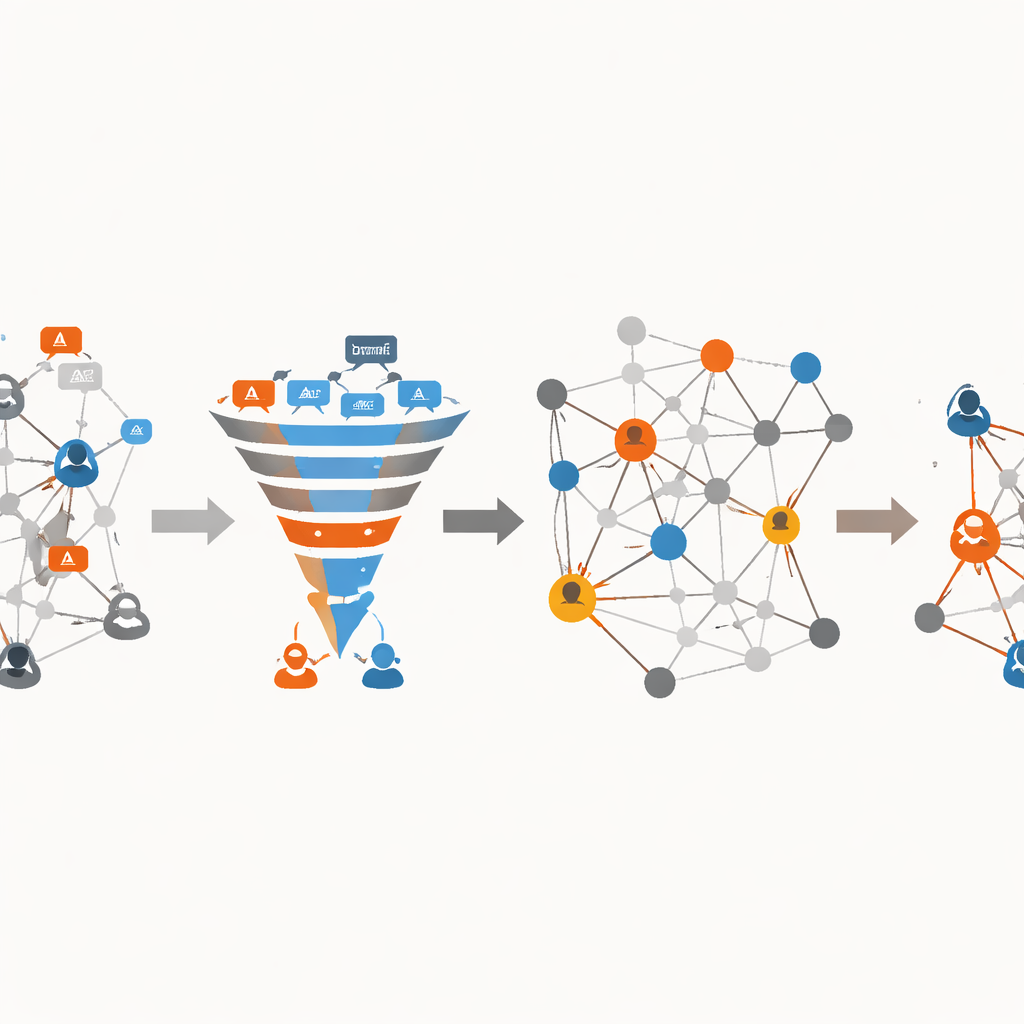

Finding the key spreaders inside the network

After the system has separated rumor tweets from real ones, the second part of the approach turns to the structure of the social network itself. Each user is a point in a directed, weighted graph, and each reply or retweet becomes a link whose strength reflects how often one user reacts to another’s messages. Using this information, the authors first cluster users into communities—groups that interact more with each other than with outsiders—by building a special tree representation of the network and then merging closely connected subgroups based on how well they fit together. Within these communities, they compute how often each user lies on the most important paths between others, a measure known as betweenness. Users that appear again and again on high‑value paths are treated as influential spreaders. The weights of connections are then updated to reflect both how often people interact and how central the connected users are, revealing the most likely routes that rumors take through the network over time.
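The graph-building and betweenness ideas can be sketched in a few lines of Python. This is a simplified illustration, not the authors' algorithm: the interaction list and user names are invented, the edge direction (from the original author to the user who reacted) is an assumption, and betweenness is computed with the standard Brandes procedure on hop counts, ignoring edge weights. The community-detection step and the weight-update step described in the study are not reproduced here.

```python
from collections import Counter, deque


def build_graph(interactions):
    """interactions: (author, reacting_user) pairs, one per reply or retweet.

    Returns edge weights (interaction counts) and an adjacency map.
    """
    weights = Counter(interactions)
    nodes = sorted({user for pair in weights for user in pair})
    adj = {node: [] for node in nodes}
    for author, reactor in weights:
        adj[author].append(reactor)
    return weights, adj


def betweenness(adj):
    """Unnormalized betweenness on a directed, unweighted graph (Brandes)."""
    score = dict.fromkeys(adj, 0.0)
    for source in adj:
        order = []                          # nodes in order of discovery
        preds = {v: [] for v in adj}        # predecessors on shortest paths
        n_paths = dict.fromkeys(adj, 0)     # number of shortest paths from source
        dist = dict.fromkeys(adj, -1)
        n_paths[source], dist[source] = 1, 0
        queue = deque([source])
        while queue:                        # breadth-first search
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    n_paths[w] += n_paths[v]
                    preds[w].append(v)
        dep = dict.fromkeys(adj, 0.0)
        for w in reversed(order):           # accumulate from the farthest nodes back
            for v in preds[w]:
                dep[v] += n_paths[v] / n_paths[w] * (1 + dep[w])
            if w != source:
                score[w] += dep[w]
    return score


# Invented example: "a" is retweeted twice by "b", who is then retweeted by "c" and "d".
interactions = [("a", "b"), ("a", "b"), ("b", "c"), ("b", "d"), ("c", "e"), ("d", "e")]
weights, adj = build_graph(interactions)
scores = betweenness(adj)
ranking = sorted(scores, key=scores.get, reverse=True)
print(weights[("a", "b")], scores, ranking[0])
```

In this toy network, user "b" sits on every shortest path from "a" to the rest of the graph, so it receives the highest score and would be flagged as the most influential spreader.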

What the Covid‑19 case study revealed

To test their framework, the researchers applied it to a massive Twitter dataset about Covid‑19: nearly 100 million tweets involving more than 150,000 users, from which they extracted over 14,000 unique messages labeled as either authentic information or rumors. On this data, their deep learning model correctly classified about 99 percent of tweets, outperforming several existing methods, including other advanced fake‑news detectors. In the second stage, they compared their list of influential users with a well‑known mathematical model of how information spreads, finding the highest agreement among the methods tested. They also showed that when they analyzed longer time periods—120, 240, then 360 days—the model’s ability to pinpoint key spreaders and main rumor routes improved, and it did so with lower processing time than competing network‑based techniques.

What this means for fighting misinformation

Put simply, the study shows that it is possible to build a system that not only spots likely fake news with very high accuracy, but also traces how it travels and who is most responsible for its spread. Instead of treating all users and all connections as equal, the method highlights a smaller set of communities and individuals whose behavior matters most for controlling harmful stories. While the work was done on anonymized Twitter data about Covid‑19 and may not directly generalize to every platform or topic, it suggests a path toward more targeted, data‑driven responses to online rumors—such as directing fact‑checking, warnings, or platform interventions where they can have the greatest impact, while keeping individual privacy and ethical use in view.

Citation: Zhang, W., Qian, M. & Zhang, Q. A multi-modal approach for recognizing fake news and influential nodes in spreading them using deep learning and network analysis. Sci Rep 16, 9775 (2026). https://doi.org/10.1038/s41598-026-35342-7

Keywords: fake news, social networks, rumor spreading, deep learning, influential users