Clear Sky Science

A real-time mobile aquatic plant recognition algorithm based on deep learning for intelligent ecological monitoring

Why spotting water plants with a phone matters

Aquatic plants do far more than decorate ponds and lakes: they filter pollution, supply oxygen, and shelter fish and insects. But when the wrong species spread too fast, they can choke waterways and upset entire ecosystems. This study presents a new way to recognize different kinds of water plants automatically using a standard smartphone, helping scientists and water managers watch over fragile ecosystems in real time instead of relying only on slow, manual surveys.

The challenge of watching life under the surface

Healthy lakes and rivers depend on a delicate mix of native aquatic plants. They pull nutrients and harmful substances from the water, keep it clearer, and provide food and hiding places for wildlife. Invasive species, however, can take over quickly, blocking boat traffic, reducing oxygen, and harming fisheries. Traditionally, identifying these plants has meant sending experts into the field to collect and classify samples by hand, a process that is time‑consuming, expensive, and hard to repeat often enough to catch rapid changes driven by climate and human activity.

From bulky computers to pocket‑sized plant detectors

In recent years, artificial intelligence has learned to pick out objects in photos and videos with impressive accuracy, and researchers have applied it to weeds, crops, and some aquatic plants. Yet most of these systems run on powerful desktop computers or servers. Lightweight versions that can run on phones or drones usually sacrifice too much accuracy, or demand more computing power than small devices can spare. The authors focus on this bottleneck: how to keep detection fast and accurate while shrinking the model so that it can run smoothly on a mobile phone beside a riverbank.

A smarter, leaner way to see plants in complex waters

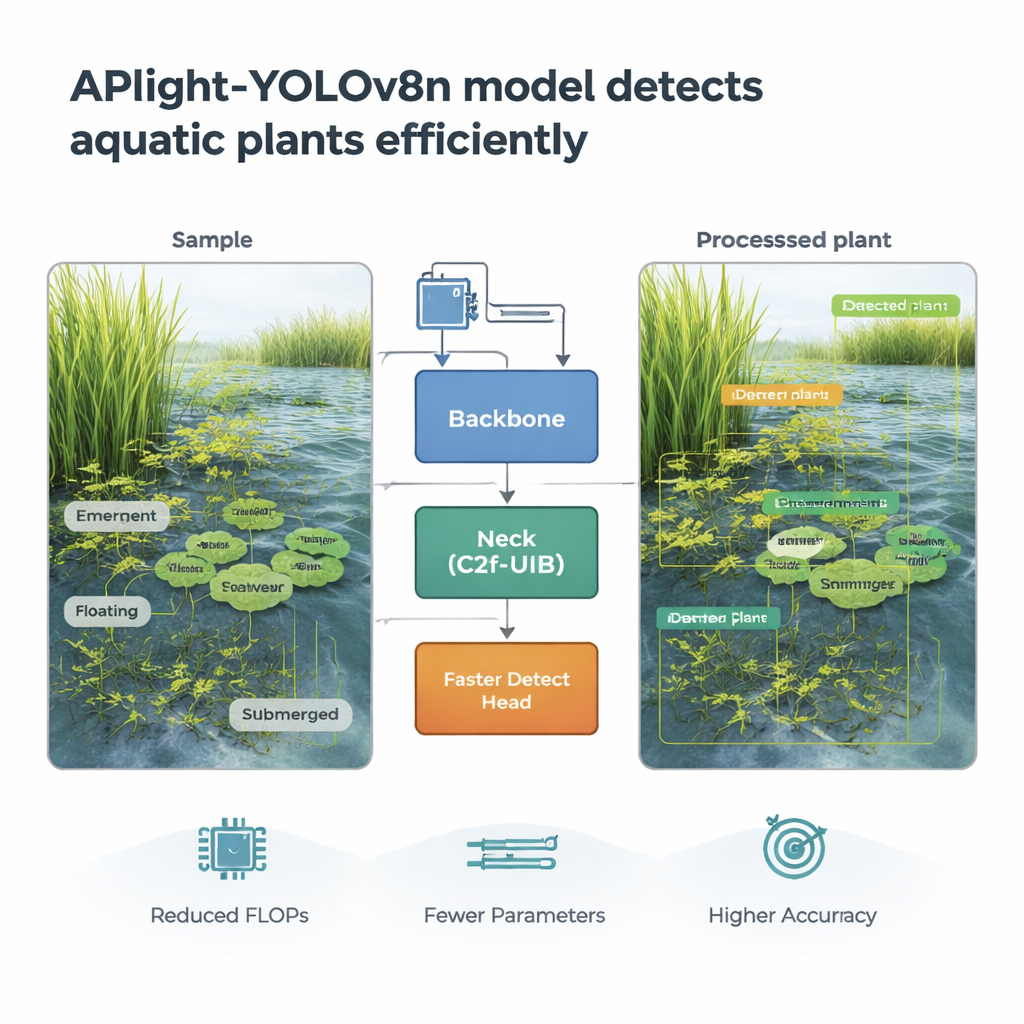

The team builds on YOLOv8n, a popular “you only look once” object‑detection model already designed to be relatively compact. They redesign two key parts of its internal pipeline to better handle small and medium‑sized aquatic plants buried in cluttered, reflective backgrounds. First, a new “Faster Detect” head concentrates its attention on the sizes of plants most often seen in the real world, improving how the model outlines and labels them. Second, a redesigned “C2f‑UIB” feature‑processing block mixes fine details and broader scene information more efficiently, allowing the network to distinguish similar‑looking species while using fewer computations. Together, these changes create a new model, APlight‑YOLOv8n, tailored specifically for aquatic plant monitoring.
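To give a feel for how a redesigned block can use "fewer computations", here is a rough sketch. The article does not describe the internals of C2f‑UIB, so what follows is an assumption for illustration only: many mobile-oriented networks save work by replacing a standard convolution with a depthwise convolution followed by a 1×1 pointwise convolution. The layer sizes and function names are hypothetical and are not taken from the paper.

```python
# Illustrative only: the article does not spell out how C2f-UIB is built.
# Assumption: the savings resemble those of a depthwise + pointwise (1x1)
# convolution pair, a common pattern in mobile-oriented networks.


def standard_conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def separable_conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a k x k depthwise conv followed by a 1 x 1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 conv that mixes channels
    return depthwise + pointwise


if __name__ == "__main__":
    c_in, c_out, k = 64, 64, 3  # hypothetical layer sizes
    std = standard_conv_params(c_in, c_out, k)
    sep = separable_conv_params(c_in, c_out, k)
    print(f"standard conv:  {std} weights")
    print(f"separable conv: {sep} weights")
    print(f"separable / standard: {sep / std:.3f}")
```

For this hypothetical layer the separable version needs roughly an eighth of the weights. A full network mixes many layer types, so whole-model savings are usually far smaller than the savings in any single layer.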

Putting the model to the test in the field

To see whether APlight‑YOLOv8n really works outside the lab, the authors train and test it on more than two thousand high‑resolution photos of rivers, wetlands, and fish ponds, covering twelve plant species across four growth forms: emergent, floating, floating‑leaved, and submerged. The images include murky water, overlapping leaves, and plants partly hidden by other objects. Compared with the original YOLOv8n and several other well‑known detection models, the new design is both smaller and more accurate. It cuts the number of trainable parameters and the required mathematical operations by more than a quarter, yet still detects plants more accurately, especially emergent and floating species. When installed on an Android smartphone, it processes around 33 video frames per second while scanning for plants, fast enough for real‑time use along shorelines or from small boats.
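The figures above can be turned into rough budgets with a little arithmetic. The sketch below converts 33 frames per second into a per-frame time budget and applies a one-quarter cut to baseline model sizes. The baseline values for YOLOv8n (about 3.2 million parameters and 8.7 GFLOPs at 640×640 input) are Ultralytics' published figures, not numbers from this article. Because the article says only "more than a quarter", the results are upper bounds, not the model's actual size.

```python
# Baseline values are Ultralytics' published figures for YOLOv8n at 640x640
# input; they are an outside assumption, not numbers stated in this article.
YOLOV8N_PARAMS_MILLIONS = 3.2
YOLOV8N_GFLOPS = 8.7


def frame_budget_ms(fps: float) -> float:
    """Average time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def ceiling_after_cut(baseline: float, min_cut_fraction: float) -> float:
    """Largest value still consistent with cutting at least `min_cut_fraction`."""
    return baseline * (1.0 - min_cut_fraction)


if __name__ == "__main__":
    print(f"budget at 33 fps: {frame_budget_ms(33):.1f} ms per frame")
    print(f"parameters: under {ceiling_after_cut(YOLOV8N_PARAMS_MILLIONS, 0.25):.2f} million")
    print(f"operations: under {ceiling_after_cut(YOLOV8N_GFLOPS, 0.25):.2f} GFLOPs")
```

At 33 frames per second the phone has about 30 milliseconds to handle each frame, which leaves little room for a heavy model.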

Limits, next steps, and what this means for clean water

Although APlight‑YOLOv8n performs strongly overall, it still struggles somewhat with submerged plants, whose faint outlines are easily blurred by muddy water, reflections, and low light. The current dataset also covers a limited set of species from a specific region, so additional images from other climates and plant communities will be needed to turn this into a globally reliable tool. The researchers suggest future work could strengthen the model’s sensitivity to weak, underwater signals and adapt it for other mobile platforms such as drones and low‑power embedded boards.

A new tool for faster, smarter water protection

For non‑specialists, the main message is straightforward: this study shows that an ordinary smartphone, armed with a carefully designed AI model, can quickly recognize many kinds of aquatic plants in real time. By making monitoring cheaper, faster, and more flexible, APlight‑YOLOv8n could help water managers track invasive species, plan weed control, and protect aquatic habitats before problems spiral out of control. It is an early but promising step toward putting advanced ecological monitoring tools directly into the hands of people working on the water’s edge.

Citation: Wang, D., Dong, Z., Yang, G. et al. A real-time mobile aquatic plant recognition algorithm based on deep learning for intelligent ecological monitoring. Sci Rep 16, 5075 (2026). https://doi.org/10.1038/s41598-026-35310-1

Keywords: aquatic plants, invasive species, ecological monitoring, mobile deep learning, object detection