Clear Sky Science

Enhanced YOLOv11n for small object detection in UAV imagery: higher accuracy with fewer parameters

Why spotting tiny things from the sky matters

Small flying robots are rapidly becoming part of everyday life, from traffic monitoring and disaster response to package delivery. But there is a stubborn problem: when a drone looks down from hundreds of meters in the air, people, cars, and boats shrink to just a few pixels. Standard computer-vision systems often miss these specks or confuse them with background clutter. This paper presents a smarter, leaner version of a popular detection system, YOLOv11n, that is specially tuned to find these tiny objects in drone images while still running efficiently on lightweight onboard computers.

Seeing more in tiny, blurry details

Drone images are a hostile setting for detection algorithms. Most targets are smaller than 32×32 pixels, and many are under 16 pixels across. Motion, vibration, changing light, haze, and busy city backgrounds make those tiny targets even harder to distinguish. At the same time, drones often rely on modest processors and limited power, so heavy-duty models are impractical. The authors start from YOLOv11n, a compact real-time detector, and redesign its internal components to extract more useful information from small, low-contrast regions without bloating the model. Their goal is simple but demanding: significantly higher accuracy on small objects, with the same or fewer learnable parameters and acceptable speed on edge hardware.
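To make these size ranges concrete, here is a minimal Python sketch that sorts bounding boxes by the side length of a square with the same area. The 16- and 32-pixel cut-offs come from the text; measuring size as the square root of box area mirrors the COCO convention for "small" objects (area below 32² pixels). The function name `size_bucket` and the example boxes are illustrative assumptions, not part of the authors' code.

```python
def size_bucket(width_px: float, height_px: float) -> str:
    """Label a box by the side of a square with the same area (illustrative cut-offs)."""
    side = (width_px * height_px) ** 0.5
    if side < 16:
        return "tiny"             # under 16 pixels across
    if side < 32:
        return "small"            # under 32x32 pixels, the COCO-style "small" range
    return "medium_or_large"


boxes = [(12, 10), (20, 30), (64, 48)]        # illustrative (width, height) pairs
print([size_bucket(w, h) for w, h in boxes])  # ['tiny', 'small', 'medium_or_large']
```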

Learning from the scene at many scales

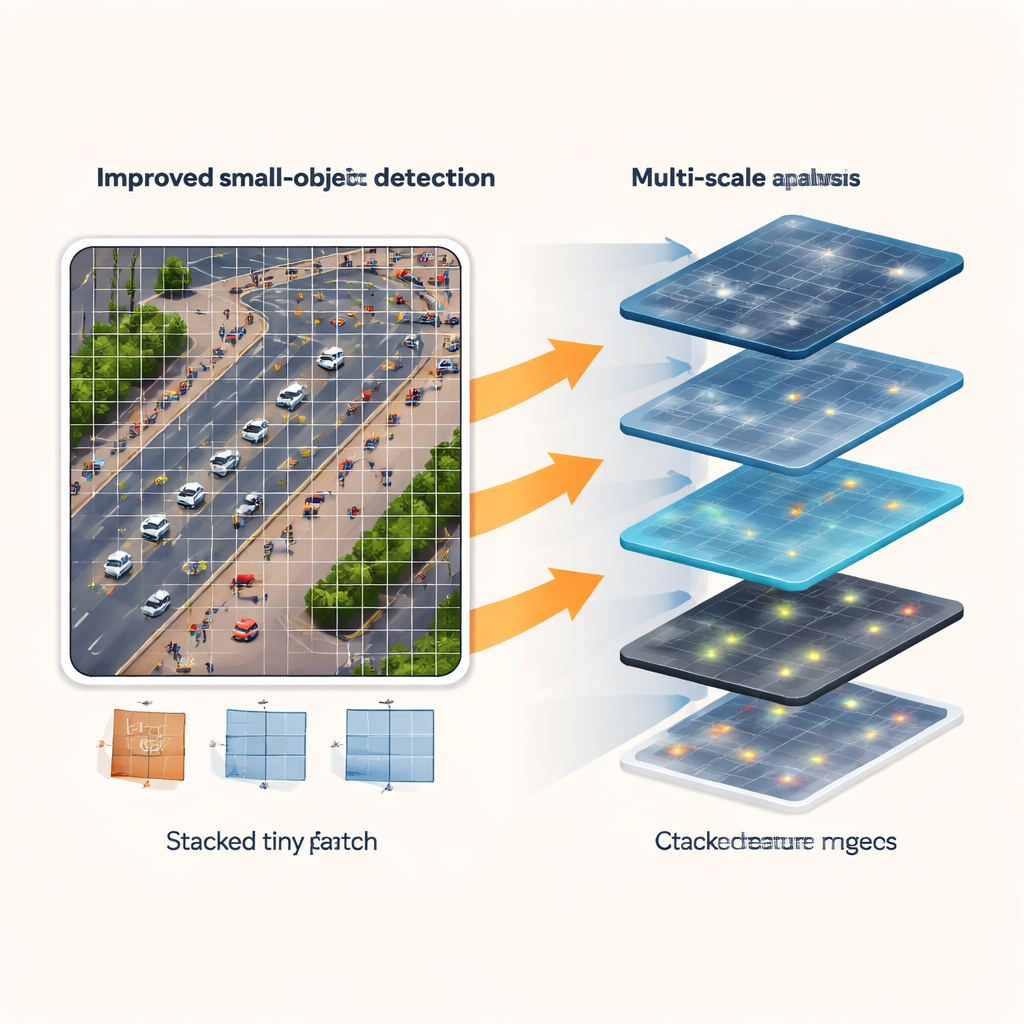

The first improvement changes how the network “sees” the scene. Instead of looking through a single fixed-size window, the new Multiscale Edge-Feature Adaptive Selection (MSEAF) module examines the image at several scales at once. Coarse views capture the broader layout of roads, buildings, and crowds, while finer views keep track of individual cars or people. An edge-enhancement step emphasizes boundaries and shapes that humans pick out easily but that often get washed out in tiny image patches. An adaptive selection step then fuses the scales, boosting the most informative regions while suppressing background noise. This multiscale, edge-aware representation gives the rest of the network a richer starting point for finding hard-to-see objects.
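The combination of multiscale views, edge enhancement, and adaptive selection can be illustrated with a toy NumPy sketch on a single-channel map. This is not the authors' MSEAF module. The box-blur "scales", the high-pass edge residual, and the per-pixel softmax over edge strength are all assumptions chosen for clarity, and the names `box_blur` and `multiscale_edge_fusion` are hypothetical.

```python
import numpy as np


def box_blur(x: np.ndarray, k: int) -> np.ndarray:
    """k x k mean filter (k odd) with edge padding; output has the same shape as x."""
    pad = k // 2
    xp = np.pad(x.astype(float), pad, mode="edge")
    out = np.zeros(x.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += xp[dy:dy + x.shape[0], dx:dx + x.shape[1]]
    return out / (k * k)


def multiscale_edge_fusion(x: np.ndarray, scales=(1, 3, 5), edge_gain: float = 1.0):
    """Toy multiscale, edge-aware fusion (a hypothetical stand-in for MSEAF)."""
    views = [box_blur(x, k) for k in scales]           # fine (k=1) to coarse views
    edges = [v - box_blur(v, 3) for v in views]        # high-pass residual as "edges"
    enhanced = np.stack([v + edge_gain * e for v, e in zip(views, edges)])
    scores = np.stack([np.abs(e) for e in edges])      # assumed saliency: edge strength
    scores -= scores.max(axis=0, keepdims=True)        # stabilise the softmax
    weights = np.exp(scores)
    weights /= weights.sum(axis=0, keepdims=True)      # per-pixel weights over scales
    fused = (weights * enhanced).sum(axis=0)
    return fused, weights


rng = np.random.default_rng(0)
img = rng.normal(0.0, 0.05, size=(32, 32))  # noisy, flat background
img[15:17, 15:17] += 1.0                    # a 2x2-pixel "tiny object"
fused, weights = multiscale_edge_fusion(img)
print(fused.shape, weights.shape)           # (32, 32) (3, 32, 32)
```

In flat background regions all edge scores are near zero, so the weights become nearly uniform and the output is an average of blurred views, which smooths noise; near a small bright target, the scale with the strongest edge response gets more weight. A learned module would replace these fixed filters and scores with trained convolutions.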

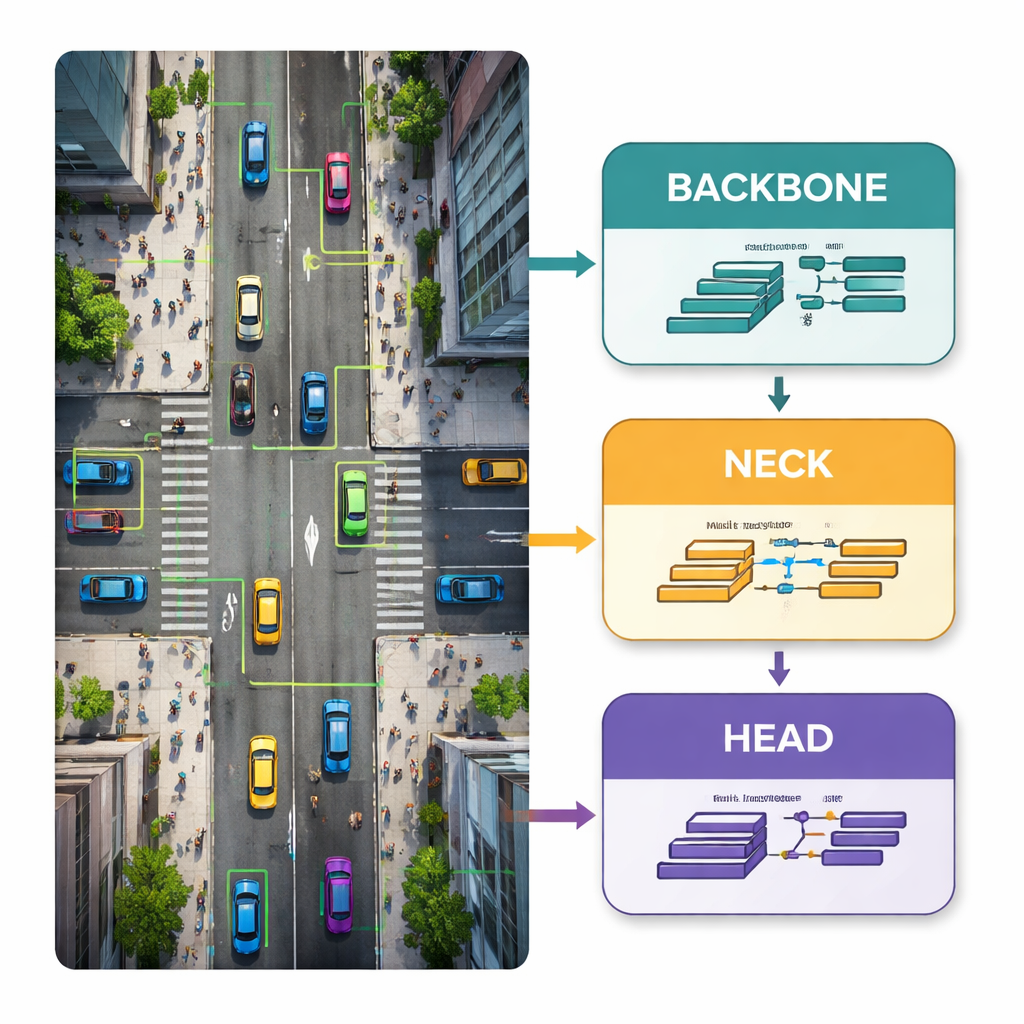

Keeping small objects alive through the pipeline

Standard YOLO networks repeatedly shrink (downsample) feature maps as information flows from input to output. For large objects this is fine, but for tiny ones it can be fatal: after several rounds of downsampling, a pedestrian may occupy only one or two pixels in an internal map, or vanish entirely. To prevent this, the authors reshape the middle “neck” of the network and add a new P2 detection head that works on higher-resolution features, at one quarter of the input resolution rather than the 1/8, 1/16, and 1/32 used by the standard P3 to P5 heads. Custom modules called ScalCat and Scal3DC combine information from different layers so that fine details from shallow layers and richer context from deeper layers reinforce each other. The result is a detector that keeps track of small cars and people across multiple scales instead of sacrificing them for speed.
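A little arithmetic shows why the extra head helps. Assuming the usual YOLO strides (4 for P2, 8 for P3, 16 for P4, 32 for P5), this Python sketch estimates the feature-map size at each level and how many cells an object of a given width spans there. The dictionary and function names are illustrative only.

```python
# Assumed strides: standard YOLO heads P3-P5 use 8, 16, 32; the added P2 head uses 4.
STRIDES = {"P2": 4, "P3": 8, "P4": 16, "P5": 32}


def grid_sizes(input_px: int) -> dict:
    """Feature-map side length at each level for a square input of `input_px` pixels."""
    return {level: input_px // s for level, s in STRIDES.items()}


def footprint(object_px: float) -> dict:
    """Approximate number of feature-map cells an object `object_px` wide spans per level."""
    return {level: object_px / s for level, s in STRIDES.items()}


print(grid_sizes(640))  # {'P2': 160, 'P3': 80, 'P4': 40, 'P5': 20}
for size in (8, 16, 32):
    print(size, footprint(size))
```

A 16-pixel pedestrian spans 4 cells at P2 but only half a cell at P5, which is why a stride-4 head gives tiny objects a much better chance of surviving to the prediction stage.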

Sharper decisions with fewer knobs

The final step streamlines the prediction stage, known as the detection head. In the original design, deeper layers with many channels were devoted mostly to large objects, which are relatively rare in drone scenes. The new Shared Re-parameterized Detection (SRepD) head uses the same channel count at every scale and relies on a technique called structural re-parameterization: during training, several specialized convolution branches explore different ways of highlighting features; at inference time, these branches are mathematically merged into a single, simple convolution. This shared, re-parameterized design improves feature fusion while actually reducing the number of parameters and keeping computation manageable for edge devices.
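The branch-merging idea can be checked numerically. Because the text does not say which branches SRepD uses, the sketch below follows the well-known RepVGG-style recipe (a 3×3 branch, a 1×1 branch, and an identity shortcut). Batch normalization, which real implementations also fold into the kernel, is omitted for brevity, and `conv2d` and `merge_branches` are hypothetical helper names.

```python
import numpy as np


def conv2d(x: np.ndarray, w: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Naive stride-1 'same' convolution (cross-correlation, as in deep-learning libraries).

    x: (C_in, H, W); w: (C_out, C_in, k, k) with k odd; b: (C_out,).
    """
    k = w.shape[2]
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))  # zero padding
    h, wd = x.shape[1:]
    out = np.zeros((w.shape[0], h, wd))
    for dy in range(k):
        for dx in range(k):
            patch = xp[:, dy:dy + h, dx:dx + wd]                   # (C_in, H, W)
            out += np.einsum("oi,ihw->ohw", w[:, :, dy, dx], patch)
    return out + b[:, None, None]


def merge_branches(w3, b3, w1, b1, channels: int):
    """Fold 3x3 + 1x1 + identity branches into a single 3x3 kernel and bias."""
    w = w3.copy()
    w[:, :, 1, 1] += w1[:, :, 0, 0]  # a 1x1 kernel equals the centre tap of a 3x3
    idx = np.arange(channels)
    w[idx, idx, 1, 1] += 1.0         # identity = 1 at the centre, same channel in and out
    return w, b3 + b1


rng = np.random.default_rng(0)
C = 4
x = rng.normal(size=(C, 8, 8))
w3, b3 = rng.normal(size=(C, C, 3, 3)), rng.normal(size=C)
w1, b1 = rng.normal(size=(C, C, 1, 1)), rng.normal(size=C)

train_out = conv2d(x, w3, b3) + conv2d(x, w1, b1) + x  # three branches, summed
w, b = merge_branches(w3, b3, w1, b1, C)
infer_out = conv2d(x, w, b)                            # one convolution
print(np.allclose(train_out, infer_out))               # True
```

The merged layer stores one 3×3 kernel and one bias per output channel, so the extra training-time branches add no cost at inference.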

What the numbers say in real-world tests

The enhanced model is tested on three challenging public datasets: VisDrone2019 (mixed urban scenes), TinyPerson (extremely small people over land and sea), and HazyDet (vehicles in heavy haze seen from a drone). On VisDrone2019, the new design boosts mAP50 (mean average precision at an intersection-over-union threshold of 0.5, a standard detection accuracy measure) and precision by 4.6 percentage points compared with the original YOLOv11n, while trimming parameters by about 8.5 percent. On TinyPerson the improvements are even larger, about 5.9 percentage points in mAP50 and 5.6 in precision, with a similar parameter reduction; the compact model even outperforms the much larger YOLOv11s, which uses four times as many parameters. In hazy conditions, the upgraded detector also surpasses the baseline on accuracy and robustness measures, all while maintaining real-time speed on a low-power Huawei Atlas 200 DK edge board.
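For readers unfamiliar with mAP50, this simplified Python sketch computes average precision for one class on one image. Detections are ranked by confidence; each one counts as correct if it overlaps a still-unmatched ground-truth box with an intersection-over-union (IoU) of at least 0.5 (one common matching convention); and the area under the resulting precision-recall curve is summed with all-point interpolation. Real benchmarks pool detections over every image and then average over classes to get mAP50. The function names and toy boxes are illustrative assumptions, not data from the paper.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(dets: Sequence[Tuple[float, Box]], gts: Sequence[Box], thr: float = 0.5) -> float:
    """AP at IoU >= thr for one class on one image (all-point interpolation)."""
    if not gts:
        return 0.0
    matched = [False] * len(gts)
    hits: List[int] = []
    for _, box in sorted(dets, key=lambda d: d[0], reverse=True):  # highest confidence first
        best, best_j = 0.0, -1
        for j, g in enumerate(gts):
            overlap = iou(box, g)
            if not matched[j] and overlap > best:
                best, best_j = overlap, j
        if best >= thr:
            matched[best_j] = True  # true positive: claims this ground-truth box
            hits.append(1)
        else:
            hits.append(0)          # false positive: no unmatched box overlaps enough
    precisions, recalls, tp = [], [], 0
    for i, hit in enumerate(hits, start=1):
        tp += hit
        precisions.append(tp / i)
        recalls.append(tp / len(gts))
    ap, prev_recall = 0.0, 0.0
    for k, r in enumerate(recalls):
        ap += (r - prev_recall) * max(precisions[k:])  # interpolated precision
        prev_recall = r
    return ap


gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
dets = [(0.9, (1, 1, 11, 11)), (0.8, (50, 50, 60, 60)), (0.7, (20, 20, 30, 30))]
print(round(average_precision(dets, gts), 3))  # 0.833
```

Precision, the other reported metric, is the fraction of detections counted as correct. Gains in both are reported in percentage points, meaning absolute differences between two percentages rather than relative changes.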

Why this matters for everyday drone use

For non-specialists, the main takeaway is that this research shows drones can become both sharper-eyed and more resource-efficient at the same time. By rethinking where and how the network looks for detail, preserving high-resolution information for tiny targets, and simplifying the prediction machinery, the authors break the usual trade-off between accuracy and size. The resulting system is better at spotting small, distant people and vehicles in crowded, hazy, or complex scenes without demanding bulky hardware. This kind of progress brings reliable, real-time aerial monitoring for traffic safety, search and rescue, and environmental observation closer to everyday deployment.

Citation: Zhu, H., Xie, X. Enhanced YOLOv11n for small object detection in UAV imagery: higher accuracy with fewer parameters. Sci Rep 16, 5536 (2026). https://doi.org/10.1038/s41598-026-35301-2

Keywords: drone object detection, small object recognition, YOLO neural network, aerial imagery analysis, edge AI for UAVs