Clear Sky Science

Deep learning-based automatic segmentation and classification for cervical cancer detection using an improved U-Net and ensemble methods

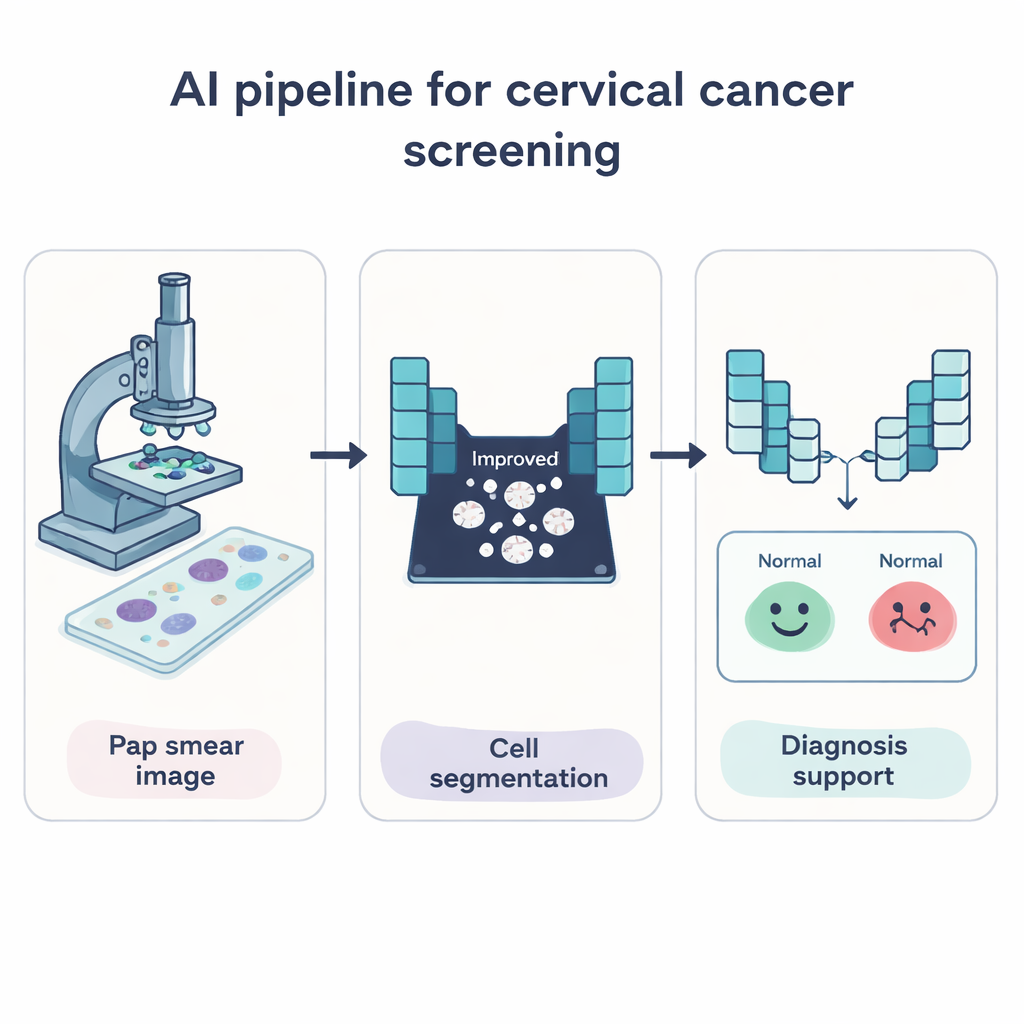

Why Pap Tests Still Need a Digital Helping Hand

Cervical cancer is one of the few cancers that is highly preventable when caught early, yet many women still die because dangerous cell changes are missed or found too late. The familiar Pap test already saves lives, but reading thousands of cell images by eye is slow, tiring work, and even experts can disagree. This paper explores how modern artificial intelligence can act as a tireless assistant, automatically finding and sorting cervical cells on Pap smear images to help doctors spot early warning signs more quickly and reliably.

Teaching Computers to See Problem Cells

The researchers set out to build a computer system that can do two key jobs: first, pick out each cervical cell from the background of a Pap smear image, and second, decide whether the cell looks normal or shows signs linked to cancer. To do this, they used deep learning, a form of AI that learns patterns directly from large numbers of example images rather than from hand-written rules. Their system focuses on the whole cell—both the dark center (nucleus) and the surrounding material (cytoplasm)—because changes in size, shape, and texture across the entire cell can signal disease.

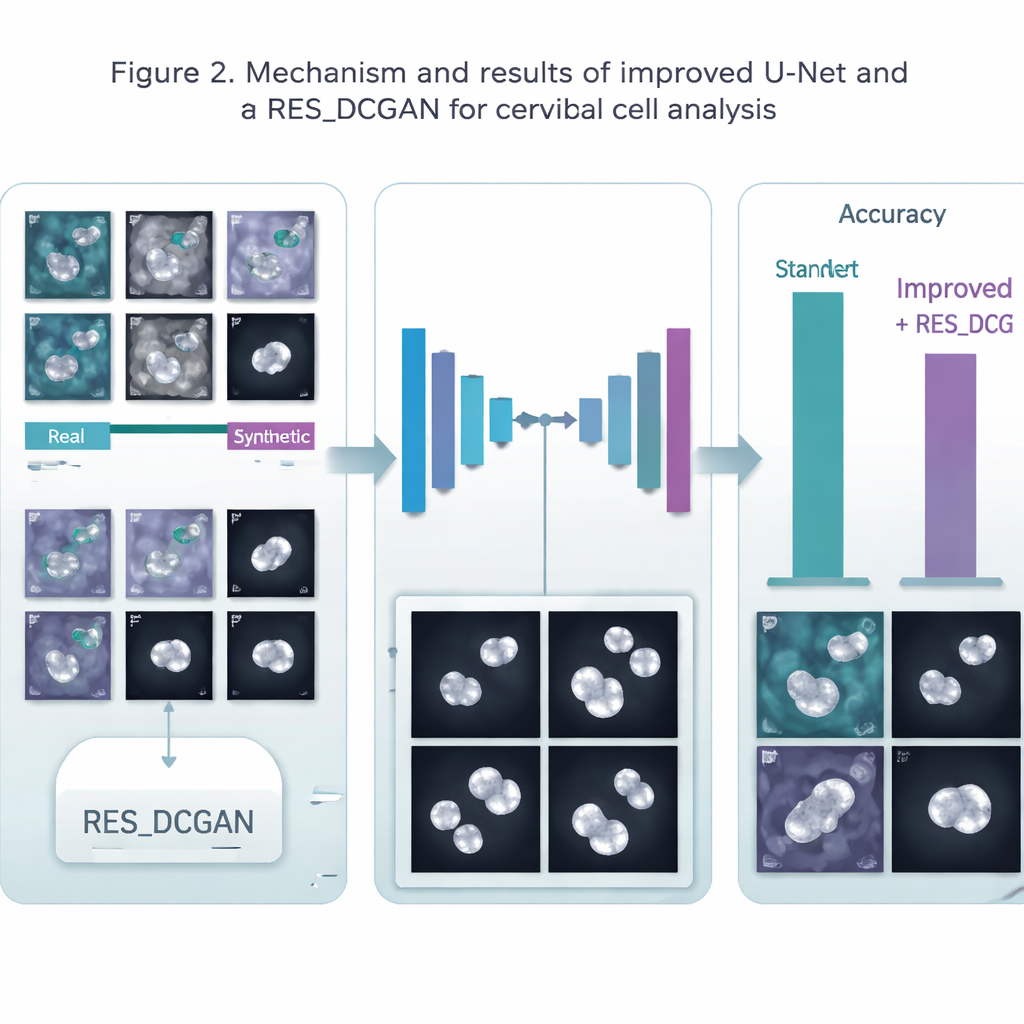

A Smarter Way to Outline Cells

At the heart of the system is an improved version of a popular medical imaging model called U-Net, which is especially good at drawing precise outlines around objects in pictures. The authors modified U-Net so it can look at image details at several scales at once and stay stable even when trained on small batches of data, a common limitation in medical work. This upgraded network learns to paint a simple mask over each image: white where a cell is present, black for the background. By isolating just the cell areas, later steps in the pipeline can focus on what matters most rather than being distracted by stains, debris, or empty space.
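To make the idea of a mask concrete, here is a minimal Python sketch of how a black-and-white mask could be used to blank out everything except the cell. It is an illustration only, not the authors' code: the function name `apply_mask`, the 0/255 mask encoding, and the tiny 4×4 example image are all assumptions made for this example.

```python
import numpy as np

def apply_mask(image, mask):
    """Keep pixels where the mask is white (cell) and zero out the background.

    image: H x W x 3 uint8 array (hypothetical Pap smear crop).
    mask:  H x W uint8 array, assumed 0 for background and 255 for cell.
    """
    keep = mask > 127                      # boolean map of cell pixels
    # Broadcast the 2-D map across the three colour channels.
    return image * keep[..., np.newaxis].astype(image.dtype)

# Toy example: a uniform grey image with a 2 x 2 "cell" in the middle.
image = np.full((4, 4, 3), 200, dtype=np.uint8)
mask = np.zeros((4, 4), dtype=np.uint8)
mask[1:3, 1:3] = 255

cell_only = apply_mask(image, mask)
```

In this sketch the background pixels become black while the pixels under the white part of the mask keep their original values, which is the sense in which later steps only "see" the cell.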

Making More Training Examples from Thin Air

A major challenge in medicine is that high-quality, labeled images are scarce and costly to obtain. To tackle this, the team used a generative model called RES_DCGAN, which learns to create realistic synthetic Pap smear images based on the real ones. These extra “made-up but convincing” images are mixed into the training process, both before the cell-outlining step and afterward, in the classification step. By seeing many more variations of cells—including rare and subtle patterns—the AI becomes more robust and less likely to overfit to a small set of patients or imaging conditions.
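Mixing synthetic images into a training set can be pictured with a short Python sketch. It does not reproduce RES_DCGAN or the authors' pipeline; it only shows the mixing step, and it assumes that synthetic images already exist as arrays with labels attached. The function name `mix_real_and_synthetic` and the random placeholder data are hypothetical.

```python
import numpy as np

def mix_real_and_synthetic(real_x, real_y, synth_x, synth_y, seed=0):
    """Combine real and synthetic samples, then shuffle them together.

    Assumes both sets share the same image shape and label encoding.
    """
    x = np.concatenate([real_x, synth_x], axis=0)
    y = np.concatenate([real_y, synth_y], axis=0)
    # One shared permutation keeps every image paired with its label.
    order = np.random.default_rng(seed).permutation(len(x))
    return x[order], y[order]

# Placeholder data standing in for real and generated image crops.
rng = np.random.default_rng(42)
real_x = rng.random((6, 8, 8, 3))
real_y = np.array([0, 0, 0, 1, 1, 1])
synth_x = rng.random((4, 8, 8, 3))
synth_y = np.array([1, 1, 1, 1])       # e.g. extra examples of a rare class

train_x, train_y = mix_real_and_synthetic(real_x, real_y, synth_x, synth_y)
```

Here the synthetic samples are used to top up an under-represented class, which is one plausible way extra images could expose a model to rare patterns.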

From Outlines to Early Warnings

Once the cells are segmented, a second group of deep learning models takes over to classify each cell as normal or as belonging to one of several abnormal categories. The authors used a strong image-recognition model called ResNet50V2 and combined it with several other well-known networks in an “ensemble,” where multiple models vote together on the final decision. They tested six different processing pipelines on three datasets from Poland (Pomeranian), Denmark (Herlev), and Greece (SIPaKMeD), covering both simple normal-versus-abnormal cases and more detailed multi-class problems. Across these tests, segmenting the cells first consistently improved classification accuracy, and adding synthetic images generally nudged performance even higher, especially for outlining the cells.
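The voting idea can be illustrated with a small Python sketch. This summary does not say how the votes are combined, so the example assumes "soft voting," in which each model's class probabilities are averaged and the highest average wins. The function name `soft_vote` and the probability values are made up for illustration and do not come from the paper.

```python
import numpy as np

def soft_vote(prob_list):
    """Average class probabilities from several models and pick the top class.

    prob_list: list of (n_cells, n_classes) arrays, one per model.
    Returns the winning class index per cell and the averaged probabilities.
    """
    avg = np.mean(np.stack(prob_list, axis=0), axis=0)
    return avg.argmax(axis=1), avg

# Hypothetical outputs of three models for two cells
# (column 0 = normal, column 1 = abnormal).
model_a = np.array([[0.9, 0.1], [0.4, 0.6]])
model_b = np.array([[0.8, 0.2], [0.3, 0.7]])
model_c = np.array([[0.6, 0.4], [0.6, 0.4]])

labels, avg = soft_vote([model_a, model_b, model_c])
```

For the second cell, one model leans toward "normal" but is outvoted by the other two, so the combined decision is "abnormal." This is the intuition behind an ensemble: individual mistakes can be averaged out when the models do not all err in the same way.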

How Well Did the Digital Assistant Perform?

The system achieved very high scores. For cell outlining, accuracy reached about 99.5% on one dataset and around 98% on another, comfortably beating a standard U-Net. For deciding cell type, the ensemble of models correctly labeled around 95–96% of cells in the more complex tasks and up to 99% in simpler yes/no cancer-risk decisions. These results match or surpass many earlier studies, while also showing that a single, unified pipeline can work across different laboratories and data sources. The gains were more modest on one particularly varied dataset, highlighting that real-world diversity still presents challenges.

What This Means for Patients and Doctors

In everyday terms, this work shows that an AI assistant can learn to carefully trace cervical cells and sort them into risk groups with remarkable consistency. It does not replace the pathologist, but it can pre-screen slides, highlight suspicious cells, and reduce the chance that early signs are overlooked in busy clinics or in regions with few specialists. With further testing on larger, more complex samples and expert-validated masks, systems like this could help bring reliable cervical cancer screening to more women worldwide, catching dangerous changes earlier and improving chances of successful treatment.

Citation: Wubineh, B.Z., Rusiecki, A. & Halawa, K. Deep learning-based automatic segmentation and classification for cervical cancer detection using an improved U-Net and ensemble methods. Sci Rep 16, 5184 (2026). https://doi.org/10.1038/s41598-026-35299-7

Keywords: cervical cancer screening, Pap smear images, deep learning, medical image segmentation, computer‑aided diagnosis