Clear Sky Science

Active guidance in ultrasound bladder scanning using reinforcement learning

Why better bladder scans matter

When someone has trouble emptying their bladder, doctors often turn to ultrasound to see how much urine is left behind. That number guides important decisions, such as whether a catheter is needed or if a treatment is working. Yet getting a clear, correctly positioned ultrasound image of the bladder is harder than it sounds, especially for less experienced staff. This study explores how an artificial intelligence (AI) technique called reinforcement learning can coach the person holding the probe in real time, helping them find the best view more quickly and reliably.

The challenge of finding the right angle

In a routine bladder scan, the probe must be moved over the lower abdomen to capture two key views: one looking across the bladder (transverse) and one looking along its length (longitudinal). The quality of these images depends on exactly where the probe is placed and how it is tilted. Skilled sonographers learn this through experience, but beginners can easily miss the ideal plane, leading to blurred outlines and inaccurate volume estimates. Earlier computer methods tried to predict the next probe movement from each single image, but they tended to give jumpy, inconsistent suggestions and ignored important details about the bladder’s shape.

Teaching a virtual probe to explore

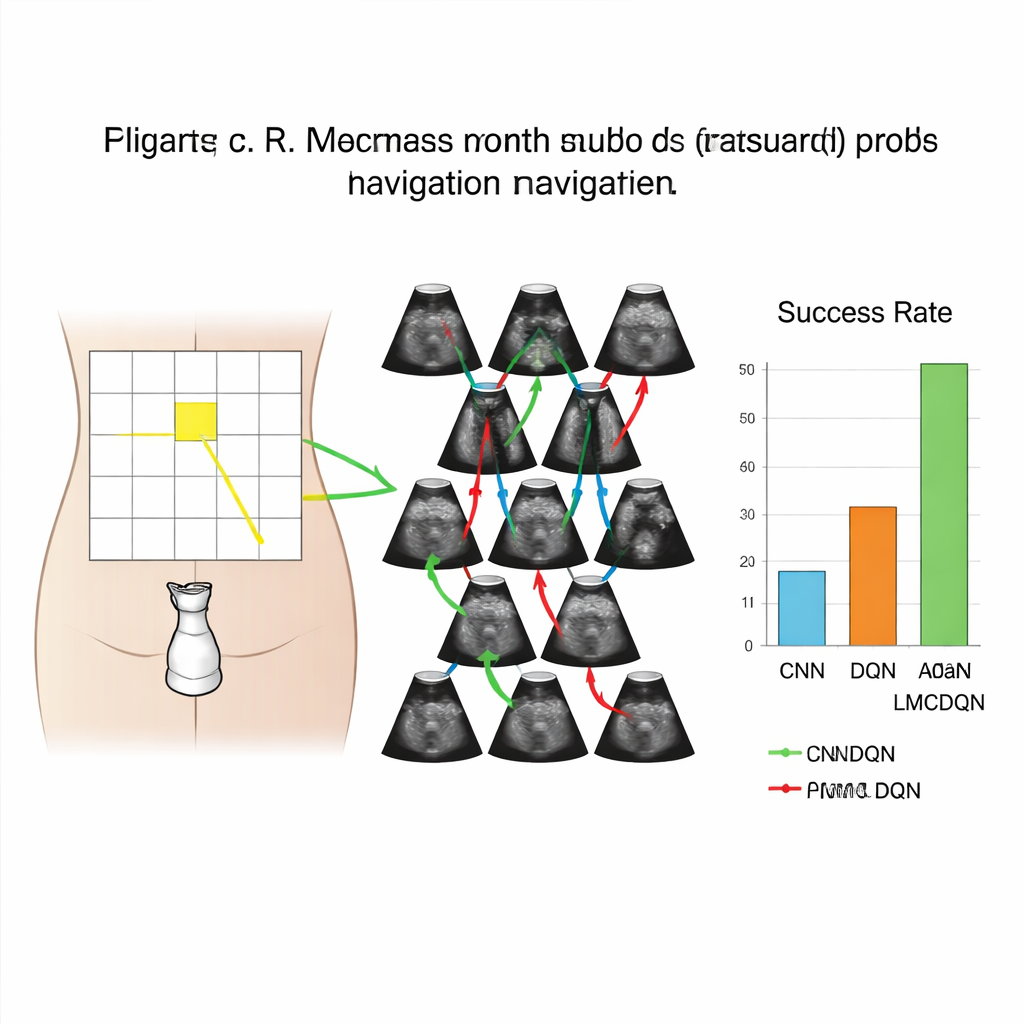

The researchers built a realistic computer simulation of bladder scanning using 3D ultrasound data from 17 healthy volunteers. They overlaid a 6-by-5 grid on each person’s lower abdomen to mark possible probe positions, and at each position they recorded ultrasound volumes in two directions. This created a kind of training playground where a virtual probe could move left, right, up, down, and even tilt by small angles, just as a real operator would. The “agent” in this playground saw only the images, not its true location, and had to learn how to navigate toward the grid square that produced the clearest bladder view.
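To make the playground idea concrete, here is a minimal sketch of such a grid world. It is an illustration, not the study's simulator. The class name `ProbeGridEnv`, the action names, the 6-columns-by-5-rows layout, the starting square, and the 20-step budget are all assumptions. Tilting is left out, and the sketch returns the grid position only for readability, whereas the study's agent saw images and never its true location.

```python
from dataclasses import dataclass

# Assumed layout: 6 columns by 5 rows (the article only says "6-by-5").
N_COLS, N_ROWS = 6, 5

# Hypothetical action names mapped to (column, row) shifts on the grid.
MOVES = {"left": (-1, 0), "right": (1, 0), "up": (0, -1), "down": (0, 1)}


@dataclass
class ProbeGridEnv:
    target: tuple          # (col, row) of the square with the clearest view
    start: tuple = (0, 0)  # assumed starting square
    max_steps: int = 20    # assumed step budget per episode

    def __post_init__(self):
        self.reset()

    def reset(self):
        """Put the virtual probe back on the start square."""
        self.pos = self.start
        self.steps = 0
        return self.pos

    def step(self, action):
        """Apply one shift, clamped so the probe stays on the grid."""
        d_col, d_row = MOVES[action]
        col = min(max(self.pos[0] + d_col, 0), N_COLS - 1)
        row = min(max(self.pos[1] + d_row, 0), N_ROWS - 1)
        self.pos = (col, row)
        self.steps += 1
        reached = self.pos == self.target
        done = reached or self.steps >= self.max_steps
        return self.pos, reached, done


env = ProbeGridEnv(target=(2, 1))
for action in ["right", "right", "down"]:
    pos, reached, done = env.step(action)
print(pos, reached)  # (2, 1) True
```

In a fuller version, `step` would return the ultrasound image recorded at the new square instead of the position, which is what makes the navigation problem hard.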

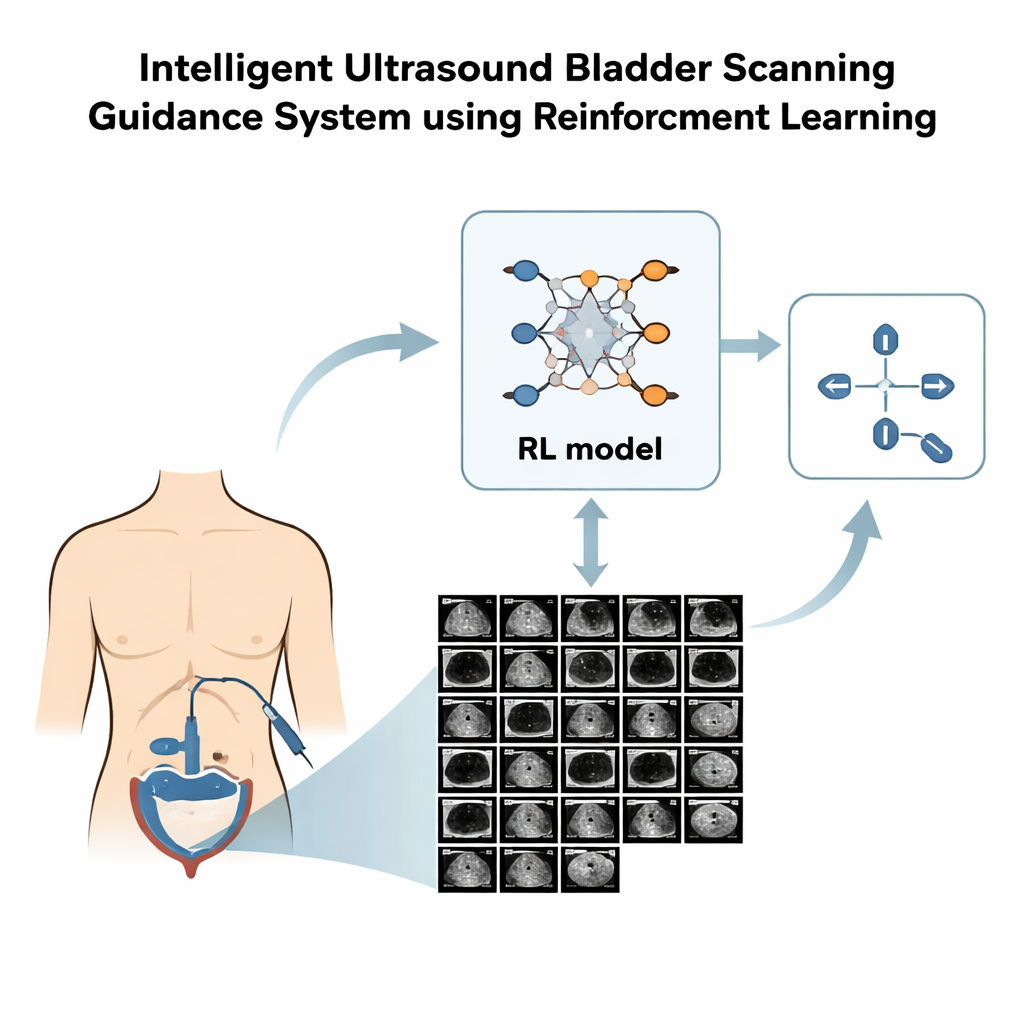

How the learning system makes decisions

The team used a style of AI called reinforcement learning, in which the system tries actions and receives rewards or penalties based on how helpful those actions are. Their method, named Adam LMCDQN, is an advanced version of a popular reinforcement learning approach that explores its options by adding carefully tuned randomness to the learning process. The agent earned higher rewards for moves that brought it closer to the best view and for actually reaching that view within a limited number of steps. In a further refinement, the researchers asked an expert to outline the bladder in many images. Using these outlines, they designed a reward that favored views where the bladder was larger and more centered, nudging the agent to pay attention to the organ itself rather than just distances on the grid.
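The two reward ideas described above can be sketched in a few lines. This is a hedged illustration under stated assumptions, not the formulas used in the study: the function names, the use of Manhattan distance on the grid, the bonus and scale values, and the choice to multiply area by centering are all hypothetical.

```python
def distance_reward(prev_pos, new_pos, target, goal_bonus=1.0, step_scale=0.1):
    """Reward moves that reduce grid distance, plus a bonus for arriving.

    Manhattan distance and the two constants are assumptions for this sketch.
    """
    def dist(p):
        return abs(p[0] - target[0]) + abs(p[1] - target[1])

    reward = step_scale * (dist(prev_pos) - dist(new_pos))
    if new_pos == target:
        reward += goal_bonus
    return reward


def _offset(centroid, size):
    """Distance of a centroid from the image center, scaled to [0, 1]."""
    half = (size - 1) / 2
    return abs(centroid - half) / half if half > 0 else 0.0


def segmentation_score(mask):
    """Score a binary bladder mask (rows of 0/1): larger and more centered is higher."""
    height, width = len(mask), len(mask[0])
    pixels = [(r, c) for r in range(height) for c in range(width) if mask[r][c]]
    if not pixels:
        return 0.0  # no bladder visible in this view
    area_fraction = len(pixels) / (height * width)
    row_centroid = sum(r for r, _ in pixels) / len(pixels)
    col_centroid = sum(c for _, c in pixels) / len(pixels)
    centering = 1.0 - (_offset(row_centroid, height) + _offset(col_centroid, width)) / 2
    return area_fraction * centering


centered = [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
corner = [[1, 1, 0, 0], [1, 1, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
print(segmentation_score(centered) > segmentation_score(corner))  # True
```

The example masks have the same bladder area, so the centered one scores higher purely because of its position, which is the behavior the segmentation-based reward is meant to encourage.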

What the system achieved in practice

When tested on three volunteers whose data were not used for training, the reinforcement learning system clearly outperformed a conventional supervised deep-learning classifier. In the simpler setting where the probe could only shift along the surface (without tilting), the new method reached the target view in 69% of transverse scans and 51% of longitudinal scans, compared with 58% and 32% for the supervised classifier. Allowing the agent to also tilt the probe improved performance further: success rates rose to 81% for transverse scans and 67% for longitudinal scans. The segmentation-based reward, which explicitly looked for a large, centered bladder, also provided a measurable boost over a reward based only on grid distance.
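The size of these improvements is easiest to read as percentage-point differences. The short calculation below uses only the success rates quoted above. The dictionary keys and the function name are labels invented for this sketch, and it reads the 81% and 67% figures as the transverse and longitudinal results, respectively.

```python
# Success rates (%) as quoted in the article; key names are hypothetical labels.
SUCCESS_RATES = {
    "transverse": {"supervised": 58, "rl_shift_only": 69, "rl_shift_and_tilt": 81},
    "longitudinal": {"supervised": 32, "rl_shift_only": 51, "rl_shift_and_tilt": 67},
}


def percentage_point_gains(rates):
    """Return the gain of each step up, in percentage points, per view."""
    gains = {}
    for view, r in rates.items():
        gains[view] = {
            "rl_vs_supervised": r["rl_shift_only"] - r["supervised"],
            "tilt_vs_shift_only": r["rl_shift_and_tilt"] - r["rl_shift_only"],
        }
    return gains


for view, g in percentage_point_gains(SUCCESS_RATES).items():
    print(view, g)
# transverse {'rl_vs_supervised': 11, 'tilt_vs_shift_only': 12}
# longitudinal {'rl_vs_supervised': 19, 'tilt_vs_shift_only': 16}
```

On these numbers, the gain over the supervised classifier is larger for the longitudinal view, which also started from the lower baseline.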

What this could mean for patients and staff

The study shows that it is technically feasible to train an AI system that watches incoming ultrasound frames and tells the operator how to move the probe (left, right, up, down, or tilt) to home in on the best bladder view. In a real device, this guidance could appear as simple on-screen arrows or short text prompts, helping nurses and other front-line staff achieve expert-level images without years of practice. Although this work was done in a simulated environment and only on healthy volunteers, it lays the groundwork for future clinical testing and for expanding the approach to patients with a wider range of conditions. If successful, such AI guidance could make bladder volume measurements faster, more consistent, and more widely available in everyday care.
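As a rough picture of what such on-screen guidance might look like, the sketch below turns an agent's chosen action into an arrow and a short prompt. Everything in it is hypothetical: the action labels, the tilt directions, the symbols, and the wording are invented for illustration and do not come from the study or from any real device.

```python
# Hypothetical action labels mapped to (symbol, prompt); not from the study.
PROMPTS = {
    "left": ("←", "Slide the probe left"),
    "right": ("→", "Slide the probe right"),
    "up": ("↑", "Slide the probe up"),
    "down": ("↓", "Slide the probe down"),
    "tilt_toward_head": ("⤴", "Tilt the probe toward the head"),
    "tilt_toward_feet": ("⤵", "Tilt the probe toward the feet"),
    "stop": ("●", "Hold still: best view reached"),
}


def format_prompt(action):
    """Build the one-line message shown to the operator for an action."""
    symbol, text = PROMPTS[action]
    return f"{symbol} {text}"


print(format_prompt("left"))  # ← Slide the probe left
```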

Citation: Hsu, HL., Zahiri, M., Li, G. et al. Active guidance in ultrasound bladder scanning using reinforcement learning. Sci Rep 16, 5273 (2026). https://doi.org/10.1038/s41598-026-35285-z

Keywords: bladder ultrasound, reinforcement learning, medical imaging AI, probe navigation, urinary retention