Clear Sky Science

Deep learning approach for hybrid beamforming design in MU-MISO mmWave systems

Why faster wireless beams matter for everyday life

Future cars, phones, and sensors will rely on ultra-fast wireless links to share data in real time. Millimeter-wave (mmWave) signals can deliver fiber-like speeds, but they are easily weakened by distance and obstacles. To compensate, base stations must "aim" their signals very precisely with beamforming, a process that is powerful but often too slow and complex for real-world, fast-moving scenarios like vehicular communications. This article explores how deep learning can redesign that process so networks can keep up with rapid changes on the road.

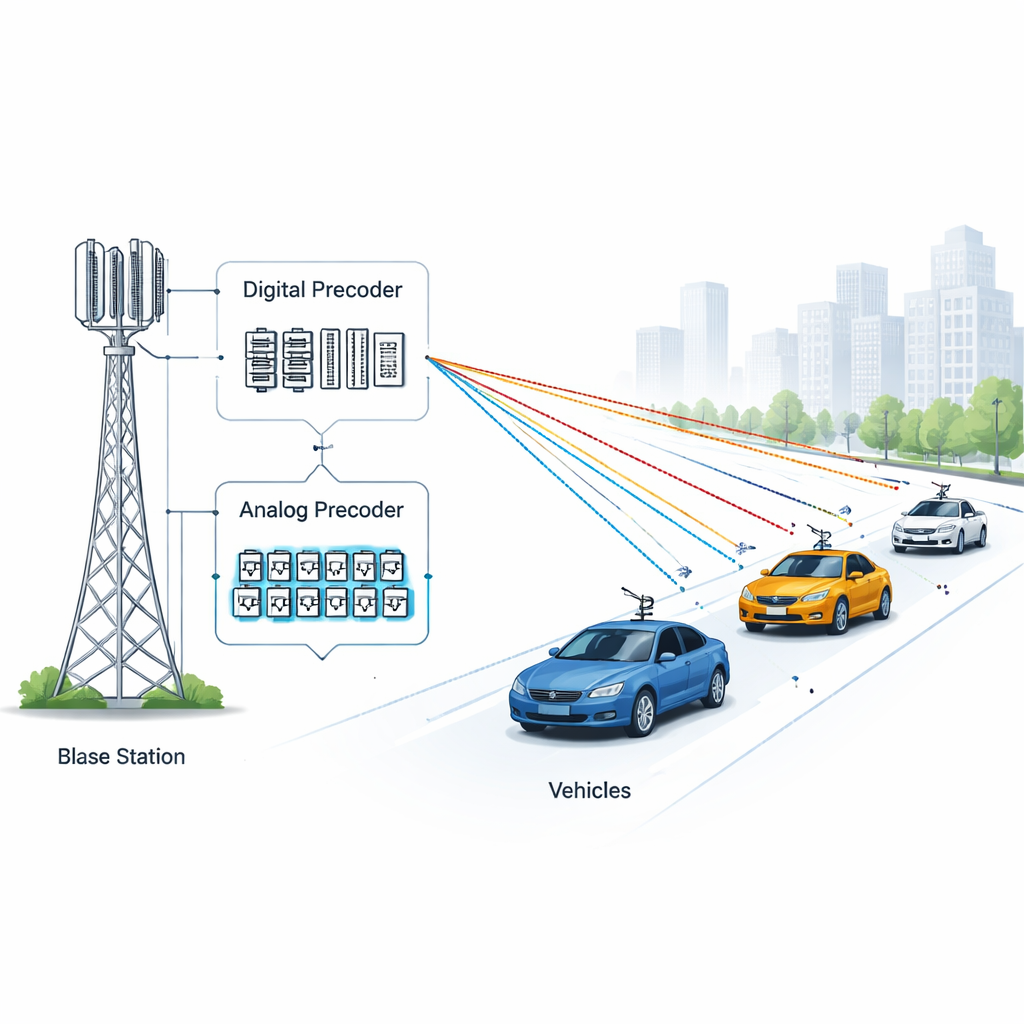

Sharpening wireless beams without bulky hardware

Modern mmWave base stations use many tiny antennas packed into a small area. By carefully adjusting how each antenna sends the signal, the station can form a narrow beam that focuses energy toward a specific user, improving both speed and reliability. There are two main ways to do this. Digital beamforming offers the greatest flexibility but needs a full set of expensive, power-hungry electronics for every antenna. Analog beamforming is cheaper and more energy-efficient but can usually serve only one beam or user at a time. Hybrid beamforming combines both ideas: a small digital stage feeds a network of analog phase shifters, aiming to deliver high data rates while keeping hardware costs and power under control.
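
As a rough illustration of that two-stage structure, the sketch below builds a hybrid precoder in NumPy. The array sizes, the steering angles, the half-wavelength linear array, and the random digital matrix are all assumptions chosen for readability; none of them come from the paper.

```python
import numpy as np

def steering_vector(n_antennas, angle_rad):
    """Unit-norm array response of a half-wavelength-spaced linear array."""
    n = np.arange(n_antennas)
    return np.exp(1j * np.pi * n * np.sin(angle_rad)) / np.sqrt(n_antennas)

n_antennas, n_rf, n_users = 16, 4, 4   # illustrative sizes, not from the paper

# Analog stage: one steering vector per RF chain. Every entry has the same
# magnitude, so only the phase of each antenna weight can be tuned.
angles = np.deg2rad([-40.0, -10.0, 15.0, 45.0])
F_rf = np.column_stack([steering_vector(n_antennas, a) for a in angles])

# Digital stage: a small unconstrained complex matrix (random placeholder here).
rng = np.random.default_rng(0)
F_bb = rng.standard_normal((n_rf, n_users)) + 1j * rng.standard_normal((n_rf, n_users))

# Effective precoder seen by the antennas: one column per user.
F = F_rf @ F_bb

print(F_rf.shape, F_bb.shape, F.shape)                       # (16, 4) (4, 4) (16, 4)
print(np.allclose(np.abs(F_rf), 1 / np.sqrt(n_antennas)))    # True
```

The point of the split is visible in the shapes: only four digital chains drive sixteen antennas, and the analog matrix is restricted to phase changes.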

The bottleneck: slow and complex beam design

Designing a good hybrid beam pattern is mathematically difficult. The system must decide how to split the work between the digital and analog stages under strict hardware rules, such as fixed-magnitude phase shifters and a limited number of radio-frequency chains. Traditional methods search for near-optimal solutions by iteratively tweaking the beam patterns to maximize the sum of data rates for all users. Well-known algorithms can achieve high performance but require many repeated calculations and specialized optimization software. This makes them too slow and computationally heavy for real-time use, especially in situations where cars and other users move quickly and channels change from moment to moment.
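
The quantity being maximized can be written down compactly. The sketch below uses the textbook sum-rate expression for single-antenna users with additive noise, which is an assumption about the exact objective used in the paper; the function name and the matrix conventions are illustrative.

```python
import numpy as np

def sum_rate(H, F, noise_var):
    """Sum of per-user rates in bit/s/Hz.

    H: (K, N_t) channel matrix, row k is the channel of user k.
    F: (N_t, K) effective precoder, column k carries user k's stream.
    """
    G = np.abs(H @ F) ** 2                 # G[k, j]: power user k receives from stream j
    signal = np.diag(G)                    # power of each user's own stream
    interference = G.sum(axis=1) - signal  # power leaking in from other users' streams
    sinr = signal / (interference + noise_var)
    return float(np.sum(np.log2(1.0 + sinr)))

# Two users with orthogonal channels and matched beams: no interference.
H = np.array([[1, 0, 0, 0], [0, 1, 0, 0]], dtype=complex)
F = H.conj().T
print(sum_rate(H, F, noise_var=1.0))   # 2.0
```

An iterative method evaluates an expression like this many times while adjusting both stages, which is where the computational cost comes from.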

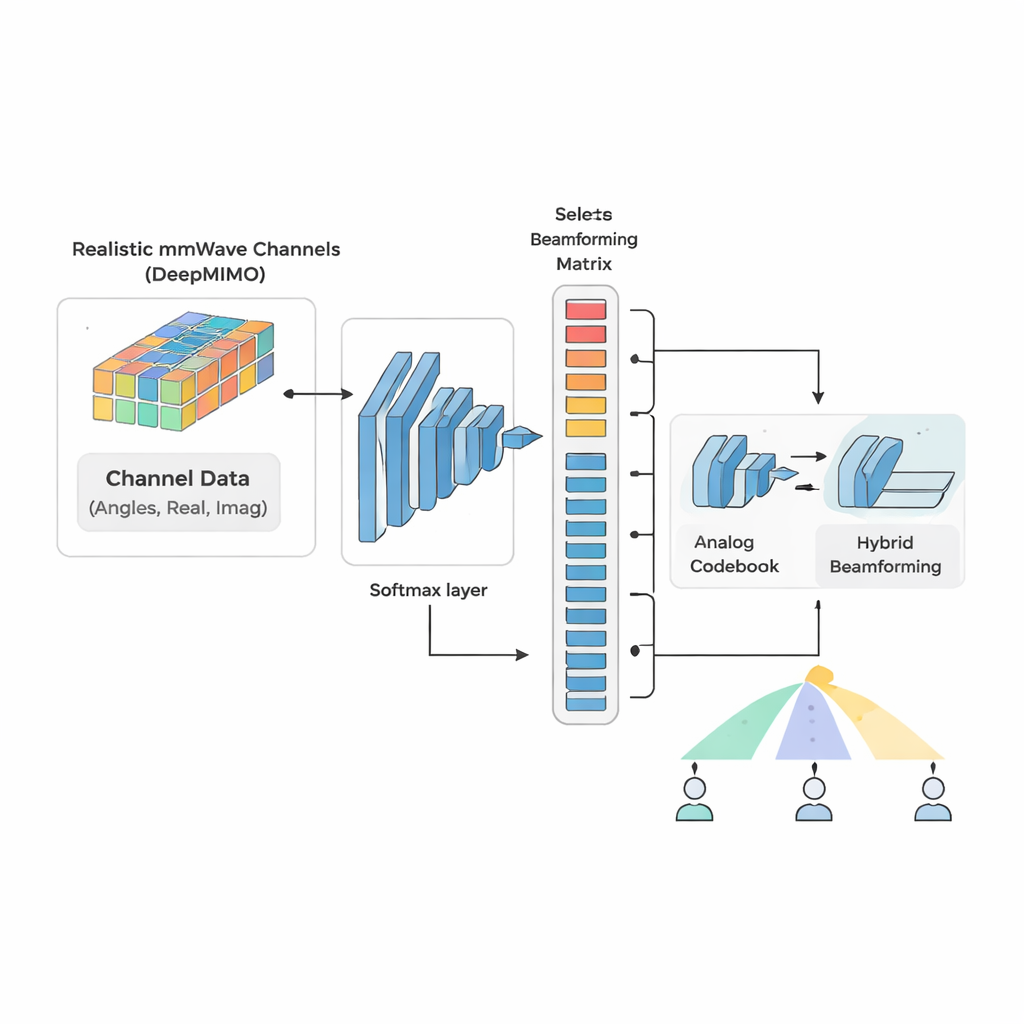

Teaching a neural network to pick the right beams

The authors propose a deep-learning-based hybrid beamforming approach, called DL-HBF, that treats beam design as a pattern recognition task. Instead of re-solving a complex optimization each time, the system first builds a large training set using DeepMIMO, a realistic ray-tracing channel dataset. For each simulated channel between the base station and multiple single-antenna users, an exhaustive offline search identifies the best analog beamforming matrix from a carefully constructed codebook and computes the matching digital precoder. The index of the winning codebook entry serves as the training label. The input to the neural network is a three-layer array that stacks the phase, the real part, and the imaginary part of the channel matrix, giving the model rich information about how signals propagate through the environment.
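
The labeling step can be sketched as follows. Several pieces are assumptions made only for illustration: the codebook is built from every pair of DFT beams, the digital precoder is a zero-forcing solution, the channel is random rather than drawn from DeepMIMO, and all function names are hypothetical.

```python
import itertools
import numpy as np

def dft_beams(n_antennas):
    """Columns are unit-norm DFT beams with constant-magnitude entries."""
    n = np.arange(n_antennas)
    return np.exp(2j * np.pi * np.outer(n, n) / n_antennas) / np.sqrt(n_antennas)

def zf_digital(H, F_rf, power):
    """Zero-forcing digital precoder on the effective channel (assumed design)."""
    F_bb = np.linalg.pinv(H @ F_rf)
    return F_bb * np.sqrt(power) / np.linalg.norm(F_rf @ F_bb)

def sum_rate(H, F, noise_var):
    """Sum of log2(1 + SINR) over users; row k of H is user k's channel."""
    G = np.abs(H @ F) ** 2
    signal = np.diag(G)
    return float(np.sum(np.log2(1 + signal / (G.sum(axis=1) - signal + noise_var))))

def best_codebook_index(H, codebook, power=1.0, noise_var=0.1):
    """Exhaustively score every candidate analog matrix; return the best index."""
    rates = [
        sum_rate(H, F_rf @ zf_digital(H, F_rf, power), noise_var)
        for F_rf in codebook
    ]
    return int(np.argmax(rates))

def channel_to_input(H):
    """Stack phase, real part and imaginary part into a (3, K, N_t) array."""
    return np.stack([np.angle(H), H.real, H.imag]).astype(np.float32)

n_antennas, n_rf, n_users = 8, 2, 2   # small illustrative sizes
beams = dft_beams(n_antennas)
# Hypothetical codebook: every choice of n_rf distinct DFT beams.
codebook = [beams[:, list(c)]
            for c in itertools.combinations(range(n_antennas), n_rf)]

# Random stand-in channel; the paper uses ray-traced DeepMIMO channels instead.
rng = np.random.default_rng(1)
H = (rng.standard_normal((n_users, n_antennas))
     + 1j * rng.standard_normal((n_users, n_antennas))) / np.sqrt(2)

x = channel_to_input(H)               # network input, shape (3, 2, 8)
y = best_codebook_index(H, codebook)  # class label among 28 candidates
print(x.shape, len(codebook), y)
```

Repeating this for many channels yields the input and label pairs on which a classifier can be trained. The search is slow, but it runs offline and only once per training sample.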

From heavy optimization to quick decisions

The core of DL-HBF is a convolutional neural network that learns to map channel measurements directly to the index of the best analog beam pattern. After training, the network can classify new channel conditions with high accuracy in a single forward pass, avoiding slow iterative loops. The digital part of the beamforming is then computed in closed form from the selected analog matrix. Simulations of a detailed street-level scenario at 60 GHz with moving users show that the proposed method achieves sum data rates close to those of the most powerful traditional algorithm, while dramatically cutting computation time. Compared with several standard hybrid beamforming techniques, the deep learning approach offers a better trade-off between data rate and latency and scales more gracefully as the number of radio chains grows.
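
The online step then reduces to a lookup followed by one small matrix solve. In the sketch below the class scores are random placeholders standing in for the trained network's output, the codebook is a hypothetical set of random-phase matrices, and the closed-form digital stage is assumed to be zero-forcing, which the summary does not confirm.

```python
import numpy as np

def hybrid_from_scores(H, codebook, scores, power=1.0):
    """Pick the top-scoring analog matrix, then solve the digital stage directly."""
    F_rf = codebook[int(np.argmax(scores))]
    H_eff = H @ F_rf                       # (K, N_rf) effective channel
    # Zero-forcing (assumed): right pseudo-inverse of the effective channel.
    F_bb = H_eff.conj().T @ np.linalg.inv(H_eff @ H_eff.conj().T)
    # Scale so the combined precoder meets the total power budget.
    F_bb *= np.sqrt(power) / np.linalg.norm(F_rf @ F_bb)
    return F_rf, F_bb

rng = np.random.default_rng(0)
n_antennas, n_rf, n_users, n_classes = 16, 4, 4, 10   # illustrative sizes

# Hypothetical codebook of random-phase analog matrices (constant magnitude).
phases = rng.uniform(0, 2 * np.pi, (n_classes, n_antennas, n_rf))
codebook = np.exp(1j * phases) / np.sqrt(n_antennas)

H = (rng.standard_normal((n_users, n_antennas))
     + 1j * rng.standard_normal((n_users, n_antennas))) / np.sqrt(2)
scores = rng.standard_normal(n_classes)   # stand-in for the CNN's class scores

F_rf, F_bb = hybrid_from_scores(H, codebook, scores)
effective = H @ F_rf @ F_bb
off_diagonal = effective - np.diag(np.diag(effective))
print(np.allclose(off_diagonal, 0))                    # True: no inter-user leakage
print(np.isclose(np.linalg.norm(F_rf @ F_bb), 1.0))    # True: power budget met
```

Nothing in this path iterates: the cost is one forward pass plus the inversion of a matrix whose size is set by the number of users, not the number of antennas.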

Staying reliable when channel information is imperfect

Real networks never know the state of the wireless channel perfectly; measurements are noisy and delayed. The study therefore tests how different methods behave when the channel estimates are corrupted. All approaches lose some accuracy, but DL-HBF shows the smallest degradation in how closely it tracks the ideal, fully digital solution. Because the neural network is trained on many channel realizations, including imperfect ones, it learns robust patterns rather than depending on exact numbers. The authors also design their dataset creation pipeline to be fast and memory-efficient, making it easier to retrain the system when network layouts or operating conditions change.
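
One common way to emulate imperfect channel knowledge in simulation is to add random error to the true channel before handing it to the beamforming method. The sketch below assumes additive complex Gaussian error at a chosen level relative to the channel power; the summary does not state which error model the authors used, and the function name is hypothetical.

```python
import numpy as np

def corrupt_channel(H, error_db, rng):
    """Return H plus complex Gaussian error whose power is error_db relative to H."""
    error_power = np.mean(np.abs(H) ** 2) * 10 ** (error_db / 10)
    noise = rng.standard_normal(H.shape) + 1j * rng.standard_normal(H.shape)
    # Each complex noise sample has variance 2, so divide the target power by 2.
    return H + np.sqrt(error_power / 2) * noise

rng = np.random.default_rng(0)
H = (rng.standard_normal((4, 64)) + 1j * rng.standard_normal((4, 64))) / np.sqrt(2)

for error_db in (-30, -20, -10):
    H_hat = corrupt_channel(H, error_db, rng)
    nmse = np.sum(np.abs(H_hat - H) ** 2) / np.sum(np.abs(H) ** 2)
    print(error_db, round(float(10 * np.log10(nmse)), 1))   # measured error in dB
```

In a robustness test of this kind, the beams are designed from the corrupted estimate while the achieved rate is evaluated on the true channel, so any mismatch shows up as lost data rate.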

What this means for future wireless systems

In practical terms, this work shows that deep learning can turn a slow, mathematically demanding beamforming optimization into a fast look-up-like operation that is accurate enough for real deployments. The proposed DL-HBF scheme provides high data rates with much lower latency and computation than classic methods, and it remains stable even when channel information is imprecise. For a layperson, the takeaway is that smarter, learning-based signal control could help future 5G and 6G networks deliver reliable, high-speed connections to many moving users at once, enabling safer connected vehicles and richer mobile applications without requiring prohibitively complex hardware.

Citation: Ghaith, E., Mekkawy, T., Abouelfadl, A.A. et al. Deep learning approach for hybrid beamforming design in MU-MISO mmWave systems. Sci Rep 16, 5014 (2026). https://doi.org/10.1038/s41598-026-35247-5

Keywords: mmWave beamforming, deep learning wireless, hybrid precoding, vehicular communications, massive MIMO