Clear Sky Science · en

Federated lung nodule segmentation using a hybrid transformer–U-Net architecture

Why spotting tiny lung spots matters

Lung cancer is the deadliest cancer worldwide, yet its earliest warning signs, tiny spots on CT scans called nodules, are easy to miss. Radiologists must sift through thousands of detailed images, and strict privacy rules often stop hospitals from sharing the patient data needed to train better computer tools. This study introduces a way for hospitals to work together to teach an artificial intelligence (AI) system to find lung nodules accurately, without ever exchanging raw patient scans.

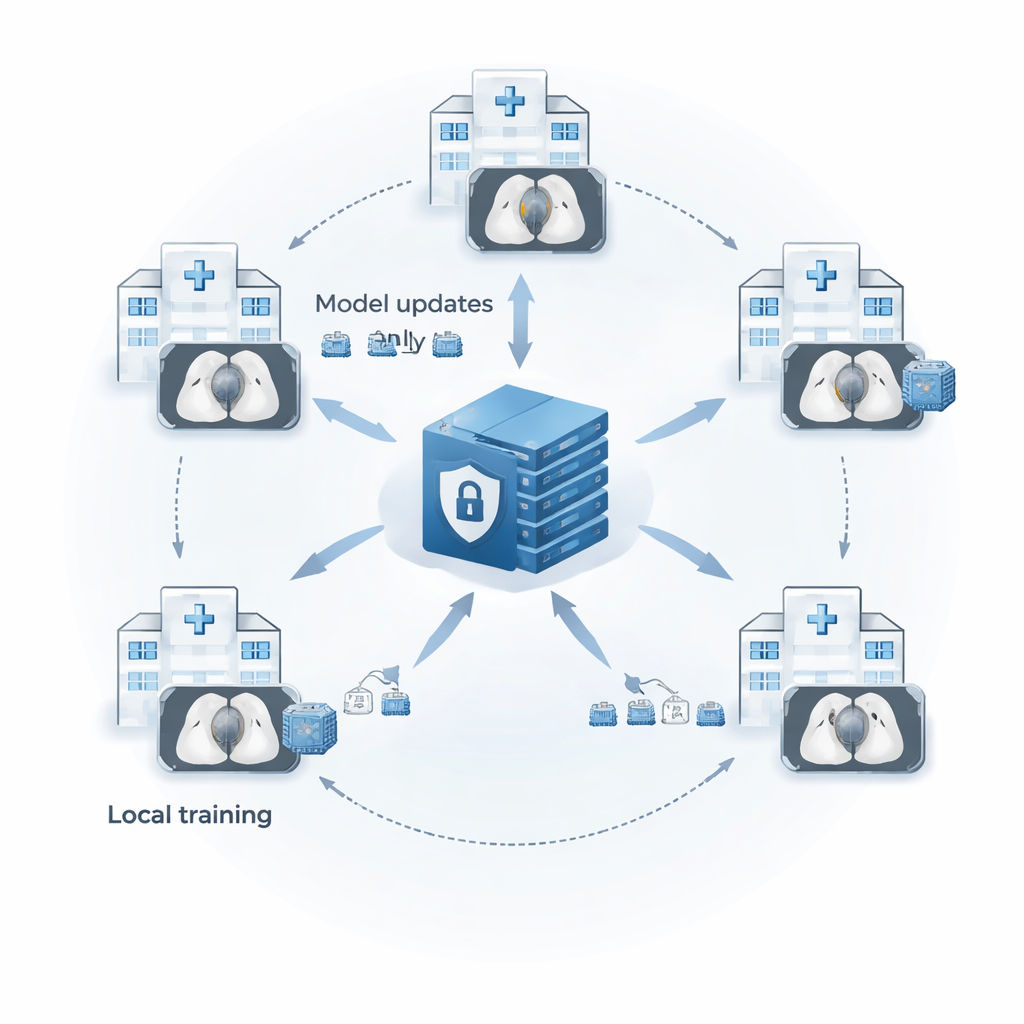

Sharing knowledge without sharing scans

Modern CT scanners can capture lung details down to fractions of a millimeter, but that precision creates a flood of images that no human can review alone. Computer-aided tools can help, yet they need large, diverse datasets to avoid missing unusual nodules. Privacy laws such as HIPAA and GDPR strictly limit how hospitals can pool patient data in one place. The authors address this dilemma with a strategy called federated learning. Each hospital trains a copy of the same model locally on its own CT scans and then sends only the model’s learned parameters, not the images themselves, to a central server. The server averages these parameters into an improved “global” model and sends it back, so every site benefits from the others’ experience while patient data stays in-house.
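
The server's averaging step can be sketched in Python as a FedAvg-style weighted mean. This is a minimal illustration, not the authors' code: representing each model as a dictionary of NumPy arrays, and weighting each hospital by its number of local training samples, are both assumptions made here. With equal sample counts the result reduces to a plain average.

```python
import numpy as np

# Hypothetical representation: each client's model is a dict mapping
# parameter names to NumPy arrays (real frameworks use their own types).
Params = dict[str, np.ndarray]


def fed_avg(client_params: list[Params], client_sizes: list[int]) -> Params:
    """Weighted average of client parameters (FedAvg-style aggregation).

    Weights are proportional to each client's local sample count, an
    assumption here; with equal counts this is a plain mean.
    """
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = float(sum(client_sizes))
    weights = [n / total for n in client_sizes]
    global_params: Params = {}
    for name in client_params[0]:
        # Only parameter arrays are combined; no images reach this function.
        global_params[name] = sum(w * p[name] for w, p in zip(weights, client_params))
    return global_params


# Two equally sized "hospitals" with a toy two-parameter model.
clients = [
    {"w": np.array([1.0, 2.0]), "b": np.array([0.0])},
    {"w": np.array([3.0, 4.0]), "b": np.array([1.0])},
]
global_model = fed_avg(clients, [1, 1])
print(global_model["w"], global_model["b"])  # [2. 3.] [0.5]
```

In a full round, the server would send the returned dictionary back to every client as the starting point for the next stretch of local training; only these arrays ever cross the network.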

Cleaning up the images before teaching the AI

The research focuses on “solid” lung nodules between 15 and 25 millimeters, which are clinically important but occupy only a small fraction of the pixels in each CT slice, making them easy for computers to overlook. Before any learning happens, every CT slice passes through a two-step cleanup process. First, a contrast-enhancement method called CLAHE (contrast-limited adaptive histogram equalization) boosts local contrast so that faint nodules stand out more clearly, while capping how much noise gets amplified. Second, the images are rescaled so that all pixel values fall between 0 and 1, giving the model a consistent brightness scale across scans from different machines and hospitals. This standardized preprocessing helps the AI focus on small, low-contrast nodules instead of being confused by scanner quirks.
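
The second cleanup step, rescaling to the range 0 to 1, is simple enough to sketch directly. The snippet below assumes each slice arrives as a NumPy array of raw intensities and normalizes each slice on its own; whether the study rescales per slice or per whole scan is not stated here, so treat that choice as an assumption. The CLAHE step is left out; in Python it is commonly done with OpenCV's `cv2.createCLAHE` or scikit-image's `exposure.equalize_adapthist`, with clip limit and tile size as tuning choices.

```python
import numpy as np


def minmax_normalize(slice_raw: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Linearly rescale one CT slice so its values span [0, 1].

    Per-slice normalization is an assumption made for this sketch.
    """
    x = slice_raw.astype(np.float32)
    lo, hi = float(x.min()), float(x.max())
    if hi - lo < eps:
        # A constant slice carries no contrast; avoid dividing by zero.
        return np.zeros_like(x)
    return (x - lo) / (hi - lo)


# Toy 2x2 "slice" in Hounsfield-like units.
toy = np.array([[-1000, 0], [200, 400]], dtype=np.int16)
print(minmax_normalize(toy))
```

Mapping every slice onto the same fixed range means a scan from a brighter or darker machine lands on the same numeric scale before it reaches the network.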

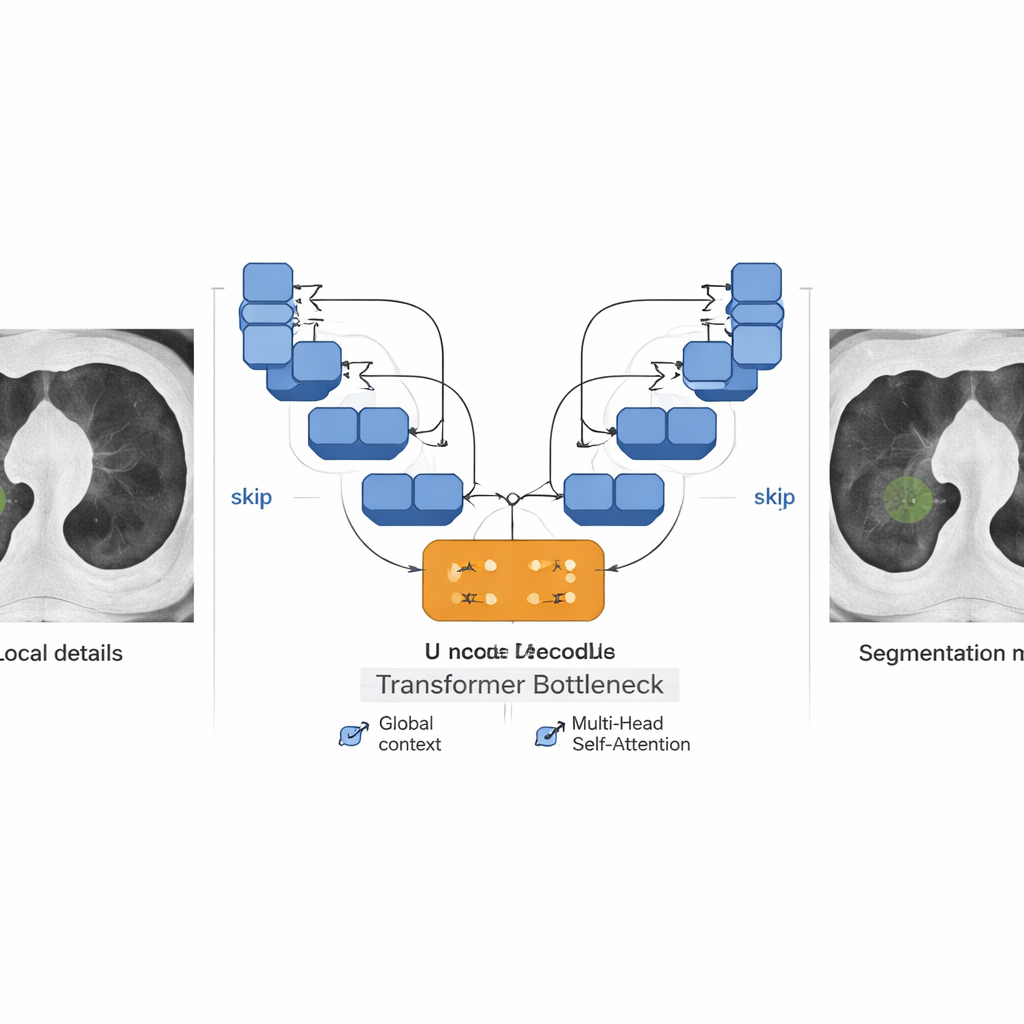

Blending two ways of seeing: nearby detail and big-picture context

At the heart of the system is a hybrid network that blends two powerful ideas from modern AI: the U-Net, which excels at outlining objects in images pixel by pixel, and the Transformer, originally developed for language but now widely used in vision. The U-shaped part of the model first compresses the image through layers of small convolutional filters that capture local texture (edges, specks, and fine boundaries), then reconstructs a full-size mask that marks which pixels belong to a nodule. Residual connections and skip links carry fine details through the network so they are not lost along the way. In the middle of this U-shape sits a Transformer “bottleneck” that treats patches of the image like tokens in a sentence, using self-attention to relate distant regions. This allows the model to see both the tiny nodule and its broader anatomical surroundings, which is crucial when nodules blend into nearby vessels or chest structures.
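
The idea of treating image regions as tokens can be illustrated with a single self-attention step over a bottleneck feature map. This NumPy sketch is a simplification under stated assumptions, not the paper's architecture: each spatial position of the downsampled map stands in for one image patch, there is a single attention head, and the projection matrices `wq`, `wk`, `wv` are hypothetical inputs (random in the example, learned in a real model). Layer normalization, the feed-forward sublayer, and positional encodings that a full Transformer block would include are omitted.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the row max for numerical stability before exponentiating.
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def attention_bottleneck(
    feat: np.ndarray, wq: np.ndarray, wk: np.ndarray, wv: np.ndarray
) -> tuple[np.ndarray, np.ndarray]:
    """Single-head self-attention over the spatial positions of a feature map.

    feat: (C, H, W) bottleneck features from the convolutional encoder.
    wq, wk, wv: (C, C) projections (hypothetical; learned in practice).
    Returns the updated (C, H, W) map and the (H*W, H*W) attention weights.
    """
    c, h, w = feat.shape
    tokens = feat.reshape(c, h * w).T        # (N, C): one token per position
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    scores = q @ k.T / np.sqrt(c)            # (N, N): every token vs. every token
    attn = softmax(scores, axis=-1)          # each row sums to 1
    out = tokens + attn @ v                  # residual add keeps local detail
    return out.T.reshape(c, h, w), attn


rng = np.random.default_rng(0)
C, H, W = 8, 4, 4
feat = rng.standard_normal((C, H, W))
wq, wk, wv = (rng.standard_normal((C, C)) * 0.1 for _ in range(3))
out, attn = attention_bottleneck(feat, wq, wk, wv)
print(out.shape, attn.shape)  # (8, 4, 4) (16, 16)
```

Because every row of `attn` spans all 16 positions, each token can draw on information from anywhere in the map, which is what lets the bottleneck relate a nodule to distant anatomy, while the residual addition preserves the encoder's local features.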

Handling rare targets and uneven data

The authors also tackle a major problem in medical imaging: class imbalance. In lung CT, almost every pixel is background and nodule pixels are rare, so under standard pixel-by-pixel training a model that labels everything as background is already right almost everywhere and gets little push to find nodules. To counter this, the team combines two loss functions: Dice loss, which directly rewards overlap between predicted and true nodules, and Focal loss, which puts extra emphasis on hard-to-classify pixels. This Dice–Focal blend encourages the model to attend to small, difficult nodules and crisp boundaries. In their federated setup with five simulated hospital “clients” built from the LUNA16 public dataset, the system achieved Dice scores up to 0.93 for solid nodules and showed low rates of both missed nodules and false alarms. Performance remained strong across most clients despite differences in image quality and nodule appearance, though nodules with fuzzy or mixed densities remained challenging.
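
A minimal NumPy sketch of a Dice–Focal objective for one binary mask follows, together with the hard Dice score used for evaluation. The hyperparameters are assumptions rather than the paper's settings: `gamma=2.0` and `alpha=0.25` are commonly used focal-loss defaults, and the equal 50/50 weighting between the two terms is hypothetical. The lines at the end reproduce the class-imbalance problem on a toy image: predicting "all background" is more than 99% accurate per pixel, yet its Dice score is 0.

```python
import numpy as np


def soft_dice_loss(prob: np.ndarray, target: np.ndarray, smooth: float = 1.0) -> float:
    """1 minus the soft Dice overlap between predicted probabilities and a mask."""
    inter = float(np.sum(prob * target))
    denom = float(np.sum(prob) + np.sum(target))
    return 1.0 - (2.0 * inter + smooth) / (denom + smooth)


def focal_loss(prob: np.ndarray, target: np.ndarray, gamma: float = 2.0,
               alpha: float = 0.25, eps: float = 1e-7) -> float:
    """Binary focal loss averaged over pixels; (1 - p_t)**gamma shrinks easy pixels."""
    p = np.clip(prob, eps, 1.0 - eps)
    p_t = np.where(target == 1, p, 1.0 - p)              # prob. of the true class
    alpha_t = np.where(target == 1, alpha, 1.0 - alpha)  # class-balancing weight
    return float(np.mean(-alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)))


def dice_focal_loss(prob: np.ndarray, target: np.ndarray, dice_weight: float = 0.5) -> float:
    """Weighted blend of the two terms; the 50/50 split is an assumption."""
    return (dice_weight * soft_dice_loss(prob, target)
            + (1.0 - dice_weight) * focal_loss(prob, target))


def dice_score(pred_mask: np.ndarray, target: np.ndarray) -> float:
    """Hard Dice coefficient 2|A and B| / (|A| + |B|) for evaluation."""
    a, b = pred_mask.astype(bool), target.astype(bool)
    total = int(a.sum() + b.sum())
    return 1.0 if total == 0 else float(2.0 * np.sum(a & b) / total)


# Toy 64x64 slice with a 16-pixel "nodule".
target = np.zeros((64, 64), dtype=np.int64)
target[30:34, 30:34] = 1
all_background = np.zeros((64, 64), dtype=bool)
print(np.mean(all_background == target.astype(bool)))  # 0.99609375
print(dice_score(all_background, target))               # 0.0
```

Pixel accuracy barely notices the missed nodule, while Dice drops to zero; that contrast is why an overlap-based term is paired with a focal term that keeps pushing on the rare, hard pixels.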

What this means for future lung screening

Put simply, this work shows that hospitals can jointly train a high-quality nodule-finding AI without ever shipping patient scans across the network. By combining careful image cleanup, a model that sees both details and context, and a training strategy tuned to rare targets, the framework reliably outlines solid lung nodules in a realistic multi-hospital setting. While more work is needed to handle very faint or partially solid nodules, the study points toward lung cancer screening tools that are both accurate and privacy-preserving—bringing the benefits of big-data AI to patients without compromising the confidentiality of their medical images.

Citation: Turjya, S.M., Fawakherji, M. Federated lung nodule segmentation using a hybrid transformer–U-Net architecture. Sci Rep 16, 5228 (2026). https://doi.org/10.1038/s41598-026-35243-9

Keywords: lung cancer screening, medical image segmentation, federated learning, CT lung nodules, privacy-preserving AI