Clear Sky Science · en

Evaluating machine learned nuclear data precision in full core nuclear reactor Monte Carlo neutronics and computational efficiency analyses

Why faster reactor simulations matter

Nuclear power plants rely on detailed computer models to predict how fuel behaves over months and years of operation. These models are crucial for safety, efficiency, and designing new reactors, but they are notoriously slow and memory-hungry. This paper explores whether machine learning can slim down the huge nuclear data tables that drive these simulations, cutting computing cost substantially, without sacrificing the physical accuracy engineers depend on.

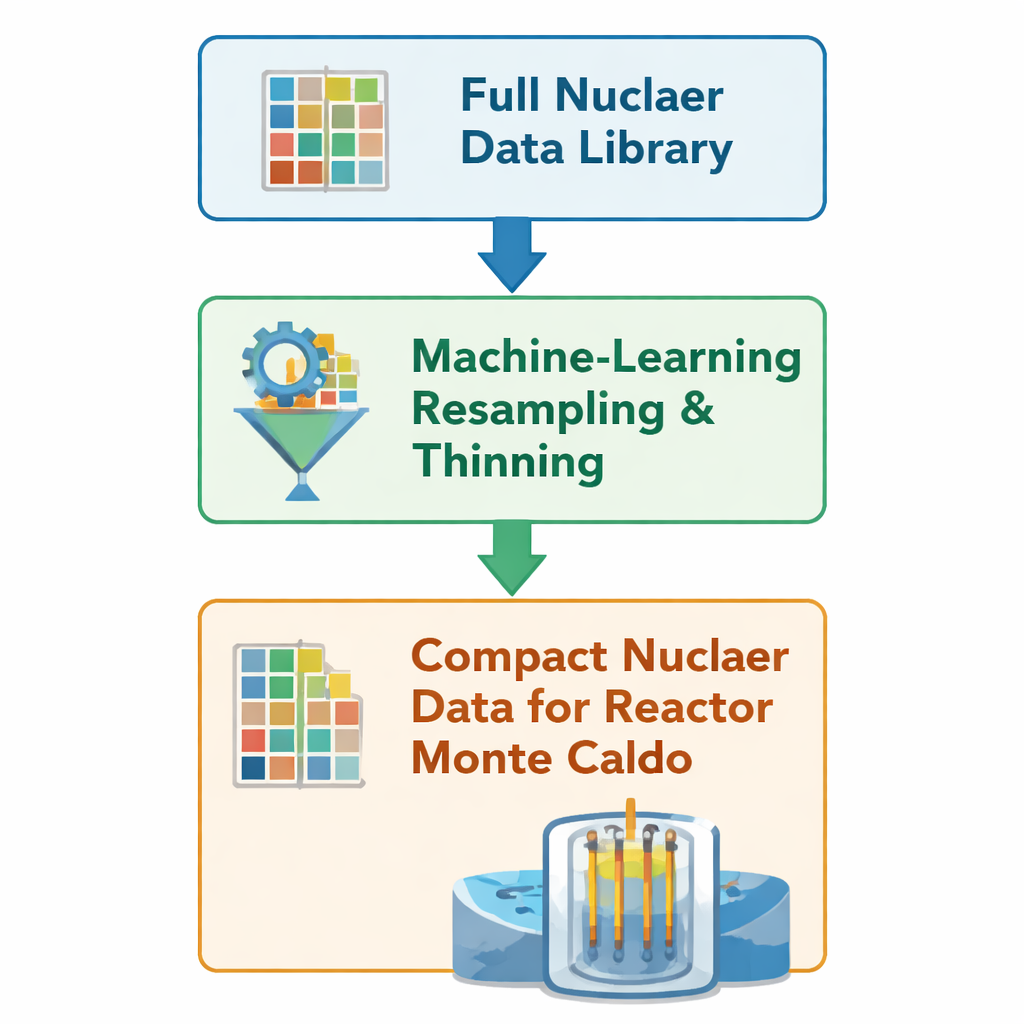

Shrinking the data behind the physics

Every time a simulated neutron moves through a virtual reactor core, the code consults large tables that describe how likely it is to bounce, be absorbed, or split an atom. These tables, called nuclear data libraries, encode probabilities over thousands of energy points for many isotopes in the fuel and its by‑products. The authors build on an earlier machine‑learning method that “thins” those tables: it removes redundant energy points while preserving sharp features such as reaction thresholds and resonance peaks, where probabilities change rapidly. Instead of regenerating the data through a long, traditional processing chain, the method edits OpenMC’s native HDF5 files directly, keeping only about 10–50% of the original grid points for 23 especially important nuclides.
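
The thinning idea can be illustrated with a much simpler stand-in. The sketch below is not the authors' machine-learning method and does not touch OpenMC's HDF5 files. It assumes a plain greedy rule: drop an energy point whenever straight-line interpolation between the kept neighbours reproduces the tabulated value within a relative tolerance. The function name `thin_grid`, the tolerance, and the synthetic single-resonance cross section are all illustrative assumptions.

```python
import numpy as np

def thin_grid(energy, xs, rel_tol=1e-3):
    """Return indices of grid points to keep (hypothetical helper).

    A point is dropped only if linear interpolation between the kept
    points on either side reproduces it within rel_tol.
    Assumes at least two points and strictly positive xs values.
    """
    keep = [0]          # always keep the first point
    last = 0            # index of the most recently kept point
    for i in range(2, len(energy)):
        # Points strictly between the last kept point and candidate i.
        inner = slice(last + 1, i)
        interp = np.interp(energy[inner],
                           [energy[last], energy[i]],
                           [xs[last], xs[i]])
        rel_err = np.abs(interp - xs[inner]) / np.abs(xs[inner])
        if np.any(rel_err > rel_tol):
            # Skipping as far as i would lose accuracy, so keep point i - 1.
            keep.append(i - 1)
            last = i - 1
    keep.append(len(energy) - 1)  # always keep the last point
    return np.array(keep)

# Synthetic data (NOT real nuclear data): smooth 1/sqrt(E) background
# plus one narrow resonance-like peak.
energy = np.logspace(-2, 3, 5000)
e0, width = 6.67, 0.05
peak = 5000.0 * (width / 2) ** 2 / ((energy - e0) ** 2 + (width / 2) ** 2)
xs = 10.0 / np.sqrt(energy) + peak

idx = thin_grid(energy, xs)
rebuilt = np.interp(energy, energy[idx], xs[idx])
max_rel_err = np.max(np.abs(rebuilt - xs) / xs)
print(f"kept {len(idx)} of {len(energy)} points, max rel. error {max_rel_err:.2e}")
```

In this toy case the rule keeps points densely around the peak, where the curve bends sharply, and sparsely in the smooth background, which is the qualitative behaviour the paragraph above describes.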

Testing the idea on full reactor cores

To see if this leaner data still produces trustworthy results in realistic settings, the team runs year‑long simulations of two large pressurized‑water reactors: a European Pressurized Reactor (EPR) and a VVER‑1000, using the open‑source Monte Carlo code OpenMC. For each core, they perform two otherwise identical campaigns: one with the full nuclear data library and one with the machine‑thinned version. All geometry, operating conditions, and numerical settings are held fixed; only the data tables behind the physics differ. They disable other acceleration features inside OpenMC so that any change in speed or memory can be traced directly to the reduced data, not to changes in algorithms or settings.

Speed gains with tight error bounds

The payoff is substantial. For the EPR case, total wall‑clock time drops by about 18%, and for the VVER‑1000 the runtime shrinks by roughly 43%. Memory use changes more modestly: peak usage falls by about 4% in the EPR and rises by about 5% in the VVER‑1000, reflecting differences in how much time each model spends looking up nuclear data versus tracking particle paths through geometry. Crucially, the main reactor‑level measures remain very close to the originals. Over a full year in the VVER‑1000, the effective multiplication factor (essentially, the ratio of the number of neutrons in one generation to the number in the previous one, which indicates whether the chain reaction is growing, steady, or dying out) never deviates by more than about 100 parts per million, and typically by only a few tens of parts per million. For key reaction channels such as fission in uranium‑235 and uranium‑238 and neutron capture in xenon‑135 and samarium‑149, average differences stay well below one‑tenth of a percent.
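
The percentages and parts-per-million figures above come down to simple ratios. The sketch below shows one way to compute them; the helper names and all input numbers are made up for illustration and are not taken from the paper. It also assumes that "parts per million" means the relative difference in the multiplication factor, which the summary does not spell out.

```python
def percent_change(reference, reduced):
    """Signed change of the reduced-data run relative to the reference, in percent."""
    return 100.0 * (reduced - reference) / reference

def keff_diff_ppm(k_ref, k_red):
    """Relative difference in the multiplication factor, in parts per million.

    Assumption: relative (not absolute) difference is intended.
    """
    return 1e6 * abs(k_red - k_ref) / k_ref

# Hypothetical inputs: wall-clock hours and multiplication factors.
runtime_change = percent_change(reference=100.0, reduced=57.0)
keff_change = keff_diff_ppm(k_ref=1.00000, k_red=1.00005)

print(f"runtime change: {runtime_change:.1f} %")
print(f"k-eff difference: {keff_change:.1f} ppm")
```

A negative percentage means the reduced-data run was cheaper than the reference, and a positive one means it cost more, as with the VVER‑1000 memory figure.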

Fuel evolution and poisons stay on track

Because long‑term reactor behavior depends not just on instantaneous reactions but on how fuel and fission products build up and burn away, the authors also track the changing inventories of important isotopes. They examine the main uranium isotopes, a family of plutonium isotopes bred from uranium‑238, and strong “poison” nuclides that soak up neutrons, especially xenon‑135 and samarium‑149. Even after a full year, the differences in these inventories between the full and reduced data cases are tiny: on the order of a few hundredths of a percent for xenon and samarium, and generally below a tenth of a percent for the plutonium species. Uranium‑235 and uranium‑238, which dominate the core’s energy production and neutronic balance, are reproduced to well within one hundredth of a percent. Where relative errors briefly exceed one percent for some plutonium isotopes, this happens early in the cycle, when their absolute amounts are still extremely small, so the practical effect on reactor behavior is negligible.
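
The last point, that a large relative error can be harmless when the underlying amount is tiny, is easy to see with numbers. The sketch below uses invented masses (not values from the paper) and a hypothetical helper `inventory_difference` to compare an early-cycle snapshot with an end-of-year one.

```python
def inventory_difference(ref_mass, red_mass):
    """Return (absolute difference in kg, relative difference in percent)."""
    abs_diff = red_mass - ref_mass
    rel_pct = 100.0 * abs_diff / ref_mass
    return abs_diff, rel_pct

# Hypothetical isotope masses in kg: (full-data run, reduced-data run).
snapshots = {
    "early cycle": (1.00e-6, 1.02e-6),   # trace amount, large relative gap
    "end of year": (10.000, 10.005),     # bulk amount, small relative gap
}

for label, (ref, red) in snapshots.items():
    abs_diff, rel_pct = inventory_difference(ref, red)
    print(f"{label}: abs = {abs_diff:.3e} kg, rel = {rel_pct:.3f} %")
```

With these illustrative numbers the early-cycle snapshot has the larger relative difference but by far the smaller absolute one, which is why such early spikes matter little for the core as a whole.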

What this means for future reactor modeling

For non‑specialists, the essential message is that a carefully trained machine‑learning procedure can make the nuclear “lookup tables” inside advanced reactor simulations dramatically smaller and faster to use, while keeping the simulated reactor’s behavior almost indistinguishable from the traditional approach. The study shows this for two industrial‑scale reactor cores over a full year of operation, with error margins that are small compared with other typical uncertainties in reactor analysis. The authors emphasize that their conclusions currently apply to steady‑state pressurized‑water reactors using a specific data library and code settings, and that more work is needed to test other reactor types and transient conditions. Still, the results suggest a promising path toward faster, more efficient high‑fidelity nuclear simulations, enabling more design studies and safety analyses to be performed with limited computing resources.

Citation: Hashemi, A., Macián-Juan, R. & Ohlerich, M. Evaluating machine learned nuclear data precision in full core nuclear reactor Monte Carlo neutronics and computational efficiency analyses. Sci Rep 16, 1314 (2026). https://doi.org/10.1038/s41598-026-35227-9

Keywords: nuclear reactor simulation, machine learning, Monte Carlo neutronics, nuclear data libraries, pressurized water reactors