Clear Sky Science

Attention-enhanced MobileNetV2 models for robust forest fire detection and classification

Why Faster Fire Alerts Matter

Across the globe, wildfires are becoming hotter, larger, and more expensive to fight. Minutes can make the difference between a small, containable blaze and a regional disaster that damages ecosystems, fills cities with smoke, and costs billions of dollars. This study explores how compact artificial intelligence (AI) models can spot the very first signs of forest fires in ordinary images and video, fast enough to run on drones, security cameras, and low-power sensors scattered through vulnerable landscapes.

Watching the Woods with Smart Cameras

Traditional fire lookout towers and human operators scanning live video feeds struggle to keep up with vast forested areas, especially at night or in smoky, hazy conditions. The authors tackle this problem by turning forest fire detection into a simple yes-or-no question: does this image contain fire or not? They assemble a balanced collection of 5,121 pictures showing both fire and non-fire scenes, drawn from forests, cities, and industrial sites, and captured in daylight, at night, and through smoke or fog. By carefully balancing the dataset and checking labels by hand, they aim to teach AI systems what real flames look like in the wild, not just in ideal lab examples.

Lean AI Built for the Field

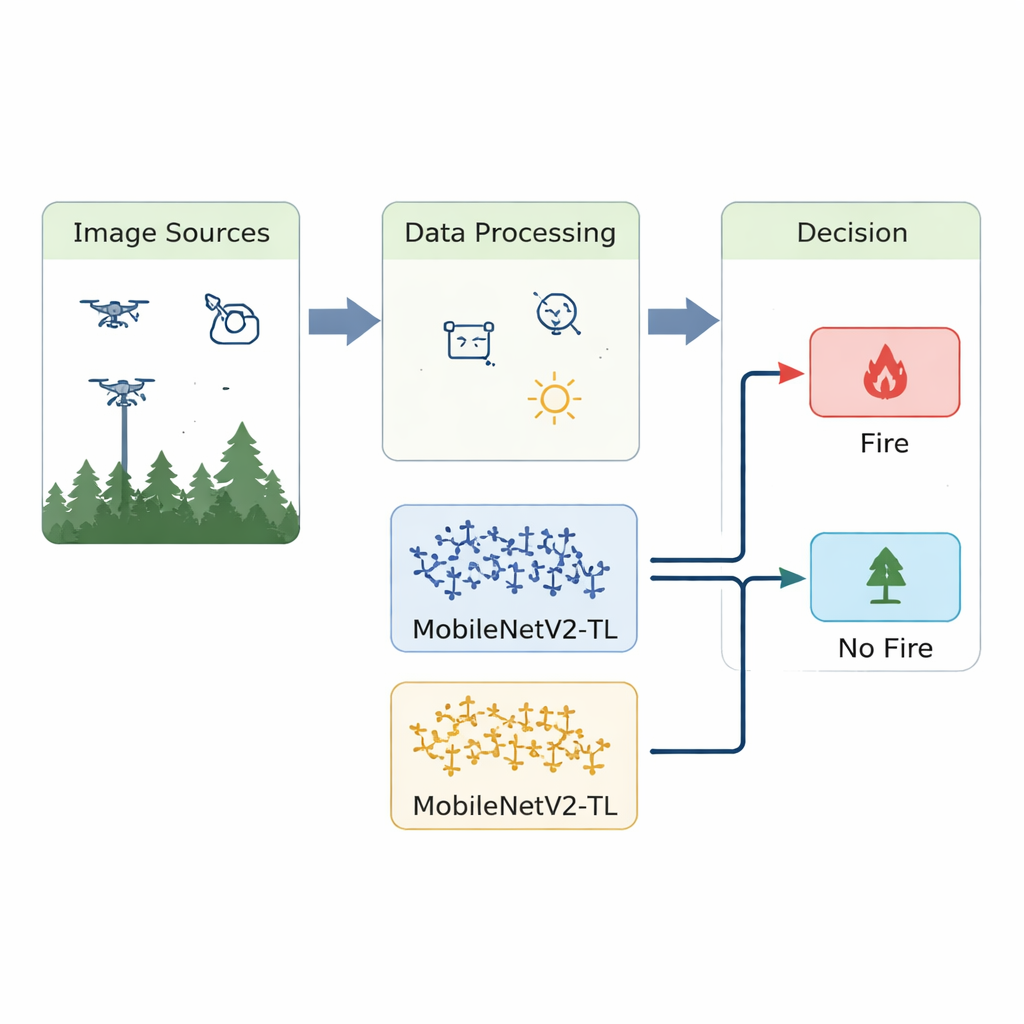

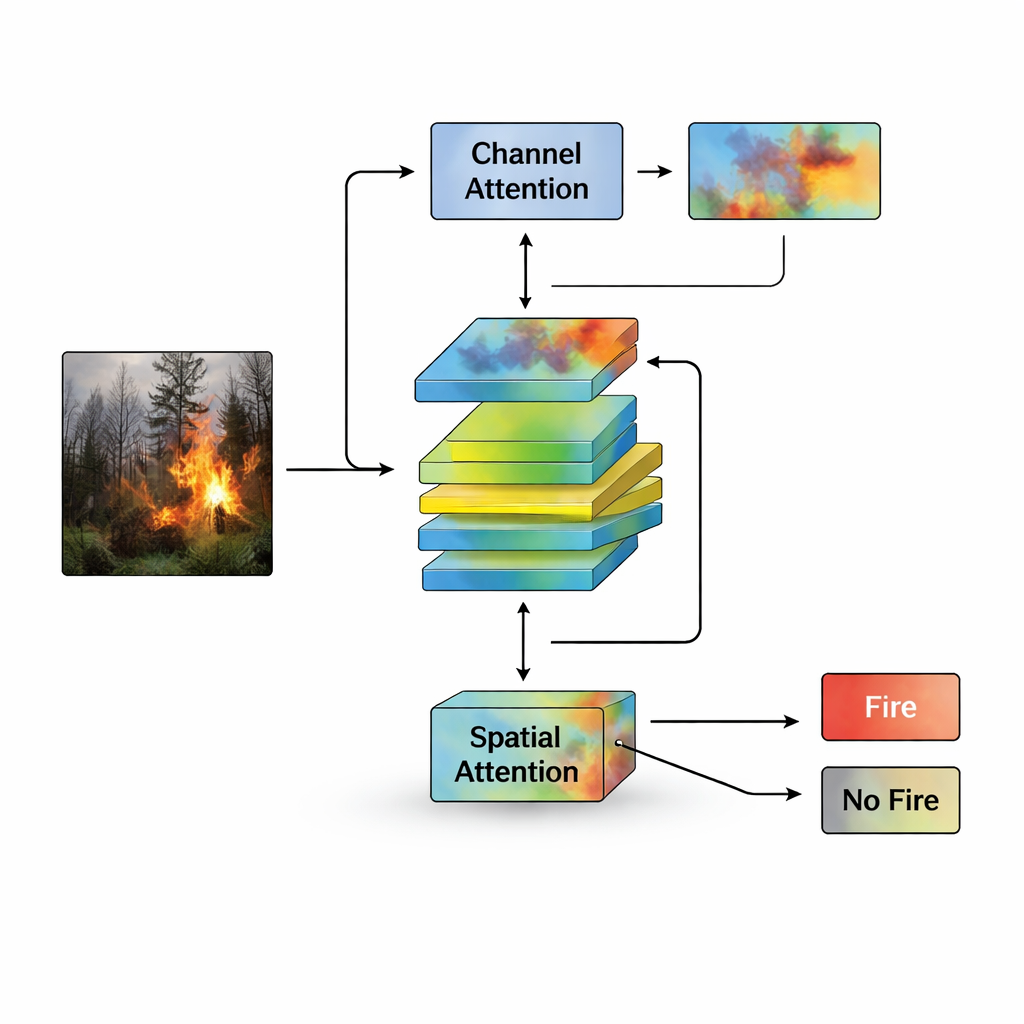

Many powerful image-recognition systems are too heavy to run on the small computers inside drones or low-cost surveillance cameras. To avoid that problem, the study builds on MobileNetV2, a family of neural networks designed to be fast and frugal with memory. The first model, called Att-MobileNetV2, adds an "attention" mechanism that helps the network concentrate on telltale flame colors, textures, and edges while ignoring clutter such as sunlit clouds or bright building lights. The second model, MobileNetV2-TL, leans on transfer learning: it reuses visual skills learned from millions of general photos and trains only a small, task-specific head for fire versus non-fire, keeping processing time and energy use low.
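The summary does not say which kind of attention Att-MobileNetV2 uses, so the following is only an illustrative sketch of one common form, channel gating, in which each feature channel is scaled by a learned weight between 0 and 1. The function name `channel_attention`, the single linear layer, and the toy weights are all assumptions made for illustration, not the authors' design.

```python
import math


def sigmoid(x):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def channel_attention(feature_map, weights, biases):
    """Rescale each channel of a feature map by a gate in (0, 1).

    feature_map: list of C channels, each a 2D list of floats (H x W).
    weights:     C x C matrix (hypothetical learned parameters).
    biases:      list of C floats (hypothetical learned parameters).
    Returns (scaled_feature_map, gates).
    """
    # Squeeze: summarize each channel by its global average.
    pooled = [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]
    # Excite: one linear layer followed by a sigmoid yields one gate per channel.
    gates = [
        sigmoid(sum(w * p for w, p in zip(w_row, pooled)) + b)
        for w_row, b in zip(weights, biases)
    ]
    # Scale: channels with gates near 1 pass through, gates near 0 are muted.
    scaled = [
        [[gate * value for value in row] for row in channel]
        for channel, gate in zip(feature_map, gates)
    ]
    return scaled, gates


# Toy example: channel 0 stands in for "flame-like" features, channel 1 for clutter.
feature_map = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[2.0, 2.0], [2.0, 2.0]],
]
weights = [[4.0, 0.0], [0.0, -4.0]]  # made-up values, not trained
biases = [0.0, 0.0]

scaled, gates = channel_attention(feature_map, weights, biases)
print("gates:", gates)
```

With these made-up weights the first channel is kept almost unchanged and the second is nearly switched off, which is the intuition behind "concentrating" on some features while ignoring others. In a real network the weights would be learned during training.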

Training for Tough Real-World Conditions

To mimic the messy conditions of real fire monitoring, the researchers do more than just collect varied images. They also apply controlled tweaks to each training picture—such as small rotations, flips, and changes in brightness or contrast—to simulate different camera angles and lighting. Both models are then trained and evaluated under the same rules, using standard measures like accuracy, precision, and recall, and by checking how often they miss fires or raise false alarms. Att-MobileNetV2 reaches an accuracy of about 99.6%, while MobileNetV2-TL reaches about 98.4%. Crucially, these results are achieved with only a few million parameters and a fraction of a billion operations per image, allowing decisions in just 10–12 milliseconds on a single graphics processor.
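For readers who want to see how these measures relate, here is a minimal sketch that computes accuracy, precision, recall, the missed-fire rate, and the false-alarm rate from a list of true labels and predictions. The function name `binary_metrics` and the toy labels are illustrative assumptions; the numbers are not the study's results.

```python
def binary_metrics(y_true, y_pred):
    """Compute standard binary metrics, treating label 1 as 'fire'."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # fires caught
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # correct "all clear"
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed fires

    def ratio(numerator, denominator):
        # Return 0.0 instead of failing when a class is absent.
        return numerator / denominator if denominator else 0.0

    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "miss_rate": ratio(fn, tp + fn),
        "false_alarm_rate": ratio(fp, fp + tn),
    }


# Toy data: 4 fire images and 4 non-fire images, one mistake of each kind.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 1]

metrics = binary_metrics(y_true, y_pred)
# Accuracy, precision and recall are all 0.75 for this toy data.
print(metrics)
```

Recall and the miss rate always sum to 1, which is why a model with high recall is the one that rarely overlooks a fire.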

Outperforming Larger Systems with Less

The two compact models are compared against both classic machine-learning methods and more complex deep networks. Under identical training and testing conditions, Att-MobileNetV2 delivers the best overall balance of correct fire detections and correct "all clear" decisions, while MobileNetV2-TL achieves particularly high recall, meaning it rarely overlooks actual fires, an important property for early-warning systems. An ensemble that combines both models performs slightly better still, suggesting that the ways they "see" a scene are complementary. Tests on an independent public dataset show that the models maintain strong performance on new imagery, indicating that they generalize beyond the specific pictures they were trained on.
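The summary does not describe how the ensemble merges the two models. One simple and widely used option is soft voting, where the fire probabilities from both models are averaged before a threshold is applied. The sketch below assumes that scheme; the function name `soft_vote`, the 0.5 threshold, and the probabilities are hypothetical.

```python
def soft_vote(probs_a, probs_b, threshold=0.5):
    """Average two models' fire probabilities and threshold the result.

    probs_a, probs_b: per-image probabilities of 'fire' from each model.
    Returns (averaged_probabilities, labels) where label 1 means 'fire'.
    """
    if len(probs_a) != len(probs_b):
        raise ValueError("both models must score the same images")

    averaged = [(a + b) / 2 for a, b in zip(probs_a, probs_b)]
    labels = [1 if p >= threshold else 0 for p in averaged]
    return averaged, labels


# Made-up scores for three images from the two models.
att_probs = [0.9, 0.4, 0.2]  # e.g. an attention-based model
tl_probs = [0.8, 0.7, 0.1]   # e.g. a transfer-learning model

averaged, labels = soft_vote(att_probs, tl_probs)
print(labels)  # [1, 1, 0]
```

On the second image the first model alone would have said "no fire" at a 0.5 threshold, but the second model's higher score pulls the average above it. That is one way complementary models can cover each other's blind spots.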

What This Means for Future Fire Safety

In plain terms, this work shows that small, efficient AI models can spot forest fires early and reliably, even when running on modest hardware mounted on drones, towers, or roadside cameras. By using attention to focus on the right parts of an image and transfer learning to reuse prior visual knowledge, the proposed systems achieve accuracy that rivals or surpasses much heavier models. While challenges remain in the thickest fog, the approach points toward networks of smart, affordable sensors that could raise alarms sooner, helping firefighters respond faster and potentially preventing small sparks from becoming catastrophic wildfires.

Citation: Ul Haq, I., Husnain, G., Iqbal, A. et al. Attention-enhanced MobileNetV2 models for robust forest fire detection and classification. Sci Rep 16, 4805 (2026). https://doi.org/10.1038/s41598-026-35207-z

Keywords: forest fire detection, wildfire monitoring, deep learning, edge AI, computer vision