Clear Sky Science

Efficient detection of AI-generated scientific abstracts with a lightweight transformer

Why spotting AI-written science matters

As artificial intelligence becomes skilled at writing, it can now draft scientific summaries that look almost indistinguishable from those written by humans. That raises hard questions: How can journals, universities, and readers be sure a research abstract truly reflects a scientist’s work and not a machine’s invention? This paper tackles that problem by building a fast, compact tool that can flag AI-written scientific abstracts with very high reliability, offering a practical defense for academic integrity.

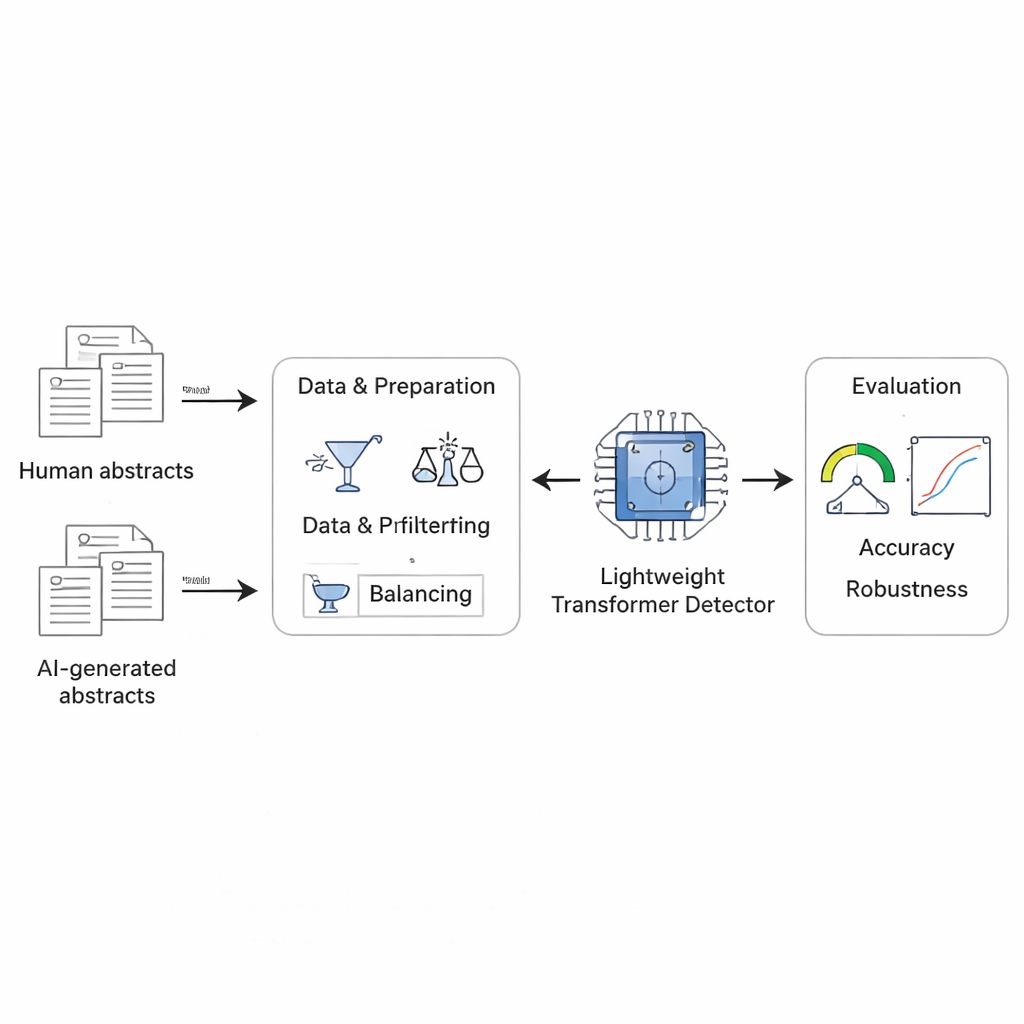

Building a test bed of real and synthetic abstracts

To measure and improve AI-text detection, the authors first needed trustworthy data. They collected 5,000 scientific abstracts from the online preprint server arXiv, covering five areas: computer vision, signal processing, quantitative biology, physics, and other computer science topics. For every human-written abstract, they used a large language model to generate an AI version from the paper’s title. They then screened the collection for near-duplicate texts and stripped obvious clues such as web addresses or code snippets. They also ensured that AI and human texts had similar lengths, so the detector could not simply rely on crude statistics like word count.
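The cleaning steps described above can be sketched in a few lines of Python. This is only an illustration, not the authors' pipeline: the function names, the regular expressions, the 0.9 similarity cutoff, and the 20% length tolerance are all assumptions chosen for the example.

```python
import re
from difflib import SequenceMatcher

# Hypothetical patterns for "obvious clues": web addresses and inline code spans.
URL_RE = re.compile(r"(?:https?://|www\.)\S+")
CODE_RE = re.compile(r"`[^`]*`")


def strip_clues(text: str) -> str:
    """Remove URLs and backtick-quoted code, then collapse whitespace."""
    text = URL_RE.sub(" ", text)
    text = CODE_RE.sub(" ", text)
    return " ".join(text.split())


def is_near_duplicate(a: str, b: str, threshold: float = 0.9) -> bool:
    """Flag a pair whose character-level similarity reaches the (assumed) cutoff."""
    matcher = SequenceMatcher(None, a.lower(), b.lower(), autojunk=False)
    return matcher.ratio() >= threshold


def lengths_match(human: str, ai: str, tolerance: float = 0.2) -> bool:
    """True when the AI word count is within `tolerance` of the human word count."""
    n_human, n_ai = len(human.split()), len(ai.split())
    return abs(n_human - n_ai) <= tolerance * max(n_human, 1)
```

A pair would be kept only if both texts survive `strip_clues`, are not near-duplicates of anything already in the set, and pass `lengths_match`. Pairwise comparison with `SequenceMatcher` is slow for thousands of texts, so a real pipeline would likely use a hashing-based method instead.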

A compact model tuned for the real world

Instead of using a huge and costly AI model, the researchers chose a smaller system known as DistilBERT, a streamlined version of the popular BERT language model. They fine-tuned it to decide, for each abstract, whether it was written by a person or generated by AI. The model reads up to 256 tokens (on the order of 200 English words, about one long paragraph) and outputs a score between zero and one, interpreted as the probability that the text is machine-written. Training and evaluation followed a strict protocol: the data was split into training, validation, and test sets with no overlap, and the team reported not only accuracy but also how many AI texts the model catches when the allowed false-alarm rate is kept very low. That regime matters because every false alarm means wrongly accusing a human author of using AI.
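To make the "no overlap" requirement concrete, here is a minimal sketch of a disjoint split. The summary does not give the split proportions or say how the split was keyed, so the 80/10/10 ratio, the fixed seed, and the idea of splitting on paper identifiers (so that a human abstract and its AI counterpart land in the same subset) are assumptions.

```python
import random


def split_disjoint(paper_ids, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle unique paper ids and cut them into train/val/test with no overlap."""
    unique = sorted(set(paper_ids))      # drop repeats so no id can appear twice
    rng = random.Random(seed)            # fixed seed keeps the split reproducible
    rng.shuffle(unique)
    n_val = round(len(unique) * val_frac)
    n_test = round(len(unique) * test_frac)
    test = unique[:n_test]
    val = unique[n_test:n_test + n_val]
    train = unique[n_test + n_val:]
    return train, val, test


train_ids, val_ids, test_ids = split_disjoint(range(1000))
```

Because each id is assigned to exactly one slice of a single shuffled list, the three subsets cannot share a paper, which is what prevents a model from being tested on text it effectively saw during training.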

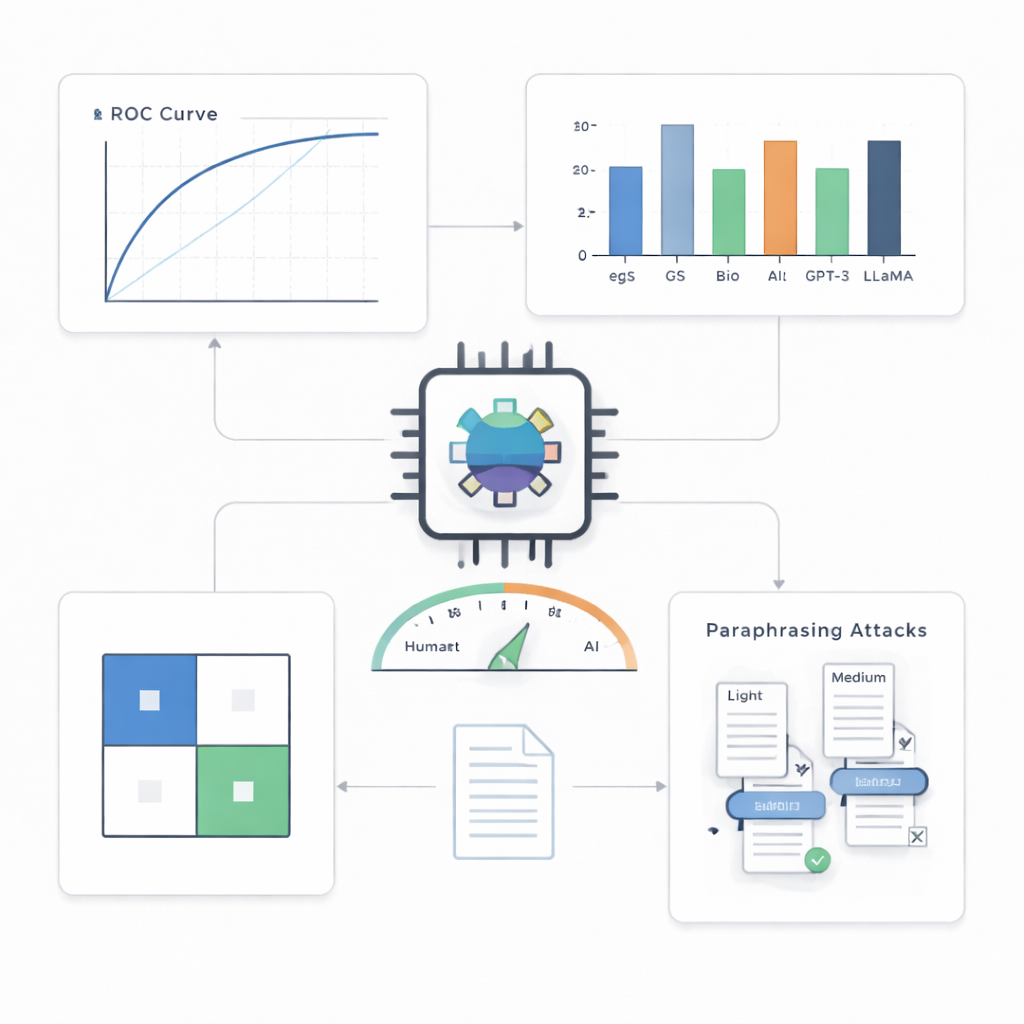

How well the detector performs

On abstracts from computer vision, the main testbed, the detector was remarkably accurate. It correctly labeled 499 of 500 AI-written texts and 495 of 500 human texts, for an overall accuracy of 99.4% (994 of 1,000) and an almost perfect score on a standard performance curve. When the authors forced the system to make at most one false accusation per hundred human-written texts, it still caught about 90% of AI texts; with a slightly higher tolerance of five false alarms per hundred, it caught about 97%. Compared with a range of alternatives, including simpler statistical tools and other transformer models, the compact detector consistently came out on top, especially in more demanding scenarios.
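The two kinds of numbers in this section can be reproduced with a short sketch. The accuracy calculation uses the counts reported above; the `tpr_at_fpr` helper is a generic way to compute "detection rate at a capped false-alarm rate" and is not taken from the paper. The toy scores at the end are made up purely to show the function in use.

```python
def accuracy(tp: int, fn: int, tn: int, fp: int) -> float:
    """Fraction of all texts labeled correctly."""
    return (tp + tn) / (tp + fn + tn + fp)


def tpr_at_fpr(scores, labels, max_fpr: float) -> float:
    """Best detection rate achievable while keeping the false-alarm rate <= max_fpr.

    scores: probability-of-AI for each text; labels: 1 = AI, 0 = human.
    A text is flagged as AI when its score is >= the chosen threshold.
    """
    ai = [s for s, y in zip(scores, labels) if y == 1]
    human = [s for s, y in zip(scores, labels) if y == 0]
    best = 0.0
    # Try every observed score as a threshold, plus infinity (flag nothing).
    for t in sorted(set(scores)) + [float("inf")]:
        fpr = sum(s >= t for s in human) / len(human)
        if fpr <= max_fpr:
            best = max(best, sum(s >= t for s in ai) / len(ai))
    return best


# Reported counts: 499 of 500 AI texts and 495 of 500 human texts correct.
acc = accuracy(tp=499, fn=1, tn=495, fp=5)   # 994 / 1000

# Made-up toy scores, only to illustrate the call.
toy_scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2, 0.1, 0.05]
toy_labels = [1, 1, 1, 1, 0, 0, 0, 0]
strict = tpr_at_fpr(toy_scores, toy_labels, max_fpr=0.0)
loose = tpr_at_fpr(toy_scores, toy_labels, max_fpr=0.25)
```

Tightening the false-alarm cap pushes the threshold up, so fewer AI texts are flagged. This is why the detection rate reported above falls from about 97% to about 90% when the tolerance drops from five false alarms per hundred to one.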

Beyond one field, one model, and simple tricks

A key question is whether such a detector can handle writing styles and AI systems it has never seen. The authors tested it on abstracts from other scientific fields and on texts written by several different advanced language models. Across domains, performance stayed strong, with only modest drops, suggesting that the system captures general patterns of AI writing rather than quirks of one subject area. Against unseen AI models, it also performed well, though not as well as in its home setting. The toughest challenge came from paraphrasing attacks: when another AI rewrote machine-generated abstracts to sound different while preserving their meaning, detection became noticeably harder. Under medium-strength rewriting, the share of AI texts that slipped through undetected rose to nearly 30%, revealing that even sophisticated detectors can be fooled by deliberate obfuscation.

What this means for science and its safeguards

The study shows that, for now, AI-written scientific abstracts still leave subtle traces that a well-designed model can pick up, even when that model is small enough to run on modest hardware. This makes it feasible for publishers, conferences, and universities to screen large volumes of submissions without enormous computing costs. At the same time, the vulnerability to paraphrasing underscores that such tools are not a silver bullet. The authors argue that AI-text detection should be combined with other safeguards—such as editorial judgment, plagiarism checks, and transparency requirements—to protect the trustworthiness of scientific communication as AI systems continue to improve.

Citation: Zhang, C., Zhou, W. Efficient detection of AI-generated scientific abstracts with a lightweight transformer. Sci Rep 16, 4975 (2026). https://doi.org/10.1038/s41598-026-35203-3

Keywords: AI text detection, scientific abstracts, academic integrity, large language models, machine-generated text