Clear Sky Science

Tracking evolving communities in fake news cascades using temporal graphs

Why online rumors spread in tight-knit groups

When false stories race across social media, they rarely travel alone. Instead, they are pushed, shared, and repeated by clusters of users who move in concert. This study asks a simple but pressing question: can we track how these online groups form and change over time, and use that knowledge to slow the spread of fake news—without even reading the content of the posts?

Following the paths of misleading stories

The researchers focus on “information cascades” on platforms like Twitter—chains of retweets and replies that unfold after a story breaks. Rather than treating users as isolated individuals, they look at how people cluster into communities as a rumor spreads. These communities might be devoted promoters of the false story, skeptical commenters, or ordinary onlookers who get swept up. The challenge is that online crowds are constantly changing: people join and leave, conversations split and merge, and the structure of the network shifts from hour to hour.

A step-by-step framework for tracking communities

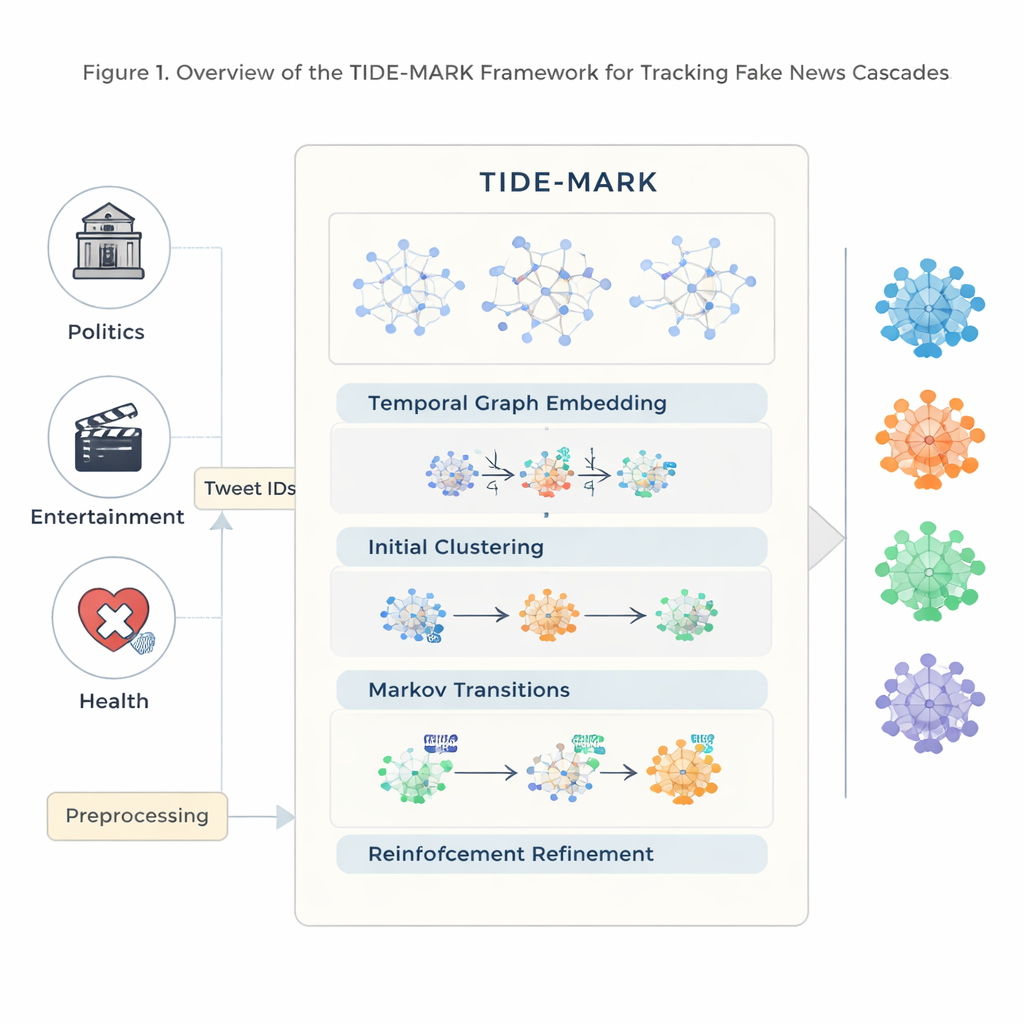

To tackle this, the authors introduce TIDE-MARK, a multi-stage framework built to follow evolving user communities through time. First, they rebuild each cascade from raw tweet IDs, turning it into a series of hourly snapshots where nodes are users and links represent retweets or replies. They enrich each user with simple profile information and a numerical summary of the tweets they share. Next, a time-aware neural network learns how each user’s connections and behavior change across snapshots, producing a compact “fingerprint” for every user at every hour.
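Here is a minimal Python sketch of the first step: turning a cascade into hourly snapshots. It assumes the retweets and replies have already been rehydrated into simple (source user, target user, timestamp in seconds, kind) records. The `Interaction` type and the `hourly_snapshots` function are hypothetical names and are not part of TIDE-MARK. The sketch builds only the link structure. It leaves out the profile features, the tweet summaries, and the neural network that learns user fingerprints.

```python
from collections import defaultdict
from typing import FrozenSet, List, NamedTuple, Set


class Interaction(NamedTuple):
    """One retweet or reply: user `src` reposted or answered user `dst` at `ts` seconds."""
    src: str
    dst: str
    ts: float
    kind: str  # "retweet" or "reply" (kept for reference; not used below)


def hourly_snapshots(interactions: List[Interaction],
                     window: float = 3600.0) -> List[Set[FrozenSet[str]]]:
    """Bucket interactions into consecutive windows counted from the first event.

    Element h of the result is the set of undirected user-user links seen during
    window h. Empty windows are kept so snapshot indices stay aligned in time.
    """
    if not interactions:
        return []
    start = min(i.ts for i in interactions)
    buckets = defaultdict(set)
    for i in interactions:
        if i.src == i.dst:
            continue  # a self-reply adds no link between two different users
        h = int((i.ts - start) // window)
        buckets[h].add(frozenset((i.src, i.dst)))
    if not buckets:
        return []
    return [buckets[h] for h in range(max(buckets) + 1)]


# Tiny example cascade: three events spread over roughly two hours.
events = [
    Interaction("alice", "bob", 0, "retweet"),
    Interaction("carol", "bob", 1800, "reply"),
    Interaction("dave", "alice", 7300, "retweet"),
]
snaps = hourly_snapshots(events)
print([len(s) for s in snaps])  # [2, 0, 1]
```

In this sketch, each snapshot holds only the links seen during that hour. The summary does not say whether the authors' snapshots are per-hour or cumulative. Taking the running union of the earlier sets would produce the cumulative version.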

From blurry crowds to stable groups

Using these fingerprints, TIDE-MARK groups similar users into preliminary communities. It then models how communities change from one snapshot to the next, estimating how likely it is for members of one group to stay together, split, or join another group. Finally, a reinforcement-learning module cleans up the fuzzy borders between groups. It repeatedly tests small changes—moving individual users from one community to another—and keeps the changes that make groups more internally connected while remaining consistent with previous time steps. The result is a clearer, more stable picture of who is coordinating with whom as the cascade unfolds.
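The last two steps can be sketched in plain Python. The transition estimate counts where the members of each community at hour t-1 end up at hour t. For the clean-up step, this sketch deliberately swaps in a simple greedy local-move search in place of the paper's reinforcement-learning module. The search tries moving one user at a time into a neighboring community and keeps a move only if a score improves. The score is an assumption standing in for "more internally connected while remaining consistent with previous time steps": it is modularity plus a weighted bonus (`lam`) for keeping each user's previous label. The sketch also assumes that community IDs carry over from one hour to the next. All function names are hypothetical.

```python
from collections import Counter, defaultdict


def transition_probs(prev, cur):
    """Estimate P(community b at hour t | community a at hour t-1).

    `prev` and `cur` map user -> community ID; only users present at both hours count.
    """
    counts = defaultdict(Counter)
    for user, a in prev.items():
        if user in cur:
            counts[a][cur[user]] += 1
    return {a: {b: n / sum(c.values()) for b, n in c.items()} for a, c in counts.items()}


def modularity(edges, labels):
    """Newman modularity of a labeling on undirected edges (2-element frozensets, no self-loops)."""
    m = len(edges)
    if m == 0:
        return 0.0
    internal, degree_sum = Counter(), Counter()
    for e in edges:
        u, v = tuple(e)
        degree_sum[labels[u]] += 1
        degree_sum[labels[v]] += 1
        if labels[u] == labels[v]:
            internal[labels[u]] += 1
    return sum(internal[c] / m - (degree_sum[c] / (2 * m)) ** 2 for c in degree_sum)


def consistency(labels, prev):
    """Share of users seen in the previous hour who kept the same community ID."""
    shared = [u for u in labels if u in prev]
    if not shared:
        return 0.0
    return sum(labels[u] == prev[u] for u in shared) / len(shared)


def refine(edges, labels, prev, lam=0.3, max_rounds=10):
    """Greedy one-user-at-a-time moves that raise modularity + lam * consistency.

    A simple stand-in for the paper's reinforcement-learning refinement.
    """
    labels = dict(labels)
    neighbors = defaultdict(set)
    for e in edges:
        u, v = tuple(e)
        neighbors[u].add(v)
        neighbors[v].add(u)

    def score():
        return modularity(edges, labels) + lam * consistency(labels, prev)

    best = score()
    for _ in range(max_rounds):
        improved = False
        for u in sorted(labels):
            current = labels[u]
            # Only try communities that already contain one of u's neighbors.
            for c in sorted({labels[v] for v in neighbors[u]} - {current}):
                labels[u] = c
                s = score()
                if s > best + 1e-12:
                    best, current, improved = s, c, True  # keep the move
                else:
                    labels[u] = current  # undo the move
        if not improved:
            break
    return labels, best


# Toy hour: two triangles joined by the link c-d; user "f" starts in the wrong group.
edges = {frozenset(p) for p in [("a", "b"), ("a", "c"), ("b", "c"),
                                ("d", "e"), ("d", "f"), ("e", "f"), ("c", "d")]}
prev = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
noisy = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 0}
print(transition_probs(prev, noisy))
refined, total = refine(edges, noisy, prev)
print(refined)  # {'a': 0, 'b': 0, 'c': 0, 'd': 1, 'e': 1, 'f': 1}
```

The `lam` weight balances the two goals. At 0, the search chases modularity alone and may relabel users freely from hour to hour. A large value keeps each hour's groups close to the previous hour's groups.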

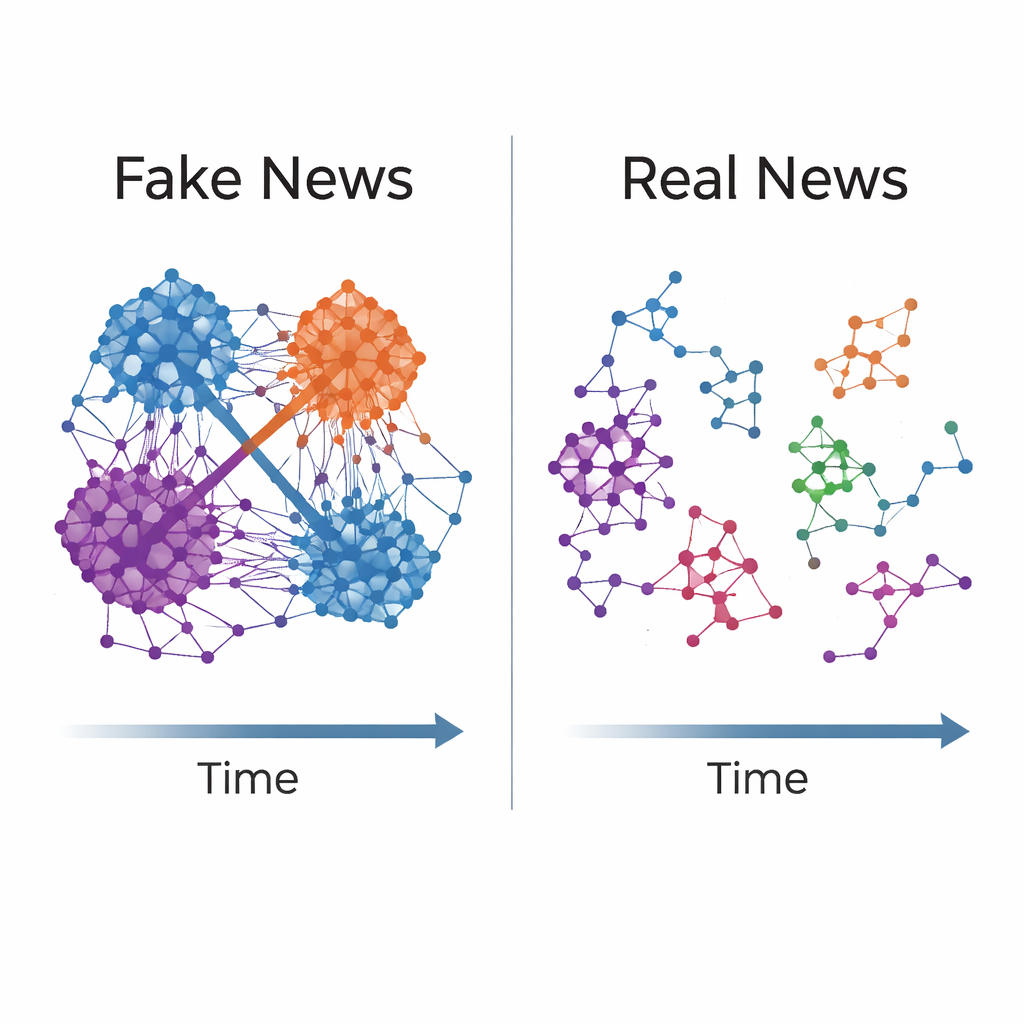

What fake and real news communities look like

The team applies TIDE-MARK to three large fake-news datasets spanning politics, celebrity gossip, and COVID-19 health information. Across all of them, they find the same pattern: false stories tend to flow through tighter, more persistent communities than true stories. These fake-news groups are more densely connected inside, more sharply separated from the rest of the network, and keep roughly the same membership over time. Real news, in contrast, spreads through looser, more scattered groups that reconfigure as discussions move on. Notably, the structural features TIDE-MARK extracts, which capture how cohesive and stable the communities are, are strong enough to help simple classifiers distinguish fake from real cascades, even without looking at the text.
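The three properties described above (dense insides, sharp boundaries, stable membership) map naturally onto standard graph measures. The sketch below uses three of them: internal edge density, conductance, and the Jaccard overlap of a community's members between consecutive hours. These are stand-ins chosen for illustration and are not necessarily the exact statistics TIDE-MARK reports. The function names are hypothetical.

```python
def internal_density(edges, members):
    """Share of possible member pairs that are actually linked (1.0 = fully connected)."""
    n = len(members)
    if n < 2:
        return 0.0
    inside = sum(1 for e in edges if e <= members)
    return inside / (n * (n - 1) / 2)


def conductance(edges, members):
    """Cut edges divided by the smaller side's volume; lower means a sharper boundary."""
    cut = vol_in = vol_out = 0
    for e in edges:
        k = len(e & members)  # how many endpoints lie inside the community (0, 1 or 2)
        vol_in += k
        vol_out += 2 - k
        cut += (k == 1)
    denom = min(vol_in, vol_out)
    return cut / denom if denom else 0.0


def jaccard(a, b):
    """Overlap of two member sets: 1.0 means identical membership."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def mean_persistence(memberships):
    """Average Jaccard overlap of one community's member set across consecutive hours."""
    if len(memberships) < 2:
        raise ValueError("need at least two snapshots")
    pairs = zip(memberships, memberships[1:])
    return sum(jaccard(a, b) for a, b in pairs) / (len(memberships) - 1)


# Toy snapshot: two triangles joined by the link c-d.
edges = {frozenset(p) for p in [("a", "b"), ("a", "c"), ("b", "c"),
                                ("d", "e"), ("d", "f"), ("e", "f"), ("c", "d")]}
group = {"a", "b", "c"}
features = [internal_density(edges, group),
            conductance(edges, group),
            mean_persistence([group, group, group | {"g"}])]
print([round(x, 3) for x in features])  # [1.0, 0.143, 0.875]
```

Averaging such numbers over a cascade's communities would give a small, content-free feature vector that an ordinary classifier could be trained on.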

Testing ways to disrupt harmful cascades

Because TIDE-MARK provides a community-level view, it can also be used to simulate interventions. The researchers test what happens if, early in a fake-news cascade, you remove just a few highly connected users from the most persistent community—an idealized stand-in for suspending accounts or limiting their reach. In simulations, this targeted removal sharply weakens the structure of the cascade and shrinks its largest connected cluster, suggesting that structure-aware, content-neutral strategies might meaningfully slow down the spread of misinformation.
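The intervention experiment can be imitated in a few lines. Rank the members of one community by how many links they have in the snapshot, delete the top k, and compare the size of the largest connected cluster before and after. This is a minimal sketch. It assumes the target community (the most persistent one, in the study's setup) has already been chosen, and it ranks users by plain link count as a stand-in for "highly connected". The function names are hypothetical.

```python
from collections import Counter, deque


def top_k_hubs(edges, community, k):
    """The k community members with the most links in the snapshot (ties broken by name)."""
    degree = Counter(u for e in edges for u in e)
    return sorted(community, key=lambda u: (-degree[u], u))[:k]


def largest_component_size(nodes, edges, removed=()):
    """Size of the biggest connected cluster after deleting the `removed` users."""
    alive = set(nodes) - set(removed)
    adj = {u: set() for u in alive}
    for e in edges:
        u, v = tuple(e)
        if u in alive and v in alive:
            adj[u].add(v)
            adj[v].add(u)
    seen, best = set(), 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:  # breadth-first search over one cluster
            u = queue.popleft()
            size += 1
            for v in adj[u]:
                if v not in seen:
                    seen.add(v)
                    queue.append(v)
        best = max(best, size)
    return best


# Toy snapshot: hub "h" (5 links) and hub "g" (4 links), joined to each other.
pairs = [("h", "u1"), ("h", "u2"), ("h", "u3"), ("h", "u4"), ("h", "g"),
         ("g", "v1"), ("g", "v2"), ("g", "v3")]
edges = {frozenset(p) for p in pairs}
nodes = set().union(*edges)
community = {"h", "g", "u1"}
hubs = top_k_hubs(edges, community, k=1)
print(hubs, largest_component_size(nodes, edges),
      largest_component_size(nodes, edges, removed=hubs))  # ['h'] 9 4
```

In this toy graph, removing a single hub cuts the largest cluster from 9 users to 4. Real cascades are far larger, and how much removal helps there is what the study's simulations measure.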

What this means for fighting misinformation

In everyday terms, this work shows that fake news often travels through durable “fan clubs” of coordinated users, while real news moves through more fluid crowds. TIDE-MARK offers a way to see and track these groups in real time, without reading or judging the content itself. That makes it a promising tool for platforms, researchers, and policymakers who want to identify risky patterns of coordination and design careful, targeted interventions—potentially defusing harmful cascades before they fully take off.

Citation: Ma, Y., Qu, D. & Wang, Y. Tracking evolving communities in fake news cascades using temporal graphs. Sci Rep 16, 4952 (2026). https://doi.org/10.1038/s41598-026-35175-4

Keywords: fake news, social networks, community detection, misinformation spread, social media moderation