Clear Sky Science

Evaluation of ChatGPT-4o and Gemini for gout management: a comparative analysis based on EULAR guidelines

Why smart chatbots and sore joints matter

Gout, a painful form of arthritis that often attacks the big toe, is becoming more common around the world. Doctors already have clear, science-based guidelines on how to diagnose and treat it, yet many patients still don’t get ideal care. At the same time, powerful artificial-intelligence chatbots like ChatGPT-4o and Gemini are starting to appear in clinics, raising a simple but crucial question: can these tools actually give safe, guideline‑consistent advice about gout, or might they mislead doctors and patients?

Checking how well the chatbots follow the rulebook

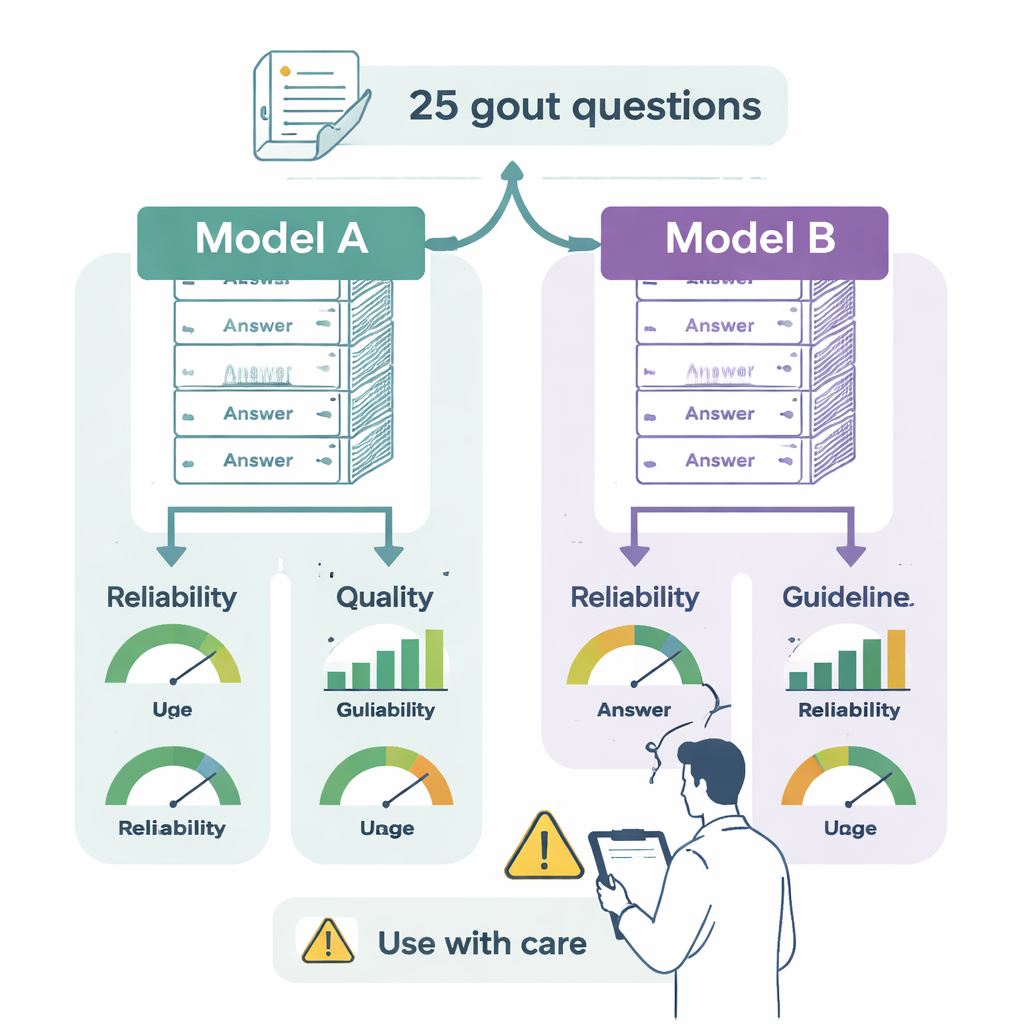

The researchers set out to test two leading language models—ChatGPT-4o and Gemini 2.0 Flash—against the official European (EULAR) guidelines for gout. Two specialists turned 25 key recommendations from the guidelines into doctor-style questions about real-world issues: how to diagnose gout, when to start urate-lowering drugs, how to manage flares, what targets to aim for in blood tests, and how lifestyle or other medicines should be adjusted. Both chatbots were asked the same questions in separate, clean sessions so that previous answers would not influence new ones.

How the answers were scored

Each answer was rated by two experienced gout clinicians, who did not know which model had produced which text. They scored three things. First, reliability: does the response seem balanced, objective, and trustworthy, or does it leave out key facts or overstate benefits? Second, quality: is the answer clear, well organized, and useful for a specialist making decisions? Third, guideline alignment: does it match what EULAR actually recommends, partly agree with some gaps, or directly contradict the rules? The team also checked how hard the answers were to read using standard readability tests that estimate what education level is needed to understand a text.

ChatGPT vs. Gemini: who did better?

Both chatbots produced generally sensible, clearly written answers, and both often reminded readers to consult a health professional. But important differences emerged. ChatGPT-4o fully matched the gout guidelines in 76% of cases and gave mostly correct but incomplete answers in another 20%, leaving a single answer (the remaining 4% of the 25 questions) that contained a clear medical error. Gemini was fully aligned in 48% of responses and partly correct but incomplete in 32%. More concerning, 12% of its answers mixed correct ideas with wrong information, and 8% flatly contradicted the guidelines. Examples included suggesting broad use of a powerful anti-inflammatory drug class (IL-1 inhibitors) where EULAR reserves them for select, hard-to-treat patients, and urging routine starts of urate-lowering drugs during an acute flare, an area where experts advise more caution.
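
To make these percentages concrete, the short Python sketch below converts them into numbers of answers, assuming each chatbot answered the same 25 questions described earlier. The category labels (`full`, `partial`, `error`, `mixed`, `contradicts`) are illustrative names chosen here, not the study's own terms, and ChatGPT-4o's single flawed answer is entered as 4% because 1 of 25 is 4%.

```python
# Assumption: each model answered the same 25 guideline-based questions.
TOTAL_QUESTIONS = 25

# Percentages as reported in the text. The label names are hypothetical;
# "error" stands for ChatGPT-4o's single answer with a clear medical error.
reported = {
    "ChatGPT-4o": {"full": 76, "partial": 20, "error": 4},
    "Gemini": {"full": 48, "partial": 32, "mixed": 12, "contradicts": 8},
}


def to_counts(percentages, total=TOTAL_QUESTIONS):
    """Convert whole-number percentages into numbers of answers."""
    return {label: round(pct * total / 100) for label, pct in percentages.items()}


counts = {model: to_counts(pcts) for model, pcts in reported.items()}

for model, by_label in counts.items():
    # Each model's counts should add up to the 25 questions asked.
    print(model, by_label, "total:", sum(by_label.values()))
```

Under that assumption, every reported percentage corresponds to a whole number of answers, and each model's categories add up to all 25 questions.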

Readable, but not easy reading

When it came to style, the two systems were surprisingly similar. On multiple reading scales, both produced text that required at least a college-level education to follow comfortably. That may be acceptable for specialist doctors but is far too complex for most patients. Neither model gave references or links to sources unless specifically asked, making it hard to verify where the information came from. The reviewers’ agreement with each other was rated as good to excellent, suggesting that the scoring was consistent and that the differences between the chatbots were real rather than a matter of opinion.
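
For readers curious how a readability score turns text into an education level, here is a minimal Python sketch of one widely used formula, the Flesch-Kincaid Grade Level. This summary does not name the specific tests the researchers used, so the choice of formula is an assumption made purely for illustration. The syllable counter is a rough vowel-group heuristic rather than a dictionary lookup, and both sample sentences are invented.

```python
import re


def count_syllables(word):
    """Rough heuristic: count runs of consecutive vowels, with a minimum of 1."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text):
    """Flesch-Kincaid Grade Level:
    0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59


# Invented examples: short plain sentences versus one dense clinical sentence.
simple = "Gout hurts. Rest the toe. Drink water."
dense = ("Urate-lowering pharmacotherapy initiation requires "
         "individualized comorbidity assessment.")

print("simple:", round(flesch_kincaid_grade(simple), 1))
print("dense:", round(flesch_kincaid_grade(dense), 1))
```

Longer sentences and longer words both push the score up, which is why dense clinical phrasing lands at a much higher grade level than short, plain sentences.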

What this means for people living with gout

Overall, the study suggests that advanced chatbots can be helpful assistants for doctors managing gout, but they are not ready to stand alone. ChatGPT-4o was more reliable, more complete, and more faithful to expert guidelines than Gemini, yet even its rare errors could matter when medications and safety are involved. Both tools spoke at a level too complex for most patients and lacked built‑in transparency about their sources. For now, the authors argue, AI should be seen as a promising support tool that can help clinicians and educators—but only when its advice is checked against up‑to‑date guidelines and expert judgment, especially in conditions like gout where small dosing details and timing decisions can make a big difference in pain, long‑term damage, and quality of life.

Citation: Meral, H.B., Kolak, E. Evaluation of ChatGPT-4o and Gemini for gout management: a comparative analysis based on EULAR guidelines. Sci Rep 16, 4831 (2026). https://doi.org/10.1038/s41598-026-35166-5

Keywords: gout, clinical guidelines, artificial intelligence, large language models, rheumatology