Clear Sky Science

A novel approach for dynamic task scheduling for IOT in fog-cloud environment

Why your smart devices need smarter helpers

From fitness trackers and home cameras to self-driving cars and factory robots, modern gadgets constantly stream data that must be processed in fractions of a second. Sending everything to distant cloud data centers is often too slow and wasteful. This paper presents a new way to decide, moment by moment, where all those tiny digital jobs should run so that systems stay fast, energy‑efficient, and affordable—even when thousands of devices are competing for attention.

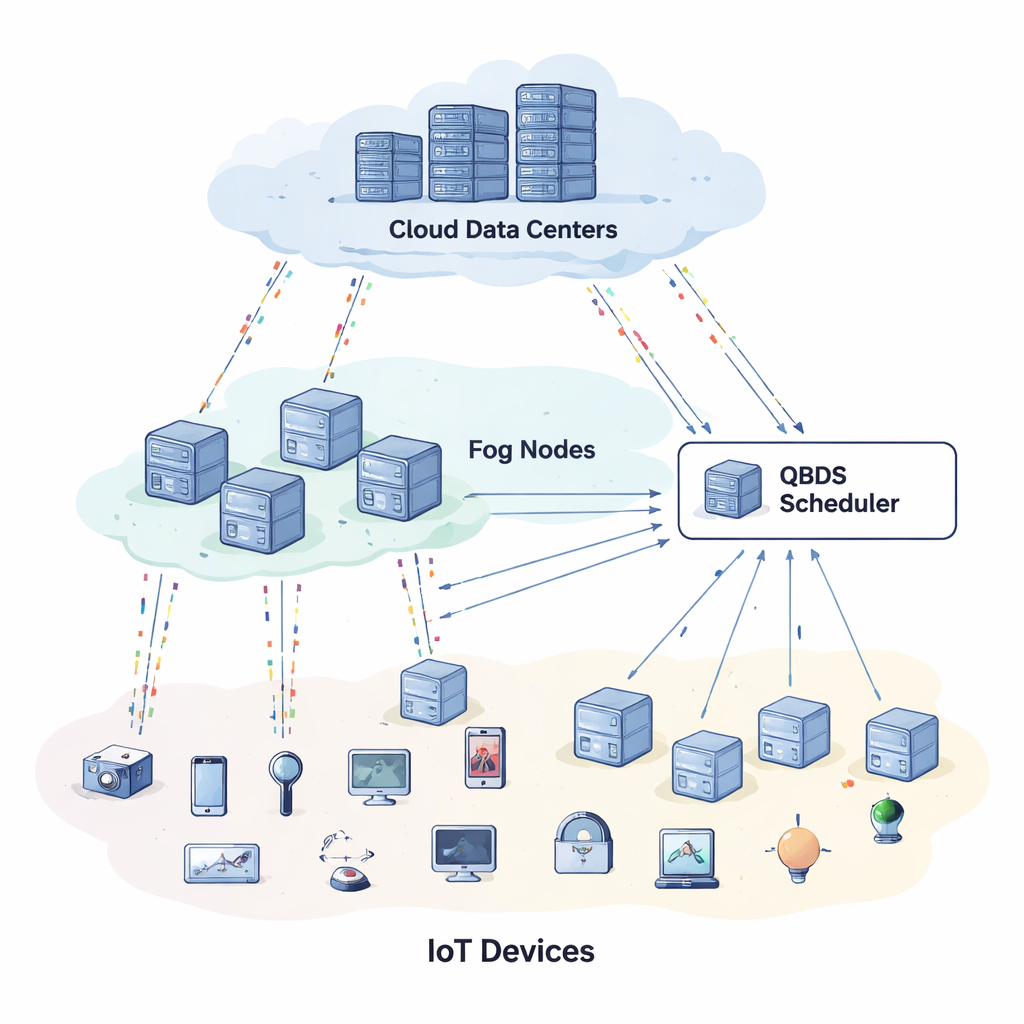

From the cloud to the nearby fog

Traditional cloud computing works well for storing photos or running big data analyses, but it struggles with life‑or‑death or split‑second scenarios, like remote surgery, smart traffic lights, or autonomous drones. The delay caused by sending data across the internet and waiting in queues can be unacceptable. To fix this, engineers introduced an extra middle layer called “fog” computing: small servers and gateways placed closer to where data is generated. In a three‑tier setup—devices, fog, and cloud—lightweight, urgent tasks should stay near the edge, while heavier, less time‑critical work can move to the cloud. The catch is that these layers contain a mix of machines with different speeds, memory sizes, network links, energy use, and prices, all changing over time. Efficiently deciding who does what, and when, becomes a hard puzzle.

A traffic controller for digital jobs

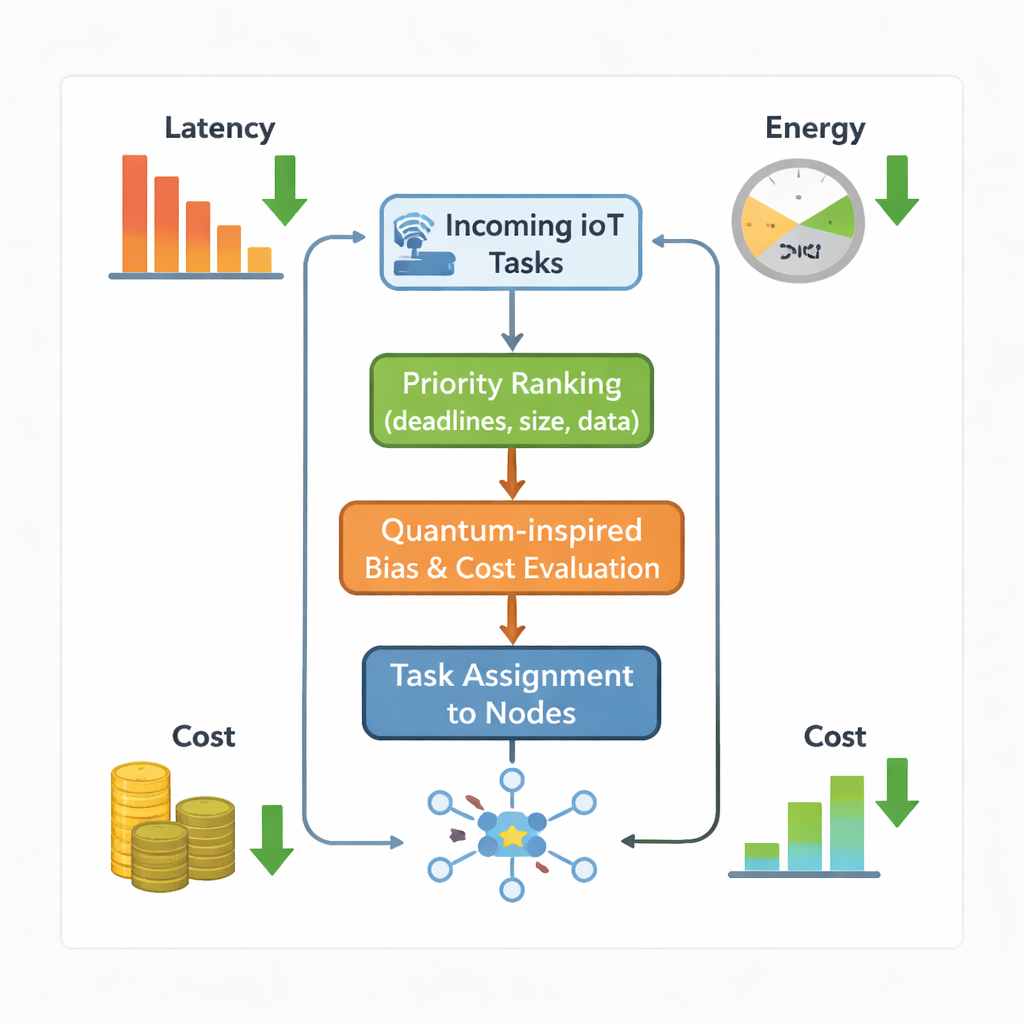

The authors propose a new traffic controller for this puzzle, called the Quantum‑inspired Biased Dynamic Scheduler (QBDS). Think of every message from a sensor or app as a task that must be assigned to some fog or cloud node. QBDS first ranks all waiting tasks according to how urgent and demanding they are—taking into account their deadlines, how long they will run, how much memory they need, and how much data must be moved. This prevents small but urgent tasks from being buried under large but less critical ones. For each possible match between a task and a machine, QBDS then estimates how long the task would take, how much energy the machine would burn, and how much the operator would have to pay in usage fees or penalties for missing deadlines. All of these ingredients are combined into one flexible score that system operators can tune depending on whether they care more about speed, cost, or energy savings.
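The two steps described above, ranking tasks and then scoring each task–machine match, can be illustrated with a small sketch. This is not the paper's formulation: the `Task` and `Node` fields, the `urgency` formula, the late penalty, and all numbers are assumptions chosen for illustration, and a real scheduler would normalize time, energy, and money before adding them together.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    deadline_s: float   # seconds allowed before the deadline (assumed > 0)
    length_mi: float    # amount of work, in millions of instructions
    memory_mb: float    # memory needed
    data_mb: float      # data that must be moved to the node

@dataclass
class Node:
    name: str
    mips: float             # millions of instructions per second
    bandwidth_mbps: float   # link speed in megabits per second
    power_w: float          # power draw while busy
    price_per_s: float      # usage fee per second

def urgency(task):
    """Hypothetical priority: total demand per second of available time."""
    demand = task.length_mi + task.memory_mb + task.data_mb
    return demand / task.deadline_s

def rank(tasks):
    """Most urgent first, so small tasks with tight deadlines are not buried."""
    return sorted(tasks, key=urgency, reverse=True)

def cost(task, node, w_time=1.0, w_energy=1.0, w_money=1.0, late_penalty=10.0):
    """Weighted sum of estimated time, energy, and money for one pairing."""
    transfer_s = task.data_mb * 8 / node.bandwidth_mbps   # MB -> megabits
    time_s = task.length_mi / node.mips + transfer_s
    energy_j = node.power_w * time_s
    money = node.price_per_s * time_s
    if time_s > task.deadline_s:        # assumed flat fee for a missed deadline
        money += late_penalty
    return w_time * time_s + w_energy * energy_j + w_money * money

# Illustrative workload and machines (made-up numbers).
alert = Task("brake-alert", deadline_s=0.5, length_mi=100, memory_mb=64, data_mb=1)
report = Task("daily-report", deadline_s=600, length_mi=5000, memory_mb=1024, data_mb=200)
fog = Node("fog-gateway", mips=1000, bandwidth_mbps=100, power_w=10, price_per_s=0.01)
cloud = Node("cloud-vm", mips=10000, bandwidth_mbps=10, power_w=100, price_per_s=0.05)

queue = rank([report, alert])
best = min([fog, cloud], key=lambda n: cost(queue[0], n))
print([t.name for t in queue], "->", best.name)
```

With these made-up numbers the small, urgent alert is ranked ahead of the large report and lands on the nearby fog node, because the slow link to the cloud would push it past its deadline. Changing `w_time`, `w_energy`, or `w_money` is the kind of tuning the article refers to when it says operators can favor speed, cost, or energy savings.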

Borrowing a trick from waves, not quantum hardware

What makes QBDS stand out is a subtle “quantum‑inspired” twist. Rather than using real quantum computers, the method borrows the idea of wave‑like behavior to improve its search for good task–machine pairings. For each pairing, the scheduler builds several simple measures: how well the task’s size matches a machine’s processor and memory, how suitable the network link is, how cheap the machine is, and how short its communication delay will be. These measures are transformed using smooth sine waves and then blended with random weights. The resulting bias slightly bends the overall cost score so that the scheduler is nudged away from overworked machines and toward capable but underused ones. Crucially, this modulation is carefully limited so it never overwhelms the basic goals of finishing tasks on time and within budget. The approach stays entirely classical—it just reshapes the “cost landscape” in a controlled, wave‑like way to avoid getting stuck in mediocre choices.
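A rough sketch of how such a bounded, wave-shaped bias could work is given below. The article does not state the actual formula, so everything here is an assumption: the quarter-period sine mapping, the normalized random weights, the `max_bias` cap of 10%, and the choice to apply the bias as a multiplicative discount on the base cost. Each measure is taken to be a number between 0 and 1, with higher meaning a better match.

```python
import math
import random

def wave_bias(measures, rng, max_bias=0.1):
    """Blend sine-transformed match measures into a bias in [0, max_bias]."""
    # Clamp each measure to [0, 1], then map it smoothly onto [0, 1]
    # with a quarter period of a sine wave.
    waves = [math.sin(0.5 * math.pi * min(max(m, 0.0), 1.0)) for m in measures]
    # Random weights, normalized so the blend stays within [0, 1].
    weights = [rng.random() for _ in waves]
    total = sum(weights) or 1.0
    blend = sum(w * v for w, v in zip(weights, waves)) / total
    return max_bias * blend

def biased_cost(base_cost, measures, rng, max_bias=0.1):
    """Discount the base cost by at most max_bias for well-matched pairings."""
    return base_cost * (1.0 - wave_bias(measures, rng, max_bias))

rng = random.Random(42)   # seeded so repeated runs give the same weights
# Hypothetical measures: compute/memory fit, link suitability, cheapness, low delay.
good_fit = [0.9, 0.8, 0.7, 0.9]
poor_fit = [0.2, 0.1, 0.3, 0.2]
print(biased_cost(100.0, good_fit, rng), biased_cost(100.0, poor_fit, rng))
```

Because the bias is capped by `max_bias`, a pairing's cost can shift by only a few percent, which mirrors the article's point that the modulation nudges the choice without overriding deadlines and budget.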

Putting the new scheduler to the test

To see if this idea works in practice, the researchers ran extensive computer experiments that simulate thousands to tens of thousands of tasks arriving at mixed fog–cloud systems. They first compared QBDS with a version of itself that lacks the quantum‑inspired bias. With the bias turned on, the system finished all tasks about a quarter faster, used nearly a fifth less energy, spent less money overall, and spread work much more evenly across machines. Next, they pitted QBDS against a range of advanced optimization schemes, including modern metaheuristics, machine‑learning‑based schedulers, and classical rules like “first‑come, first‑served” or “shortest job first.” Across small and large setups, QBDS consistently produced shorter completion times, better throughput, fewer missed deadlines, and better balancing of load—often while running far faster than population‑based search methods that require many iterations.

What this means for everyday technology

For a non‑specialist, the key message is that smarter, more flexible scheduling can make connected systems both snappier and greener. By ranking tasks intelligently and adding a gentle, wave‑inspired push toward underused machines, QBDS keeps data closer to where it is needed, trims wasted energy, and cuts the risk of dangerous delays. While the work has so far been demonstrated in simulations rather than on live hardware, it points toward future fog–cloud platforms that can juggle thousands of real‑time jobs—from medical monitoring to smart cities—without requiring exotic quantum computers or massive extra computing power.

Citation: Mindil, A., Hamed, A.Y., Hassan, M.R. et al. A novel approach for dynamic task scheduling for IOT in fog-cloud environment. Sci Rep 16, 5501 (2026). https://doi.org/10.1038/s41598-026-35156-7

Keywords: fog computing, IoT task scheduling, edge and cloud, energy-efficient computing, real-time systems