Clear Sky Science

Derivation of an intrinsic brain activity biomarker for the earliest prediction of cognitive decline

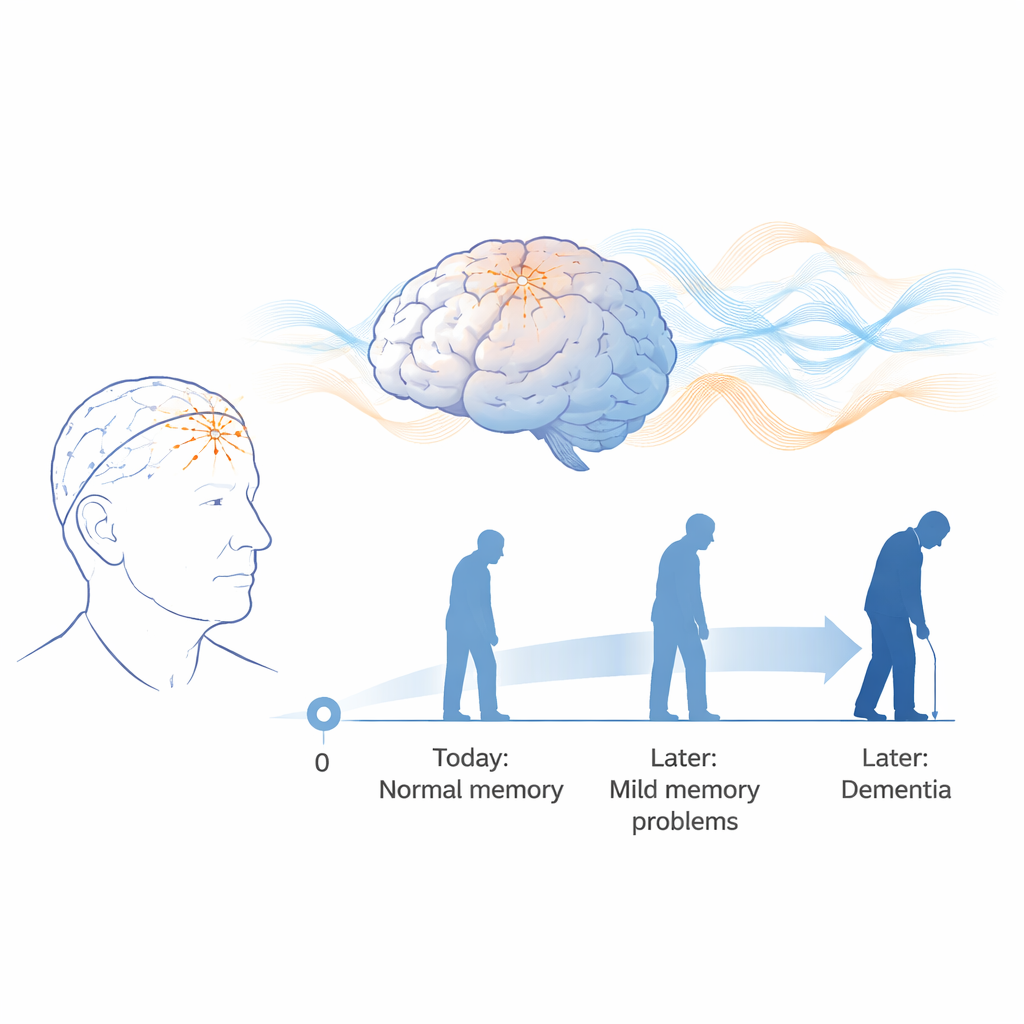

Why early brain changes matter

Many older adults notice subtle memory lapses long before any doctor can diagnose dementia. At this stage, standard brain scans and blood tests often look normal, yet the underlying disease process may already be underway. This study explores whether a simple, non-invasive brainwave test—the electroencephalogram, or EEG—can reveal very early changes in brain function and reliably forecast who is most likely to experience serious cognitive decline years later.

Listening to the brain’s quiet signals

The researchers focused on people with “subjective cognitive impairment” (SCI): older adults who feel their memory is slipping but still perform normally on standard tests. Eighty-eight such volunteers, aged 52 to 85, had 20 minutes of resting EEG recorded with their eyes closed, and were then followed for 5–7 years. During follow-up, doctors tracked each person’s cognitive status using established rating scales. By the end of this period, some participants remained stable, while others declined to mild cognitive impairment or developed dementia. These outcomes allowed the team to ask whether subtle patterns in the original EEG could have predicted who would later deteriorate.

Turning brainwaves into a predictive fingerprint

Rather than inspecting the EEG by eye, the team used quantitative EEG (qEEG), which converts raw brainwaves into thousands of numerical features. These features capture the strength of different frequency bands (such as alpha and theta rhythms), how well distant brain regions synchronize with one another (connectivity and phase lag), and how complex or disorganized the overall activity pattern appears. Because normal aging also alters the EEG, the researchers mathematically adjusted all features for age, then standardized them so that “zero” represented the expected value for a healthy person of the same age. To avoid overfitting, they systematically narrowed more than 6,000 candidate measures down to a compact set of features that were stable, non-redundant, and best at separating people who would remain stable from those who would decline.
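The age adjustment described above is commonly done by fitting, in a healthy reference sample, a regression of each feature on age, then expressing a new person's value as its distance from the age-predicted healthy value in units of the residual standard deviation. The sketch below assumes a simple straight-line age trend (the study's normative model may use a different form), and the helper names and reference numbers are hypothetical, made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class AgeNorm:
    """Age norm for one qEEG feature in healthy people: mean = intercept + slope * age."""
    intercept: float
    slope: float
    residual_sd: float


def fit_age_norm(ages, values):
    """Ordinary least-squares fit of a feature on age in a healthy reference sample.

    Assumes a straight-line age trend (an illustrative assumption).
    """
    n = len(ages)
    if n < 3:
        raise ValueError("need at least 3 reference subjects")
    mean_age = sum(ages) / n
    mean_val = sum(values) / n
    sxx = sum((a - mean_age) ** 2 for a in ages)
    if sxx == 0:
        raise ValueError("reference ages must vary")
    sxy = sum((a - mean_age) * (v - mean_val) for a, v in zip(ages, values))
    slope = sxy / sxx
    intercept = mean_val - slope * mean_age
    # Residual SD with n - 2 degrees of freedom (two parameters were fitted).
    sse = sum((v - (intercept + slope * a)) ** 2 for a, v in zip(ages, values))
    residual_sd = math.sqrt(sse / (n - 2))
    if residual_sd == 0:
        raise ValueError("zero residual spread; cannot standardize")
    return AgeNorm(intercept, slope, residual_sd)


def age_adjusted_z(norm, age, value):
    """Distance from the age-expected healthy value in residual-SD units (0 = as expected)."""
    expected = norm.intercept + norm.slope * age
    return (value - expected) / norm.residual_sd


# Made-up reference data: relative alpha power in healthy adults of different ages.
ref_ages = [55, 60, 65, 70, 75, 80]
ref_alpha = [0.42, 0.41, 0.38, 0.37, 0.34, 0.33]
norm = fit_age_norm(ref_ages, ref_alpha)
print(f"z for a 72-year-old with relative alpha 0.35: {age_adjusted_z(norm, 72, 0.35):.2f}")
```

A negative z here means the feature is lower than expected for a healthy person of that age, which is what lets features from a 55-year-old and an 85-year-old be compared on one scale.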

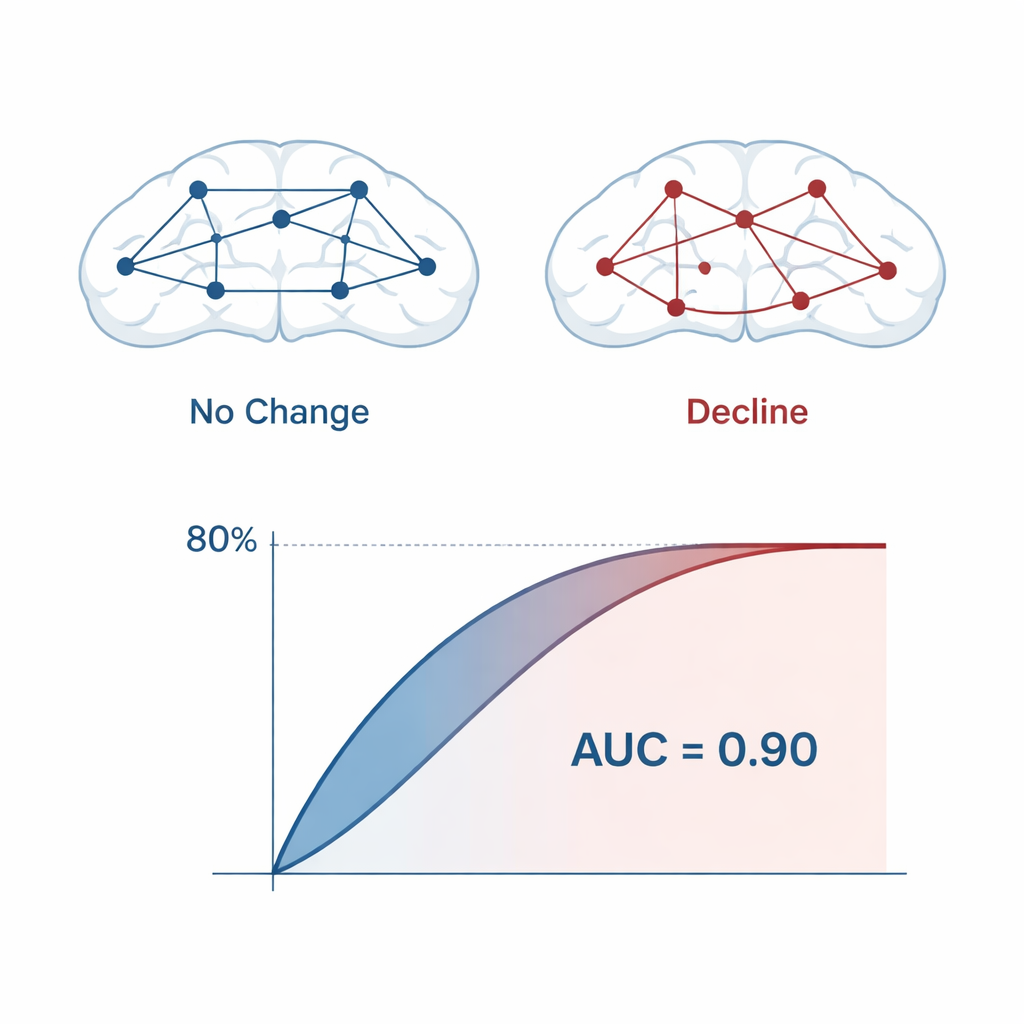

Machine learning as a crystal ball

With this reduced feature set, the team trained several machine learning models—logistic regression, support vector machines, and random forests—to estimate each participant’s probability of future decline. Repeated cross-validation and a specialized bootstrap method were used to gauge performance as realistically as possible. Across models, prediction accuracy was about 80%, with an area under the receiver operating characteristic curve (AUC) of around 0.90, which indicates strong discrimination between stable and declining individuals. The final locked models used only 14 qEEG features, mostly drawn from frontal brain regions recorded with a small set of electrodes, making the approach practical for routine clinical use.
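To make "repeated cross-validation" and "AUC" concrete: the data are split into k folds many times over with different shuffles, a model is trained on k−1 folds and scored on the held-out fold, and the results are averaged. The AUC equals the probability that a randomly chosen decliner receives a higher predicted risk than a randomly chosen stable participant, so 0.90 means the model ranks such a pair correctly about 90% of the time. The pure-Python sketch below assumes an L2-regularized logistic regression fitted by gradient descent, 5 folds and 5 repeats; these settings, the helper names, and the synthetic data are hypothetical, and the sketch does not reproduce the study's bootstrap method or its other model types.

```python
import math
import random


def sigmoid(z):
    """Numerically safe logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_logistic(X, y, lr=0.1, epochs=200, l2=0.01):
    """L2-regularized logistic regression fitted by batch gradient descent."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            for j in range(d):
                gw[j] += err * xi[j]
            gb += err
        w = [wj - lr * (g / n + l2 * wj) for wj, g in zip(w, gw)]
        b -= lr * gb / n
    return w, b


def predict_proba(model, X):
    """Predicted probability of decline for each row of X."""
    w, b = model
    return [sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) for xi in X]


def auc(y_true, scores):
    """AUC = P(score of a random decliner > score of a random stable case); ties count 1/2."""
    pos = [s for s, t in zip(scores, y_true) if t == 1]
    neg = [s for s, t in zip(scores, y_true) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one case of each class")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def stratified_folds(y, k, rng):
    """Shuffle each class separately and deal its indices round-robin into k folds."""
    folds = [[] for _ in range(k)]
    for label in (0, 1):
        idx = [i for i, t in enumerate(y) if t == label]
        rng.shuffle(idx)
        for rank, i in enumerate(idx):
            folds[rank % k].append(i)
    return folds


def repeated_cv_auc(X, y, k=5, repeats=5, seed=0):
    """Mean held-out AUC over `repeats` reshuffled rounds of stratified k-fold CV."""
    rng = random.Random(seed)
    aucs = []
    for _ in range(repeats):
        for test_idx in stratified_folds(y, k, rng):
            held_out = set(test_idx)
            train_idx = [i for i in range(len(y)) if i not in held_out]
            model = fit_logistic([X[i] for i in train_idx], [y[i] for i in train_idx])
            scores = predict_proba(model, [X[i] for i in test_idx])
            aucs.append(auc([y[i] for i in test_idx], scores))
    return sum(aucs) / len(aucs)


# Made-up data: 50 people x 14 z-scored features; decliners shifted by +0.8 SD.
data_rng = random.Random(42)
y = [0] * 30 + [1] * 20
X = [[data_rng.gauss(0.8 * label, 1.0) for _ in range(14)] for label in y]
print(f"mean cross-validated AUC: {repeated_cv_auc(X, y):.2f}")
```

Stratifying the folds keeps the stable-to-decliner ratio similar in every test fold, so each fold's AUC is computable and comparable.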

What is changing in the brain

The features most responsible for accurate prediction pointed to early disruption in how brain areas talk to each other. Measures of connectivity, especially phase lag and asymmetry between left and right frontal regions, were central to the model. Abnormalities in the alpha and theta frequency bands stood out: increased or shifted theta activity has been linked in other research to hippocampal atrophy and cortical thinning, while changes in alpha power and frequency may reflect the brain’s initial attempts to compensate for emerging damage. Importantly, no single EEG measure could tell the whole story. It was the specific combination—the biomarker “fingerprint”—that signaled heightened risk years before full-blown symptoms emerged.
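The summary does not give the study's exact formulas for "phase lag" or "asymmetry", so the following is only an illustration of two widely used definitions. The phase lag index (PLI) takes the instantaneous phase of two channels (here from an FFT-based analytic signal, the same construction as a Hilbert transform) and measures how consistently one leads the other: 0 means no consistent lead or lag, 1 means one channel always leads. A simple asymmetry index compares a quantity such as band power between homologous left and right electrodes. The channel names F3/F4, the 8–13 Hz alpha band edges, the sampling rate, and the signals are all assumptions.

```python
import numpy as np


def analytic_signal(x):
    """Analytic signal via FFT (zero negative frequencies, double positive ones)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * h)


def phase_lag_index(x, y):
    """PLI = |mean(sign(sin(phase_x - phase_y)))|, between 0 and 1."""
    dphi = np.angle(analytic_signal(x)) - np.angle(analytic_signal(y))
    return float(abs(np.mean(np.sign(np.sin(dphi)))))


def band_power(x, fs, lo, hi):
    """Crude periodogram power summed over lo <= f < hi (Hz)."""
    x = np.asarray(x, dtype=float)
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(x)) ** 2
    return float(psd[(freqs >= lo) & (freqs < hi)].sum())


def asymmetry_index(left, right):
    """(L - R) / (L + R): 0 when symmetric, positive when the left side is larger."""
    return (left - right) / (left + right)


fs = 256                      # assumed sampling rate, Hz
t = np.arange(0, 4, 1 / fs)   # 4 s of synthetic signal
f3 = np.sin(2 * np.pi * 10 * t)                     # hypothetical left frontal channel
f4 = 0.8 * np.sin(2 * np.pi * 10 * t - np.pi / 4)   # right channel: weaker, lagging
print("PLI:", phase_lag_index(f3, f4))
print("alpha asymmetry:", asymmetry_index(band_power(f3, fs, 8, 13), band_power(f4, fs, 8, 13)))
```

Because PLI ignores zero-lag coupling (identical signals give 0), it is less sensitive to a single source being picked up by both electrodes, one common reason phase-lag measures are preferred for EEG connectivity.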

Putting the tool to the test in the real world

To see whether their biomarker would generalize beyond the original group, the researchers tested it on two independent cohorts from the United States and Italy, each with its own recording setup and patient characteristics. As expected for truly new data, accuracy dropped modestly, to roughly 60–70%, but the model still performed clearly better than chance, suggesting that the signal it captures is robust. The team also showed that clinicians could adjust the decision threshold: lowering it increases sensitivity (catching more future decliners at the cost of more false alarms), while raising it increases specificity (fewer false positives but more missed cases). This flexibility lets providers tailor the tool to different clinical priorities.
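The sensitivity/specificity trade-off follows directly from moving the cut-off applied to a model's predicted probability of decline. The sketch computes sensitivity (share of true decliners flagged) and specificity (share of stable people not flagged) at three thresholds; the probabilities, labels, and threshold values are made up for illustration and are not from the study.

```python
def sens_spec(y_true, probs, threshold):
    """Flag 'at risk' when probability >= threshold; return (sensitivity, specificity)."""
    tp = sum(1 for t, p in zip(y_true, probs) if t == 1 and p >= threshold)
    fn = sum(1 for t, p in zip(y_true, probs) if t == 1 and p < threshold)
    tn = sum(1 for t, p in zip(y_true, probs) if t == 0 and p < threshold)
    fp = sum(1 for t, p in zip(y_true, probs) if t == 0 and p >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical predicted probabilities of decline (1 = declined, 0 = stable).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
probs = [0.9, 0.7, 0.45, 0.3, 0.6, 0.4, 0.35, 0.2, 0.1, 0.05]

for threshold in (0.25, 0.5, 0.75):
    sens, spec = sens_spec(y_true, probs, threshold)
    print(f"threshold {threshold:.2f}: sensitivity {sens:.2f}, specificity {spec:.2f}")
# threshold 0.25: sensitivity 1.00, specificity 0.50
# threshold 0.50: sensitivity 0.50, specificity 0.83
# threshold 0.75: sensitivity 0.25, specificity 1.00
```

Lowering the threshold catches every decliner in this toy example but flags half of the stable group; raising it eliminates false alarms but misses three of four decliners.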

What this means for patients and clinicians

In plain terms, this work suggests that a short, painless EEG recording—using only a handful of electrodes over the forehead—can help identify older adults who appear normal today but are at high risk of cognitive decline over the next several years. While larger studies and comparisons with other biomarkers are still needed, the approach is inexpensive, non-invasive, and repeatable, making it attractive for widespread screening, especially in settings where advanced imaging or spinal fluid tests are impractical. If validated further, such EEG-based biomarkers could help doctors intervene earlier, monitor disease progression, and select participants for clinical trials at the very stage when treatments are most likely to make a lasting difference.

Citation: Prichep, L.S., Zaidi, S.N., Brink, K. et al. Derivation of an intrinsic brain activity biomarker for the earliest prediction of cognitive decline. Sci Rep 16, 5500 (2026). https://doi.org/10.1038/s41598-026-35144-x

Keywords: early dementia prediction, EEG brainwaves, subjective cognitive decline, machine learning biomarker, Alzheimer’s risk