Clear Sky Science

A meta learning framework for few shot personalized gait cycle generation and reconstruction

Why How We Walk Matters

Every step we take reveals more than we might think. The way a person walks—their gait—can hint at their identity, health, mood, and even how tired they are. Yet capturing these subtle patterns usually demands lots of data and long lab sessions. This paper presents MetaGait, a new AI-based method that can learn a person’s unique walking style from just a handful of examples, making personalized motion analysis and assistance far more practical in clinics, robotics, and virtual reality.

From Average Walks to Individual Steps

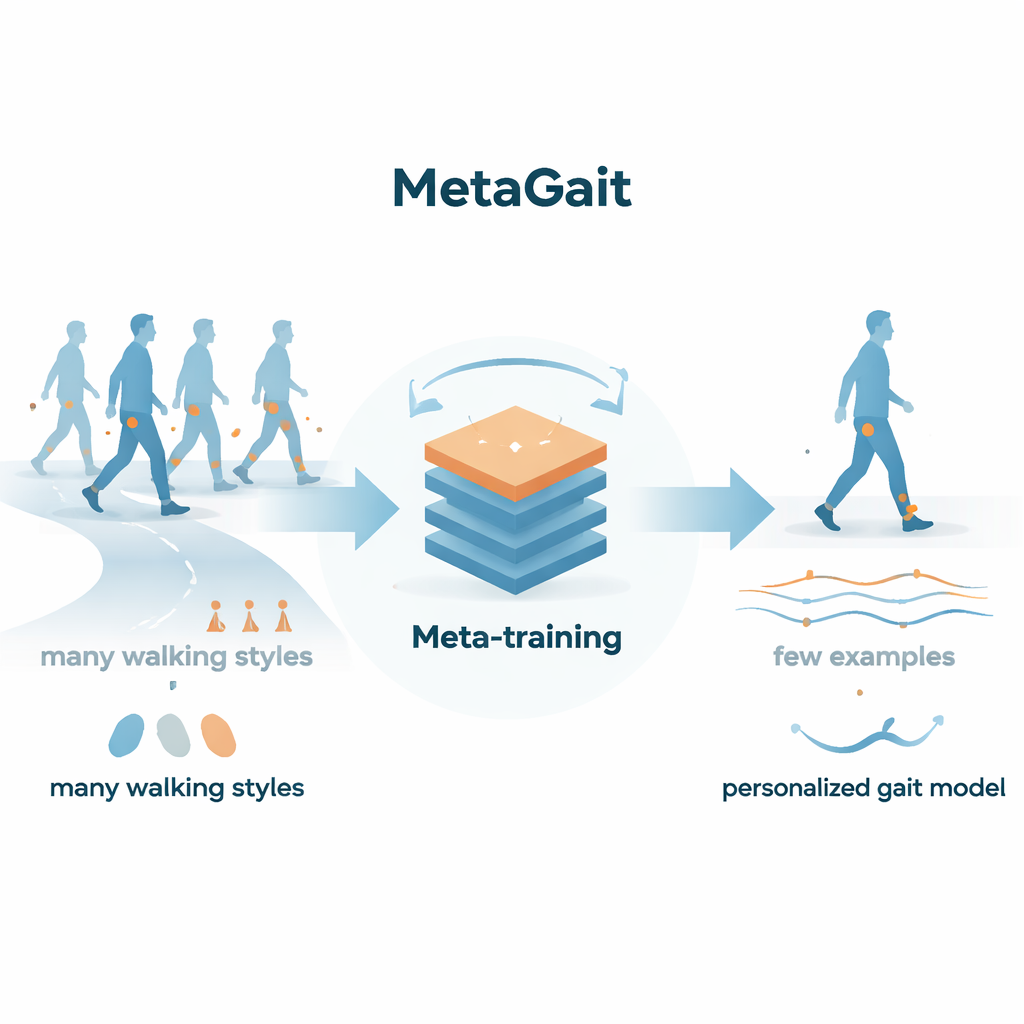

Traditional computer models of walking are very good at learning the “average” way people move, but they struggle with the quirks that make each of us unique. Past systems typically needed large datasets from each person to fine-tune the model to their particular style, which is expensive and time-consuming. MetaGait tackles this challenge by treating personalization itself as a learning problem: instead of only learning how people walk, it learns how to learn a new person’s walk quickly, using very few recorded steps.

Learning to Learn from Many Walkers

To achieve this, the researchers use a strategy called meta-learning, often described as “learning to learn.” They draw on the Human Gait Database, which contains thousands of walking cycles captured by small motion sensors attached to the legs of over 200 people walking under different conditions. MetaGait repeatedly practices on mini-tasks such as “adapt to subject A” or “reconstruct subject B’s walk from noisy data.” For each mini-task, the system gets a tiny support set—a few recorded gait cycles—to adapt its internal settings, and then it is tested on new cycles from the same person. Over many such tasks, MetaGait discovers an internal starting point that can be quickly tuned to a new individual with only one to five example cycles.
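The mini-task idea above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `sample_episode`, the dictionary layout, and the placeholder strings standing in for sensor recordings are all hypothetical and are not taken from the paper or from the Human Gait Database tooling.

```python
import random

def sample_episode(cycles_by_subject, k_shot, n_query, rng):
    """Pick one subject and split some of their cycles into support and query sets."""
    # Only subjects with enough recorded cycles can form a full episode.
    eligible = [s for s, cycles in cycles_by_subject.items()
                if len(cycles) >= k_shot + n_query]
    subject = rng.choice(eligible)
    # Sample without replacement so support and query never share a cycle.
    picked = rng.sample(cycles_by_subject[subject], k_shot + n_query)
    return subject, picked[:k_shot], picked[k_shot:]

# Hypothetical stand-in data: each "cycle" is a labelled placeholder string
# rather than a real sensor recording.
toy_data = {
    f"subject_{i}": [f"s{i}_cycle_{j}" for j in range(8)]
    for i in range(4)
}

rng = random.Random(0)
subject, support, query = sample_episode(toy_data, k_shot=5, n_query=3, rng=rng)
print(subject, len(support), len(query))
```

The support cycles are what the model adapts on, and the query cycles from the same person are what it is then scored on. Keeping the two sets disjoint is what makes the score a measure of adaptation rather than memorization.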

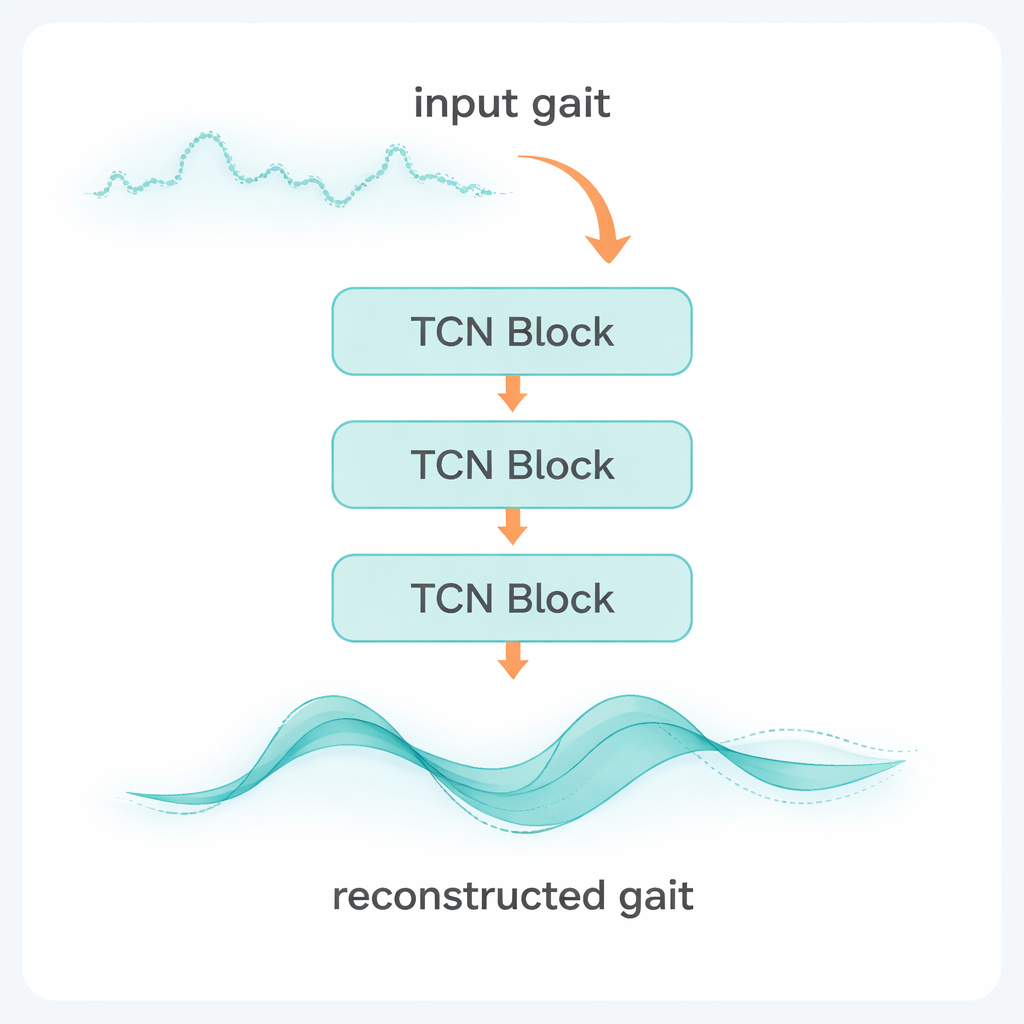

A Smart Engine for Time-Based Motion

At the heart of MetaGait lies a temporal convolutional network, a type of neural network designed to handle sequences that unfold over time. This network ingests sensor readings—such as accelerations and rotations from the shank-mounted devices—across 100 time steps for each stride. In one mode, it is used for generation: given a few clean examples from a person, it produces a new, realistic gait cycle that matches that person’s style. In another mode, it is used for reconstruction: given a partly corrupted or noisy gait signal plus a few clean examples, it recovers the full, clean cycle. During meta-training, the network’s parameters are adjusted in nested loops so that a small number of fine-tuning steps on new data is enough to specialize it to a fresh subject.
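The nested-loop idea can be illustrated with a deliberately tiny toy. The sketch below is not the authors' network or training procedure: it replaces the temporal convolutional network with a hypothetical two-parameter model (an amplitude and an offset applied to one sinusoid period), and it uses a simple first-order, Reptile-style outer update as an assumed stand-in for whatever meta-update the paper uses. Only the 100 time steps per stride come from the article; every other name and number is illustrative.

```python
import math
import random

T = 100  # time steps per stride, as in the article
PHASE = [math.sin(2 * math.pi * i / T) for i in range(T)]

def make_cycle(amp, offset, rng, noise_std=0.02):
    """Toy 'gait cycle': one sinusoid period with a personal amplitude and offset."""
    return [amp * s + offset + rng.gauss(0.0, noise_std) for s in PHASE]

def mse(params, cycle):
    """Mean squared error of the two-parameter toy model on one cycle."""
    a, b = params
    return sum((a * s + b - y) ** 2 for s, y in zip(PHASE, cycle)) / T

def adapt(params, support, steps=3, lr=0.1):
    """Inner loop: a few gradient-descent steps on the support cycles."""
    a, b = params
    n = T * len(support)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for cycle in support:
            for s, y in zip(PHASE, cycle):
                err = a * s + b - y
                grad_a += 2 * err * s
                grad_b += 2 * err
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a, b

def meta_train(iterations=500, meta_lr=0.1, seed=0):
    """Outer loop: nudge the shared starting point toward each adapted solution."""
    rng = random.Random(seed)
    init = (0.0, 0.0)
    for _ in range(iterations):
        # Each iteration simulates one training subject with its own style.
        amp, offset = rng.uniform(0.8, 1.2), rng.uniform(0.3, 0.7)
        adapted = adapt(init, [make_cycle(amp, offset, rng)])
        # First-order (Reptile-style) update, an assumption of this sketch.
        init = tuple(p + meta_lr * (q - p) for p, q in zip(init, adapted))
    return init

meta_init = meta_train()

# An unseen "person": one support cycle to adapt on, one query cycle to score.
eval_rng = random.Random(1)
support = [make_cycle(1.15, 0.35, eval_rng)]
query = make_cycle(1.15, 0.35, eval_rng)

meta_err = mse(adapt(meta_init, support), query)
zero_err = mse(adapt((0.0, 0.0), support), query)
print(f"meta-learned start: {meta_err:.4f}   zero start: {zero_err:.4f}")
```

Both runs get the same three fine-tuning steps on the same single cycle; the only difference is where they start. In this toy the meta-learned starting point already sits near a typical walker, so the brief adaptation lands much closer to the new subject than the same steps taken from zero.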

Testing the System on Limited Data

The team evaluates MetaGait in strict “few-shot” scenarios, where the model sees only one or five gait cycles from a new person before being asked to generate or reconstruct more. They compare it against two common baselines: training a model from scratch using just those few examples, and pretraining a general model on a large pool of data and then fine-tuning it. Using standard measures of accuracy for motion sequences, MetaGait consistently produces more accurate and natural-looking gait patterns than either baseline, for both generation and reconstruction. It fills in missing segments and removes noise more effectively than the baselines, and it does so while preserving each person’s individual style.
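A reconstruction test of this kind can be sketched as follows. The article does not say which accuracy measures were used, so root-mean-square error is an assumption here. The corruption scheme (Gaussian noise plus one blanked-out segment) and the linear-interpolation gap filler are also hypothetical stand-ins, chosen only to make the scoring step concrete; they are not the paper's protocol or model.

```python
import math
import random

def rmse(predicted, target):
    """Root-mean-square error between two equal-length sequences."""
    if len(predicted) != len(target):
        raise ValueError("sequences must have the same length")
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(target))

def corrupt(cycle, gap_start, gap_len, noise_std, rng):
    """Add Gaussian noise everywhere, then blank out one contiguous segment."""
    noisy = [x + rng.gauss(0.0, noise_std) for x in cycle]
    for i in range(gap_start, gap_start + gap_len):
        noisy[i] = None  # None marks a missing sample
    return noisy

def fill_gaps_linear(cycle):
    """Naive stand-in reconstructor: linear interpolation across missing samples.

    Assumes every gap has known samples on both sides (no gap at either end).
    """
    filled = list(cycle)
    known = [i for i, x in enumerate(filled) if x is not None]
    for i, x in enumerate(filled):
        if x is None:
            left = max(k for k in known if k < i)
            right = min(k for k in known if k > i)
            w = (i - left) / (right - left)
            filled[i] = (1 - w) * filled[left] + w * filled[right]
    return filled

# Toy clean cycle with 100 time steps, standing in for one sensor channel.
clean = [math.sin(2 * math.pi * i / 100) for i in range(100)]

rng = random.Random(0)
damaged = corrupt(clean, gap_start=40, gap_len=20, noise_std=0.05, rng=rng)
reconstructed = fill_gaps_linear(damaged)
score = rmse(reconstructed, clean)
print(f"RMSE of naive reconstruction: {score:.3f}")
```

Any reconstructor, including a few-shot adapted model, could be dropped in place of `fill_gaps_linear` and scored against the same clean cycle, which is what makes a comparison between methods fair.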

What This Could Mean in Daily Life

For non-specialists, the key takeaway is that personalized walking models can be built with very little data from each person. That could speed up the fitting of robotic exoskeletons or prosthetic legs, help clinicians assess walking problems without long test sessions, and enable virtual characters that move like their human users after only a brief calibration. While future work is needed to make training more efficient and to test the method in real-world deployments, this study demonstrates a promising path toward quick, accurate, and highly personalized analysis of how we walk.

Citation: Yadav, R.K., Nandi, A., Sharma, D.A.K. et al. A meta learning framework for few shot personalized gait cycle generation and reconstruction. Sci Rep 16, 5506 (2026). https://doi.org/10.1038/s41598-026-35121-4

Keywords: gait analysis, personalized movement, meta learning, wearable sensors, human motion