Clear Sky Science

Deep learning-based assessment of periapical radiographic image quality

Why clearer dental X-rays matter

Every time you sit in a dental chair for an X‑ray, your dentist relies on those shadowy images to spot cavities, infections, and bone loss. But these pictures are surprisingly easy to get wrong: the angle can be off, parts of the tooth can be cut out of the frame, or scratches can obscure details. Each flawed image may mean another X‑ray, and more radiation, for the patient. This study explores whether deep learning, a type of artificial intelligence (AI), can automatically check the quality of dental X‑rays, with the aim of flagging problems quickly enough to help dentists get the picture right the first time.

The problem with blurry or cut-off pictures

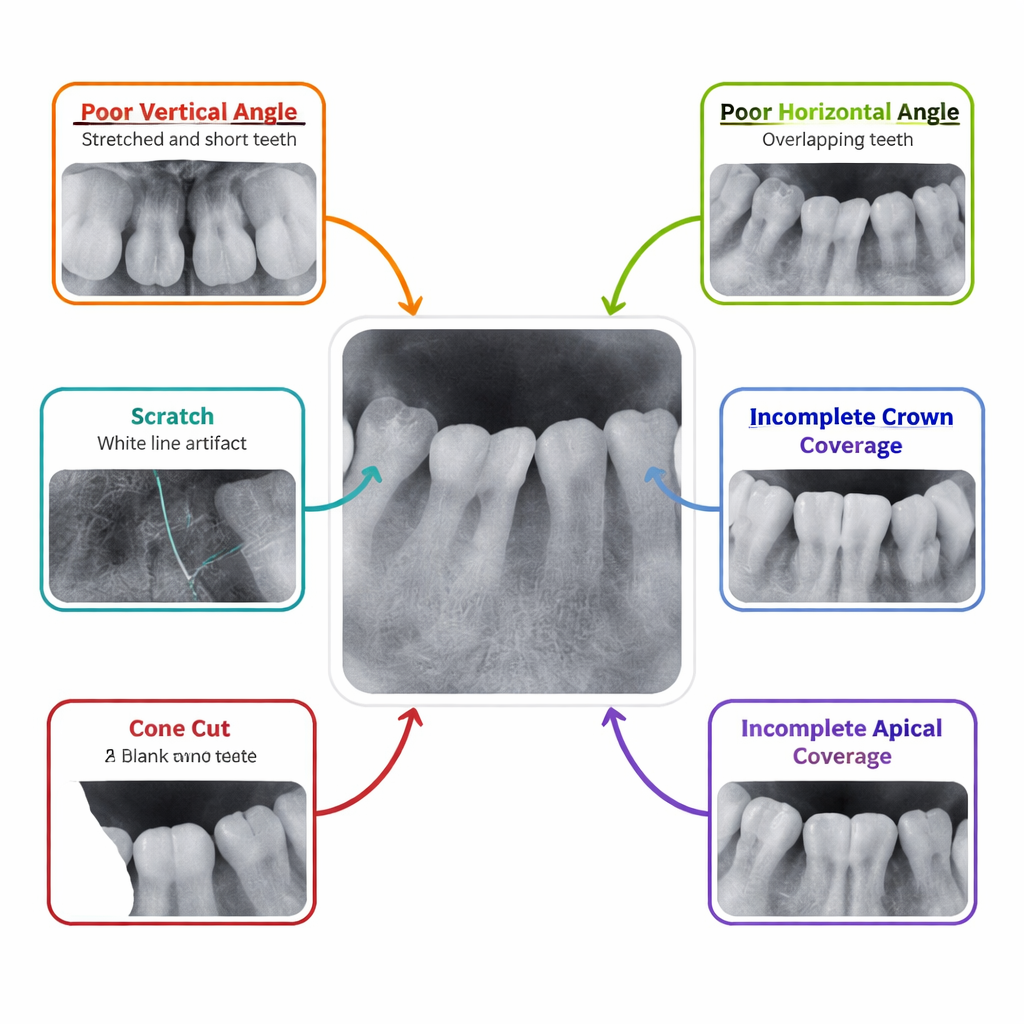

Dentists routinely use periapical radiographs, close‑up X‑rays that show individual teeth and the surrounding bone, to diagnose problems like deep decay and infections at the root tip. Yet these images are among the most frequently rejected in dental radiology: about one in six has to be taken again. Small errors in how the sensor is placed in the mouth or how the X‑ray beam is angled can stretch or overlap teeth, cut off the crown or the area around the root tip, or leave part of the sensor unexposed altogether. Today, deciding whether an image is “good enough” is done by eye, which is slow, subjective, and varies from one person to another.

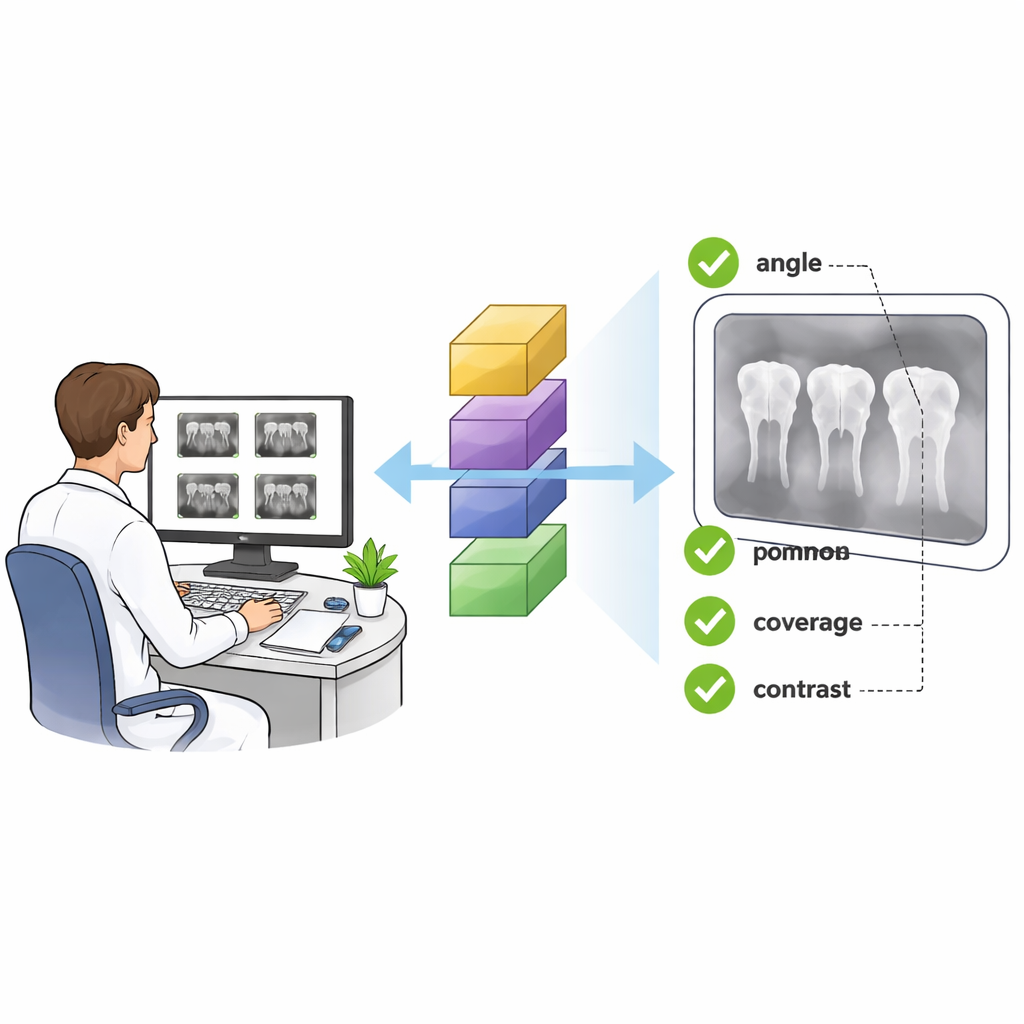

Teaching a computer to see like a dental expert

The researchers set out to see whether a modern deep learning system could be trained to judge these X‑rays as consistently as an experienced radiologist. They collected 3,594 periapical images from a single hospital, all taken with the same X‑ray machine. Expert readers labeled each image according to which part of the mouth it showed (such as upper molars or lower incisors) and whether it had any of six common problems: wrong vertical angle, wrong horizontal angle, missing part of the crown, missing part of the root‑tip area, a cone cut (where part of the plate receives no X‑rays), or scratches on the plate. To make sure the “answer key” was reliable, two experts labeled the images independently and a third settled any disagreements; agreement between the readers was very high overall.
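The summary reports “very high agreement” without naming the statistic used. Agreement between two readers on categorical labels is commonly measured with Cohen's kappa, so the sketch below assumes that measure; the function names and the 0/1 labels (0 = no defect, 1 = defect) are hypothetical. It computes kappa for two readers and then builds the final answer key the way the study describes: keep the shared label where the two readers agree, and use the third reader's label where they do not.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two readers labelling the same images (assumed agreement measure)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("readers must label the same non-empty set of images")
    n = len(rater_a)
    # Observed agreement: fraction of images where both readers chose the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, estimated from how often each reader used each label.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both readers used one identical label for every image
    return (p_o - p_e) / (1 - p_e)

def adjudicate(rater_a, rater_b, third_rater):
    """Final label per image: the shared label if the two readers agree, else the third reader's."""
    return [a if a == b else c for a, b, c in zip(rater_a, rater_b, third_rater)]

# Hypothetical "scratch present?" labels for eight images.
reader_1 = [1, 1, 0, 0, 1, 0, 1, 1]
reader_2 = [1, 1, 0, 1, 1, 0, 1, 0]
reader_3 = [0, 0, 0, 1, 0, 0, 0, 1]
print(round(cohens_kappa(reader_1, reader_2), 3))
print(adjudicate(reader_1, reader_2, reader_3))
```

Kappa discounts the agreement two readers would reach by guessing, so it is stricter than a raw percentage: here the readers agree on 6 of 8 images (75%), yet kappa is only about 0.47. Values close to 1 indicate near‑perfect agreement.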

How the AI learned from thousands of X-rays

The team used a well‑known deep learning architecture called ResNet50, originally trained on everyday photographs, and adapted it for dental images. Instead of building one all‑purpose model, they created seven specialized ones: one to recognize which tooth region was shown, and six separate models to say “yes” or “no” for each type of defect. The images were split into a training group and a test group. During training, the computer saw many altered versions of each X‑ray—flipped, slightly shifted, scaled, or with a bit of noise added—to help it learn to ignore minor variations and focus on true quality problems. Extra copies of rare defect types were also fed into the system so that the AI would not be biased toward the more common, normal images.
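The two training tricks described above, random perturbations of each X‑ray and extra copies of rare defect types, can be sketched in plain Python. This is a toy illustration under stated assumptions: an image is a small list of rows of grayscale values between 0 and 1, and the shift range, zoom range, and noise level are hypothetical choices, not the study's settings. In practice these steps would run inside an image‑processing pipeline that feeds a network such as ResNet50.

```python
import random

# Assumption: a grayscale radiograph is a list of rows of pixel intensities in [0, 1].

def hflip(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def shift(img, dy, dx, fill=0.0):
    """Move content dy pixels down and dx pixels right, padding uncovered pixels with fill."""
    h, w = len(img), len(img[0])
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sy, sx = y - dy, x - dx
            if 0 <= sy < h and 0 <= sx < w:
                out[y][x] = img[sy][sx]
    return out

def scale(img, factor, fill=0.0):
    """Nearest-neighbour zoom about the centre; factor > 1 enlarges, output keeps the same size."""
    h, w = len(img), len(img[0])
    cy, cx = (h - 1) / 2, (w - 1) / 2
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sy = round(cy + (y - cy) / factor)
            sx = round(cx + (x - cx) / factor)
            if 0 <= sy < h and 0 <= sx < w:
                out[y][x] = img[sy][sx]
    return out

def add_noise(img, sigma, rng):
    """Add Gaussian noise and clip values back into [0, 1]."""
    return [[min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in row] for row in img]

def random_augment(img, rng):
    """One randomly perturbed copy: optional flip, small shift, mild zoom, light noise."""
    if rng.random() < 0.5:
        img = hflip(img)
    img = shift(img, rng.randint(-2, 2), rng.randint(-2, 2))
    img = scale(img, rng.uniform(0.9, 1.1))
    return add_noise(img, sigma=0.02, rng=rng)

def oversample(samples, rng):
    """Duplicate randomly chosen minority-class samples until every label matches the largest class."""
    by_label = {}
    for img, label in samples:
        by_label.setdefault(label, []).append((img, label))
    target = max(len(group) for group in by_label.values())
    balanced = []
    for group in by_label.values():
        balanced.extend(group)
        balanced.extend(rng.choice(group) for _ in range(target - len(group)))
    rng.shuffle(balanced)
    return balanced
```

Duplicating rare examples is the simplest way to balance classes. When augmentation is applied each time an image is loaded, every duplicated copy is perturbed differently, which makes it harder for the network to simply memorize the repeated images.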

How well the AI judged image quality

When tested on images it had never seen before, the AI system performed strikingly well. For identifying which part of the mouth the X‑ray showed, it reached an area‑under‑the‑curve (AUC) score of 0.997. AUC is a standard measure of how well a classifier separates categories: 1 means perfect separation and 0.5 means no better than chance. For five of the six defect types (wrong vertical angle, wrong horizontal angle, missing crown, missing root‑tip area, and cone cut), the AUC scores were in the “excellent” range, often extremely close to perfect. The hardest problem was detecting scratches, likely because they vary greatly in appearance and can overlap with bright dental materials, but the system still performed strongly on it. These results suggest that a computer can reliably detect both where an image was taken and whether it meets basic quality standards.
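The area‑under‑the‑curve (AUC) score has a simple interpretation: it is the probability that the model gives a randomly chosen image that has a defect a higher score than a randomly chosen image that does not (ties count as half). The sketch below computes it directly from that definition. For the multi‑class tooth‑region model, the summary does not say how the single 0.997 figure was obtained; averaging one‑vs‑rest AUCs across regions is one common convention and is assumed here. Function names and data are hypothetical.

```python
def roc_auc(labels, scores):
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def macro_ovr_auc(true_regions, region_probs, regions):
    """Average one-vs-rest AUC over regions; region_probs[i][r] is the model's score for region r."""
    aucs = []
    for r in regions:
        labels = [1 if t == r else 0 for t in true_regions]
        scores = [probs[r] for probs in region_probs]
        aucs.append(roc_auc(labels, scores))
    return sum(aucs) / len(aucs)

# Hypothetical "cone cut?" detector outputs for four test images.
print(roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.4, 0.5]))
```

The pairwise loop is quadratic in the number of images, which is fine for illustration; standard tools compute the same value from sorted scores.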

What this could mean in the dental chair

For patients, the promise of this work is fewer repeated X‑rays, more consistent diagnoses, and potentially lower radiation exposure over time. If built into digital X‑ray systems, the AI could give immediate feedback—alerting the operator that a tooth’s root is cut off or that the angle has distorted the image—before the patient even leaves the chair. Over the longer term, analyzing thousands of stored images could reveal patterns, such as which tooth positions or operators most often generate flawed pictures, guiding targeted training. The authors note that the system still needs to be tested on images from other clinics and machines, but their findings point toward a future where smart software quietly watches every dental X‑ray, helping ensure that each one is clear, complete, and truly worth taking.
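The study describes seven separate models: one that names the tooth region and six yes/no defect detectors. The sketch below assumes their outputs are already available as a region name plus one probability per defect, and shows how they might be turned into on‑the‑spot alerts for the operator and later summarized by tooth region or operator. The 0.5 threshold, dictionary keys, messages, and function names are all hypothetical illustrations, not the authors' software.

```python
from collections import defaultdict

# Hypothetical keys and operator messages for the six defect detectors in the study.
DEFECT_MESSAGES = {
    "vertical_angle": "Vertical beam angle looks wrong; teeth may appear stretched or shortened.",
    "horizontal_angle": "Horizontal beam angle looks wrong; neighbouring teeth may overlap.",
    "missing_crown": "Part of the crown is cut off.",
    "missing_root_tip": "The root-tip (periapical) area is cut off.",
    "cone_cut": "Cone cut: part of the plate received no X-rays.",
    "scratch": "Possible scratch on the plate.",
}

def quality_report(region, defect_probs, threshold=0.5):
    """Combine the region model's answer with the six defect scores into one report."""
    alerts = [DEFECT_MESSAGES[name] for name, p in defect_probs.items() if p >= threshold]
    return {"region": region, "acceptable": not alerts, "alerts": alerts}

def defect_rate_by(records, key):
    """Share of flagged images per group, e.g. key="region" or key="operator"."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for rec in records:
        totals[rec[key]] += 1
        flagged[rec[key]] += 0 if rec["acceptable"] else 1
    return {group: flagged[group] / totals[group] for group in totals}

report = quality_report("upper molars", {"vertical_angle": 0.1, "horizontal_angle": 0.2,
                                         "missing_crown": 0.05, "missing_root_tip": 0.93,
                                         "cone_cut": 0.01, "scratch": 0.3})
print(report["acceptable"], report["alerts"])
```

Keeping each defect as its own yes/no score, as the study's six separate models do, means a single image can trigger several specific alerts at once, which is what makes targeted feedback and per‑operator statistics possible.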

Citation: Chi, X., Wang, M., Gao, Y. et al. Deep learning-based assessment of periapical radiographic image quality. Sci Rep 16, 5047 (2026). https://doi.org/10.1038/s41598-026-35100-9

Keywords: dental X-ray quality, artificial intelligence in dentistry, deep learning, periapical radiograph, image quality control