Clear Sky Science

A multimodal learning and simulation approach for perception in autonomous driving systems

Smarter Self-Driving Cars

Self-driving cars promise safer roads and less traffic, but only if they can truly understand the world around them. This paper explores a new way to help autonomous vehicles “see,” “feel,” and “anticipate” their surroundings more like a careful human driver—by blending different sensors, testing safely in a virtual copy of the real world, and making the car’s decisions more transparent to people.

Seeing the Road with Many “Senses”

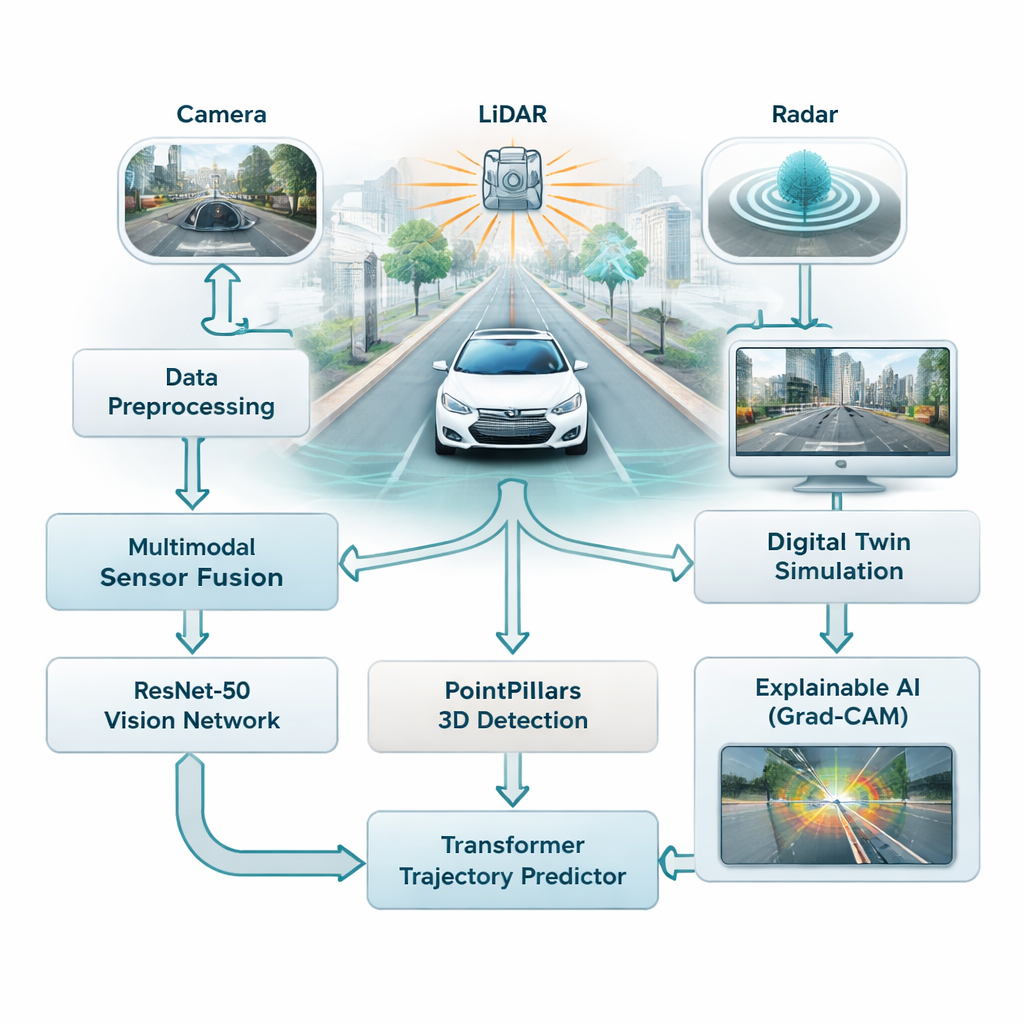

Most driver-assistance systems today lean heavily on cameras, which work well in good light but struggle in fog, rain, or at night. This study combines three different sensor types—cameras, laser scanners (LiDAR), and radar—so the car does not depend on a single, fragile source of information. Cameras capture rich color and detail, LiDAR builds a precise 3D picture of the scene, and radar remains reliable in bad weather. The authors fuse all three streams into a single view of traffic, giving the vehicle a fuller, more reliable understanding of roads, pedestrians, and other cars.

Teaching the Car to Recognize and Anticipate

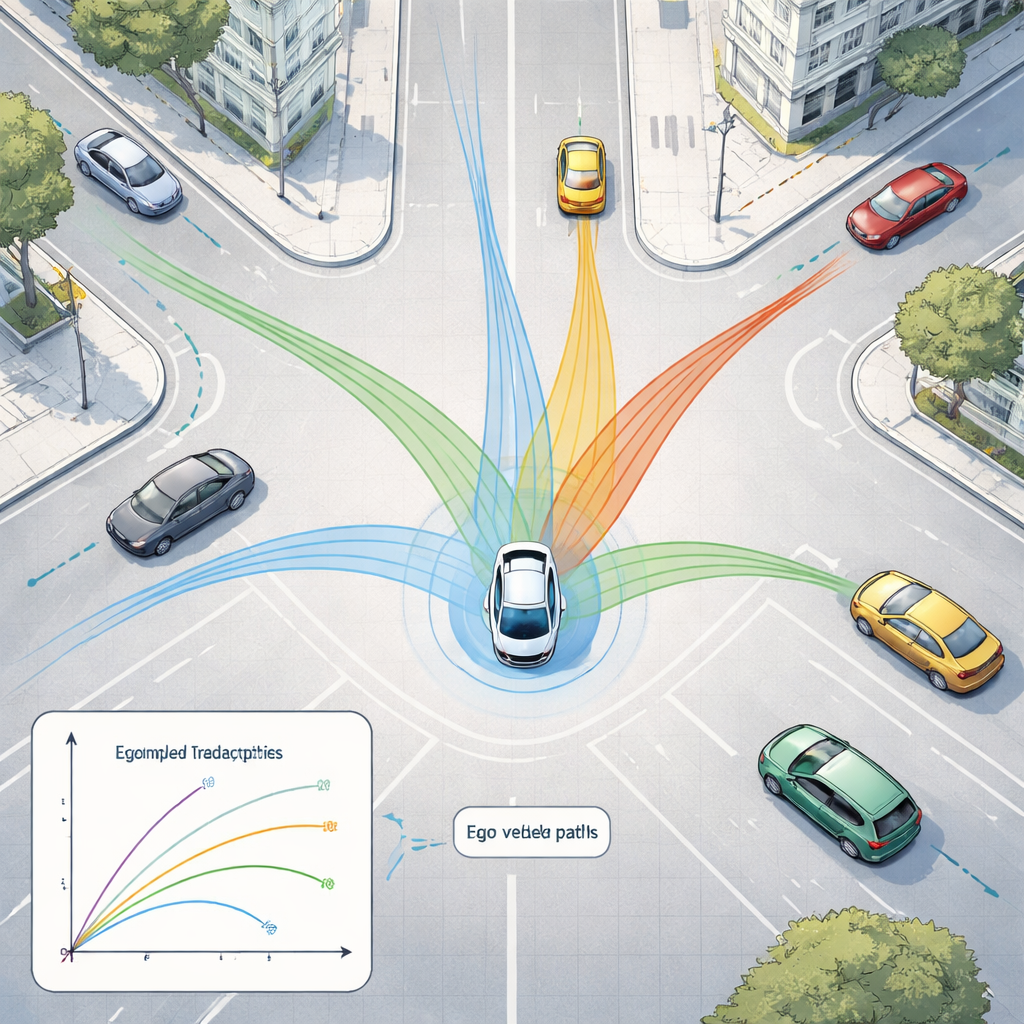

To make sense of this flood of data, the framework uses two families of modern AI models: perception networks that interpret what each sensor sees, and a sequence model that forecasts motion. On the perception side, a deep image network called ResNet-50 scans camera images to capture the overall situation: how crowded the road is, where lanes are visible, and how the scene is laid out. At the same time, a 3D model called PointPillars reads LiDAR point clouds to locate vehicles and other objects in three dimensions. On the prediction side, these signals are fed into a Transformer, a type of AI originally designed for language, which excels at modeling sequences and therefore at tracking how things change over time. Here, it learns to predict where nearby cars and other moving objects are likely to go over the next few seconds, taking into account both their past motion and the structure of the road.

Building a Safe Virtual Test Track

Instead of testing risky situations directly on public roads, the researchers plug their system into a digital twin—a virtual replica of real city streets based on a large public dataset from Boston and Singapore. In this simulated world, the car’s sensors, motion, and surroundings are replayed and altered at will, while the AI tries to track objects and forecast their future paths. The system can run these “what if?” scenarios in real time, with response times under 50 milliseconds, allowing engineers to explore edge cases such as sudden braking, sharp turns, or crowded intersections without endangering anyone.
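As a rough illustration of what a real-time budget means in practice, the sketch below replays a few frames through a processing step and flags any frame that takes longer than 50 milliseconds. Only the 50 ms figure comes from the text; `timed_step`, `replay`, and `dummy_step` are hypothetical names, and the dummy step merely stands in for the real perception and prediction pipeline.

```python
import time

BUDGET_MS = 50.0  # response-time bound quoted in the text


def timed_step(step_fn, frame):
    """Run one pipeline step and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = step_fn(frame)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms


def replay(frames, step_fn, budget_ms=BUDGET_MS):
    """Replay frames and return the indices of frames that exceed the budget."""
    late = []
    for index, frame in enumerate(frames):
        _, elapsed_ms = timed_step(step_fn, frame)
        if elapsed_ms > budget_ms:
            late.append(index)
    return late


def dummy_step(frame):
    """Hypothetical stand-in for the perception and prediction models."""
    return sum(frame)


# Five identical toy frames; a real replay would feed recorded sensor data.
late_frames = replay([[1, 2, 3]] * 5, dummy_step)
```

A harness like this is only a measuring stick: it says whether a step met its deadline on a given machine, not how the simulator itself is built.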

Peeking Inside the AI’s “Black Box”

A frequent criticism of deep learning is that it is often hard to tell why a model made a particular decision. To address this, the authors use a method called Grad-CAM, which highlights the parts of an image that most influenced the model’s output. These heatmaps show, for example, whether the network is focusing on another car, a pedestrian, or a lane marking when estimating trajectories. Although this explanation step runs offline rather than in the car’s real-time loop, it helps engineers and safety reviewers verify that the system is paying attention to the right clues, which is crucial for building public trust.
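The core arithmetic of Grad-CAM is small enough to show on a toy example. Each internal feature map is weighted by the average of the gradients flowing into it, the weighted maps are summed, and negative values are clipped to zero. The sketch below assumes the feature maps and gradients are already available; in a real system they would come from a deep learning framework, and the numbers and the function name `grad_cam_map` here are made up for illustration.

```python
def grad_cam_map(activations, gradients):
    """Combine feature maps into a Grad-CAM heatmap.

    activations, gradients: lists of K channels, each an H x W nested list.
    gradients[k][i][j] is the derivative of the model's score with respect
    to activations[k][i][j].
    """
    height, width = len(activations[0]), len(activations[0][0])
    # Channel weight = spatial average of that channel's gradients.
    weights = [
        sum(sum(row) for row in grad) / (height * width) for grad in gradients
    ]
    heatmap = [[0.0] * width for _ in range(height)]
    for weight, act in zip(weights, activations):
        for i in range(height):
            for j in range(width):
                heatmap[i][j] += weight * act[i][j]
    # ReLU: keep only regions that push the score up.
    return [[max(0.0, value) for value in row] for row in heatmap]


# Hypothetical 2-channel, 2x2 feature maps and their gradients.
activations = [
    [[1.0, 0.0], [0.0, 2.0]],
    [[0.0, 3.0], [1.0, 0.0]],
]
gradients = [
    [[0.5, 0.5], [0.5, 0.5]],      # channel 0 supports the score
    [[-1.0, -1.0], [-1.0, -1.0]],  # channel 1 works against it
]
heatmap = grad_cam_map(activations, gradients)
```

In this toy case the heatmap lights up only where the supportive channel is active, which is the kind of pattern a reviewer would then overlay on the camera image.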

How Much Better Does It Drive?

When tested on hundreds of urban driving scenes, the proposed framework detects 3D objects accurately and predicts motion more precisely than simple physics rules that assume constant speed or steady acceleration. Its forecast errors (how far its predicted positions deviate from reality) are significantly smaller than those of such baselines and close to those of a strong recurrent AI model, while the system still runs fast enough for real-time use. Careful experiments comparing different network designs show that a deeper image model and a medium-depth 3D detector strike the best balance between accuracy and speed, and that the system can be deployed on smaller onboard computers after model compression.
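To make the comparison concrete, here is a minimal sketch of a constant-speed baseline and of the two error measures commonly used for trajectory forecasts: the average distance between predicted and actual positions, and the distance at the final time step. The trajectory values are invented for illustration, the function names are hypothetical, and the paper's exact metric definitions may differ.

```python
import math


def constant_velocity_forecast(history, horizon):
    """Extrapolate the last observed velocity for `horizon` future steps.

    `history` is a list of (x, y) positions sampled at a fixed time step.
    """
    (x0, y0), (x1, y1) = history[-2], history[-1]
    vx, vy = x1 - x0, y1 - y0  # displacement per time step
    return [(x1 + vx * k, y1 + vy * k) for k in range(1, horizon + 1)]


def displacement_errors(predicted, actual):
    """Return (average, final) Euclidean distance between two paths."""
    dists = [math.dist(p, a) for p, a in zip(predicted, actual)]
    return sum(dists) / len(dists), dists[-1]


# Hypothetical data: a car driving straight along x, then drifting sideways.
history = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
actual_future = [(3.0, 0.0), (4.0, 3.0), (5.0, 4.0)]

predicted = constant_velocity_forecast(history, horizon=3)
average_error, final_error = displacement_errors(predicted, actual_future)
```

The baseline keeps driving straight, so its error grows as soon as the car starts to turn. A learned predictor earns its keep by anticipating that turn from the road layout and past motion.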

What This Means for Everyday Drivers

For non-specialists, the message is that safer, more reliable self-driving cars are likely to come from an approach that blends multiple sensors, predicts how the scene will evolve, and is tested thoroughly in realistic virtual worlds. By joining perception, prediction, simulation, and human-understandable explanations into one design, this work moves autonomous vehicles closer to behaving like cautious, transparent partners on the road rather than mysterious machines.

Citation: Almadhor, A., Al Hejaili, A., Alsubai, S. et al. A multimodal learning and simulation approach for perception in autonomous driving systems. Sci Rep 16, 5505 (2026). https://doi.org/10.1038/s41598-026-35095-3

Keywords: autonomous driving, sensor fusion, trajectory prediction, 3D object detection, digital twin simulation