Clear Sky Science

Precise segmentation method for slender power targets based on multi-scale perception and location-sensitive learning

Keeping the Lights On, Safely

Modern life depends on electricity flowing smoothly through a vast web of power lines. Much of this grid runs above our heads, where aging wires, bad weather, and human error can trigger outages or even accidents. Utilities increasingly rely on cameras and artificial intelligence to watch these lines in real time, but getting a computer to see long, thin wires clearly against messy backgrounds is surprisingly hard. This study introduces a new image-analysis method that helps computers trace power lines more precisely, even in cluttered, real-world scenes, strengthening the safety and reliability of everyday power delivery.

Why Finding Thin Wires Is So Difficult

At first glance, recognizing a power line in a photo seems simple: just look for a long dark stripe against the sky. In reality, the task is much tougher. Power lines are very thin compared with the whole image, and they can cross each other, bend, and appear at many angles. They are often partially hidden by equipment, buildings, trees, or tools used by workers. Traditional deep-learning tools for image segmentation (techniques that label each pixel as “wire” or “background”) were designed mainly for bulkier, blob-like objects such as cars or people. These methods tend to blur the edges of wires, break them into pieces, or confuse them with other long, narrow objects. For live-line maintenance, where work is done without shutting off the power, such mistakes can weaken safety alarms and inspection systems.

A New Way to See Power Lines

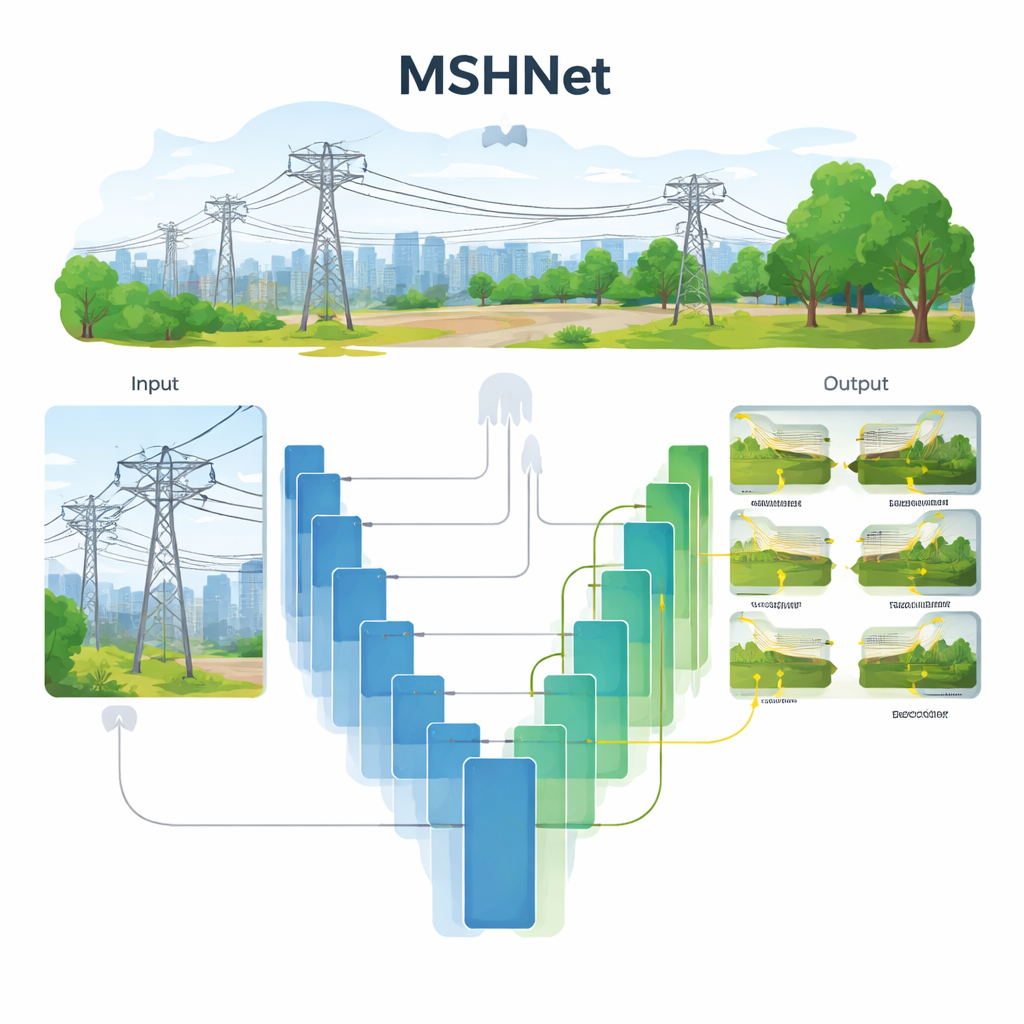

The researchers build on a popular image-segmentation design known as U-Net, which processes an image at several resolutions and then recombines the information. Their new system, called MSHNet (Multi-Scale Head Network), adds extra “heads” that make predictions at multiple scales simultaneously. Each head focuses on a different level of detail, so the model pays attention both to the overall route of a line and to its fine edges. All of these predictions are then blended into a final, full-size map of where the wires are. To guide learning, the team also designs a special loss function—essentially a scoring rule—that does not just ask “Did you find the wire?” but also “Did you get its size and position right?” This scale- and location-sensitive loss encourages the network to match the true thickness, length, and placement of each wire much more closely than standard criteria.
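To make the idea of a scoring rule that checks size and position more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the formula from the paper: the function names, the area-ratio weight, and the centroid-distance term are all hypothetical choices made for this example. It combines an overlap score that is discounted when the predicted wire area differs from the true area with a penalty for how far the predicted wire's center sits from the true one.

```python
import math

def area(mask):
    """Total (possibly soft) area of a 2D mask given as a list of rows."""
    return sum(sum(row) for row in mask)

def centroid(mask):
    """Mass-weighted centre (row, col) of a mask, or None if it is empty."""
    total = area(mask)
    if total == 0:
        return None
    r_sum = sum(r * v for r, row in enumerate(mask) for v in row)
    c_sum = sum(c * v for row in mask for c, v in enumerate(row))
    return r_sum / total, c_sum / total

def scale_location_loss(pred, target):
    """Illustrative loss: 0 for a perfect match, larger for size or position errors.

    Assumption: this is a simplified stand-in for a scale- and
    location-sensitive loss, not the authors' exact definition.
    """
    a_pred, a_true = area(pred), area(target)
    inter = sum(p * t for pr, tr in zip(pred, target) for p, t in zip(pr, tr))
    union = a_pred + a_true - inter
    if union == 0:
        return 0.0  # both masks empty: nothing to penalize
    iou = inter / union
    # Scale term: the overlap reward shrinks when the two areas disagree.
    scale_weight = min(a_pred, a_true) / max(a_pred, a_true)
    scale_term = 1.0 - scale_weight * iou
    # Location term: centre-to-centre distance, normalized by the image diagonal.
    c_pred, c_true = centroid(pred), centroid(target)
    if c_pred is None or c_true is None:
        location_term = 1.0  # one mask is empty: maximal location penalty
    else:
        diagonal = math.hypot(len(target), len(target[0]))
        location_term = math.hypot(c_pred[0] - c_true[0],
                                   c_pred[1] - c_true[1]) / diagonal
    return scale_term + location_term

# Toy 5x5 example: the true wire is a one-pixel-thick horizontal line.
empty = [[0] * 5 for _ in range(5)]
truth = [row[:] for row in empty]
truth[2] = [1] * 5
too_thick = [row[:] for row in empty]
for r in (1, 2, 3):
    too_thick[r] = [1] * 5
shifted = [row[:] for row in empty]
shifted[3] = [1] * 5

for name, pred in [("perfect", truth), ("too thick", too_thick), ("shifted", shifted)]:
    print(name, round(scale_location_loss(pred, truth), 3))
```

In this toy case, a prediction that covers the wire but is three times too thick is penalized even though it contains every true wire pixel, and a prediction of the right thickness in the wrong row is penalized more.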

Teaching the Network About Shape and Direction

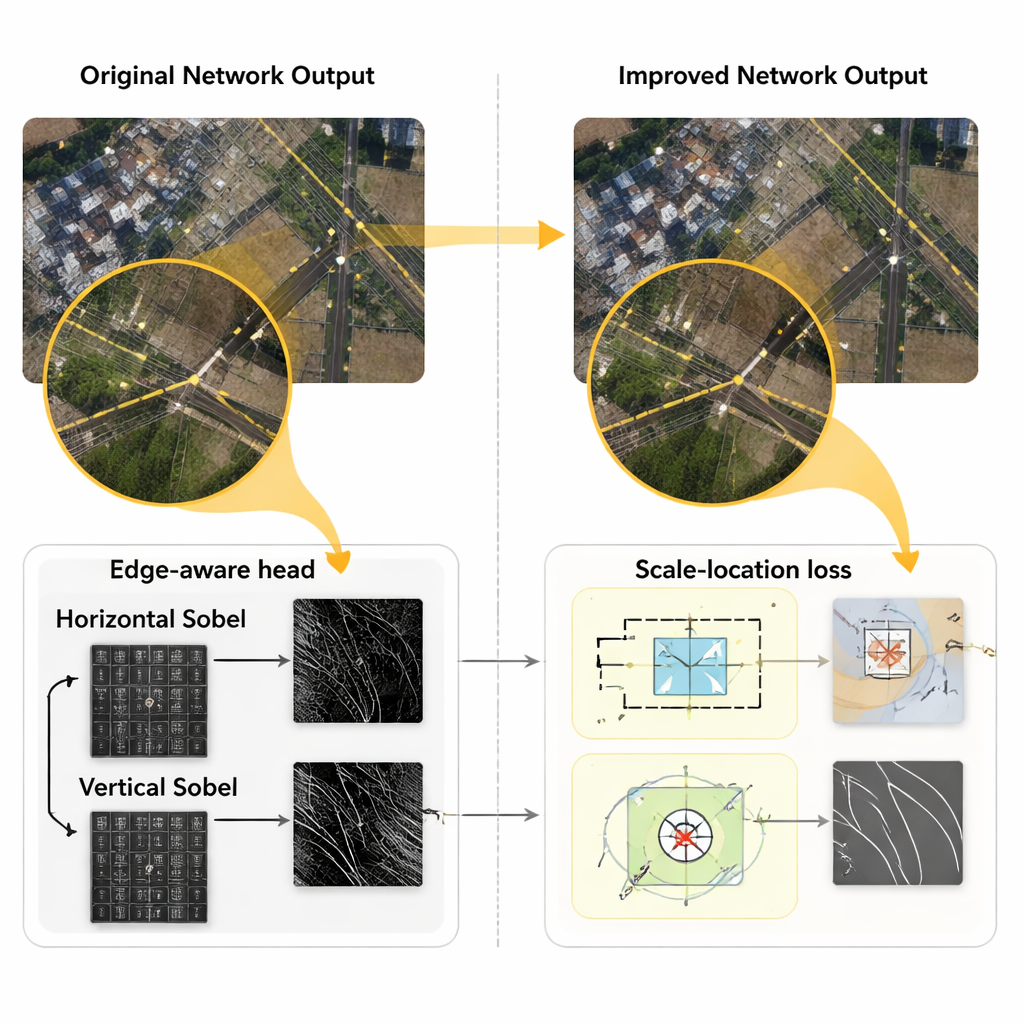

Even with these upgrades, the original MSHNet still struggled with extremely long, thin lines. To tackle this, the authors modify the prediction heads to act like smart edge detectors. Inspired by classic image-processing filters, they split the usual square filters into horizontal and vertical components, using Sobel operators that are especially good at picking out sharp changes along lines. The network multiplies its internal features by the responses of these edge detectors, effectively amplifying line-shaped structures and muting irrelevant background patterns. At the same time, they refine the loss function so that it cares more about the direction of a line. Instead of simply penalizing squared angle errors, they use a cosine-based measure that reacts strongly to even small direction mistakes and escalates the penalty when the model confuses horizontal and vertical orientations. This combination helps the network keep wires continuous across long distances and through bends.
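The two ideas in this section can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions rather than the authors' implementation: `edge_gate` and `direction_penalty` are hypothetical names, the gating is applied to a hand-made feature map instead of learned network features, and the penalty `1 - cos(2Δ)` is only one possible cosine-based form (the exact formula is not given in this summary). The Sobel kernels themselves are the standard 3x3 operators.

```python
import math

# Standard 3x3 Sobel kernels.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]   # measures left-right change
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]   # measures up-down change

def filter3x3(image, kernel):
    """Slide a 3x3 kernel over a 2D list, repeating border pixels at the edges."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr = min(max(r + dr, 0), h - 1)  # clamp row index
                    cc = min(max(c + dc, 0), w - 1)  # clamp column index
                    out[r][c] += kernel[dr + 1][dc + 1] * image[rr][cc]
    return out

def edge_gate(features):
    """Multiply each feature by its normalized Sobel edge strength."""
    gx = filter3x3(features, SOBEL_X)
    gy = filter3x3(features, SOBEL_Y)
    mag = [[math.hypot(x, y) for x, y in zip(rx, ry)] for rx, ry in zip(gx, gy)]
    peak = max(max(row) for row in mag)
    if peak == 0:
        return [[0.0 for _ in row] for row in features]  # no edges anywhere
    return [[f * m / peak for f, m in zip(fr, mr)]
            for fr, mr in zip(features, mag)]

def direction_penalty(theta_pred, theta_true):
    """Assumed cosine-based orientation penalty, angles in radians.

    Zero when the orientations match, largest (2.0) when they differ by
    90 degrees, and zero again at 180 degrees, since a line has no arrowhead.
    """
    return 1.0 - math.cos(2.0 * (theta_pred - theta_true))

# Toy feature map: flat background clutter (0.2) with a two-pixel-thick line (1.0).
features = [[0.2] * 6 for _ in range(6)]
features[2] = [1.0] * 6
features[3] = [1.0] * 6

gated = edge_gate(features)
print([round(row[3], 3) for row in gated])            # one column, top to bottom
print(round(direction_penalty(0.0, math.pi / 2), 3))  # horizontal vs vertical
```

In the toy map, flat background far from the line is suppressed to roughly zero while the line keeps its full strength. A strictly one-pixel-wide line would give zero Sobel response along its own centerline, which is why the example uses a two-pixel line.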

Putting the Method to the Test

To see how well their system works in practice, the team collected 1,800 high-resolution images from real live-line maintenance scenes in cities, factories, and suburban areas. These pictures include harsh lighting, cluttered environments, and many types of poles and wires, making them a demanding testbed. After carefully resizing and augmenting the images, they trained and evaluated several models, including U-Net, DeepLabV3+, PSPNet, the original MSHNet, and their improved version. They measured three key indicators: overall pixel accuracy, how well predicted and true wire regions overlap, and how well the model balances catching all wires with avoiding false alarms. The improved MSHNet achieved pixel accuracy near 99.5% and scored higher than all other methods on both the overlap and balance measures, showing cleaner, more continuous wire traces, especially where lines cross or are partly blocked by metal structures.
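The three indicators can be illustrated with a short Python sketch. The summary does not name the exact metrics, so this example assumes the common choices: pixel accuracy, intersection over union (IoU) for overlap, and the F1 score for the balance between missed wires and false alarms. The function name and the toy masks are hypothetical.

```python
def segmentation_metrics(pred, truth):
    """Compare two binary masks (lists of rows of 0/1) pixel by pixel."""
    tp = fp = fn = tn = 0
    for p_row, t_row in zip(pred, truth):
        for p, t in zip(p_row, t_row):
            if p and t:
                tp += 1      # wire correctly found
            elif p:
                fp += 1      # false alarm
            elif t:
                fn += 1      # missed wire pixel
            else:
                tn += 1      # background correctly ignored
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    iou = tp / (tp + fp + fn) if tp + fp + fn else 1.0
    return {
        "pixel_accuracy": (tp + tn) / total,
        "iou": iou,
        "f1": f1,
    }

# Toy 10x10 image: the true wire is one full row (10 pixels),
# but the prediction only finds the left half of it.
truth = [[0] * 10 for _ in range(10)]
truth[4] = [1] * 10
pred = [[0] * 10 for _ in range(10)]
pred[4] = [1] * 5 + [0] * 5

for name, value in segmentation_metrics(pred, truth).items():
    print(name, round(value, 3))
```

In this toy case, missing half of the wire still leaves pixel accuracy at 0.95 because the wire occupies so few pixels, while the overlap score drops to 0.5. This is why thin-object studies usually report overlap and balance measures alongside pixel accuracy.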

What This Means for Everyday Power and Beyond

For non-specialists, the bottom line is that this method lets computers draw power lines in images almost as reliably as a careful human inspector, but much faster and at large scale. By better understanding the size, position, and direction of slender objects, the system can trigger more accurate safety warnings, support live-line work without outages, and help spot defects before they cause failures. The same ideas could aid inspection of other long, thin structures, such as railway overhead cables or pipelines. As utilities push toward smarter, more automated grids, advances like this provide a crucial digital “pair of eyes” that helps keep the lights on safely and efficiently.

Citation: Zhang, D., Xie, P., Chen, H. et al. Precise segmentation method for slender power targets based on multi-scale perception and location-sensitive learning. Sci Rep 16, 4899 (2026). https://doi.org/10.1038/s41598-026-35084-6

Keywords: power line inspection, image segmentation, deep learning, infrastructure monitoring, computer vision