Clear Sky Science

Automated image inpainting for historical artifact restoration using hybridisation of transfer learning with deep generative models

Why fixing ancient art with AI matters

Museums and archaeologists around the world are racing against time. Ancient murals, frescoes, and painted walls are crumbling, fading, and cracking after centuries of exposure to moisture, pollution, and careless handling. Restoring them by hand is slow, expensive, and sometimes irreversible. This study presents a new artificial intelligence system that can digitally repair damaged images of historic artworks, offering curators and researchers a safe way to visualize what lost scenes might have looked like and to preserve them for future generations.

Cracked walls, missing paint, and a digital safety net

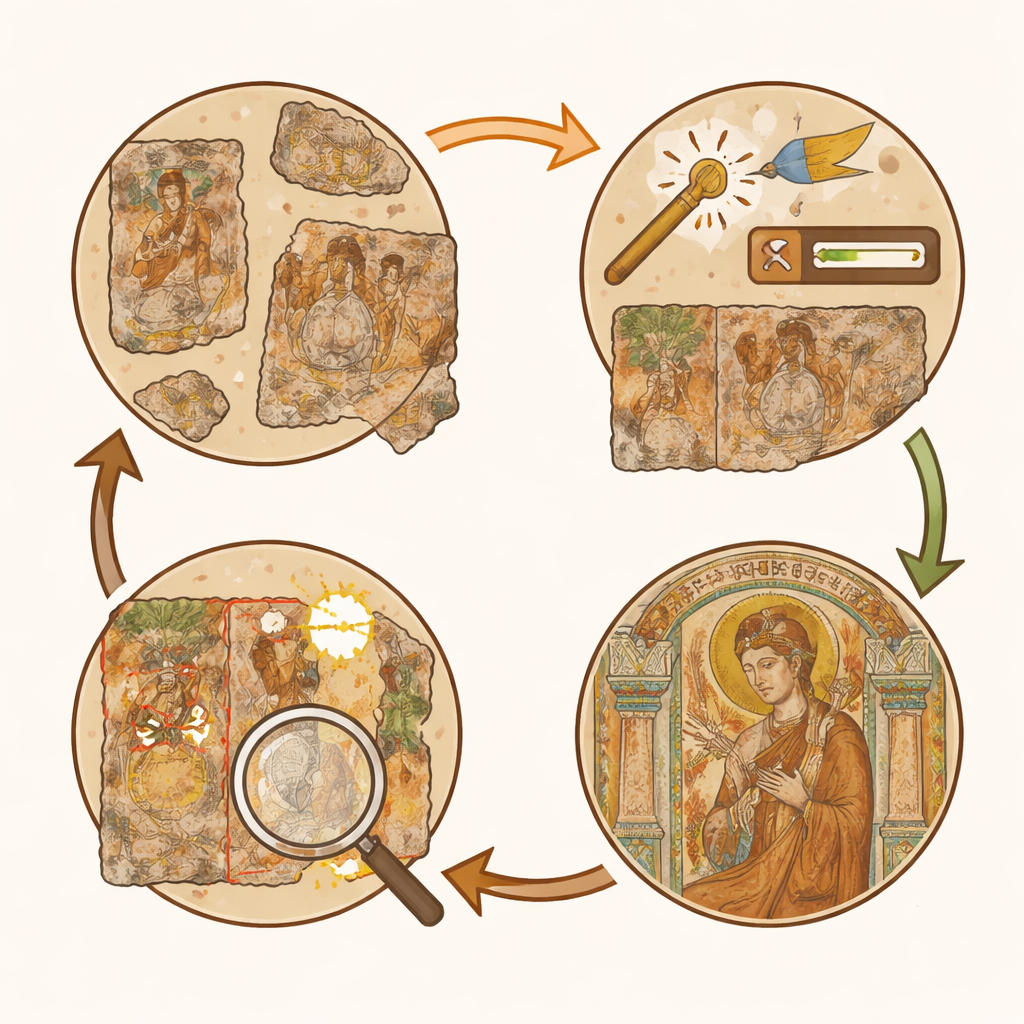

Traditional restoration often means a conservator physically retouches the artwork, adding new paint where old paint is gone. Even when done carefully, these changes cannot easily be undone and may introduce modern bias. Digital restoration takes a different path: high-resolution photographs of damaged murals are processed by computer algorithms that suggest how missing regions could be filled in. Because everything happens in software, proposed restorations can be compared, revised, or completely discarded without touching the physical object. The authors focus on murals from Dunhuang in China, home to a famous complex of cave temples whose wall paintings have suffered cracks, flaking, mould, and large missing patches. Their goal is to build a system that can repair such images automatically while keeping the original style, colors, and fine details as intact as possible.

From noisy photos to clear starting points

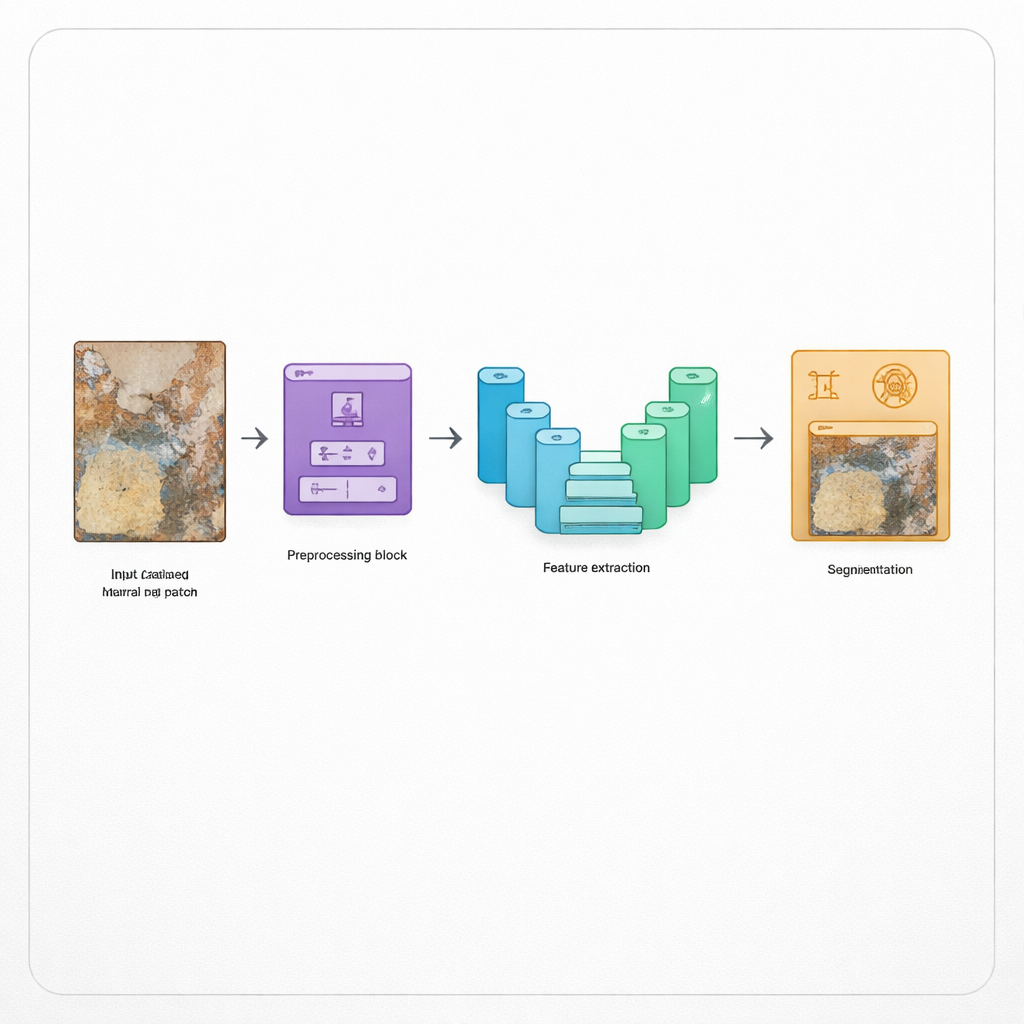

The first step in the system is to clean up the input photographs so that later processing is not misled by camera noise or poor lighting. The method uses an adaptive median filter, a technique that smooths away speckles and random bright or dark pixels while preserving sharp edges, such as outlines in a mural scene. It then enhances contrast so that faint lines and faded colors become easier to distinguish. These adjustments act like gently polishing a dusty lens: they do not invent new content, but they make existing details more visible. By carefully tuning this stage, the authors avoid over-smoothing, which could erase delicate brushwork that scholars care about.
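The denoising step described above can be sketched as follows. This is a minimal, textbook-style adaptive median filter for a grayscale image, assuming NumPy is available. The function name `adaptive_median_filter`, the `max_window` parameter, and its default of 7 are illustrative choices, not settings reported by the authors. The contrast-enhancement step is left out because the article does not name the method used.

```python
import numpy as np

def adaptive_median_filter(img, max_window=7):
    """Textbook adaptive median filter for a 2-D grayscale image.

    Illustrative sketch only: max_window=7 is an assumed default,
    not a value taken from the study.
    """
    img = np.asarray(img)
    pad = max_window // 2
    padded = np.pad(img, pad, mode="reflect")
    out = img.copy()
    rows, cols = img.shape
    for r in range(rows):
        for c in range(cols):
            pr, pc = r + pad, c + pad
            pixel = padded[pr, pc]
            # Grow the window (3x3, 5x5, ...) until its median is not an extreme value.
            for size in range(3, max_window + 1, 2):
                half = size // 2
                window = padded[pr - half:pr + half + 1, pc - half:pc + half + 1]
                lo, med, hi = window.min(), np.median(window), window.max()
                if lo < med < hi:
                    # The median looks reliable. Replace the pixel only if the
                    # pixel itself is an extreme (likely impulse noise).
                    if not (lo < pixel < hi):
                        out[r, c] = med
                    break
            else:
                # No window size gave a non-extreme median: use the largest window's median.
                out[r, c] = med
    return out
```

Because a pixel is replaced only when it is the extreme value of its neighbourhood, most intact pixels pass through unchanged, which is the property that helps preserve outlines. The double loop is written for readability, not speed.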

Teaching the system to understand damage

Once the image is cleaned, the model must decide which parts of a mural are intact and which are damaged. To do this, the authors use a compact but powerful neural network called SqueezeNet, adjusted with an attention mechanism so it focuses on informative regions. This network learns to read the visual language of murals: textures of plaster, pigment patterns, and shapes of cracks or bare wall. Its output feeds into another network called U-Net, designed for image segmentation, the precise "cut-out" style task of assigning a label to every pixel. U-Net labels every pixel as healthy paint, missing patch, or another form of deterioration. Thanks to skip connections and added attention and residual blocks, it keeps track of both broad layout (where a figure or border lies) and tiny features (like hairlines and ornaments), mapping out exactly where inpainting is needed.
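To make the last step concrete, here is a small sketch of how per-pixel class scores from a segmentation network could be turned into a map of regions to inpaint. It assumes NumPy and a score array of shape `(num_classes, H, W)`. The class indices `HEALTHY`, `MISSING`, and `OTHER_DAMAGE` and the function name `damage_mask_from_scores` are hypothetical: the article names the three categories but not how they are encoded.

```python
import numpy as np

# Hypothetical class encoding; the article lists the categories but not their indices.
HEALTHY, MISSING, OTHER_DAMAGE = 0, 1, 2

def damage_mask_from_scores(scores):
    """Convert per-pixel class scores of shape (num_classes, H, W) into labels and a mask.

    Returns (labels, mask): labels holds the highest-scoring class per pixel,
    and mask is True wherever the pixel is not labelled as healthy paint.
    """
    scores = np.asarray(scores)
    labels = np.argmax(scores, axis=0)   # pick the most likely class for each pixel
    mask = labels != HEALTHY             # everything except healthy paint needs inpainting
    return labels, mask

# Tiny 2x2 example with made-up scores for the three classes.
example_scores = np.array([
    [[0.90, 0.10], [0.20, 0.80]],   # healthy paint
    [[0.05, 0.70], [0.30, 0.10]],   # missing patch
    [[0.05, 0.20], [0.50, 0.10]],   # other deterioration
])
example_labels, example_mask = damage_mask_from_scores(example_scores)
```

In this toy example the top-right pixel is labelled as a missing patch and the bottom-left as other deterioration, so both end up in the inpainting mask.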

Letting an AI painter fill in the gaps

With the damaged regions marked, the final stage is to imagine how those areas might originally have looked. Here the authors combine two ideas from recent deep learning research: generative adversarial networks (GANs), which are good at generating realistic images, and transformer networks, which excel at capturing long-range relationships across an image. Their hybrid "transformer-based GAN" looks at the surrounding intact paint and at the mural as a whole to infer plausible textures, shapes, and colors for the missing zones. It does not simply copy and paste nearby pixels; instead, it synthesizes new content that blends smoothly into the scene and respects global composition, such as the symmetry of patterns or the continuity of robes and architectural lines.
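The "long-range relationships" that transformers capture come from self-attention, in which every image patch can draw on information from every other patch. The sketch below shows generic scaled dot-product self-attention in NumPy. It is not the authors' architecture: the function name `self_attention` and the random projection matrices are illustrative assumptions, and a real model would learn these weights and stack many such layers inside the generator.

```python
import numpy as np

def self_attention(tokens, w_q, w_k, w_v):
    """Generic scaled dot-product self-attention over a sequence of patch tokens.

    tokens: (num_patches, dim) array, one row per image patch.
    w_q, w_k, w_v: (dim, head_dim) projection matrices (learned in a real model).
    Returns (output, weights), where weights[i, j] is how much patch i attends to patch j.
    """
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])          # similarity between every pair of patches
    scores -= scores.max(axis=-1, keepdims=True)     # stabilise the softmax numerically
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)   # each row sums to 1
    return weights @ v, weights

# Illustrative usage with random data: 5 patches, 4 features each, head size 3.
rng = np.random.default_rng(0)
patch_tokens = rng.normal(size=(5, 4))
w_q, w_k, w_v = (rng.normal(size=(4, 3)) for _ in range(3))
attended, attention_weights = self_attention(patch_tokens, w_q, w_k, w_v)
```

Each row of `attention_weights` spans all patches, so a patch inside a damaged region can in principle borrow cues from intact areas anywhere in the mural, not only from its immediate neighbours.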

How well the digital restorer performs

To test their system, the researchers used a specialized dataset of Dunhuang mural images that includes artificially damaged versions and ground-truth originals. This allows them to measure how close the digitally restored output comes to the undamaged reference. They report that their method, called HDLIP-SHAR, outperforms several existing techniques on multiple quality scores, including pixel-level fidelity (PSNR, peak signal-to-noise ratio), structural similarity (SSIM), and a learned perceptual measure (LPIPS) that better reflects human visual judgment. The authors also report that the model runs efficiently, requiring fewer computing resources and less time than many rival approaches, which is important if museums want to process large collections.
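Of the three scores, PSNR is the simplest to compute by hand: it is the ratio of the maximum possible pixel value squared to the mean squared error between the reference and the restored image, expressed in decibels. The sketch below uses the standard definition and assumes NumPy and 8-bit images (maximum value 255). The function name `psnr` and the sample arrays are illustrative and are not the study's data.

```python
import numpy as np

def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference."""
    reference = np.asarray(reference, dtype=np.float64)
    restored = np.asarray(restored, dtype=np.float64)
    mse = np.mean((reference - restored) ** 2)   # mean squared error over all pixels
    if mse == 0:
        return float("inf")                      # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

# Illustrative usage on made-up 4x4 images (not data from the study).
ground_truth = np.full((4, 4), 120, dtype=np.uint8)
restoration = ground_truth.copy()
restoration[0, 0] = 124                          # one pixel is off by 4 grey levels
score = psnr(ground_truth, restoration)
```

SSIM and LPIPS are more involved (LPIPS relies on a pretrained neural network), so in practice they are usually taken from an established library and not reimplemented.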

What this means for saving history

For non-specialists, the key takeaway is that this AI system acts like a careful, reversible assistant rather than an overconfident painter. It can suggest how missing faces, patterns, or scenes in ancient murals might be completed, offering scholars a powerful visualization tool without putting the originals at risk. At the same time, the authors note limitations: the method still depends on having reasonably clear reference material, struggles with extremely severe damage, and does not yet incorporate historical scholarship or material analysis into its guesses. Even so, hybrid approaches like HDLIP-SHAR mark an important step toward using AI not just to enhance pretty pictures, but to help safeguard irreplaceable cultural heritage in a transparent, testable, and non-invasive way.

Citation: Swathi, B., Rao, D.B.J. Automated image inpainting for historical artifact restoration using hybridisation of transfer learning with deep generative models. Sci Rep 16, 4810 (2026). https://doi.org/10.1038/s41598-026-35056-w

Keywords: digital mural restoration, image inpainting, deep learning, cultural heritage, GAN transformer models