Clear Sky Science

The development and evaluation of agricultural question-answering systems based on large language models

Smart Answers for Growing Food

Farmers and agricultural experts make daily decisions about what to plant, how to irrigate, and how to protect crops. Getting good advice quickly can make the difference between a healthy harvest and a costly failure. This article explores how modern AI tools called large language models can power question‑and‑answer systems for agriculture, turning plain‑language questions into practical guidance for the field.

Why Farms Need Better Digital Help

Agriculture is becoming increasingly data‑driven, from satellite images to soil sensors. Yet many experts and technicians still struggle to access reliable, easy‑to‑understand information when they need it. Traditional AI systems often require huge labeled datasets, powerful computers, and specialist programmers. In contrast, large language models—trained on vast collections of text—can answer questions, summarize information, and reason about problems with far less task‑specific data. This makes them attractive tools for farmers, advisors, and extension services who need fast, low‑cost support.

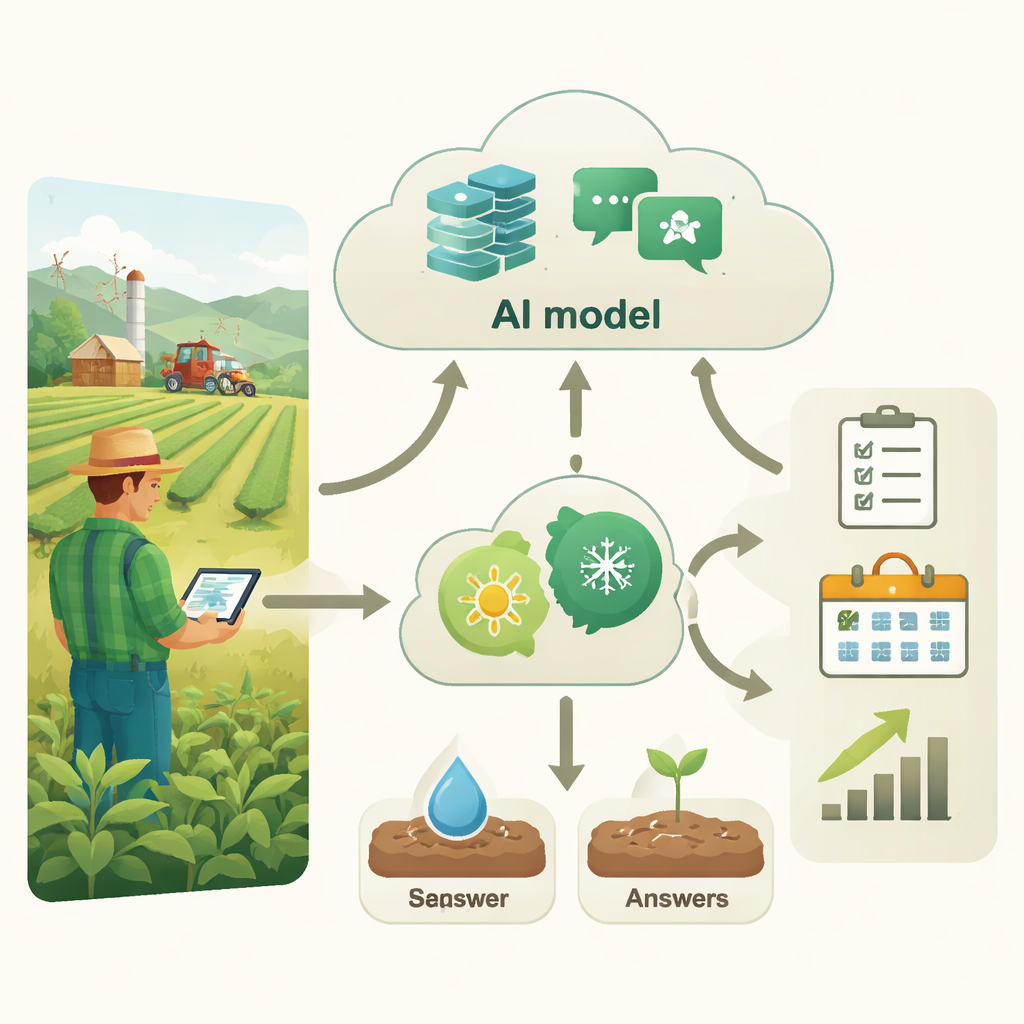

Building an Agricultural Answering Machine

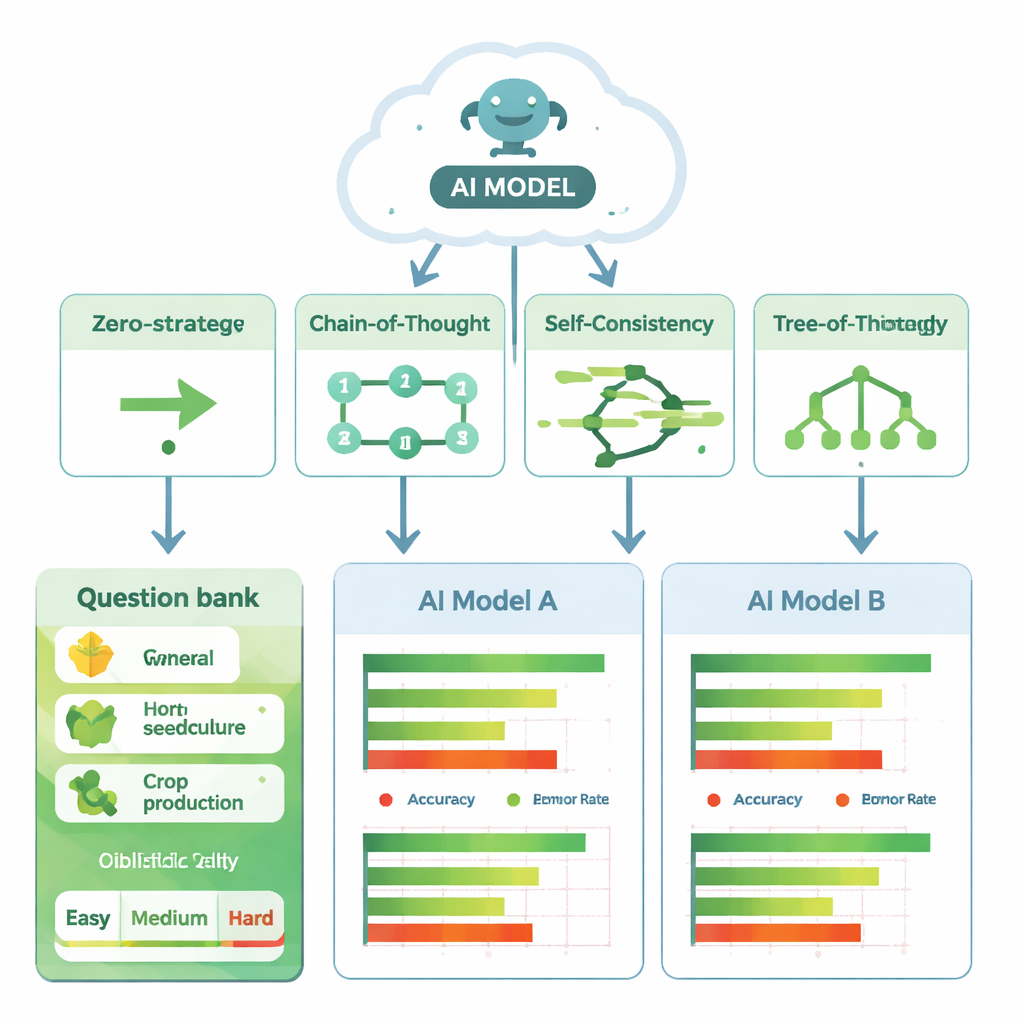

To see how well these models can work in practice, the authors created an agricultural question‑answering system called AgriQAs. They gathered 90 multiple‑choice questions from reliable agricultural sources, covering three areas: general agriculture, horticulture, and crop production. Each topic included easy, medium, and difficult questions, from simple definitions to problems that require several steps of reasoning. Two leading language models were tested: one from OpenAI (GPT‑4o) and one from Google (Gemini‑2.0‑flash). For every question, both models had to choose the correct option from four answers, just as a human taking an exam would.
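A multiple-choice benchmark of this kind can be sketched in a few lines of Python. The block below is an illustration only, not the authors' code: the `Question` record, its field names, and the `accuracy` helper are assumptions introduced here to show how a four-option question and an exam-style score might be represented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    """One multiple-choice item (hypothetical layout, not the AgriQAs format)."""
    text: str
    options: dict[str, str]  # option letter -> option text, e.g. {"A": "..."}
    answer: str              # letter of the correct option
    topic: str               # e.g. "general agriculture", "horticulture"
    difficulty: str          # e.g. "easy", "medium", "difficult"


def accuracy(questions, predictions):
    """Return the fraction of questions whose predicted letter is correct."""
    if len(questions) != len(predictions):
        raise ValueError("need exactly one prediction per question")
    if not questions:
        return 0.0
    correct = sum(
        q.answer == p.strip().upper() for q, p in zip(questions, predictions)
    )
    return correct / len(questions)


# A made-up example item, written for this sketch and not taken from the study.
EXAMPLE = Question(
    text="Which of these is a plant macronutrient?",
    options={"A": "Nitrogen", "B": "Zinc", "C": "Iron", "D": "Boron"},
    answer="A",
    topic="general agriculture",
    difficulty="easy",
)
```

Calling `accuracy([EXAMPLE], ["a"])` scores the single example as correct, because predictions are trimmed and upper-cased before comparison. Keeping the topic and difficulty on each record makes it straightforward to break scores down by category later.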

Teaching AI to Think Through Farm Problems

Simply asking a model a question does not always produce the best answer. The way the question is written, known as the “prompt,” can strongly influence the result. The researchers compared four prompting styles. In the simplest, called Zero‑Shot, the model was just given the question and told to pick an option. In Chain‑of‑Thought, it was asked to show step‑by‑step reasoning. In Self‑Consistency, the model generated several independent lines of reasoning and kept the answer on which most of them agreed. In Tree‑of‑Thought, it was encouraged to explore several different solution paths before deciding. The team also used an automatic prompt‑engineering tool to refine the wording of the instructions, strengthening the model’s “role” as an agricultural expert and clarifying how it should reason.
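To make the difference between these styles concrete, here is a minimal Python sketch of three of them. It is not the study's implementation: the prompt wording, the `ROLE` text, and every function name are assumptions made for illustration, and `ask` stands in for whatever call sends a prompt to a model and returns its reply. Self-Consistency is treated here as a majority vote over several sampled replies, which is a common way to implement it. Tree-of-Thought is left out because it needs a more elaborate search loop.

```python
import re
from collections import Counter
from typing import Callable, Optional

# Hypothetical role instruction; the study's refined wording is not given.
ROLE = "You are an agricultural expert."


def format_question(text: str, options: dict[str, str]) -> str:
    """Lay out the question followed by one lettered option per line."""
    return "\n".join([text] + [f"{key}) {value}" for key, value in options.items()])


def zero_shot_prompt(text: str, options: dict[str, str]) -> str:
    """Zero-Shot: just the question and an instruction to pick an option."""
    return (f"{ROLE}\n{format_question(text, options)}\n"
            "Finish with a line 'Answer: <letter>' and nothing else.")


def chain_of_thought_prompt(text: str, options: dict[str, str]) -> str:
    """Chain-of-Thought: ask for visible step-by-step reasoning first."""
    return (f"{ROLE}\n{format_question(text, options)}\n"
            "Reason step by step, then finish with a line 'Answer: <letter>'.")


def extract_letter(reply: str) -> Optional[str]:
    """Return the letter after the last 'Answer:' marker, or None if absent."""
    found = re.findall(r"Answer:\s*\(?([A-Da-d])\b", reply)
    return found[-1].upper() if found else None


def self_consistency(ask: Callable[[str], str], prompt: str,
                     samples: int = 5) -> Optional[str]:
    """Sample several replies and keep the most frequent answer letter.

    Ties go to the letter that was seen first; unparseable replies are ignored.
    """
    votes: Counter = Counter()
    for _ in range(samples):
        letter = extract_letter(ask(prompt))
        if letter is not None:
            votes[letter] += 1
    return votes.most_common(1)[0][0] if votes else None
```

In practice `ask` would wrap a real model call with sampling enabled, so that repeated calls can produce different reasoning paths. With a deterministic model every sample would be identical and the vote would add nothing.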

How Well Did the AI Advisors Perform?

Across all questions, both models scored well, but performance depended strongly on how they were prompted. GPT‑4o achieved accuracy between about 85% and 95%, while Gemini‑2.0‑flash ranged from about 75% to 88%. The weakest results for both came from the simple Zero‑Shot style, which offers little guidance on how to reason. The strongest results relied on more structured thinking: Self‑Consistency gave GPT‑4o its best scores, and Tree‑of‑Thought worked best for Gemini‑2.0‑flash. Errors were most common on the hardest questions and in the crop‑production category, which often requires detailed, multi‑step decisions. The authors went beyond simple averages, using formal statistical tests to confirm that the differences between prompting methods and models were statistically significant rather than the product of chance.
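This summary does not say which statistical tests the authors used. As one illustration of how such a check can work when two methods answer the same set of questions, the Python sketch below implements an exact McNemar test, a standard choice for paired right-or-wrong outcomes. The choice of test and the function name `mcnemar_exact` are assumptions made here, not details from the study.

```python
from math import comb


def mcnemar_exact(correct_a, correct_b):
    """Two-sided exact McNemar p-value for two methods on the same questions.

    Each argument is a sequence of booleans, one per question, saying whether
    that method answered correctly. Only questions where the two methods
    disagree carry information about which one is better.
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("both methods must be scored on the same questions")
    only_a = sum(1 for a, b in zip(correct_a, correct_b) if a and not b)
    only_b = sum(1 for a, b in zip(correct_a, correct_b) if b and not a)
    disagreements = only_a + only_b
    if disagreements == 0:
        return 1.0  # the methods never disagree, so there is no evidence
    smaller = min(only_a, only_b)
    # Under the null hypothesis each disagreement is a fair coin flip.
    tail = sum(comb(disagreements, i) for i in range(smaller + 1))
    return min(1.0, 2 * tail / 2 ** disagreements)
```

A small p-value suggests that one method beats the other more often than chance alone would explain. Comparing more than two prompting styles at once would call for a different test or a correction for multiple comparisons.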

What This Means for Future Farming

For non‑specialists, the key message is that “how you ask” matters almost as much as “who you ask” when working with AI. With carefully designed prompts, large language models can serve as powerful assistants for agricultural engineers and technicians, offering fast, reasonably accurate advice without custom training on every new problem. The authors emphasize, however, that these systems must be used responsibly: biased or incorrect answers could mislead farmers and cause economic loss. As future work adds regional data, sensor information, and clearer guidance from human experts, tools like AgriQAs could become everyday companions in sustainable, high‑tech farming—helping growers make better decisions while conserving resources.

Citation: Eldem, A., Eldem, H. The development and evaluation of agricultural question-answering systems based on large language models. Sci Rep 16, 5357 (2026). https://doi.org/10.1038/s41598-026-35003-9

Keywords: agricultural AI, question answering, large language models, prompt engineering, digital farming