Clear Sky Science

An accurate realtime underwater object segmentation using improved dual-domain YOLOv11-UOS with physics guided adaptive enhancement and attention-boosting

Diving Deeper with Sharper Digital Eyes

Our oceans are increasingly explored not just by divers and submarines, but by smart cameras carried on underwater robots. These cameras help search for shipwrecks, inspect offshore pipelines, and monitor coral reefs and fish populations. Yet underwater pictures are often murky, blue‑green, and full of visual clutter, making it hard even for humans—let alone computers—to pick out objects. This paper introduces a new computer‑vision system that cleans up underwater images and then spots and outlines objects in them, fast enough to guide real‑time robotic missions.

Why Seeing Underwater Is So Difficult

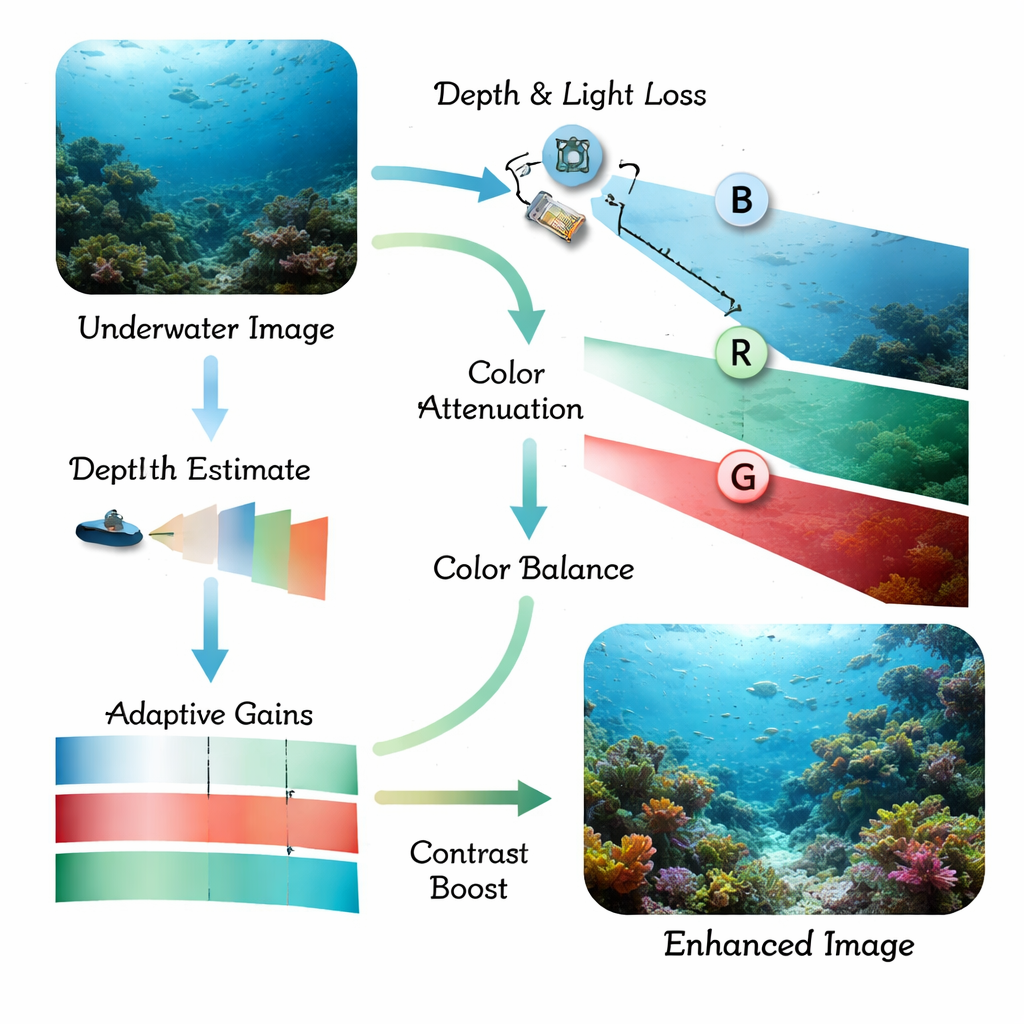

Light behaves very differently in water than in air. As sunlight travels downward, red tones vanish first, then greens, leaving a bluish cast and dull, low‑contrast scenes. Tiny particles in the water scatter light, creating haze that blurs edges and hides small details. Traditional object‑detection programs, and even modern deep‑learning models, struggle with these distorted images: fish blend into coral, man‑made structures disappear into the background, and low‑light scenes become nearly unreadable. Previous research usually tackled either image clean‑up or object detection alone, which often left the final system too slow, too fragile, or still blind in especially murky water.

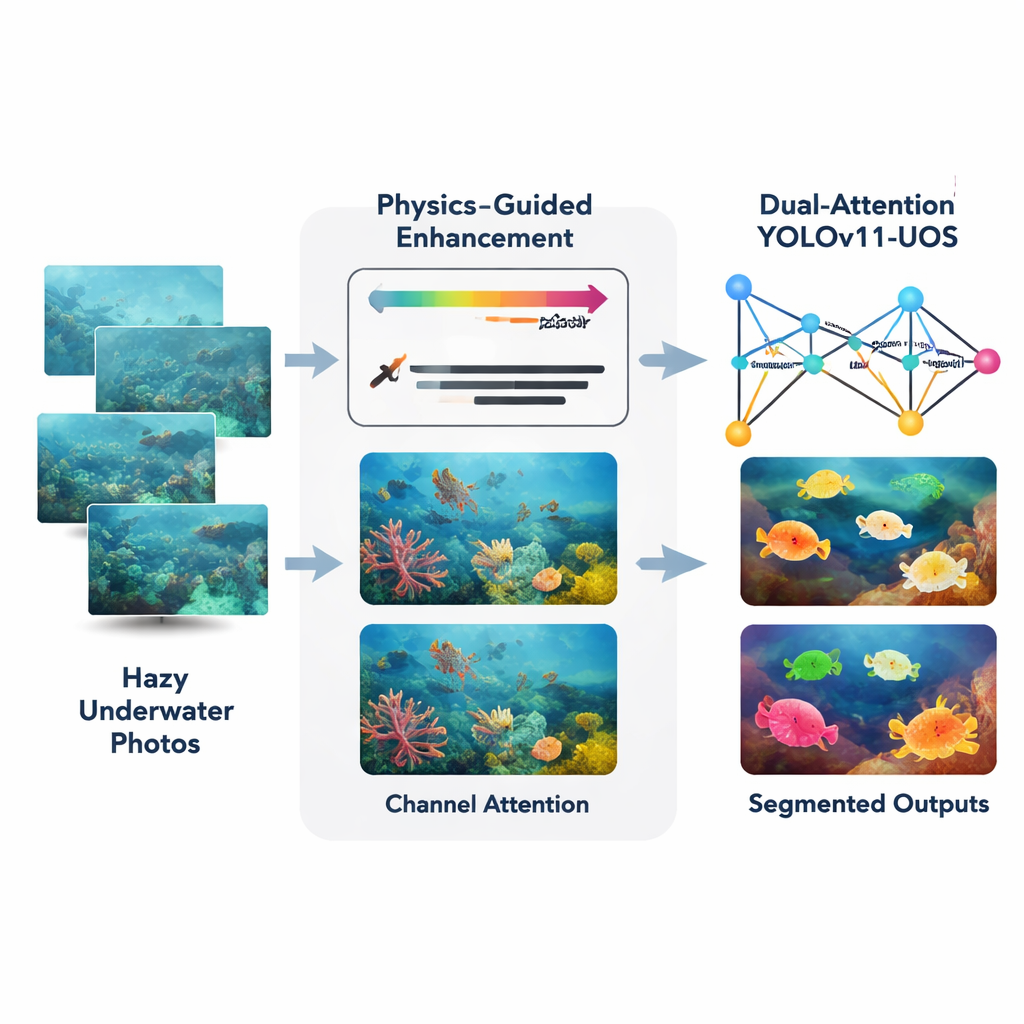

A Two‑Step Strategy: Clean First, Then Focus

The authors propose a combined approach built around a recent real‑time detector called YOLOv11, customized here for underwater scenes and instance segmentation (drawing a precise outline for each object). First, a front‑end module called Adaptive Physics‑Guided Enhancement takes in raw underwater photos and corrects them using a simplified physical model of how light is absorbed and scattered in water. It estimates how far each part of the scene is from the camera, then compensates for the stronger loss of red light compared with green and blue. This restores more natural colors and boosts local contrast, while a careful histogram‑based step sharpens edges without amplifying noise, even in dark or turbid regions.
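To make the physics step concrete, here is a minimal sketch of a simplified underwater image-formation model and its inversion for a single pixel. It is not the authors' Adaptive Physics‑Guided Enhancement module: the attenuation coefficients, background-light values, and function names are hypothetical, and the distance to the scene is assumed to be known rather than estimated from the image.

```python
import math

# Hypothetical per-channel attenuation coefficients (1/m); red fades fastest.
# Illustrative values only, not taken from the paper.
BETA = {"r": 0.60, "g": 0.12, "b": 0.08}
# Hypothetical background (scattered) light per channel, in [0, 1].
BACKGROUND = {"r": 0.05, "g": 0.45, "b": 0.55}


def transmission(distance_m, beta):
    """Fraction of light that survives a path of distance_m (Beer-Lambert law)."""
    return math.exp(-beta * distance_m)


def degrade(pixel, distance_m):
    """Forward model: attenuate the true color and add scattered background light."""
    out = {}
    for c in "rgb":
        t = transmission(distance_m, BETA[c])
        out[c] = pixel[c] * t + BACKGROUND[c] * (1.0 - t)
    return out


def restore(pixel, distance_m, t_min=0.05):
    """Invert the forward model; t_min limits how much a faint channel is amplified."""
    out = {}
    for c in "rgb":
        t = max(transmission(distance_m, BETA[c]), t_min)
        value = (pixel[c] - BACKGROUND[c] * (1.0 - t)) / t
        out[c] = min(1.0, max(0.0, value))  # keep the result in the valid range
    return out


true_color = {"r": 0.80, "g": 0.40, "b": 0.30}  # a reddish object
observed = degrade(true_color, distance_m=3.0)   # what the camera would record
recovered = restore(observed, distance_m=3.0)    # close to true_color again
```

In this toy setting the reddish object is recorded with more blue than red at three meters, and the inversion brings the original color back. A real system has to estimate distance and background light from the image itself, which is where most of the difficulty lies.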

Teaching the Network Where to Look

Once the image has been cleaned up, it is passed to an upgraded YOLOv11 backbone that has been fitted with attention mechanisms. These added modules behave a bit like a spotlight and a color filter. Spatial attention tells the network to pay more attention to important regions—such as the outline of a fish or the edge of a submerged artifact—and to ignore distracting background like sand or swaying plants. Channel attention adjusts how strongly the system weighs different color and texture patterns, so that useful visual cues are emphasized while irrelevant ones are muted. Together, these dual attention stages help the network build sharper internal representations before it decides where objects are and what they are.
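The spotlight-and-filter idea can be sketched on a tiny feature map. The following is a simplified illustration in plain Python, loosely in the style of common channel-then-spatial attention blocks, and is not the authors' implementation. Real modules learn their gates with small neural layers and run on tensors; here each gate is just a sigmoid of an average, and all names are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def channel_attention(fmap):
    """Scale each channel by a gate computed from its global average."""
    gated = []
    for channel in fmap:
        values = [v for row in channel for v in row]
        gate = sigmoid(sum(values) / len(values))  # a learned layer would go here
        gated.append([[v * gate for v in row] for row in channel])
    return gated


def spatial_attention(fmap):
    """Scale each location by a gate computed from the mean across channels."""
    n_ch = len(fmap)
    height, width = len(fmap[0]), len(fmap[0][0])
    gates = [[sigmoid(sum(fmap[c][y][x] for c in range(n_ch)) / n_ch)
              for x in range(width)] for y in range(height)]
    return [[[fmap[c][y][x] * gates[y][x] for x in range(width)]
             for y in range(height)] for c in range(n_ch)]


# Feature map laid out as [channel][row][column].
features = [
    [[4.0, 0.0], [0.0, 0.0]],  # channel 0: strong response at the top-left
    [[0.2, 0.2], [0.2, 0.2]],  # channel 1: weak, uniform background response
]
attended = spatial_attention(channel_attention(features))
```

After both stages, the strong top-left response in the informative channel is preserved best, while the weak background channel is damped everywhere, and most of all away from the salient location.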

Testing on Real Oceans and Tough Conditions

To see how well the system works in practice, the researchers trained and tested it on several public underwater image collections plus a new custom dataset of over 7,000 carefully labeled photos from coastal waters with varying depth and cloudiness. They measured standard detection and segmentation scores and compared their method against widely used models such as U‑Net, DeepLab, transformer‑based segmenters, and a baseline YOLOv11 system without the new modules. The combined enhancement‑plus‑attention design improved average detection accuracy by about 6.5 percentage points over the baseline YOLOv11, with noticeably cleaner object outlines and fewer missed or falsely detected items. Importantly, the system still runs at around 38 frames per second on a modern graphics processor, fast enough for near real‑time use on robotic platforms.
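Two of the quantities mentioned above are easy to illustrate. Segmentation scores are typically built on intersection over union (IoU), which measures how well a predicted outline overlaps the labeled one, and a frame rate translates directly into a time budget per image. The sketch below uses made-up masks and hypothetical function names; it is not the evaluation code used in the study.

```python
def mask_iou(pred, truth):
    """Intersection over union of two binary masks given as nested lists of 0/1."""
    inter = union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            inter += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # Convention chosen here: two empty masks count as a perfect match.
    return inter / union if union else 1.0


def frame_budget_ms(fps):
    """Time available to process one frame, in milliseconds."""
    return 1000.0 / fps


truth = [[1, 1, 0],
         [1, 1, 0],
         [0, 0, 0]]
pred = [[0, 1, 1],
        [1, 1, 0],
        [0, 0, 0]]

iou = mask_iou(pred, truth)   # 3 shared pixels out of 5 covered in total
budget = frame_budget_ms(38)  # roughly 26 ms per frame at 38 frames per second
```

At 38 frames per second, enhancement, detection, and outlining together must finish in about 26 milliseconds per image.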

What This Means for Ocean Robots and Research

In plain terms, the study shows that smart preprocessing and focused attention let computers "see" much better underwater. By first undoing some of the physical effects that degrade underwater photos and then guiding the detection network to concentrate on the most informative regions and colors, the method delivers sharper, more reliable outlines of fish, coral, and man‑made structures. This can help autonomous underwater vehicles navigate safely, monitor fragile marine ecosystems, and inspect critical undersea infrastructure without human supervision. Challenges remain in extremely muddy water or very deep, red‑light‑starved scenes, but the framework offers a practical step toward robust, real‑time underwater vision that can support future 3D mapping and multi‑sensor exploration of the ocean.

Citation: Deluxni, N., Sudhakaran, P., Alroobaea, R. et al. An accurate realtime underwater object segmentation using improved dual-domain YOLOv11-UOS with physics guided adaptive enhancement and attention-boosting. Sci Rep 16, 4804 (2026). https://doi.org/10.1038/s41598-026-35001-x

Keywords: underwater vision, marine robotics, image enhancement, object segmentation, computer vision