Clear Sky Science

Examining human reliance on artificial intelligence in decision making

Why Our Trust in Smart Machines Matters

From movie recommendations to job screening and criminal justice, artificial intelligence (AI) is increasingly helping people make decisions. Many of us assume that computers are less biased and more accurate than humans. But what actually happens when people receive advice from an AI system—do they use it wisely, or lean on it too heavily? This study explores how people respond to AI guidance compared with guidance from other humans, and what that means for the growing role of AI in everyday decisions.

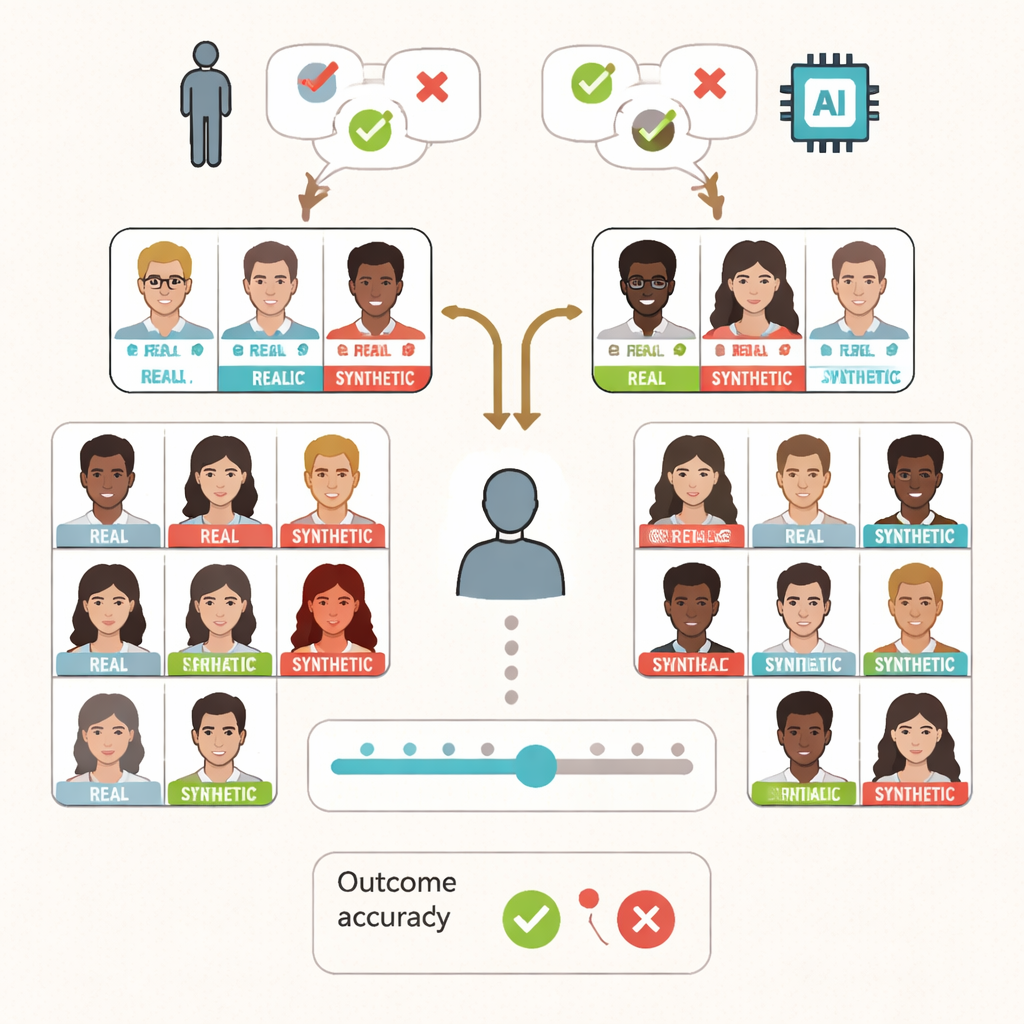

Testing Real People with Real and Fake Faces

The researchers asked 295 adults to perform a seemingly simple task: decide whether a face on a screen was a photograph of a real person or an AI-generated fake. Each participant saw 80 faces, half real and half synthetic, that had been chosen from earlier work so that most people could classify them fairly well, but not perfectly. Alongside every face, participants saw a short piece of guidance that said whether the face was “real” or “synthetic.” They were told this advice came either from a group of human experts or from an AI system. In reality, all guidance was pre-programmed and correct only half of the time.
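The design described above can be sketched in a few lines of Python. This is only an illustration, not the researchers' actual materials: the function name `build_trials`, the field names, and the choice to balance correct and incorrect guidance within each face type are all assumptions. The article states only that there were 80 faces, half real and half synthetic, and that the guidance was correct half of the time.

```python
import random

def build_trials(n_faces=80, seed=0):
    """Build a shuffled trial list for a real-vs-synthetic face task (hypothetical sketch)."""
    rng = random.Random(seed)
    half = n_faces // 2
    trials = []
    for truth in ("real", "synthetic"):
        other = "synthetic" if truth == "real" else "real"
        # Assumption: guidance is correct on half of the faces of each type.
        correct_flags = [True] * (half // 2) + [False] * (half - half // 2)
        rng.shuffle(correct_flags)
        for is_correct in correct_flags:
            trials.append({
                "truth": truth,
                "guidance": truth if is_correct else other,
                "guidance_correct": is_correct,
            })
    rng.shuffle(trials)  # randomize presentation order
    return trials

trials = build_trials()
```

Because the guidance is right exactly as often as it is wrong, a participant who followed it on every trial would score 50 percent, which is no better than guessing.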

Using Advice, but Not Blindly

The central question was whether people would simply follow the guidance or think for themselves. The results show that participants did not behave like passive button-pressers. They followed the advice much more often when it happened to be correct and were more likely to ignore it when it was wrong, regardless of whether it supposedly came from humans or AI. Overall accuracy in spotting real versus synthetic faces stayed at around two-thirds, very similar to that of a separate group from earlier research who performed the same task without any guidance at all. In other words, the presence of a “helper,” whether labeled human or AI, neither dramatically improved nor ruined performance on average.

When Positive Attitudes Toward AI Backfire

Beneath these averages, however, a more subtle pattern emerged. Participants also completed surveys about how much they generally trust other people and how they feel about AI. When they received AI guidance, those who held more positive attitudes toward AI were actually worse at telling real faces from synthetic ones than participants with more cautious or negative views of AI. This effect did not appear when people believed their guidance came from humans, suggesting that AI advice may uniquely shape, and sometimes distort, our decision-making. The study also found that people who claimed they always relied on the guidance performed worse than those who said they used it only sometimes or not at all.

People Still Play the Deciding Role

The researchers dug deeper into how people balanced their own judgement with the guidance. On average, participants showed a bias toward labeling faces as real, and that bias grew slightly among those who reported a greater tendency to trust other humans. Yet the way people used the guidance looked “strategic”: they seemed to call on it especially when they were less sure. Confidence ratings matched performance fairly well—when people felt more confident, they were generally more accurate—indicating that participants had a reasonable sense of when they were getting it right or wrong, even with AI in the loop.
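The difference between how well someone tells real from synthetic faces and how strongly they lean toward answering “real” can be made concrete with signal detection theory, a common way to separate the two. The sketch below is an assumption for illustration only: this summary does not say which measures the authors used, the function name is hypothetical, and the counts in the example are invented rather than taken from the study.

```python
from statistics import NormalDist

def sensitivity_and_bias(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, criterion), treating "real" as the signal.

    hits: real faces called real; misses: real faces called synthetic;
    false_alarms: synthetic faces called real;
    correct_rejections: synthetic faces called synthetic.
    """
    # Log-linear correction keeps the rates away from exactly 0 or 1.
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    d_prime = z(hit_rate) - z(fa_rate)            # higher = better discrimination
    criterion = -0.5 * (z(hit_rate) + z(fa_rate))  # negative = leans toward "real"
    return d_prime, criterion

# Invented counts for one hypothetical participant (40 real, 40 synthetic faces).
d_prime, criterion = sensitivity_and_bias(30, 10, 16, 24)
```

With these made-up counts the participant is right on 54 of 80 faces, the sensitivity value is positive, and the criterion is negative, which is what a tendency to label faces as real looks like in this framework.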

What This Means for Everyday AI Tools

The key message is that AI does not magically remove human bias, nor does it automatically overwhelm our judgement. People often treat AI advice similarly to human advice and can ignore it when it looks unhelpful. But when someone already thinks very positively about AI, they may be more likely to lean on it in ways that reduce their accuracy. As AI systems spread into critical areas such as healthcare, security, and justice, designers and policymakers will need to understand these human tendencies. This study suggests that effective AI use depends not just on better algorithms, but on informed people who know when to trust the machine and when to trust themselves.

Citation: Pearson, J., Dror, I., Jayes, E. et al. Examining human reliance on artificial intelligence in decision making. Sci Rep 16, 5345 (2026). https://doi.org/10.1038/s41598-026-34983-y

Keywords: artificial intelligence, human decision making, trust in AI, automation bias, deepfake faces