Clear Sky Science

A long-range LiDAR–camera extrinsic calibration method for rail transit

Keeping Trains Safe From Afar

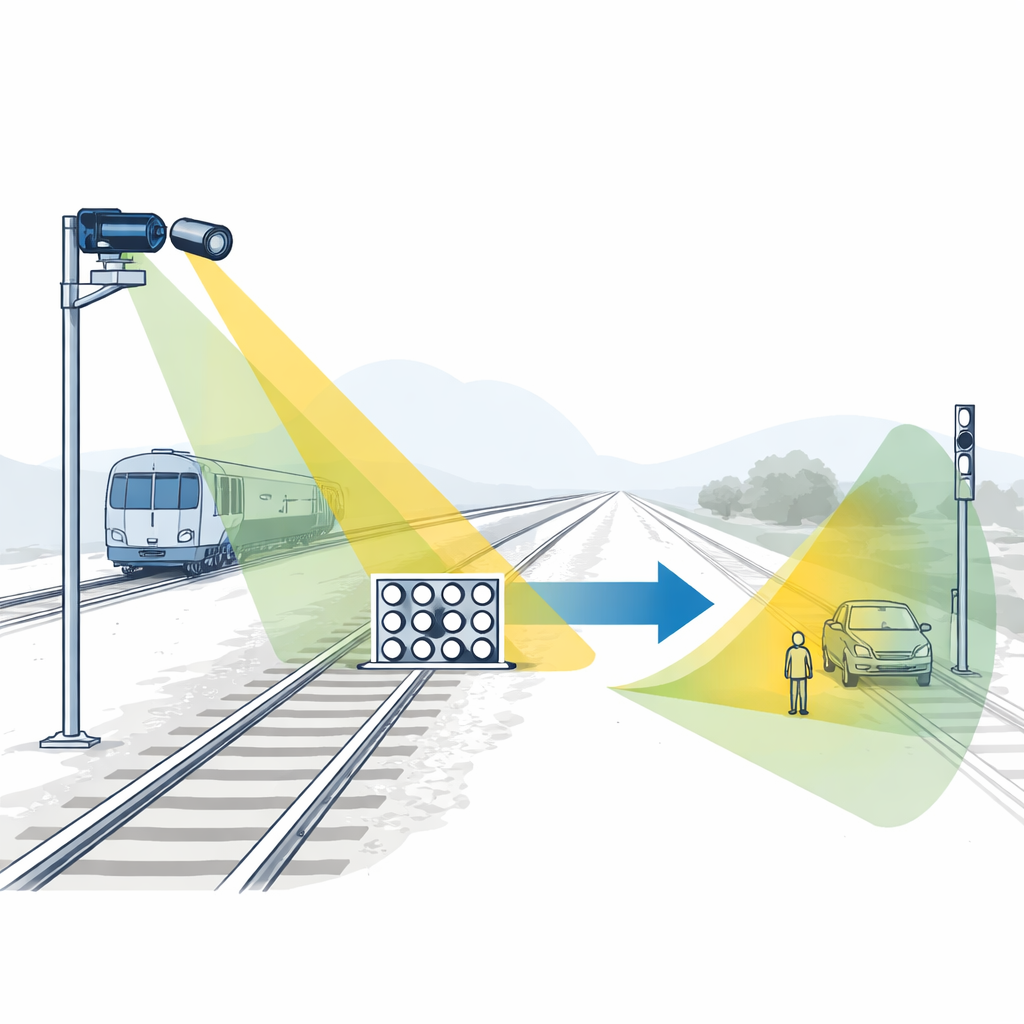

Modern driverless trains rely on electronic “eyes” to watch the tracks for obstacles long before a human could see them. Two of the most important eyes are cameras and laser scanners called LiDARs, which each sense the world in different ways. To work together, they must be aligned with great precision, a task that becomes surprisingly difficult when watching tracks hundreds of meters away. This study presents a new way to align these sensors so they can reliably protect rail systems at long range.

Why Sensor Alignment Matters

On an autonomous train, cameras capture detailed color images while LiDAR measures distance by firing pulses of light and timing their return. Fusing these two views lets the system spot and track objects that might intrude on the track area, from a stalled car at a crossing to debris on the rails. But fusion only works if the system knows exactly how the camera and LiDAR are positioned and oriented relative to each other. Because a small angular error displaces a projected point in proportion to its distance, a misalignment that is negligible up close can shift a detected obstacle by tens of centimeters, or even meters, far down the track. That could make automatic protection systems slower or less reliable.
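
A quick worked example makes the distance effect concrete. The sketch below assumes a hypothetical 0.1° rotation error (not a figure from the study) and ignores translation error, which does not grow with range. The sideways offset of a projected point is then simply the range times the tangent of the angular error.

```python
import math

def lateral_offset_m(range_m: float, angle_error_deg: float) -> float:
    """Sideways shift of a projected point caused by a small angular
    misalignment between the sensors: offset = range * tan(angle error)."""
    return range_m * math.tan(math.radians(angle_error_deg))

# Hypothetical 0.1 degree rotation error evaluated at increasing ranges.
for r in (50, 150, 300):
    print(f"{r:>4} m -> {lateral_offset_m(r, 0.1):.3f} m")
```

With this assumed error, a point moves about 9 cm sideways at 50 m but roughly half a meter at 300 m, six times more, even though the sensors themselves have not moved at all.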

The Challenge of Seeing Far Down the Track

For rail applications, engineers often use telephoto lenses so the camera can clearly see objects hundreds of meters away. At those ranges, however, the LiDAR returns from any calibration target become very sparse: only a few laser points land on the board used to align the sensors. Most existing alignment techniques assume a dense cloud of LiDAR points or rich edges in the scene, conditions that simply do not hold at long range. As a result, it becomes hard to find matching features between the 2D image and the 3D point cloud with enough accuracy to support safe train control.
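
To see why the board receives so few returns, one can estimate how far apart neighboring laser beams are at a given range. The sketch assumes a hypothetical 1 m × 1 m board facing the sensor and a hypothetical LiDAR with 0.1° horizontal and 0.2° vertical angular resolution. Real sensors and scan patterns differ, so the numbers are order-of-magnitude only.

```python
import math

def expected_points_on_board(range_m, board_w_m, board_h_m, h_res_deg, v_res_deg):
    """Order-of-magnitude count of LiDAR returns on a flat board facing the sensor.

    Neighboring beams are about range * angular_step apart, so the board
    intercepts roughly (width / horizontal spacing) * (height / vertical spacing)
    beams. Dropouts, incidence angle and scan-pattern details are ignored.
    """
    h_spacing = range_m * math.radians(h_res_deg)
    v_spacing = range_m * math.radians(v_res_deg)
    return (board_w_m / h_spacing) * (board_h_m / v_spacing)

# Hypothetical sensor and board: 1 m x 1 m board, 0.1 deg x 0.2 deg resolution.
for r in (20, 100, 200):
    print(f"{r:>4} m: ~{expected_points_on_board(r, 1.0, 1.0, 0.1, 0.2):.0f} points")
```

Under these assumptions the expected count falls from about 410 returns at 20 m to about 4 at 200 m, because it shrinks with the square of the range.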

A Smarter Calibration Board

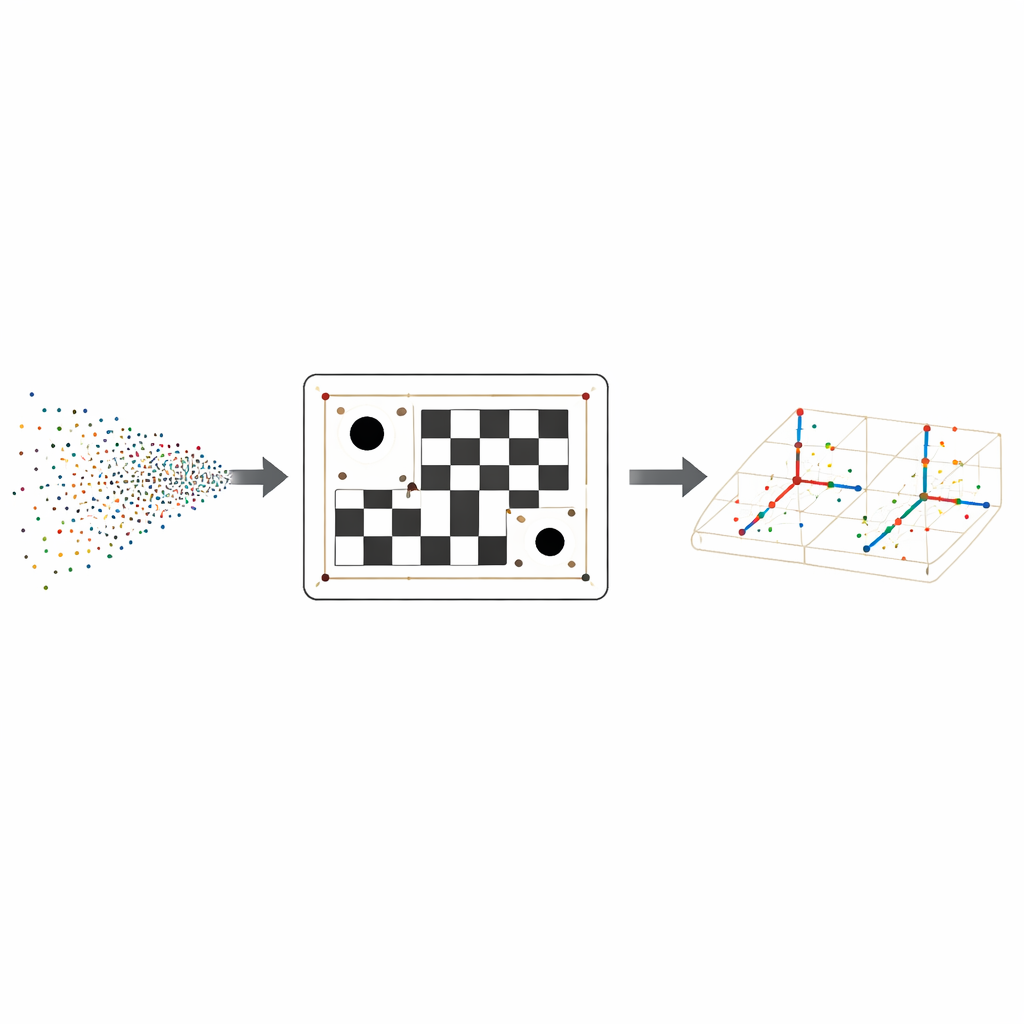

To overcome this, the authors design a special calibration board that combines a familiar black–white checker pattern with three circular holes whose centers form an irregular triangle. The checker pattern supplies many precise corner points in the camera image, while the holes create strong geometric clues for the LiDAR, which can easily detect their round edges even from far away. Because the three holes are placed in an asymmetric triangle, the board’s orientation in space can be determined unambiguously, avoiding confusion from mirrored or rotated views.
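
The benefit of an irregular triangle can be illustrated with a small matching routine. Because all three sides differ in length, each hole can be identified by the length of the side opposite it, so detected centers can be labeled regardless of how the board is turned or in which order they were found. The layout in `DESIGN`, the `min_gap` tolerance and the function names are hypothetical. The paper's board dimensions are not given here, and matching by side lengths is an illustration rather than the authors' stated procedure.

```python
from itertools import permutations
import numpy as np

# Hypothetical hole-center layout in board coordinates (meters), chosen so all
# three sides differ clearly: |BC| ~ 0.65, |CA| ~ 0.50, |AB| = 0.80.
DESIGN = {"A": (0.10, 0.10), "B": (0.90, 0.10), "C": (0.39, 0.51)}

def opposite_sides(pts):
    """Length of the side opposite each of three vertices (2-D or 3-D points)."""
    p = np.asarray(pts, dtype=float)
    return np.array([np.linalg.norm(p[(i + 1) % 3] - p[(i + 2) % 3]) for i in range(3)])

def is_scalene(pts, min_gap=0.05):
    """True if every pair of side lengths differs by more than min_gap."""
    return bool(np.all(np.diff(np.sort(opposite_sides(pts))) > min_gap))

def label_centers(detected):
    """Assign unordered detected hole centers to design labels by choosing the
    permutation whose opposite-side lengths best match the design."""
    names = list(DESIGN)
    ref = opposite_sides([DESIGN[n] for n in names])
    det = opposite_sides(detected)
    best = min(permutations(range(3)),
               key=lambda perm: float(np.sum((det[list(perm)] - ref) ** 2)))
    return {names[k]: np.asarray(detected[best[k]], dtype=float) for k in range(3)}
```

Side lengths alone fix which hole is which. Combined with the board's plane normal, the three labeled centers then pin down the board's full orientation. An isosceles or equilateral layout would leave two or more labelings equally good, which is exactly the ambiguity the asymmetric design avoids.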

Turning Sparse Dots Into Reliable Matches

On the LiDAR side, the method first cleans the point cloud and fits a flat plane representing the board. It then projects the points onto this plane and uses a robust circle-fitting procedure to find each hole's center, refining their positions by enforcing the known physical distances between the holes. With the triangle of hole centers established, the algorithm builds a local coordinate grid across the board, predicts where every checker corner should lie in 3D, and checks whether the reflectivity (return brightness) of nearby LiDAR points matches the black or white squares expected around each corner. This combination of geometry and reflectivity turns a handful of scattered returns into a dependable set of 3D corner locations that match the camera's 2D corners.
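
The plane-fitting and circle-fitting steps can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' code: the plane is fitted by ordinary least squares (SVD) on already-cleaned points, the hole-edge points are assumed to have been separated out beforehand, and the "robust circle fitting" is stood in for by RANSAC over three-point hypotheses followed by an algebraic (Kasa) least-squares refit. The known hole radius `radius` is used to reject implausible hypotheses, and the refinement that enforces known distances between holes is omitted. All function names are illustrative.

```python
import numpy as np

def fit_plane(points):
    """Least-squares plane through Nx3 points via SVD.
    Returns the centroid, unit normal and two orthonormal in-plane axes."""
    centroid = points.mean(axis=0)
    _, _, vt = np.linalg.svd(points - centroid, full_matrices=False)
    return centroid, vt[2], vt[0], vt[1]

def to_plane_2d(points, centroid, u, v):
    """Orthogonally project 3-D points onto the plane and express them in (u, v)."""
    d = points - centroid
    return np.c_[d @ u, d @ v]

def from_plane_2d(uv, centroid, u, v):
    """Map in-plane (u, v) coordinates back to 3-D points on the plane."""
    return centroid + np.outer(uv[:, 0], u) + np.outer(uv[:, 1], v)

def kasa_fit(xy):
    """Algebraic circle fit: solve x^2 + y^2 = 2ax + 2by + k by least squares,
    where (a, b) is the center and k = r^2 - a^2 - b^2."""
    A = np.c_[2 * xy, np.ones(len(xy))]
    rhs = (xy ** 2).sum(axis=1)
    (a, b, k), *_ = np.linalg.lstsq(A, rhs, rcond=None)
    return np.array([a, b]), float(np.sqrt(max(k + a * a + b * b, 0.0)))

def ransac_circle(xy, radius, tol=0.01, iters=200, seed=0):
    """RANSAC over 3-point circle hypotheses whose radius is near the known hole
    radius, followed by an algebraic refit on the largest inlier set."""
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(iters):
        center, r = kasa_fit(xy[rng.choice(len(xy), 3, replace=False)])
        if abs(r - radius) > 2 * tol:
            continue  # implausible hole size: reject this hypothesis
        inliers = np.abs(np.linalg.norm(xy - center, axis=1) - r) < tol
        if best is None or inliers.sum() > best.sum():
            best = inliers
    if best is None:
        raise ValueError("no circle hypothesis matched the expected radius")
    return kasa_fit(xy[best])
```

In use, `fit_plane` is run on the board points, the hole-edge points are flattened with `to_plane_2d`, `ransac_circle` finds the center in the plane, and `from_plane_2d(center[None, :], ...)[0]` returns that center in the LiDAR frame. Working in 2D after projection keeps the circle fit simple and removes range noise along the board normal.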

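Once the three hole centers are labeled, the board's own coordinate frame can be rebuilt in the LiDAR frame and used to predict every checker corner in 3D. The sketch below assumes a hypothetical board layout (`HOLES`, `CHECKER_ORIGIN`, `SQ`, `NX`, `NY`) and a simple confirmation rule of our own: near each predicted corner, LiDAR points that should fall on white squares must be brighter on average than those that should fall on black squares, by at least `margin` reflectivity units. It also assumes white squares return more strongly than black ones. The paper's exact reflectivity test may differ.

```python
import numpy as np

# Hypothetical board layout in board coordinates (meters): hole centers and a
# checker block of NX x NY squares of side SQ starting at CHECKER_ORIGIN.
HOLES = {"A": np.array([0.10, 0.10]), "B": np.array([0.90, 0.10]), "C": np.array([0.39, 0.51])}
CHECKER_ORIGIN, SQ, NX, NY = np.array([0.05, 0.65]), 0.10, 9, 3

def board_frame(A, B, C):
    """Build maps between board coordinates and the LiDAR frame from the three
    labeled hole centers A, B, C measured by the LiDAR (3-vectors)."""
    x = (B - A) / np.linalg.norm(B - A)
    n = np.cross(B - A, C - A)
    n /= np.linalg.norm(n)
    y = np.cross(n, x)                       # C lies on the +y side
    a, b, c = HOLES["A"], HOLES["B"], HOLES["C"]
    xd = (b - a) / np.linalg.norm(b - a)
    yd = np.array([-xd[1], xd[0]])           # xd rotated by +90 degrees
    if (b - a)[0] * (c - a)[1] - (b - a)[1] * (c - a)[0] < 0:
        yd = -yd                             # keep C on the +y side here too

    def to_lidar(p):                         # (N, 2) board -> (N, 3) LiDAR
        d = np.atleast_2d(p) - a
        return A + np.outer(d @ xd, x) + np.outer(d @ yd, y)

    def to_board(q):                         # (N, 3) LiDAR -> (N, 2) board
        d = np.atleast_2d(q) - A
        return a + np.outer(d @ x, xd) + np.outer(d @ y, yd)

    return to_lidar, to_board

def inner_corners():
    """Board coordinates of the (NX-1) x (NY-1) inner checker corners."""
    i, j = np.meshgrid(np.arange(1, NX), np.arange(1, NY))
    return CHECKER_ORIGIN + SQ * np.c_[i.ravel(), j.ravel()]

def on_white(p_board):
    """True where a board point lies on a white square (assumed parity rule)."""
    k = np.floor((p_board - CHECKER_ORIGIN) / SQ).astype(int)
    return k.sum(axis=1) % 2 == 0

def confirm_corners(points, reflectivity, to_lidar, to_board, radius=0.08, margin=10.0):
    """Predict each inner corner in 3-D and accept it if nearby returns expected on
    white squares are brighter on average than those expected on black squares."""
    corners = to_lidar(inner_corners())
    white = on_white(to_board(points))
    accepted = []
    for corner in corners:
        near = np.linalg.norm(points - corner, axis=1) < radius
        w, k = near & white, near & ~white
        accepted.append(bool(w.any() and k.any()
                             and reflectivity[w].mean() - reflectivity[k].mean() > margin))
    return corners, np.array(accepted)
```

Because three non-collinear points fix the board's placement uniquely, the predicted corners inherit their accuracy directly from the hole centers. At long range each corner neighborhood may hold only a few returns, so a practical version would also need a minimum point count and a policy for corners that cannot be confirmed.
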
Fine-Tuning the Sensor Relationship

Once the same physical corners are identified in both the camera image and the LiDAR cloud, the authors solve for the rotation and translation that link the two sensors. They use an iterative optimization technique that repeatedly adjusts this relationship to shrink the gap between where the LiDAR-derived corners project into the image and where the camera actually sees those corners. Tests on a real rail platform, using camera lenses ranging from moderate to strong telephoto, show that the new method consistently keeps projection errors to about a pixel or less. It also outperforms several well-known alternatives, especially at the longest focal lengths, where data are scarcest.
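
This final step can be illustrated with a small Levenberg-Marquardt solver that adjusts a rotation vector and a translation to minimize pixel reprojection error under a pinhole camera model. The source only says an iterative optimization is used, so Levenberg-Marquardt, the numerical Jacobian, the absence of lens distortion, the already-matched corner correspondences and the function names here are all assumptions made for illustration.

```python
import numpy as np

def rodrigues(w):
    """Rotation matrix from a rotation vector (axis * angle, radians)."""
    theta = np.linalg.norm(w)
    if theta < 1e-12:
        return np.eye(3)
    k = w / theta
    K = np.array([[0, -k[2], k[1]], [k[2], 0, -k[0]], [-k[1], k[0], 0]])
    return np.eye(3) + np.sin(theta) * K + (1 - np.cos(theta)) * K @ K

def project(params, X, K_cam):
    """Pinhole projection (no lens distortion) of LiDAR points X (N, 3) using
    params = [rotation vector (3), translation (3)] from LiDAR to camera."""
    R, t = rodrigues(params[:3]), params[3:]
    uvw = (X @ R.T + t) @ K_cam.T
    return uvw[:, :2] / uvw[:, 2:3]

def residuals(params, X, uv_obs, K_cam):
    """Stacked pixel differences between projected and observed corners."""
    return (project(params, X, K_cam) - uv_obs).ravel()

def calibrate_lm(X, uv_obs, K_cam, init=None, iters=50, lam=1e-3):
    """Levenberg-Marquardt on pixel reprojection error with a central-difference
    Jacobian. Returns the 6 parameters and the mean per-point pixel error."""
    p = np.zeros(6) if init is None else np.asarray(init, dtype=float).copy()
    r = residuals(p, X, uv_obs, K_cam)
    for _ in range(iters):
        J = np.empty((r.size, 6))
        for i in range(6):
            h = np.zeros(6)
            h[i] = 1e-6
            J[:, i] = (residuals(p + h, X, uv_obs, K_cam)
                       - residuals(p - h, X, uv_obs, K_cam)) / 2e-6
        H, g = J.T @ J, J.T @ r
        step = -np.linalg.solve(H + lam * np.diag(np.diag(H)), g)
        r_new = residuals(p + step, X, uv_obs, K_cam)
        if r_new @ r_new < r @ r:          # accept: move toward Gauss-Newton
            p, r, lam = p + step, r_new, lam * 0.3
        else:                              # reject: move toward gradient descent
            lam *= 10.0
        if np.linalg.norm(step) < 1e-12:
            break
    return p, float(np.linalg.norm(r.reshape(-1, 2), axis=1).mean())
```

The mean per-corner pixel distance returned by `calibrate_lm` is the same kind of projection error the study reports. At long range the translation is far less well constrained than the rotation, since a few centimeters of offset barely moves a point hundreds of meters away, so a reasonable initial guess (for example, from the mounting drawings) helps.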

What This Means for Rail Safety

In everyday terms, the study offers a more dependable way to tell the camera and LiDAR on an autonomous train, “you are here and looking in exactly this direction.” By redesigning the calibration board and adding smart processing of sparse LiDAR data, the method maintains high accuracy even when the sensors watch scenes hundreds of meters away. This tighter alignment allows the fused system to place obstacles more precisely in 3D space, strengthening the technological foundation for safer rail transit and more trustworthy multi‑sensor perception in the real world.

Citation: Liu, X., Wang, H., Ruan, S. et al. A long-range LiDAR–camera extrinsic calibration method for rail transit. Sci Rep 16, 8018 (2026). https://doi.org/10.1038/s41598-025-34547-6

Keywords: rail transit safety, LiDAR camera fusion, sensor calibration, autonomous trains, long range perception