Clear Sky Science

Single-shot incoherent imaging with extended and engineered field of view using coded phase apertures

Why seeing more in one shot matters

From smartphone cameras to telescopes, we often face the same trade-off: zoom in to see small details and less of the scene fits in the frame. Making sensors bigger is expensive and clashes with the push toward thinner, lighter devices. This research presents a way to “bend the rules” of that trade-off, letting a camera keep its strong magnification while digitally extending how much of the scene it can capture in a single exposure.

A new way to stretch the frame

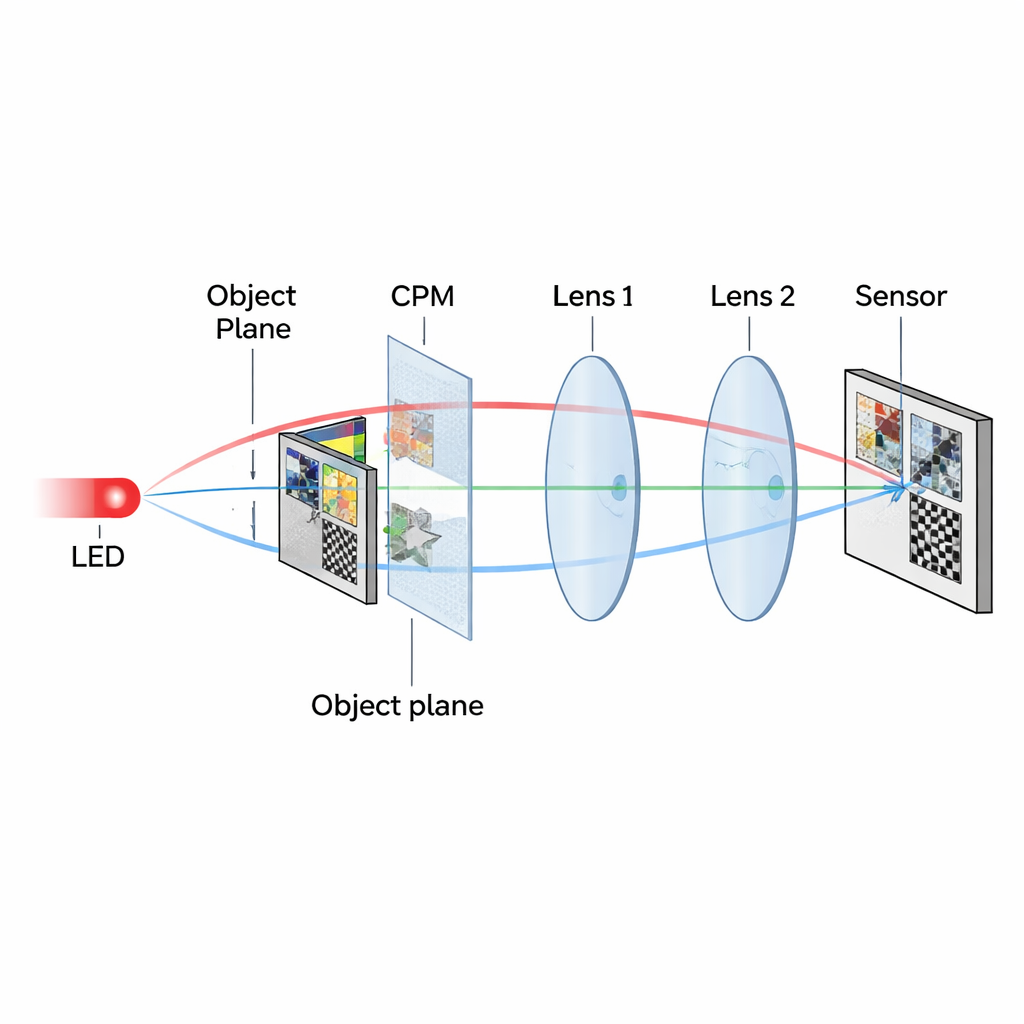

Instead of changing the lens or camera sensor, the authors reshape how light is encoded before it reaches the detector. They insert a special glass-like element called a coded phase mask (CPM) into an ordinary lens system. The CPM does not form an image by itself. Rather, it scrambles the light in a carefully designed way so that information from regions of the scene that would normally fall outside the sensor is redirected into the sensor area. Later, a computer uses this coded signal to reconstruct an extended view of the original scene.

Turning hidden regions into dotted clues

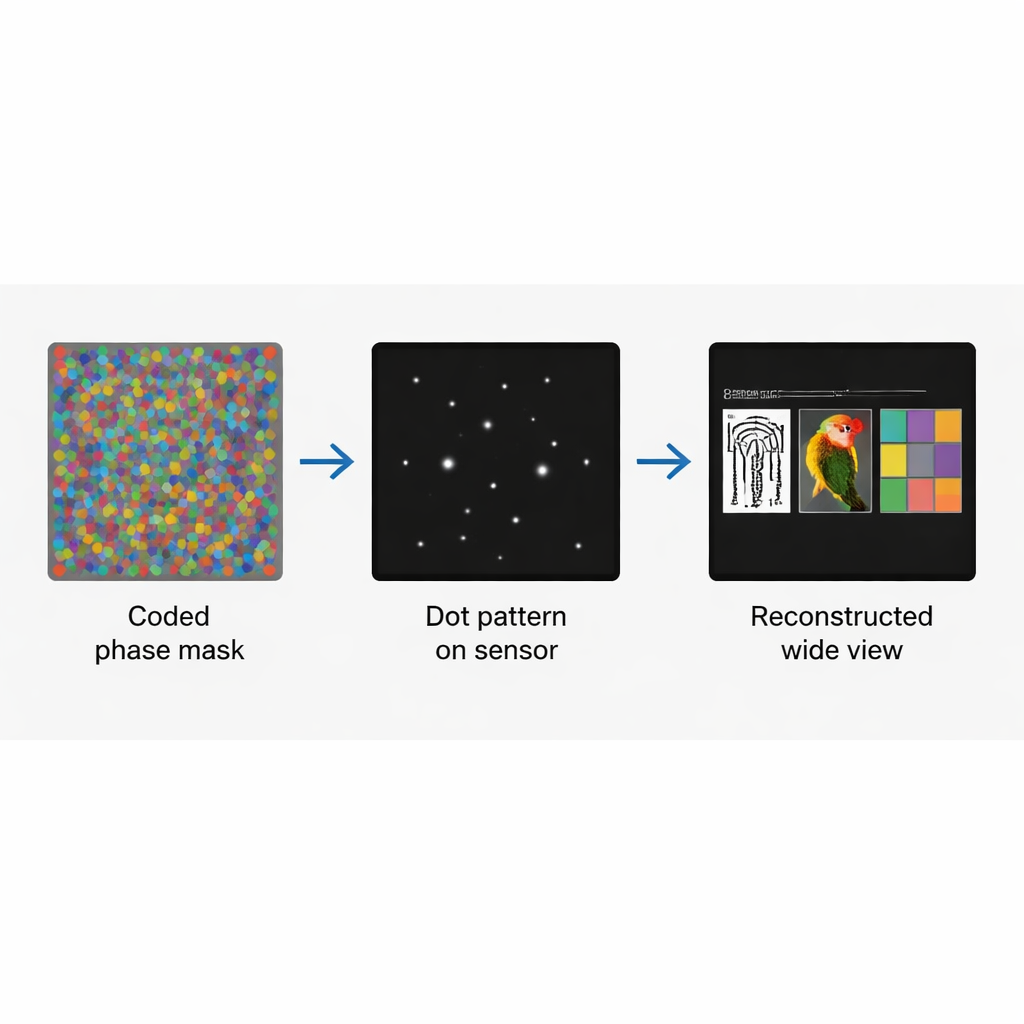

The CPM is built as a multiplex of several distinct phase patterns, each assigned to a different region of the object plane. When a tiny point of light in one region passes through its corresponding pattern, it produces a unique “constellation” of bright dots on the camera—its point spread function. Points from other regions create different constellations that hardly overlap with one another. Crucially, even if a region lies outside the normal field of view, its CPM pattern redirects its light so that its telltale dot pattern appears inside the sensor area. The raw camera image is therefore not a recognizable picture but a composite of sparse dot patterns that encode the entire extended scene.
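The encoding described above can be illustrated with a toy simulation. This is only a sketch under assumptions that are not taken from the paper: each scene region is modeled as a sensor-sized intensity tile, each point spread function is a handful of randomly placed dots, and the optics are approximated by circular convolution. The helper names `random_dot_psf`, `circ_conv`, and `record` are hypothetical.

```python
import numpy as np

def random_dot_psf(shape, n_dots, rng):
    """Sparse PSF: n_dots equal-intensity dots at random pixels, summing to 1."""
    psf = np.zeros(shape)
    idx = rng.choice(psf.size, size=n_dots, replace=False)
    psf.flat[idx] = 1.0 / n_dots
    return psf

def circ_conv(a, b):
    """Circular 2-D convolution via FFT (incoherent light adds in intensity)."""
    return np.real(np.fft.ifft2(np.fft.fft2(a) * np.fft.fft2(b)))

def record(regions, psfs):
    """Sensor image: each region convolved with its own PSF, all summed together."""
    return sum(circ_conv(obj, psf) for obj, psf in zip(regions, psfs))

rng = np.random.default_rng(0)
N = 64  # assumed sensor size in pixels

# One dot "constellation" per scene region (8 dots each is an arbitrary choice).
psfs = [random_dot_psf((N, N), 8, rng) for _ in range(2)]

# Two toy regions, each holding a small bright square.
regions = [np.zeros((N, N)) for _ in range(2)]
regions[0][20:24, 30:34] = 1.0
regions[1][40:44, 10:14] = 1.0

raw = record(regions, psfs)  # composite of overlapping dot patterns
```

In this model the raw frame holds shifted copies of both squares superimposed, so neither region is recognizable until the frame is decoded with the matching PSF.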

Decoding the scene with smart math

Once this dot-filled pattern is captured, the image is recovered by deconvolution—a mathematical operation that reverses the blurring and mixing imposed by the optics. The recorded object response pattern is digitally padded and processed together with the corresponding set of point spread functions, one for each region of the scene. By shifting and combining these response functions appropriately, the algorithm reconstructs all regions at their true locations, or even in a new chosen layout. In this sense, the field of view becomes something that can be “engineered”: the same single shot can be reassembled to show different permutations or arrangements of the original areas.
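A minimal sketch of the decoding idea follows. It uses a Wiener-style regularized inverse filter as a stand-in, which is not necessarily the deconvolution algorithm the authors use. The random-dot PSFs, the circular-convolution forward model, the regularization value `eps`, and the names `dot_psf`, `wiener_deconv`, and `reconstruct` are all assumptions made for illustration. Padding is skipped because every tile here already has the sensor size.

```python
import numpy as np

def dot_psf(shape, n_dots, rng):
    """Hypothetical sparse PSF: n_dots equal dots at random pixels, summing to 1."""
    psf = np.zeros(shape)
    psf.flat[rng.choice(psf.size, size=n_dots, replace=False)] = 1.0 / n_dots
    return psf

def wiener_deconv(raw, psf, eps=1e-2):
    """Regularized inverse filter: divide by the PSF spectrum, damped by eps."""
    H = np.fft.fft2(psf)
    G = np.fft.fft2(raw)
    return np.real(np.fft.ifft2(G * np.conj(H) / (np.abs(H) ** 2 + eps)))

def reconstruct(raw, psfs, layout):
    """Deconvolve once per region, then tile the results left to right in `layout` order."""
    tiles = [wiener_deconv(raw, psf) for psf in psfs]
    return np.hstack([tiles[i] for i in layout])

rng = np.random.default_rng(1)
N = 64
psfs = [dot_psf((N, N), 8, rng) for _ in range(2)]

# Toy scene: one point source per region, recorded as a single composite frame.
points = [(20, 30), (40, 10)]
raw = np.zeros((N, N))
for (r, c), psf in zip(points, psfs):
    obj = np.zeros((N, N))
    obj[r, c] = 1.0
    raw += np.real(np.fft.ifft2(np.fft.fft2(obj) * np.fft.fft2(psf)))

true_view = reconstruct(raw, psfs, layout=(0, 1))     # regions in original order
swapped_view = reconstruct(raw, psfs, layout=(1, 0))  # same shot, rearranged
```

Each region is recovered by its own PSF, while light from the other region shows up only as low-level background. That residual cross-talk is the kind of reconstruction noise the article mentions later.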

Putting the method to the test

The researchers validated their idea through both simulations and laboratory experiments. They used standard resolution test charts as objects and a camera whose sensor was deliberately too small to see all the objects at once in a normal setup. With the coded phase mask in place, they recorded a single exposure and then reconstructed images that clearly showed two or three separated objects that would otherwise be partly or entirely outside the frame. By varying how many bright dots each pattern contained, they optimized image quality using familiar measures: signal-to-noise ratio, structural similarity to a reference image, and mean squared error. They found specific dot counts that best balanced sharpness against background noise for their two- and three-object experiments.
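Two of the quality measures mentioned above can be written in a few lines. The sketch below assumes one common definition of signal-to-noise ratio (reference energy over error energy, in decibels). The paper may define it differently, and the function names are hypothetical.

```python
import numpy as np

def normalize(img):
    """Rescale to [0, 1] so reconstructions with arbitrary gain can be compared."""
    img = np.asarray(img, dtype=float)
    span = img.max() - img.min()
    return (img - img.min()) / span if span > 0 else np.zeros_like(img)

def mse(ref, rec):
    """Mean squared error between a reference image and a reconstruction."""
    ref = np.asarray(ref, dtype=float)
    rec = np.asarray(rec, dtype=float)
    return float(np.mean((ref - rec) ** 2))

def snr_db(ref, rec):
    """Assumed SNR definition: 10*log10(reference energy / error energy)."""
    ref = np.asarray(ref, dtype=float)
    rec = np.asarray(rec, dtype=float)
    err = np.sum((ref - rec) ** 2)
    if err == 0:
        return float("inf")
    return float(10 * np.log10(np.sum(ref ** 2) / err))

# Tiny example: one of four pixels is wrong.
ref = np.array([[1.0, 1.0], [1.0, 1.0]])
rec = np.array([[1.0, 1.0], [1.0, 0.0]])
print(mse(ref, rec))     # 0.25
print(snr_db(ref, rec))  # 10*log10(4), about 6.02 dB
```

A sweep over dot counts would reconstruct the scene once per count, pass each result through `normalize`, and keep the count with the best scores. Structural similarity is more involved; scikit-image provides it as `skimage.metrics.structural_similarity`.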

What this means for everyday imaging

The work offers a different path to wider fields of view than bulky wide-angle lenses, multi-camera arrays, or methods that need many exposures and long computation. Here, a single compact optical element plus one exposure and a relatively simple digital step produce an extended view while preserving the original magnification and resolution. There are still challenges, mainly noise that arises when patterns from different regions interfere in the reconstruction, but the authors outline strategies—such as time-multiplexing masks—to reduce it. In the longer term, this approach could help compact cameras, microscopes, and lightweight telescopes see more of the world in one shot, without giving up fine detail.

Citation: Sure, S.D., Desai, J.P. & Rosen, J. Single-shot incoherent imaging with extended and engineered field of view using coded phase apertures. Sci Rep 16, 7620 (2026). https://doi.org/10.1038/s41598-025-33540-3

Keywords: field of view, computational imaging, coded aperture, digital deconvolution, single-shot imaging