Clear Sky Science

Lightweight scalable deep learning framework for real time detection of potato leaf diseases

Why spotting sick leaves matters

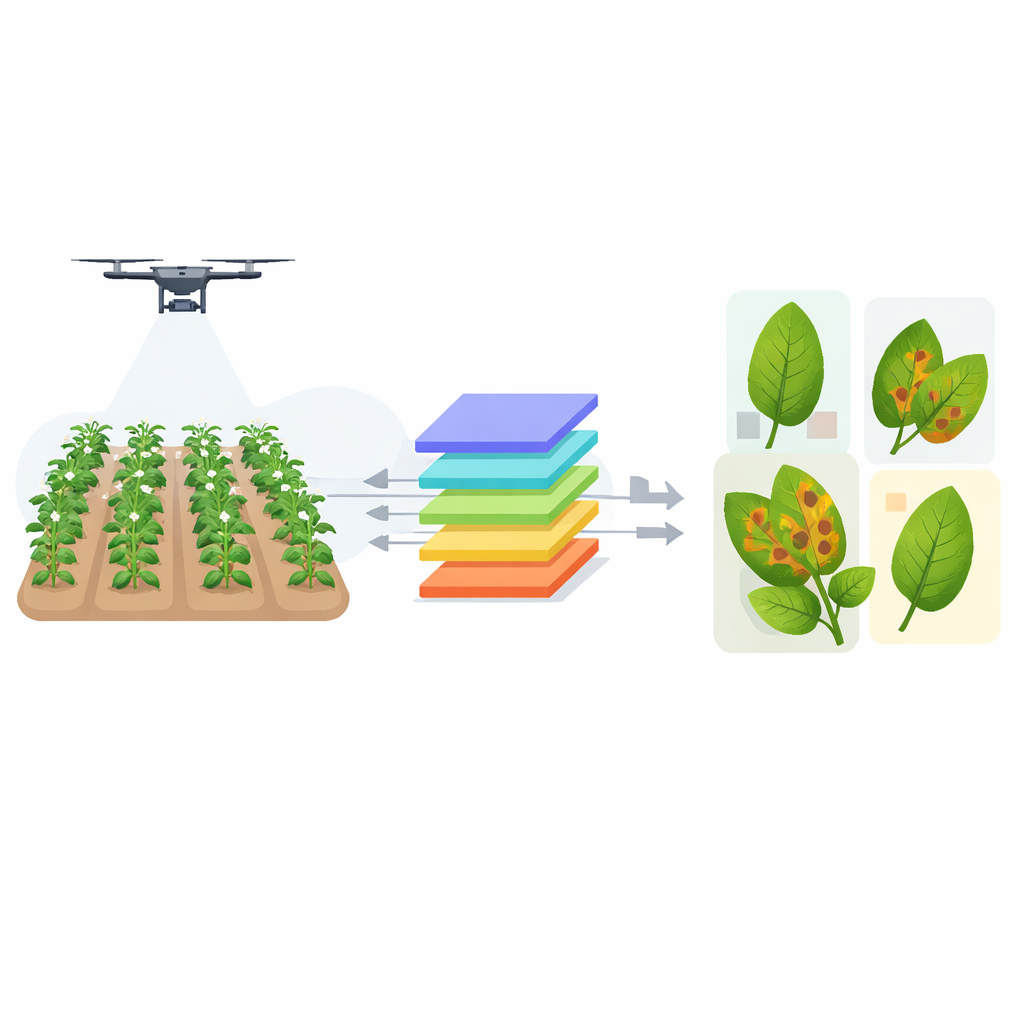

Farmers around the world depend on potatoes as a staple food and source of income. Yet two common leaf diseases, early blight and late blight, can quietly spread through fields, cutting yields and forcing heavy pesticide use. This study describes a new artificial intelligence system that can scan potato plants in real time, pick out sick leaves directly in messy field conditions, and do so fast enough to run on drones, robots, or smartphones. By turning raw images into on-the-spot warnings, it aims to help farmers act earlier, spray less, and protect harvests.

Looking for trouble in real fields

Detecting disease on leaves may sound simple, but farm fields are visually chaotic. Leaves overlap, light changes from bright sun to deep shade, dust and dew create shiny spots, and wind blurs photos. On top of that, harmless problems—such as nutrient stress or insect bites—can look a lot like disease. Many earlier computer systems were trained on clean, lab-style pictures with plain backgrounds. They could say whether an image contained disease, but not precisely where it was or how advanced it was on a real plant. The authors therefore built a new image collection of 2,500 potato leaves photographed on farms in India and Bangladesh, covering healthy plants and a range of disease severities, all carefully labeled by plant experts.

A lean smart detector for tiny spots

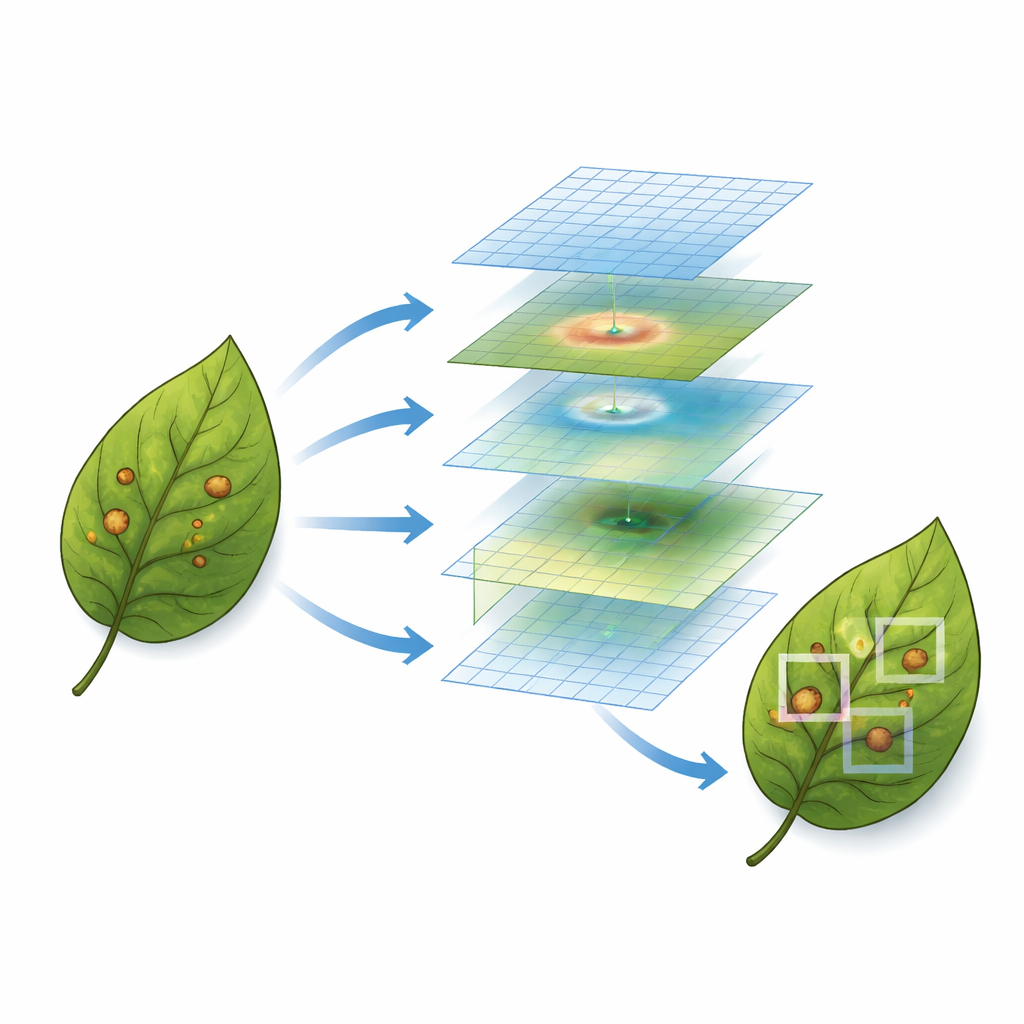

To make sense of these challenging images, the team designed a streamlined detection model called Extended Feature Single Shot Multibox Detector, or EF-SSD. The system takes relatively large, detailed input images (512 by 512 pixels) so that even early, pinhead-sized spots remain visible. Unlike standard detectors, which examine features at only a few scales, EF-SSD builds a tower of ten feature layers. Coarse layers, whose cells each cover a large part of the image, capture broad context such as the shape of an entire leaf, while fine, high-resolution layers focus on the small textures and color shifts that signal the first stages of infection. This multi-scale design helps the system notice both tiny new lesions and bigger, well-developed patches in one pass.
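A little arithmetic shows why stacking feature layers at several resolutions helps. The sketch below is only an illustration: it assumes a hypothetical schedule in which the first feature grid is one eighth of the input size and each later grid halves the previous one. This summary does not give the actual strides or grid sizes of EF-SSD's ten layers, and the helper names `halving_grid_sizes` and `cell_span` are invented for this example.

```python
def halving_grid_sizes(input_size, first_stride=8):
    """Grid side lengths for a feature pyramid that halves at every level.

    Both first_stride and the halving rule are illustrative assumptions,
    not the published EF-SSD configuration.
    """
    grid = input_size // first_stride
    sizes = [grid]
    while grid > 1:
        grid = (grid + 1) // 2  # halve, rounding up, until a 1 x 1 grid
        sizes.append(grid)
    return sizes


def cell_span(input_size, grid_size):
    """Approximate width, in input pixels, covered by one grid cell."""
    return input_size / grid_size


if __name__ == "__main__":
    for grid in halving_grid_sizes(512):
        span = cell_span(512, grid)
        print(f"{grid:>2} x {grid:<2} grid: each cell covers about {span:.0f} px")
```

Under these assumptions the finest grid has cells only a few pixels wide, which suits pinhead-sized spots, while the coarsest grid has a single cell spanning the whole image, which suits leaf-level context.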

Teaching the model where to focus

Another key addition is an attention mechanism known as Squeeze-and-Excitation. These small modules sit inside the network and act like adjustable volume knobs on its internal feature channels, each of which responds to a particular color or texture pattern. When the model learns that certain patterns, such as speckled brown rings or water-soaked edges, are linked to disease, it turns up their influence while dialing down distracting background details like soil or neighboring plants. Experiments showed that placing these attention blocks in the middle of the network, where features are still fine-grained but somewhat abstracted, gave the best boost, improving detection scores by about four percentage points.
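The "volume knob" idea can be sketched in a few lines of plain Python. This is a minimal illustration of the general Squeeze-and-Excitation pattern, not the authors' implementation: the function name `squeeze_excite` is hypothetical, bias terms are omitted, and the weight matrices are hand-picked toy values, whereas a real network learns them during training.

```python
import math


def squeeze_excite(feature_map, w_reduce, w_expand):
    """Reweight the channels of a feature map given as C x H x W nested lists.

    w_reduce has shape (C // r) x C and w_expand has shape C x (C // r),
    where r is the bottleneck reduction ratio. Biases are left out for brevity.
    Returns the rescaled feature map and the per-channel gates.
    """
    # Squeeze: average each channel over all spatial positions.
    squeezed = [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]
    # Excitation, step 1: bottleneck linear layer followed by ReLU.
    hidden = [
        max(0.0, sum(w * s for w, s in zip(weights, squeezed)))
        for weights in w_reduce
    ]
    # Excitation, step 2: expand back to C values and squash into (0, 1).
    gates = [
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(weights, hidden))))
        for weights in w_expand
    ]
    # Scale: multiply every value in a channel by that channel's gate.
    scaled = [
        [[gate * value for value in row] for row in channel]
        for gate, channel in zip(gates, feature_map)
    ]
    return scaled, gates


if __name__ == "__main__":
    # Two toy 2 x 2 channels; the weights are made up for illustration.
    features = [[[1.0, 1.0], [1.0, 1.0]], [[2.0, 2.0], [2.0, 2.0]]]
    _, gates = squeeze_excite(features, [[1.0, 0.0]], [[0.0], [10.0]])
    print([round(g, 3) for g in gates])
```

A gate near 1 leaves a channel almost untouched, while a gate near 0 mutes it, which is how the block can emphasize lesion-like patterns and suppress background.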

How well it performs against rivals

The researchers compared EF-SSD against several popular object-detection systems, including YOLOv5, YOLOv8, a newer YOLOv12 variant, Faster R-CNN, RetinaNet, and a transformer-based model called RF-DETR. All were trained and tested under identical conditions on the same field dataset. EF-SSD came out on top across almost all measures: it correctly identified and localized disease regions with a mean Average Precision of 97 percent and achieved a balanced F1-score of 95 percent. It also drew bounding boxes that closely matched expert markings, with high overlap scores. Despite its deeper feature hierarchy, the model remained efficient, running at about 47 frames per second on a desktop graphics card and maintaining practical speeds on compact devices such as NVIDIA Jetson boards.
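For readers unfamiliar with these measures, the sketch below shows the standard textbook definitions of box overlap (intersection over union), the F1-score, and the time available per frame at a given frame rate. It is not the authors' evaluation code; the function names are hypothetical, and this summary does not state which overlap thresholds or averaging rules the study used.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def frame_budget_ms(frames_per_second):
    """Milliseconds available to process one frame at a given frame rate."""
    return 1000.0 / frames_per_second


if __name__ == "__main__":
    # A predicted box shifted one unit diagonally from a 2 x 2 expert box.
    print(f"IoU: {iou((0, 0, 2, 2), (1, 1, 3, 3)):.3f}")
    print(f"F1:  {f1_score(0.5, 1.0):.3f}")
    print(f"Frame budget at 47 fps: {frame_budget_ms(47):.1f} ms")
```

At the reported 47 frames per second, the detector has roughly 21 milliseconds to process each image.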

From lab to farm and beyond

A closer look at the results shows that EF-SSD is especially strong at catching small, fragmented, or partially hidden lesions—exactly the kinds of cases that often slip past other detectors in cluttered scenes. When the authors turned off the attention modules or reduced the number of feature layers, performance clearly dropped, confirming that both design choices matter. While the system can still struggle with extreme lighting, heavy blur, or the tiniest early spots, the study demonstrates that a carefully tailored, lightweight detector can provide reliable, real-time feedback in the field. For farmers, the practical takeaway is straightforward: a compact AI tool, embedded in a phone or drone, could soon flag sick potato plants early enough to guide targeted treatment, save yield, and reduce unnecessary chemical use.

Citation: Bhavani, G.D., Chalapathi, M.M.V. Lightweight scalable deep learning framework for real time detection of potato leaf diseases. Sci Rep 16, 8770 (2026). https://doi.org/10.1038/s41598-025-33423-7

Keywords: potato leaf disease, plant disease detection, deep learning in agriculture, object detection, precision farming