Clear Sky Science

CMAF-Net: cross-modal attention fusion with information-theoretic regularization for imbalanced breast cancer histopathology

Why this research matters for breast cancer care

Pathologists diagnose breast cancer by studying thin slices of tissue under a microscope, but sorting rare cancerous spots from a sea of healthy cells is demanding and imperfect work. This study introduces CMAF-Net, a new type of computer system designed to help catch more cancers in these images while keeping false alarms low, even when malignant samples are vastly outnumbered by healthy ones. Its advances could make automated screening more reliable, support overburdened clinicians, and offer a blueprint for detecting many other rare diseases.

Finding a needle in a haystack of tissue images

In real hospital data, most breast tissue samples are harmless, and only a minority contain invasive ductal carcinoma, the most common form of breast cancer. This imbalance causes many artificial intelligence systems to quietly “learn” that predicting healthy tissue is almost always safe, missing dangerous tumors in the process. At the same time, clues to malignancy appear at very different zoom levels, from distorted nuclei in single cells to disordered structures across an entire region of tissue. Traditional image-analysis networks are good at either small details or large patterns, but they rarely combine both in a way that highlights the rare, life-threatening cases.
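The "always predict healthy" trap can be made concrete with a few lines of arithmetic. The sketch below uses made-up counts (assumptions for illustration, not figures from the study) to show how a model that never flags cancer can still score a high accuracy while catching no tumors at all.

```python
# Illustrative counts only (assumed, not taken from the study).
n_benign, n_malignant = 900, 100

# A degenerate "classifier" that labels every patch as benign (0).
labels = [0] * n_benign + [1] * n_malignant
predictions = [0] * len(labels)

# Accuracy: fraction of all patches labeled correctly.
correct = sum(p == y for p, y in zip(predictions, labels))
accuracy = correct / len(labels)

# Sensitivity: fraction of malignant patches that were actually flagged.
true_positives = sum(p == 1 and y == 1 for p, y in zip(predictions, labels))
sensitivity = true_positives / n_malignant

print(f"accuracy={accuracy:.2f}, sensitivity={sensitivity:.2f}")
# prints: accuracy=0.90, sensitivity=0.00
```

This is why imbalanced problems are usually judged on how many rare cases are caught, not on overall accuracy alone.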

Blending close-up details with the bigger picture

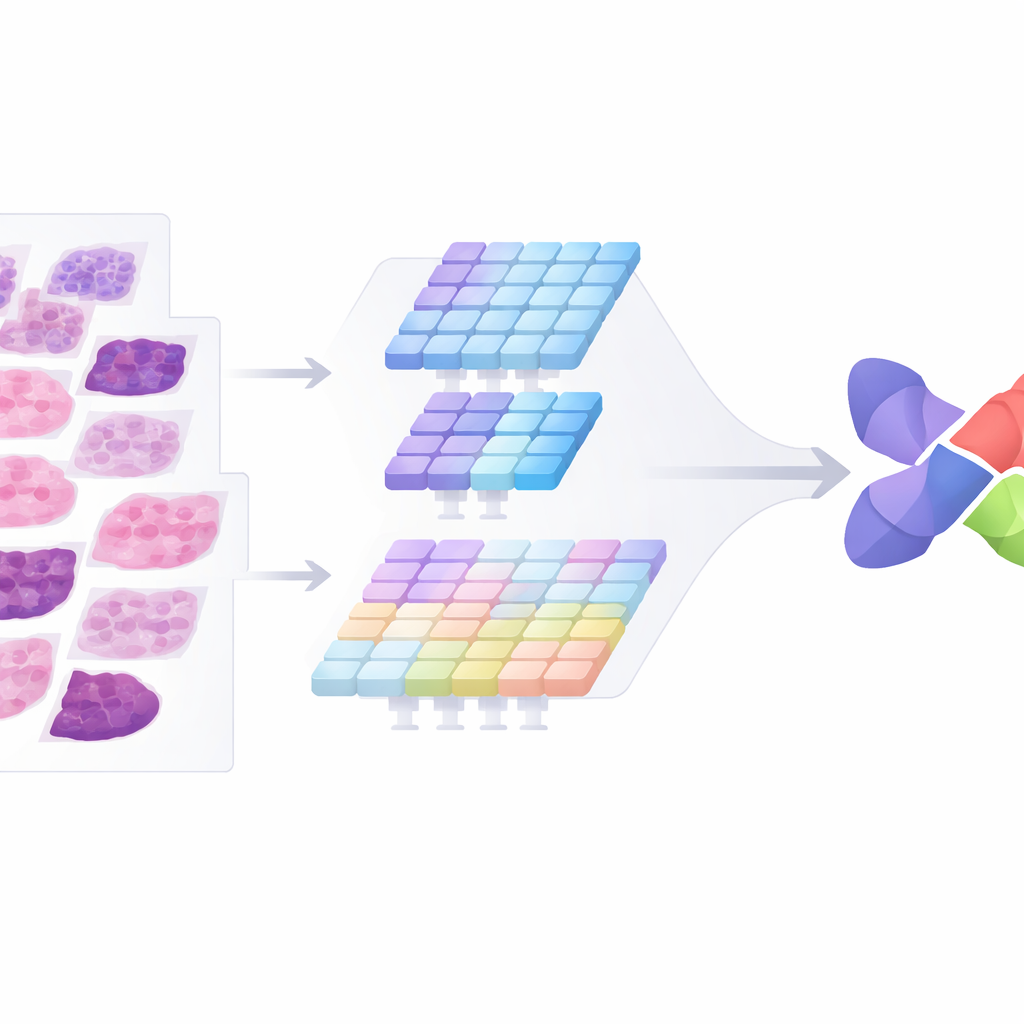

To tackle these twin problems, the authors designed CMAF-Net with two complementary “eyes” on each image. One branch acts like a classic pattern-recognition engine that specializes in fine textures, such as the shapes and arrangements of cells. The second branch behaves more like a global map-reader, capturing broader tissue organization using a modern transformer design. Rather than simply stacking these two views together, the system passes them through a dedicated fusion block that allows the branches to exchange information via multiple channels of attention. This block selectively keeps features that add new insight while suppressing duplicated or distracting signals, so that the final combined representation remains both rich and compact.
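For readers who want to see what "exchanging information via attention" can look like, here is a minimal sketch of cross-attention between two sets of features. It is not the authors' fusion block: it uses a single attention head, no learned weights, and no filtering of redundant features, and the names `cross_attend`, `local_tokens`, and `global_tokens` are hypothetical. It only illustrates the core idea that each branch builds a weighted summary of what the other branch sees.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attend(queries, keys, values):
    """Each query vector gathers a weighted mix of `values`, weighted by
    its scaled dot-product similarity to the matching `keys`."""
    scale = math.sqrt(len(keys[0]))
    fused = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        mixed = [sum(w * v[i] for w, v in zip(weights, values))
                 for i in range(len(values[0]))]
        fused.append(mixed)
    return fused

# Hypothetical toy features: 2 fine-detail tokens, 3 big-picture tokens.
local_tokens = [[1.0, 0.0], [0.0, 1.0]]
global_tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# The detail branch queries the big-picture branch, and vice versa.
local_enriched = cross_attend(local_tokens, global_tokens, global_tokens)
global_enriched = cross_attend(global_tokens, local_tokens, local_tokens)

# A residual add keeps each token's own signal alongside what it borrowed.
fused_local = [[a + b for a, b in zip(tok, extra)]
               for tok, extra in zip(local_tokens, local_enriched)]
```

A multi-head version would run several such attention passes in parallel on different learned projections of the features and then combine the results.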

Teaching the system to care about rare cancers

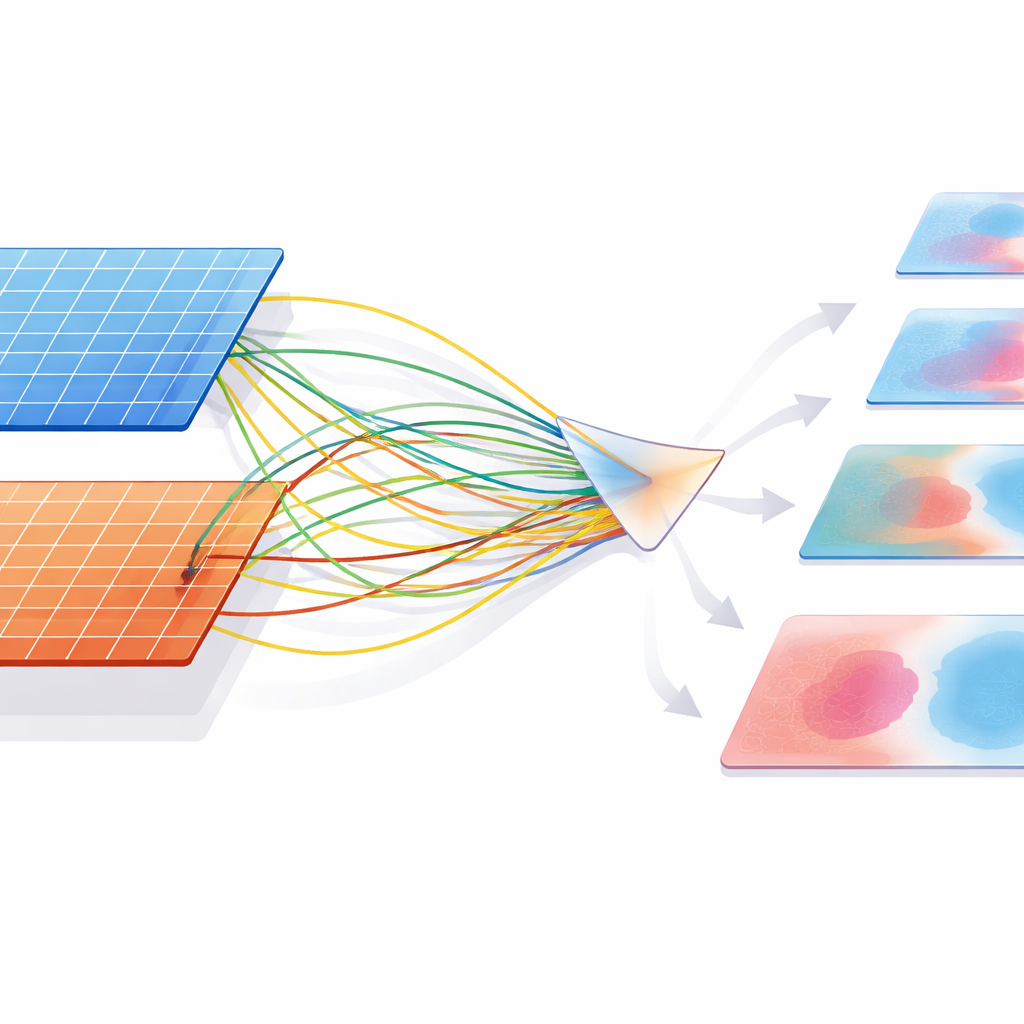

Even a clever architecture can still favor the majority class, so the researchers redesigned the way the system learns from its mistakes. Building on ideas from information theory and margin-based learning, they crafted a training rule that explicitly pushes the model to carve out wider “safety margins” around the minority cancer cases. In practical terms, CMAF-Net is penalized more for missing a malignant patch than for misclassifying a benign one, and this penalty is adjusted over time as the feature space matures. The attention mechanism itself is also tuned through a kind of “temperature” control: sharper attention preserves more information when needed, while softer attention filters out noise, giving the model a principled way to compress data without losing the signals that separate cancer from non-cancer.
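Both ideas in this section can be sketched in a few lines. The code below is an illustration under stated assumptions, not the study's training rule: the margin follows the general label-distribution-aware recipe (a margin that grows as a class gets rarer, proportional to the class count raised to the power -1/4), used here as a stand-in, and the function names, the `scale` knob, and the patch counts are hypothetical. It also omits the adjustment of the penalty over time.

```python
import math

def softmax(scores, temperature=1.0):
    """Softmax with a temperature: small values sharpen, large values flatten."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy in nats; lower means a more concentrated distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def margin_loss(logits, label, class_counts, scale=0.5):
    """Cross-entropy after subtracting a class-dependent margin from the
    true-class logit. Rarer classes get a larger margin (count ** -0.25),
    so the model must be more confident before the loss is satisfied."""
    margin = scale / (class_counts[label] ** 0.25)
    adjusted = list(logits)
    adjusted[label] -= margin
    return -math.log(softmax(adjusted)[label])

# Temperature: the same raw attention scores, sharpened and softened.
scores = [2.0, 1.0, 0.5]
sharp = softmax(scores, temperature=0.5)
soft = softmax(scores, temperature=2.0)

# Margin: an equally uncertain prediction costs more on the rare class.
counts = [7500, 2500]   # hypothetical benign / malignant patch counts
logits = [0.0, 0.0]     # model has no preference between the two classes
loss_malignant = margin_loss(logits, label=1, class_counts=counts)
loss_benign = margin_loss(logits, label=0, class_counts=counts)

print(entropy(sharp) < entropy(soft), loss_malignant > loss_benign)
```

In this toy setup the sharper attention distribution has lower entropy, and the undecided prediction is charged a higher loss when the true label is the rarer malignant class.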

Putting the method to the test

The team evaluated CMAF-Net on a large, naturally imbalanced dataset of breast tissue patches, where about three quarters were benign and the rest cancerous. Compared with a range of strong baseline systems, including deep convolutional networks, vision transformers, and previous fusion models tailored for imbalance, the new method stood out. It correctly identified roughly 95% of malignant samples (its sensitivity) while correctly clearing a similarly high share of benign ones (its specificity), and it did so with fewer parameters than many competing fusion networks. When the authors made the data even more skewed, down to just one cancer patch for every ninety-nine benign ones, CMAF-Net’s performance declined gradually but remained clinically useful. Other methods, in contrast, lost most of their ability to recognize cancer under these extreme conditions.
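The two headline measurements, and the idea of making a dataset more lopsided on purpose, can be sketched as follows. Everything here is a toy illustration under assumptions: the labels and predictions are synthetic, the helper names are hypothetical, and the subsampling scheme (keep all benign patches, randomly drop malignant ones) is one plausible way to reach a 99-to-1 ratio, not necessarily the procedure the authors used.

```python
import random

def sensitivity_specificity(labels, predictions):
    """Labels and predictions use 1 for malignant, 0 for benign."""
    tp = sum(y == 1 and p == 1 for y, p in zip(labels, predictions))
    fn = sum(y == 1 and p == 0 for y, p in zip(labels, predictions))
    tn = sum(y == 0 and p == 0 for y, p in zip(labels, predictions))
    fp = sum(y == 0 and p == 1 for y, p in zip(labels, predictions))
    return tp / (tp + fn), tn / (tn + fp)

def skew_to_ratio(labels, benign_per_malignant, seed=0):
    """Return indices that keep every benign item and just enough randomly
    chosen malignant items to reach the requested benign:malignant ratio."""
    rng = random.Random(seed)
    benign = [i for i, y in enumerate(labels) if y == 0]
    malignant = [i for i, y in enumerate(labels) if y == 1]
    keep = max(1, len(benign) // benign_per_malignant)
    return sorted(benign + rng.sample(malignant, min(keep, len(malignant))))

# Synthetic labels: three benign patches for every malignant one.
labels = [0] * 990 + [1] * 330
# Synthetic predictions: flip the answer at every 20th position.
predictions = [1 - y if i % 20 == 0 else y for i, y in enumerate(labels)]

sens, spec = sensitivity_specificity(labels, predictions)

# Build a harsher test set with 99 benign patches per malignant patch.
subset = skew_to_ratio(labels, benign_per_malignant=99)
n_malignant_kept = sum(labels[i] for i in subset)

print(f"sensitivity={sens:.3f}, specificity={spec:.3f}, "
      f"malignant kept={n_malignant_kept} of {len(subset)}")
```

Re-running the same metrics on progressively skewed subsets is one simple way to check whether a model degrades gradually or collapses as cancer examples become scarce.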

Generalizing across microscopes and tumor types

To see whether CMAF-Net was merely memorizing one dataset or learning more universal patterns of disease, the authors tested it on a separate collection of breast tumor images captured from different patients and at four different magnification levels. Without any retraining, the model maintained high sensitivity across all zoom levels and outperformed prior approaches on both simple benign-versus-malignant tasks and a more demanding eight-class problem covering multiple tumor subtypes. Notably, CMAF-Net showed the largest gains on rare tumor categories, suggesting that its focus on information-efficient fusion and class-aware learning helps it distinguish subtle, uncommon patterns rather than just the most typical cases.

What this means going forward

For non-specialists, the key message is that CMAF-Net offers a smarter way for computers to read pathology slides: it looks closely and broadly at once, learns to pay extra attention to rare but dangerous signs of cancer, and keeps working even when malignant examples are scarce. Beyond breast cancer, the same design principles could guide tools for spotting rare diseases in many kinds of medical images, offering doctors a more trustworthy second opinion and potentially bringing earlier, more accurate diagnoses to patients who need them most.

Citation: Ativi, W.X., Chen, W., Kwao, L. et al. CMAF-Net: cross-modal attention fusion with information-theoretic regularization for imbalanced breast cancer histopathology. Sci Rep 16, 9607 (2026). https://doi.org/10.1038/s41598-025-32794-1

Keywords: breast cancer, histopathology AI, class imbalance, deep learning, medical image analysis