Clear Sky Science

Camouflaged object detection via context and texture-aware hierarchical interaction

Why spotting hidden shapes matters

From leaf‑colored insects to military camouflage and even hard‑to‑see growths in medical scans, our world is full of things that blend into the background, some by design and some by accident. Teaching computers to reliably find these hidden objects could help protect wildlife, improve safety inspections, and assist doctors in catching disease earlier. This paper introduces a new artificial intelligence system, called CTHINet, that learns to see through camouflage by paying attention not only to overall scene context but also to tiny texture clues that human eyes often miss.

Seeing the forest and the trees

Camouflaged object detection is much harder than ordinary object detection because the target often matches its surroundings in color, brightness, and shape. Earlier computer methods relied on simple hand‑crafted cues such as motion, edges, or basic texture, which break down in cluttered or noisy scenes. Modern deep‑learning approaches have made progress by training large networks on specialized image collections of camouflaged animals and man‑made objects. Many of these methods add extra hints, such as drawing boundaries around objects or estimating uncertainty, but they can easily be misled when the edges themselves are blurry or ambiguous, which is exactly the case in good camouflage.

Tiny texture clues that give the game away

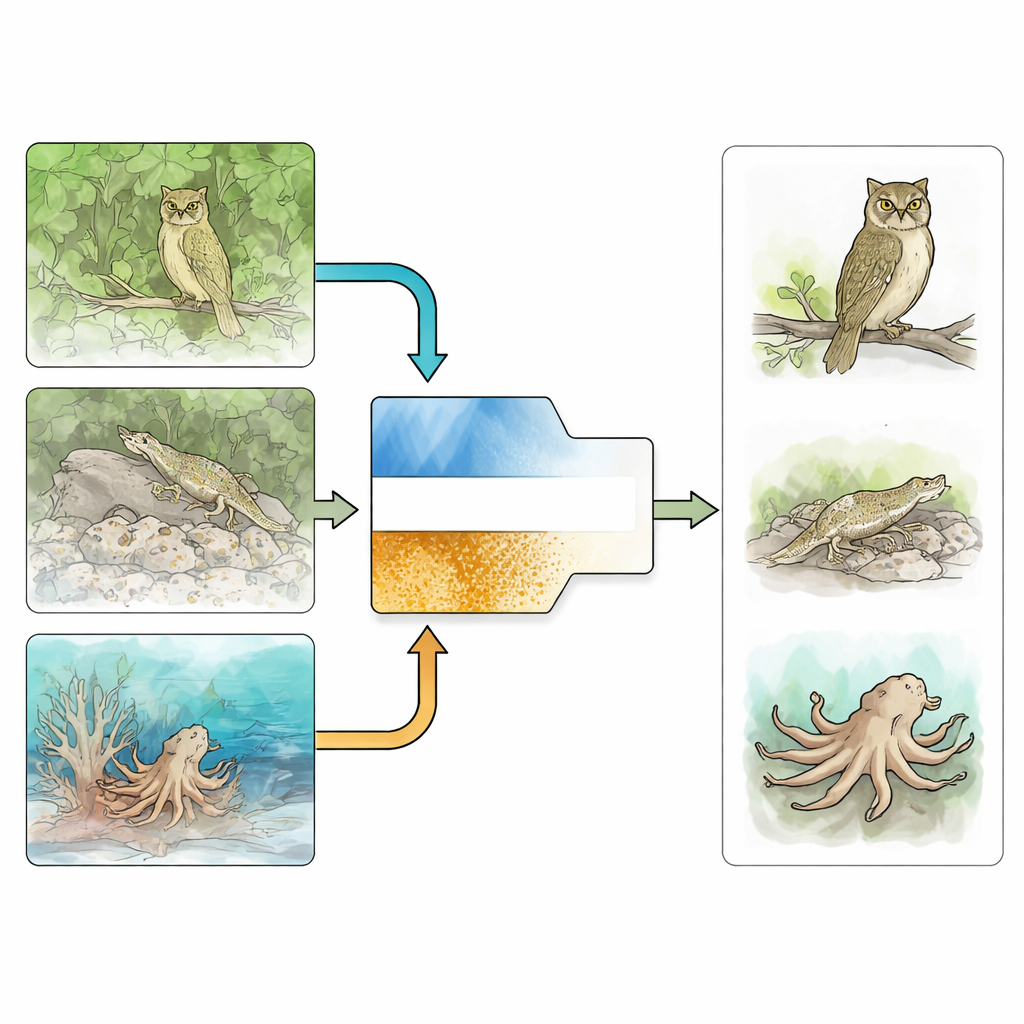

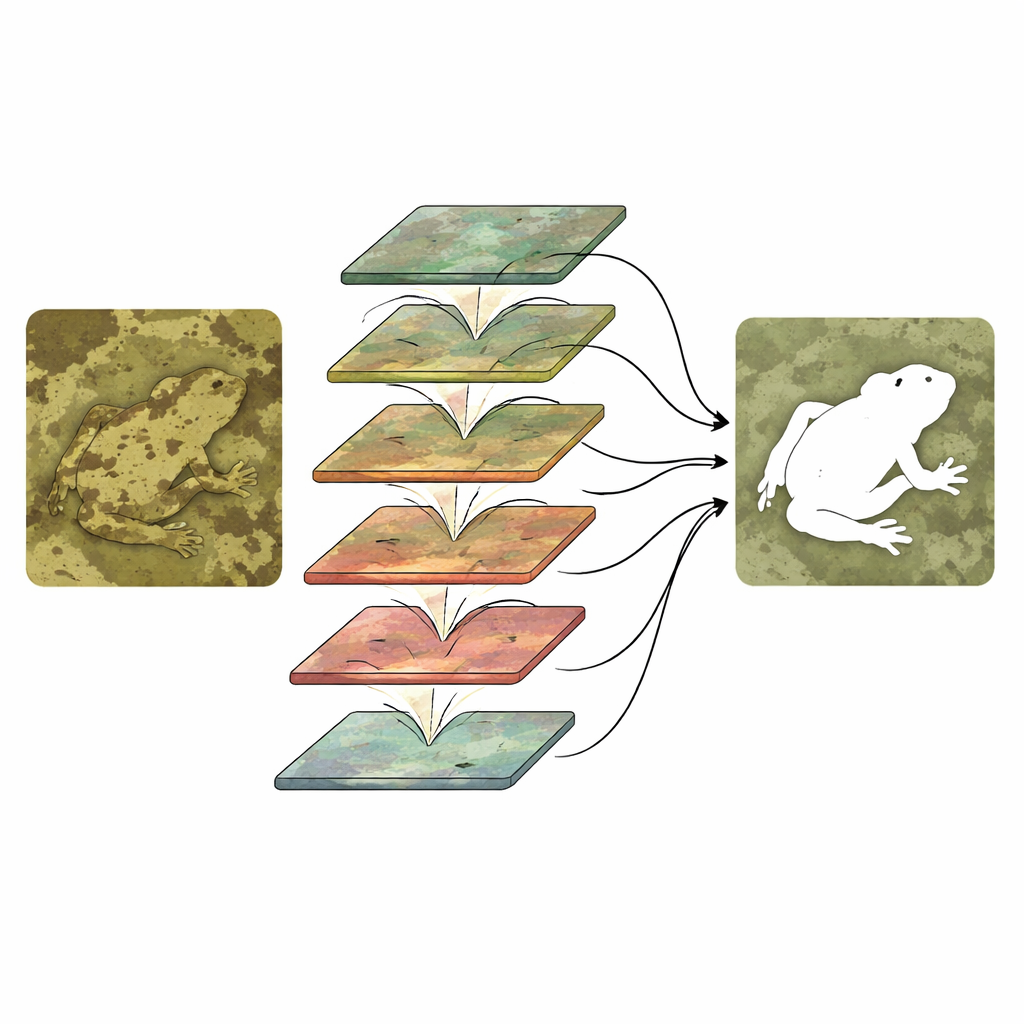

The authors argue that even the best camouflage leaves tell‑tale traces in the fine texture of an image: small differences in grain, pattern, or smoothness that are easy to overlook when focusing only on outlines. Building on this idea, CTHINet separates learning into two coordinated branches. A “context” branch, built on a vision transformer backbone, captures broad, multi‑scale information about the whole scene: how regions relate to each other, where large shapes lie, and which areas might plausibly contain an object. In parallel, a dedicated “texture” branch focuses narrowly on subtle surface patterns. It is trained with special texture labels that tell the network which kinds of fine detail belong to the hidden object rather than to the background.
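To make the texture idea concrete, here is a minimal sketch, not the authors' method. It assumes a single row of grayscale pixels in which a hidden patch has the same average brightness as its background but a finer grain. The helper `local_variance`, the pixel values, and the threshold of 50 are all hypothetical choices made for illustration.

```python
from statistics import mean, pvariance

def local_variance(row, window=4):
    """Population variance of each sliding window: a crude stand-in for a texture cue."""
    return [pvariance(row[i:i + window]) for i in range(len(row) - window + 1)]

background = [128] * 8        # smooth region, constant brightness
hidden = [120, 136] * 4       # same average brightness, fine alternating grain
row = background + hidden + background

# Average brightness cannot tell the two regions apart...
same_brightness = mean(background) == mean(hidden)

# ...but local variance can: flat background scores 0, the grainy patch scores 64.
texture = local_variance(row)
flagged = [i for i, v in enumerate(texture) if v > 50]  # arbitrary illustrative threshold

print(same_brightness)  # True
print(flagged)          # [8, 9, 10, 11, 12]
```

The flagged window positions are exactly those lying fully inside the hidden patch. A learned texture branch would pick up far subtler cues than variance, but the principle is the same: fine‑grained statistics can separate regions that coarse statistics cannot.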

How the two branches work together

Simply running two branches is not enough; they must exchange information in a structured way. CTHINet first refines the context features using a Multi‑head Feature Aggregation Module. This module splits the information into several parts, each processed with a different effective “zoom level,” so the system can respond to tiny insects and large animals alike. It then recombines these views so they inform each other without a large increase in computational cost. Next, a series of Hierarchical Mixed‑scale Interaction Modules link the context and texture streams. At each stage, the network groups and mixes channels from both branches, lets them exchange information, and then re‑weights them so that the most informative combinations are amplified while less useful ones are suppressed. This coarse‑to‑fine stacking gradually sharpens a concealed object’s outline and separates it from distracting background detail.
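The two steps described above can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the paper's modules: feature maps are one‑dimensional lists, a moving average stands in for processing at different "zoom levels," and a softmax over mean absolute activation stands in for the re‑weighting. The function names `box_blur`, `aggregate_multi_scale`, and `interact` are hypothetical, and only one interaction stage is shown where the article describes a stack of them.

```python
import math

def box_blur(channel, k):
    """Moving average with window k; a larger k stands in for a coarser zoom level."""
    half = k // 2
    out = []
    for i in range(len(channel)):
        window = channel[max(0, i - half): i + half + 1]
        out.append(sum(window) / len(window))
    return out

def aggregate_multi_scale(channels, scales=(1, 3, 5)):
    """Assign channels to groups, process each group at its own scale, then rejoin."""
    return [box_blur(ch, scales[idx % len(scales)]) for idx, ch in enumerate(channels)]

def interact(context, texture):
    """Mix paired channels from both branches, then re-weight the mixed channels."""
    mixed = [[c + t for c, t in zip(cc, tc)] for cc, tc in zip(context, texture)]
    # Score each mixed channel by mean absolute activation (an assumed proxy
    # for how informative the channel is).
    scores = [sum(abs(v) for v in ch) / len(ch) for ch in mixed]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]  # softmax, so the weights sum to 1
    reweighted = [[w * v for v in ch] for w, ch in zip(weights, mixed)]
    return reweighted, weights

# Three context channels with the same coarse blob, and three texture channels
# carrying no, weak, and strong fine-detail evidence respectively.
context = [[0.0, 0.0, 1.0, 1.0, 0.0, 0.0] for _ in range(3)]
texture = [
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.5, 0.5, 0.0, 0.0],
    [0.0, 0.0, 2.0, 2.0, 0.0, 0.0],
]

refined = aggregate_multi_scale(context)
fused, weights = interact(refined, texture)
print([round(w, 3) for w in weights])
```

In this toy setup, the channel paired with the strongest texture evidence receives the largest weight, which mirrors the described behavior of amplifying informative combinations and suppressing the rest.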

Proving it works in the wild and in the clinic

To test CTHINet, the researchers evaluated it on three challenging public benchmarks of camouflaged animals and objects, containing thousands of images in varied natural settings. Across several standard accuracy measures, the new method consistently outperformed more than twenty leading systems, especially on difficult scenes with small targets, heavy background matching, or partial occlusion. The team also tried the same network, with minimal changes, on a medical task: segmenting polyps in colonoscopy images. Polyps often blend into the intestinal wall in much the same way that animals blend into foliage. Here too, CTHINet delivered the best results among several strong medical‑image models, suggesting that its way of combining context and texture is broadly useful.
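The article does not name the accuracy measures used. As an assumption for illustration only, the sketch below computes two measures that are commonly applied to segmentation masks: mean absolute error (lower is better) and the Dice coefficient (higher is better). The masks are made‑up toy data, not results from the paper.

```python
def mean_absolute_error(pred, truth):
    """Average per-pixel absolute difference between prediction and ground truth."""
    flat_p = [v for row in pred for v in row]
    flat_t = [v for row in truth for v in row]
    return sum(abs(p - t) for p, t in zip(flat_p, flat_t)) / len(flat_t)

def dice(pred, truth, threshold=0.5):
    """Dice overlap between the thresholded prediction and the binary ground truth."""
    p = [v >= threshold for row in pred for v in row]
    t = [v >= 0.5 for row in truth for v in row]
    overlap = sum(a and b for a, b in zip(p, t))
    total = sum(p) + sum(t)
    return 2 * overlap / total if total else 1.0  # two empty masks count as a match

# Toy 2x4 masks: the prediction misses one object pixel and adds one false pixel.
truth = [[0, 1, 1, 0],
         [0, 1, 1, 0]]
pred = [[0.0, 1.0, 1.0, 0.0],
        [0.0, 1.0, 0.0, 1.0]]

print(mean_absolute_error(pred, truth))  # 0.25
print(dice(pred, truth))                 # 0.75
```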

What this means for finding the nearly invisible

In everyday terms, CTHINet embodies a simple but powerful insight: to find something that is meant to be hidden, a computer must look at both the big picture and the tiniest surface details, and let these two views inform each other step by step. By designing a network that cleanly separates these roles, then reunites them through carefully staged interactions, the authors achieve more accurate detection of camouflaged targets and show promise for medical and industrial inspection tasks where important structures can be easily overlooked. As image data continue to grow, such context‑ and texture‑aware systems may become key tools for revealing what was intended to stay unseen.

Citation: Wang, Z., Deng, Y., Shen, C. et al. Camouflaged object detection via context and texture-aware hierarchical interaction. Sci Rep 16, 9328 (2026). https://doi.org/10.1038/s41598-025-32409-9

Keywords: camouflaged object detection, computer vision, texture analysis, medical image segmentation, deep learning