Clear Sky Science

Incremental learning approach for semantic segmentation of skin histology images

Why teaching computers to read skin samples matters

Skin cancer is one of the most common cancers worldwide, and doctors often rely on thin slices of tissue, viewed under a microscope, to decide how serious a tumor is and how to treat it. Reading these slides is slow, demanding work that can vary from one expert to another. This study explores how to build computer systems that learn to recognize different skin tissues and cancers on these microscope images, and, importantly, keep getting better over time as new types of images are added—much like a human trainee who continues to learn throughout their career.

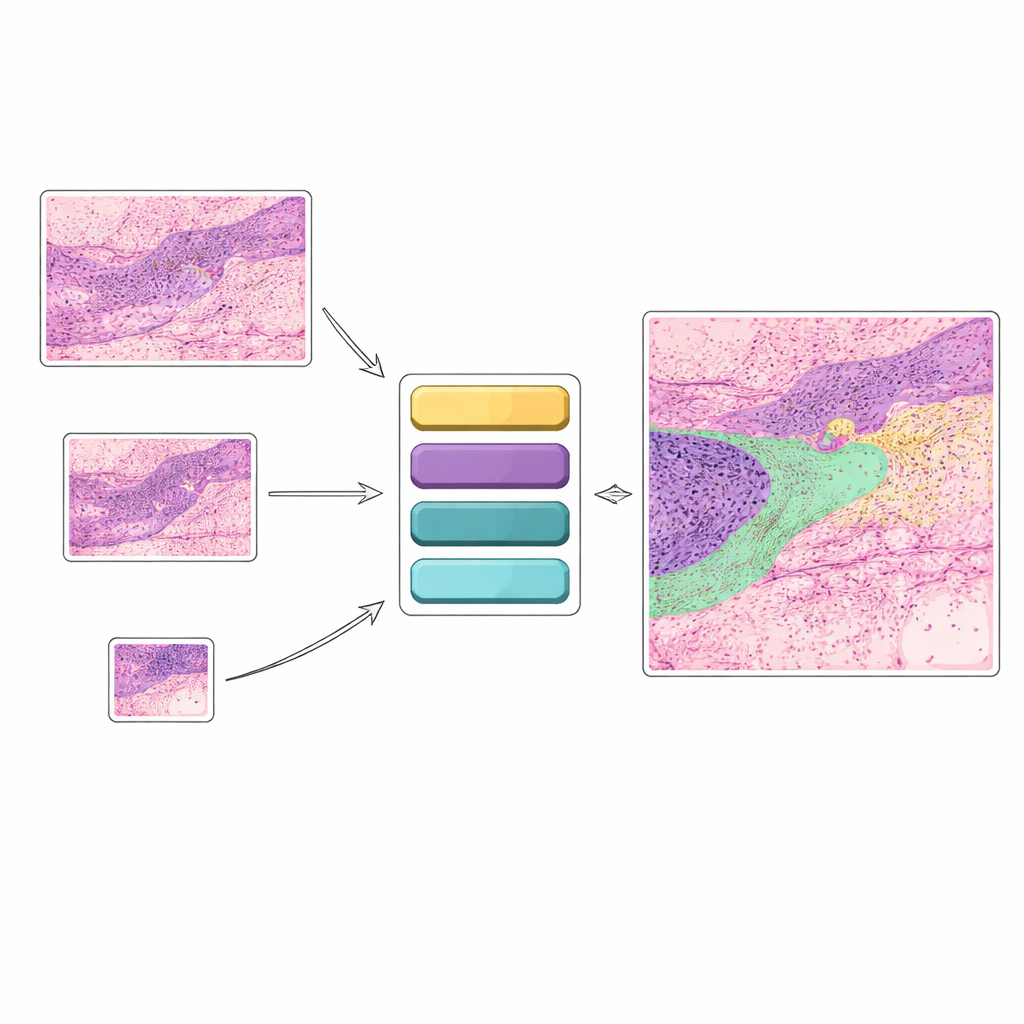

From simple yes–no answers to detailed tissue maps

Many existing artificial intelligence tools for skin cancer look at an image and answer a narrow question such as “cancer” or “no cancer.” While useful, this kind of yes–no decision does not capture the rich detail that pathologists see. In practice, doctors care about many structures at once: different cancer types, healthy skin layers, hair follicles, glands, inflamed regions, and more. This study instead focuses on “semantic segmentation,” where each pixel in a histology image is assigned to one of twelve tissue categories. That produces a color-coded map showing exactly where various cancers and normal tissues lie, offering clearer guidance for diagnosis and treatment planning.
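Here is a toy Python sketch of what a per-pixel map means. It assumes a model has already produced one score per tissue class for every pixel. It is not the authors' code: the three class names, the colors, and the helpers `label_map` and `colorize` are hypothetical, and the real task uses twelve classes on much larger images. Each pixel takes the class with the highest score, and a color palette turns those labels into a map.

```python
from typing import Dict, List, Tuple

# [row][col][class] -> score; in practice these come from a trained network.
Scores = List[List[List[float]]]
Labels = List[List[int]]

# Hypothetical three-class example (the study itself uses twelve tissue classes).
CLASS_NAMES = ["background", "epidermis", "carcinoma"]
PALETTE: Dict[int, Tuple[int, int, int]] = {
    0: (255, 255, 255),  # white for background
    1: (255, 200, 150),  # tan for epidermis
    2: (200, 0, 0),      # red for carcinoma
}


def label_map(scores: Scores) -> Labels:
    """Give every pixel the index of its highest-scoring class (per-pixel argmax)."""
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]


def colorize(labels: Labels) -> List[List[Tuple[int, int, int]]]:
    """Turn class indices into RGB colors to draw the tissue map."""
    return [[PALETTE[c] for c in row] for row in labels]


# Toy 2 x 3 "image": each pixel holds one score per class.
toy_scores = [
    [[0.9, 0.05, 0.05], [0.2, 0.7, 0.1], [0.1, 0.2, 0.7]],
    [[0.6, 0.3, 0.1], [0.1, 0.1, 0.8], [0.3, 0.4, 0.3]],
]
labels = label_map(toy_scores)
print(labels)  # [[0, 1, 2], [0, 2, 1]]
print([[CLASS_NAMES[c] for c in row] for row in labels])
```

A yes-or-no classifier would return a single answer for the whole image. This output instead returns a class for every pixel, and that per-pixel output is what makes a color-coded map possible.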

Why current smart systems struggle to adapt

Today’s powerful deep learning models usually assume that all the training data are available at once. Once trained, they tend to “lock in” their knowledge. If new data with different properties—for example, images at another magnification—are introduced later, the safest option is often to retrain the entire model from scratch. That is costly and slow, and, even worse, adding new information can cause “catastrophic forgetting,” where performance on earlier tasks quietly degrades. In clinical settings, however, data evolve all the time as scanners are upgraded, imaging settings change, and hospitals collect new kinds of samples. An AI tool that cannot gracefully absorb such changes is hard to trust in everyday practice.

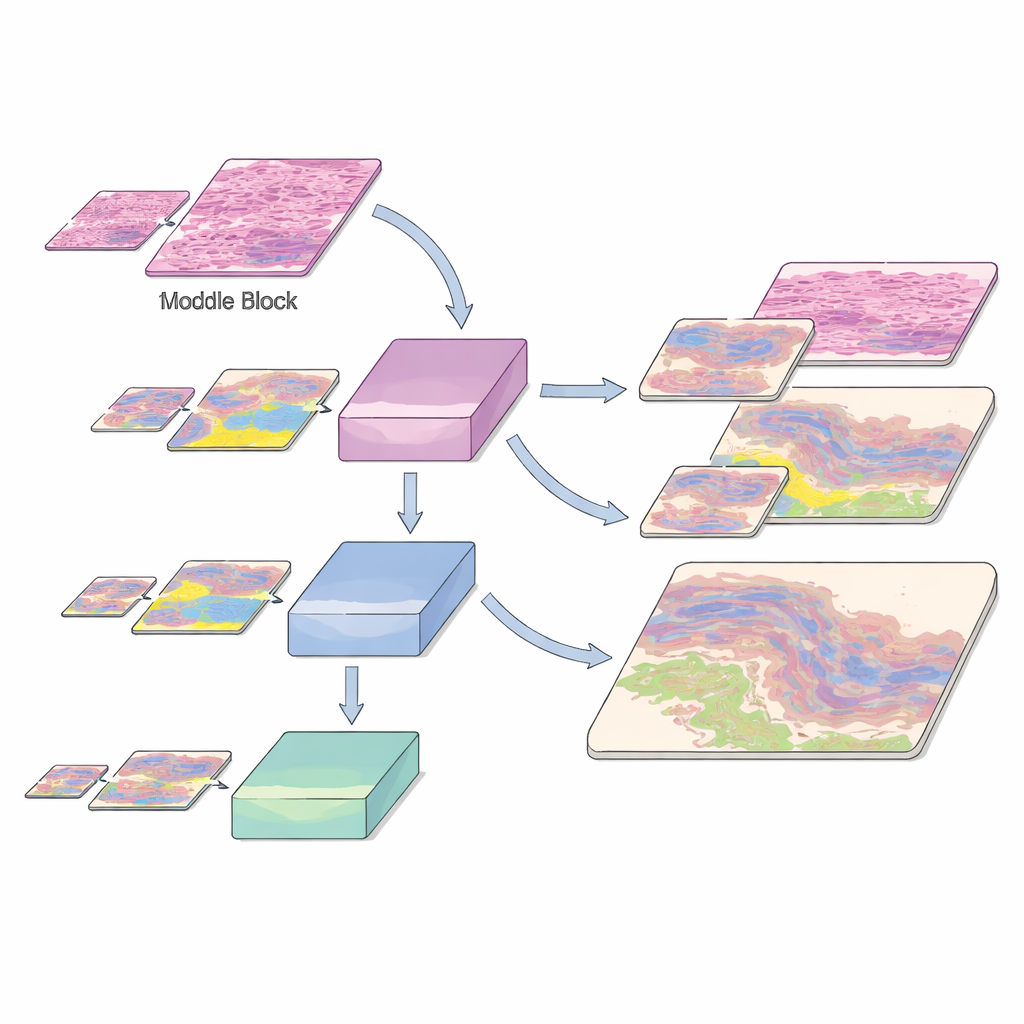

A stepwise learning strategy inspired by how humans learn

The authors build on a modern vision transformer architecture called SegFormer and turn it into an “incremental learning” system for non-melanoma skin cancer. Instead of seeing all data at once, the model is trained in stages using histology slides from a public dataset released by the University of Queensland. First, it learns from high-magnification (10×) images, where fine detail is clear. Later, images at 5× and then 2× magnification are added, while a portion of the earlier high-detail data is kept in the mix. Special loss functions help the new version of the model retain what it previously knew about tissue patterns, even as it adapts to the coarser, more zoomed-out views. This “learning without forgetting” is guided by a technique called knowledge distillation, where an earlier model acts as a teacher to the newer one, and by a mutual distillation term that nudges the old and new representations to stay consistent with each other.
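The summary does not give the exact loss functions. The following Python sketch shows only the generic, Hinton-style teacher-student distillation recipe. It assumes per-pixel class scores ("logits") from a frozen earlier model (the teacher) and from the model being trained (the student). The weight `alpha`, the temperature, and all function names are hypothetical choices, and the paper's mutual distillation term is not reproduced here.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Convert raw class scores into probabilities; a higher temperature gives softer outputs."""
    scaled = [z / temperature for z in logits]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits: Sequence[float], target: int) -> float:
    """Standard supervised loss against the expert's label for one pixel."""
    return -math.log(softmax(logits)[target])


def distillation_term(student: Sequence[float], teacher: Sequence[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened probabilities, scaled by T^2 (Hinton-style)."""
    p_t = softmax(teacher, temperature)
    p_s = softmax(student, temperature)
    kl = sum(t * (math.log(t) - math.log(s)) for t, s in zip(p_t, p_s))
    return kl * temperature ** 2


def incremental_loss(student_pixels, teacher_pixels, targets,
                     alpha: float = 0.5, temperature: float = 2.0) -> float:
    """Mean over pixels of: cross-entropy on labels + alpha * distillation toward the old model.

    alpha and temperature are hypothetical settings, not values from the paper.
    """
    n = len(targets)
    ce = sum(cross_entropy(s, y) for s, y in zip(student_pixels, targets)) / n
    kd = sum(distillation_term(s, t, temperature)
             for s, t in zip(student_pixels, teacher_pixels)) / n
    return ce + alpha * kd


# Two pixels, three classes: the teacher is the frozen model from the previous stage.
teacher = [[2.0, 0.5, -1.0], [0.1, 1.5, 0.3]]
student = [[1.8, 0.7, -0.9], [0.2, 0.4, 1.6]]
labels = [0, 1]
print(incremental_loss(student, teacher, labels))
```

When the student's scores match the teacher's, the distillation term is zero and only the ordinary cross-entropy on the expert labels remains. The further the student drifts from what the old model predicted, the larger the penalty becomes, and that penalty is what discourages forgetting.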

Learning across zoom levels and rare tissue types

Histology images are challenging not just because there are many tissue types, but also because some important structures are rare. The dataset includes common cancers such as basal cell carcinoma and squamous cell carcinoma, as well as normal and inflamed skin layers, each annotated at the pixel level by experts—a painstaking process taking hundreds of hours. The authors slice these huge slides into small patches and train their model using a careful split into training, validation, and test sets, preserving the mix of tissue classes at each magnification. To help the system recognize scarce but clinically crucial regions, they augment underrepresented classes by rotating patches and by exposing the model to those tissues at multiple zoom levels. This multi-resolution exposure helps the AI recognize the same biological structure whether it appears as a crisp close-up or a softer, zoomed-out shape.
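Cutting slides into patches and rotating rare-class patches takes only a few lines of Python. This is an illustration under stated assumptions, not the authors' pipeline. It works on label masks only; a real pipeline would rotate the matching image patch in exactly the same way. It also assumes non-overlapping square tiles, and it adds 90°, 180°, and 270° rotations of any patch that contains a class marked as rare. The patch size, the rare-class set, and the function names are hypothetical.

```python
from typing import List, Set

Grid = List[List[int]]  # a 2D label mask: one class index per pixel


def extract_patches(mask: Grid, size: int) -> List[Grid]:
    """Cut a mask into non-overlapping size x size tiles, dropping incomplete edge tiles."""
    rows, cols = len(mask), len(mask[0])
    patches = []
    for r in range(0, rows - size + 1, size):
        for c in range(0, cols - size + 1, size):
            patches.append([row[c:c + size] for row in mask[r:r + size]])
    return patches


def rotate90(patch: Grid) -> Grid:
    """Rotate a square patch 90 degrees clockwise."""
    return [list(row) for row in zip(*patch[::-1])]


def augment_rare(patches: List[Grid], rare_classes: Set[int]) -> List[Grid]:
    """Keep all patches, plus three rotated copies of each patch containing a rare class."""
    out = list(patches)
    for patch in patches:
        if any(v in rare_classes for row in patch for v in row):
            rotated = patch
            for _ in range(3):  # 90, 180 and 270 degrees
                rotated = rotate90(rotated)
                out.append(rotated)
    return out


# Toy 4 x 4 mask: class 2 stands in for a scarce tissue type.
mask = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [1, 1, 2, 1],
    [1, 1, 1, 1],
]
patches = extract_patches(mask, size=2)
augmented = augment_rare(patches, rare_classes={2})
print(len(patches), len(augmented))  # 4 7
```

Only the one patch that contains the scarce class gains extra copies. The rare tissue therefore appears more often during training, and the common classes are not duplicated.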

What the model achieves compared with earlier tools

On their own, SegFormer models trained separately at each magnification already outperform earlier convolutional designs such as U-Net on many tissue categories. But when the incremental learning scheme is applied—training first at 10×, then at 10× plus 5×, and finally at 10×, 5×, and 2× together—the gains become striking. Overall accuracy rises from about 89% with only 10× images to more than 95% after all three magnifications are included. Measures of overlap between predicted and true regions also improve steadily, and performance on cancers such as basal cell and squamous cell carcinoma, as well as key normal layers like the epidermis and papillary dermis, exceeds that of competing methods. Importantly, as each new zoom level is added, the model does not forget what it learned earlier; instead, its understanding of tissue structure becomes more robust and general.
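Pixel accuracy and region overlap can be computed as follows. The summary does not say which overlap measures the paper reports. This Python sketch assumes two common ones, intersection over union (IoU) and the Dice coefficient, computed per class and then averaged. The function names and the toy masks are hypothetical.

```python
from typing import Dict, List, Tuple

Grid = List[List[int]]


def pixel_accuracy(pred: Grid, truth: Grid) -> float:
    """Fraction of pixels whose predicted class matches the expert annotation."""
    pairs = [(p, t) for pr, tr in zip(pred, truth) for p, t in zip(pr, tr)]
    return sum(p == t for p, t in pairs) / len(pairs)


def per_class_overlap(pred: Grid, truth: Grid,
                      num_classes: int) -> Dict[int, Tuple[float, float]]:
    """Return {class: (IoU, Dice)} for every class present in the prediction or the truth."""
    pairs = [(p, t) for pr, tr in zip(pred, truth) for p, t in zip(pr, tr)]
    scores = {}
    for c in range(num_classes):
        tp = sum(p == c and t == c for p, t in pairs)  # correctly labeled as c
        fp = sum(p == c and t != c for p, t in pairs)  # wrongly labeled as c
        fn = sum(p != c and t == c for p, t in pairs)  # c pixels that were missed
        if tp + fp + fn == 0:
            continue  # class absent from both masks: skip instead of scoring it as perfect
        scores[c] = (tp / (tp + fp + fn), 2 * tp / (2 * tp + fp + fn))
    return scores


def mean_iou(scores: Dict[int, Tuple[float, float]]) -> float:
    """Average IoU over classes, so each tissue type counts equally."""
    return sum(iou for iou, _ in scores.values()) / len(scores)


truth = [[0, 1, 2], [0, 2, 1]]
pred = [[0, 1, 2], [0, 2, 2]]
print(pixel_accuracy(pred, truth))  # 5 of 6 pixels correct
print(per_class_overlap(pred, truth, 3))
print(mean_iou(per_class_overlap(pred, truth, 3)))
```

Averaging per class rather than per pixel keeps large classes, such as background, from hiding poor results on small but clinically important regions.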

How this work moves AI-assisted diagnosis closer to the clinic

For non-specialists, the main message is that the authors have created a skin tissue “map-maker” that can keep studying new kinds of images without losing its old skills. By carefully designing how the model learns in stages and how it reuses its own past knowledge, they show that it is possible to build AI tools that adapt as medical imaging practices evolve. While more validation is needed across hospitals and disease types, this incremental, transformer-based approach points toward AI systems that can stay up to date with changing data, offer detailed visual explanations of where cancers lie, and ultimately support pathologists in making more confident and consistent treatment decisions.

Citation: Fatima, S., Salam, A.A., Akram, M.U. et al. Incremental learning approach for semantic segmentation of skin histology images. Sci Rep 16, 9593 (2026). https://doi.org/10.1038/s41598-025-31553-6

Keywords: skin cancer, histology images, semantic segmentation, incremental learning, deep learning transformers