Clear Sky Science · en

A novel approach to preeclampsia early prediction addressing predictive uncertainty due to missing data in clinical dataset

Why this matters for mothers and babies

Preeclampsia is a dangerous complication of pregnancy that can suddenly threaten the lives of both mother and baby. Doctors know that simple steps, such as giving low-dose aspirin very early in pregnancy, can greatly reduce the risk for women who are likely to develop the condition. The challenge is spotting those high‑risk pregnancies in time, and doing so reliably when real‑world medical records are often incomplete. This study introduces a new way to predict preeclampsia early while also telling doctors how much they should trust each prediction.

Understanding a silent pregnancy threat

Preeclampsia affects 2–8% of pregnancies worldwide. It usually appears later in pregnancy, but its roots are laid much earlier. Mothers with preeclampsia can suffer damage to the kidneys, liver, brain, and other organs, and in the worst cases both mother and baby can die. Babies may stop growing properly or need to be delivered very prematurely. Because starting low‑dose aspirin before 16 weeks of pregnancy can cut the risk of early preeclampsia by more than half, being able to identify women at high risk in the first trimester could transform care. Relying only on a clinician’s experience, however, has proved too uncertain for such high‑stakes decisions.

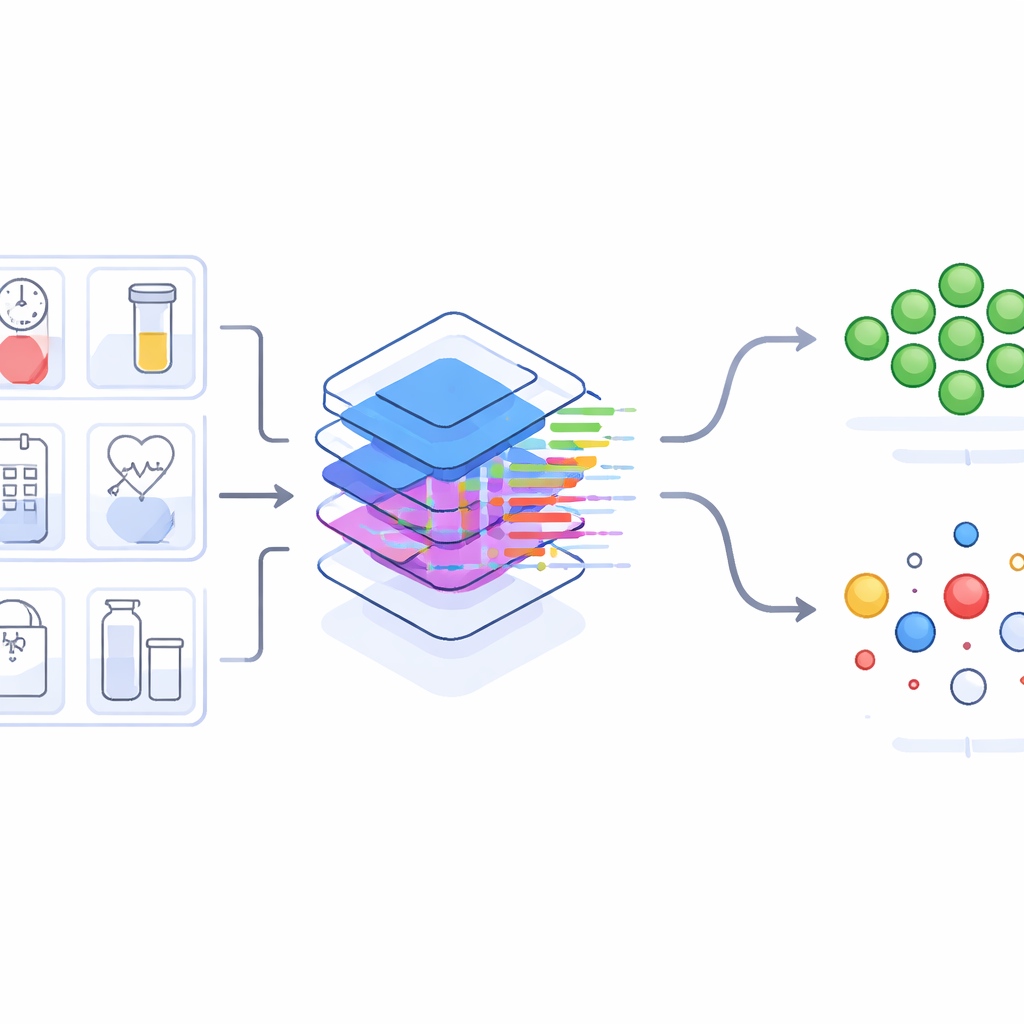

Turning messy medical records into useful warnings

Over the last decade, many research teams have used machine‑learning methods to predict preeclampsia from routine clinic and lab information. These models typically reach moderate accuracy, but they all share a major problem: they assume every prediction is equally trustworthy, even when key test results are missing from a patient’s record. In real antenatal care, blood tests and follow‑up visits are often skipped, especially in busy outpatient settings. This means large hospital databases are full of gaps. Earlier studies mostly ignored how those gaps affect confidence in each prediction, which may have hidden the true potential of these models.

Adding an "honesty meter" to risk scores

The authors analyzed records from more than 31,000 singleton pregnancies at three hospitals in Korea, using information collected before 16 weeks of gestation. They built a prediction model that outputs a preeclampsia risk score between 0 and 1. Then they added a second number: an uncertainty score that reflects how much missing information might be undermining that prediction. To do this, they examined how strongly each clinical or laboratory variable typically pushes the risk up or down in women whose data are complete. Variables whose values strongly influence the model—such as mean arterial blood pressure, a long gap since the last pregnancy or being a first‑time mother, certain pregnancy‑related proteins, and HDL cholesterol—were judged more important. If such a crucial variable was missing for a given woman, her uncertainty score increased more than it would for a missing less‑important item.

What happens when you trust only clearer signals

Armed with this uncertainty score, the team asked how the model performs when they focus only on pregnancies with relatively complete and informative data. In internal testing, when they ignored uncertainty and used all women, the model’s ability to distinguish who would and would not develop preeclampsia was good but not exceptional. As they gradually restricted evaluation to women with lower uncertainty scores—meaning fewer or less‑critical missing values—the accuracy rose steadily. At a modest uncertainty level, the model’s performance was already better than previous reports; at very low uncertainty, its accuracy became strikingly high, correctly identifying nearly all future preeclampsia cases with few false alarms. A similar pattern appeared when the model was tested on an independent hospital’s data, suggesting the approach is robust even across different clinics and patient groups.

Clues for better testing and future care

Because the method tracks how much each variable contributes to uncertainty, it can guide which measurements are most worth collecting in early pregnancy. The analysis showed that no single test is enough: many variables each add a small but important piece of information. The framework is flexible and could be paired with other, more complex machine‑learning models or extended to other rare pregnancy problems. At the same time, the authors caution that their work is exploratory, based mostly on Korean women with single pregnancies, and that the most impressive accuracy estimates come from small, low‑uncertainty subgroups where very few preeclampsia cases exist. More diverse studies and careful choice of decision thresholds will be needed before such a tool can shape real‑world care.

What this means for expecting families

This study does not yet offer a clinic‑ready test, but it points toward smarter, more transparent prediction tools. Instead of providing a bare risk score, future systems could also say how certain they are, helping doctors avoid overconfidence when important pieces of the puzzle are missing. By learning which routine measurements matter most and how to handle imperfect data, this work lays the groundwork for safer, earlier identification of pregnancies at risk of preeclampsia—offering more time to protect the health of both mother and baby.

Citation: Kim, J.W., Kim, N., Kim, J.Y. et al. A novel approach to preeclampsia early prediction addressing predictive uncertainty due to missing data in clinical dataset. Sci Rep 16, 8455 (2026). https://doi.org/10.1038/s41598-025-27801-4

Keywords: preeclampsia, pregnancy risk prediction, machine learning in obstetrics, clinical data uncertainty, maternal health