
3D reconstruction of shallow sea structures using direct system calibration and faint laser line extraction

Bringing Hidden Underwater Worlds into View

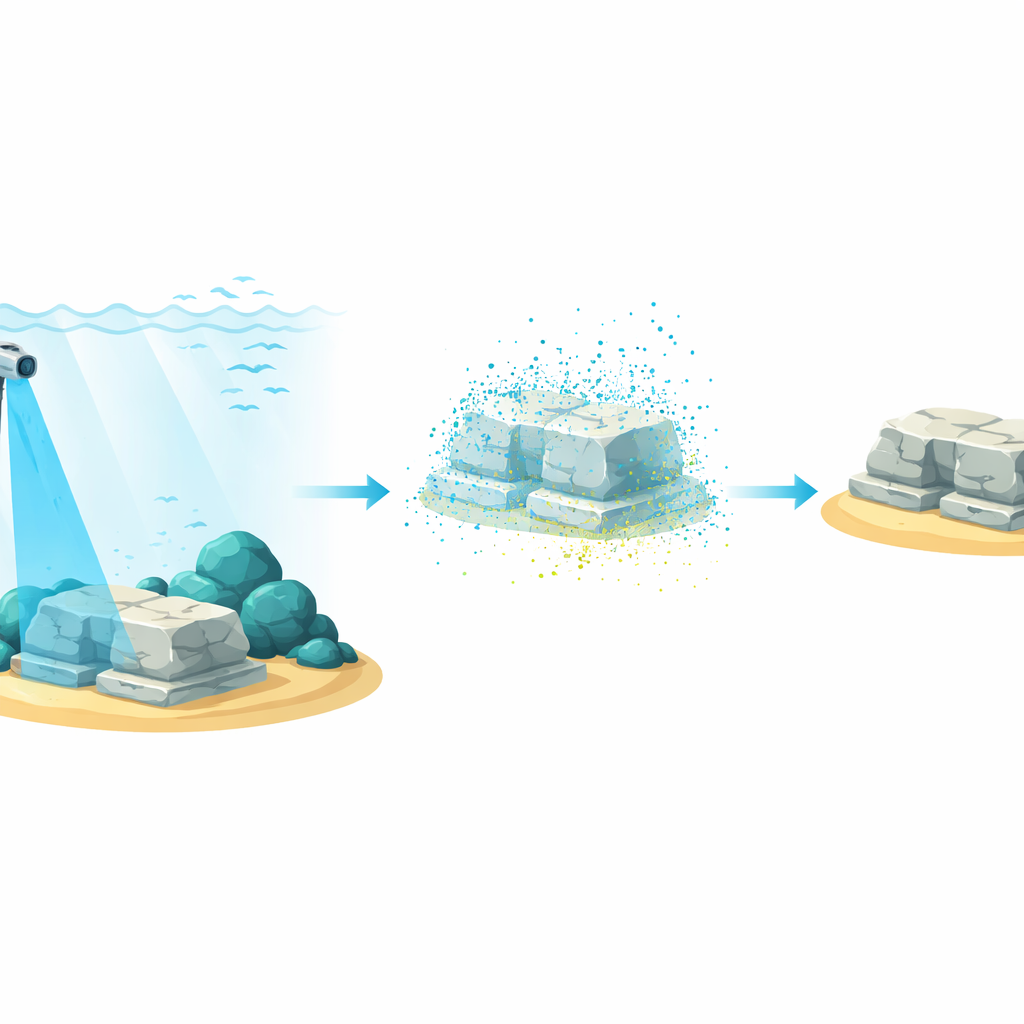

Many of the most intriguing traces of our past now lie underwater—shipwrecks, drowned cities, and coastal ruins. To explore and preserve these sites digitally, researchers need accurate 3D maps of what lies on the seafloor. Yet in shallow water, bright sunlight, floating sand, and the water itself make precise measurements surprisingly hard. This paper presents a new way to scan and reconstruct detailed 3D models of underwater structures using a low-power blue laser, even in sunlit, noisy conditions where existing methods largely fail.

Why Scanning Shallow Seas Is So Difficult

Creating a digital 3D model of a scene usually means assembling millions of points in space—what scientists call a point cloud. On land, lasers and cameras do this reliably. Underwater, however, things get messy. Water bends and scatters light, washing scenes in blue-green haze and blurring edges. Sunlight shining through waves creates bright moving patterns called caustics that can drown out the thin line of a low-power laser. Microscopic particles add a veil of fog and flickering reflections. As a result, many current underwater systems only work at night, in very low light, or under carefully controlled conditions, which is not how real oceans behave.

A Rotating Blue Laser as a 3D Paintbrush

The authors built a compact, waterproof scanner that acts like a 3D paintbrush. It projects a thin vertical sheet of blue laser light that sweeps around as the arm of the device slowly rotates. Wherever this sheet touches a rock, wall, or artifact, it traces out a glowing curve. A camera mounted next to the laser captures images at each small rotation step. By combining all these views, the system can reconstruct a dense 3D point cloud of the surroundings, complete with approximate color, which can later be turned into a surface mesh for visualization or virtual reality.
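The way the per-step laser curves combine into one point cloud can be illustrated with a small sketch. It assumes, purely for illustration, that each rotation step yields a list of 3D points in the scanner's own frame, that the arm turns about the vertical (y) axis, and that the step angle is constant. The function names and the axis convention are hypothetical, not taken from the paper.

```python
import math

def rotate_about_vertical(point, angle_rad):
    """Rotate a 3D point about the y axis (assumed here to be the rotation axis)."""
    x, y, z = point
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (c * x + s * z, y, -s * x + c * z)

def assemble_point_cloud(profiles, step_deg):
    """Merge per-step laser profiles into a single cloud in a common frame.

    profiles: one list of (x, y, z) points per rotation step, each expressed
              in the scanner frame at that step.
    step_deg: assumed constant rotation increment between steps, in degrees.
    """
    cloud = []
    for step, profile in enumerate(profiles):
        angle = math.radians(step * step_deg)
        cloud.extend(rotate_about_vertical(p, angle) for p in profile)
    return cloud
```

With a 90-degree step, a point one unit straight ahead of the scanner at step 0 stays on the z axis, while the same local point captured at step 1 lands on the x axis.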

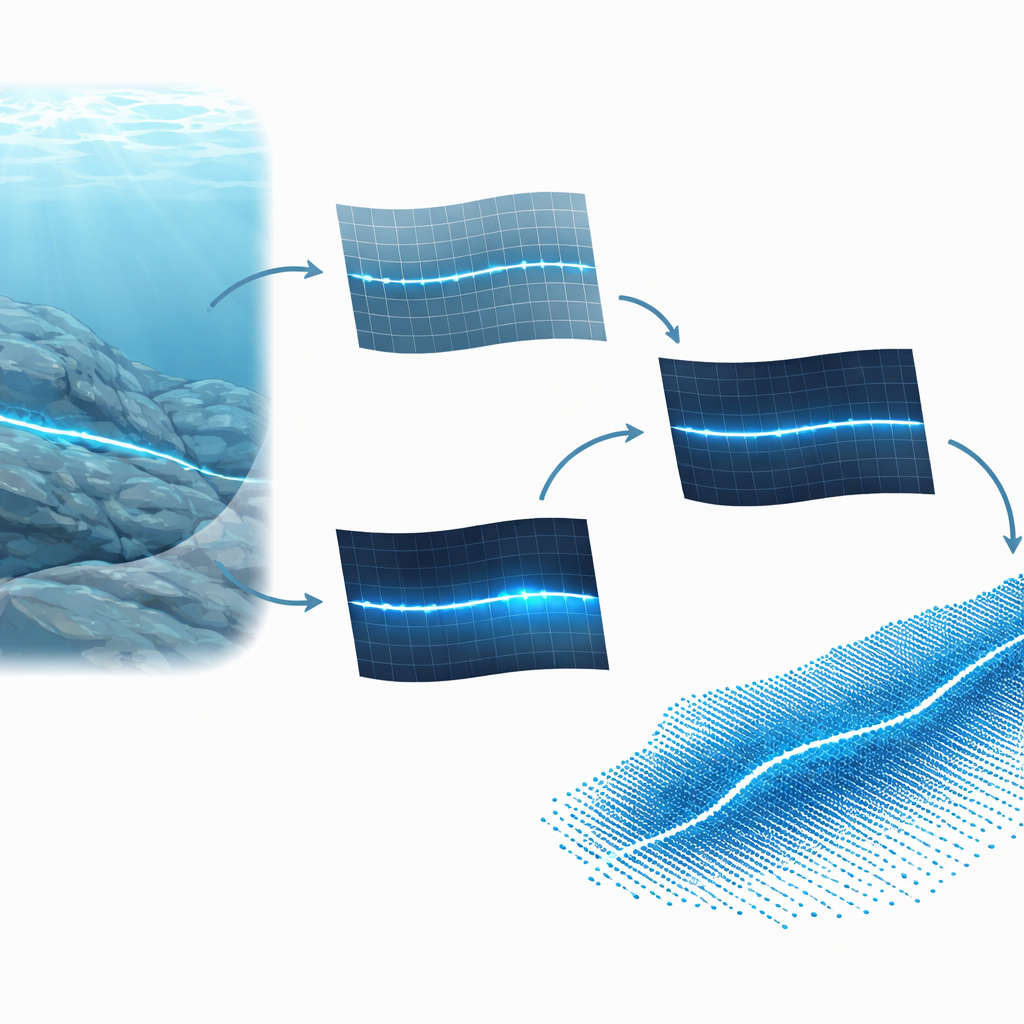

Teaching the System Where Each Pixel Lives in Space

A central challenge in such systems is calibration: figuring out how each camera pixel lines up with real-world coordinates. Traditional approaches rely on detailed mathematical models of the camera and water, with dozens of parameters that must be tuned, making them fragile and error-prone. Here, the researchers take a data-centric route. They directly learn a mapping from image pixels to 3D positions by scanning a wall covered with a known grid. Only a few hundred carefully chosen sample points are needed. Once stored in a look-up table, this map lets the scanner convert any detected laser pixel into a 3D point without ever explicitly solving complicated camera equations.
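To make the look-up idea concrete, here is a minimal sketch of a direct pixel-to-3D mapping built from sparse calibration samples. The interpolation used (inverse-distance weighting over the nearest samples) is an assumption chosen for brevity; the article does not say how the authors fill in pixels between their sample points, and the function name `pixel_to_3d` is hypothetical.

```python
import math

def pixel_to_3d(lookup, u, v, k=4):
    """Estimate the 3D point for pixel (u, v) from sparse calibration samples.

    lookup: non-empty list of ((u, v), (x, y, z)) pairs measured on the grid.
    k:      number of nearest samples to blend (an assumed setting).
    """
    if not lookup:
        raise ValueError("calibration table is empty")

    def pixel_distance(sample):
        (su, sv), _ = sample
        return math.hypot(su - u, sv - v)

    nearest = sorted(lookup, key=pixel_distance)[:k]
    weighted = []
    for sample in nearest:
        d = pixel_distance(sample)
        if d < 1e-9:
            # The query pixel is itself a calibration sample: return it directly.
            return tuple(float(c) for c in sample[1])
        # Inverse-square weighting: closer samples dominate the estimate.
        weighted.append((1.0 / (d * d), sample[1]))
    total = sum(w for w, _ in weighted)
    return tuple(sum(w * p[i] for w, p in weighted) / total for i in range(3))
```

No camera matrix, distortion coefficient, or refraction model appears anywhere in the function: the geometry is carried entirely by the measured samples, which is the point of the direct approach.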

Straightening and Amplifying a Faint Blue Trace

Because the calibration is done in air, underwater footage must first be “straightened” to undo the bending caused by refraction at the water surface. The team measures this warping using images of a grid that straddles air and water, then computes how each underwater pixel would shift if seen in air. After this dewarping, the real trick begins: finding a faint, often broken blue line in a noisy image. The method first computes a “blueness” value for every pixel, tuned so that light close to the laser’s blue hue stands out. It then looks at how much bluer each pixel is than its neighbors and uses a machine-learning classifier to form a rough black-and-white map of likely laser pixels.
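The blueness and neighbor-comparison steps can be sketched as follows. This is an illustration under stated assumptions, not the authors' formula: the laser hue (pure blue on the HSV circle), the hue tolerance, the window size, and the threshold are all made-up values, and a fixed threshold stands in for the machine-learning classifier described above.

```python
import colorsys

LASER_HUE = 2.0 / 3.0  # assumed: pure blue on the HSV hue circle (0..1)
HUE_WIDTH = 0.12       # assumed hue tolerance around the laser color

def blueness(r, g, b):
    """Score in [0, 1] for how close an 8-bit RGB pixel is to the laser hue."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    d = abs(h - LASER_HUE)
    d = min(d, 1.0 - d)  # hue is circular, so take the shorter way round
    return max(0.0, 1.0 - d / HUE_WIDTH) * s * v

def local_contrast(row, half_window=3):
    """For each value, subtract the mean of its horizontal neighbors."""
    out = []
    for i, value in enumerate(row):
        lo, hi = max(0, i - half_window), min(len(row), i + half_window + 1)
        neighbours = row[lo:i] + row[i + 1:hi]
        mean = sum(neighbours) / len(neighbours) if neighbours else 0.0
        out.append(value - mean)
    return out

def candidate_mask(image, threshold=0.2):
    """Rough black-and-white map of likely laser pixels.

    image: list of rows, each row a list of (r, g, b) tuples.
    A fixed threshold replaces the learned classifier in this sketch.
    """
    mask = []
    for row in image:
        scores = local_contrast([blueness(*px) for px in row])
        mask.append([score > threshold for score in scores])
    return mask
```

Comparing each pixel with its neighbors, rather than thresholding blueness directly, is what keeps a uniformly blue-green background from lighting up the whole mask.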

From Noisy Dots to Clean 3D Shapes

That first map still contains many false hits from sand, reflections, and caustics. To clean it, the system searches for straight line patterns using a classic technique that votes for possible lines based on the pixel positions. It keeps only those lines that match the expected orientation of the laser. A smooth curve is then fit through the remaining points, and each pixel’s “confidence” is raised if it lies close to this curve and has strong blueness. For every row in the image, the pixel with the highest confidence is chosen as part of the final laser trace. Feeding these cleaned traces, step by step, into the calibration table produces a 3D point cloud colored from the original camera image.
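A simplified version of this cleanup stage is sketched below. It assumes a near-vertical laser line, uses a small Hough-style vote restricted to that orientation, and then picks one pixel per row by confidence. As a simplification, the winning straight line itself stands in for the fitted smooth curve, and the confidence formula (blueness times a Gaussian falloff with distance) is an assumed form; the tilt range, bin size, and `sigma` are illustrative values.

```python
import math

def hough_best_line(points, max_tilt_deg=15, rho_step=1.0):
    """Vote for lines x*cos(t) + y*sin(t) = rho, keeping only near-vertical t."""
    votes = {}
    for tilt in range(-max_tilt_deg, max_tilt_deg + 1):
        t = math.radians(tilt)
        c, s = math.cos(t), math.sin(t)
        for x, y in points:
            key = (tilt, round((x * c + y * s) / rho_step))
            votes[key] = votes.get(key, 0) + 1
    (tilt, rho_bin), _ = max(votes.items(), key=lambda kv: kv[1])
    return math.radians(tilt), rho_bin * rho_step

def extract_trace(mask, blueness, sigma=2.0):
    """Return {row: column} for the most confident laser pixel in each row.

    mask:     rows of booleans (candidate laser pixels).
    blueness: rows of floats with the same shape as mask.
    """
    points = [(x, y) for y, row in enumerate(mask)
              for x, on in enumerate(row) if on]
    if not points:
        return {}
    theta, rho = hough_best_line(points)
    c, s = math.cos(theta), math.sin(theta)
    trace = {}
    for y, row in enumerate(mask):
        best_x, best_conf = None, 0.0
        for x, on in enumerate(row):
            if not on:
                continue
            dist = abs(x * c + y * s - rho)  # distance to the winning line
            conf = blueness[y][x] * math.exp(-dist ** 2 / (2 * sigma ** 2))
            if conf > best_conf:
                best_x, best_conf = x, conf
        if best_x is not None:
            trace[y] = best_x
    return trace
```

Rows with no candidate pixel are simply absent from the result, which mirrors how a broken laser line leaves gaps rather than invented points. A stray pixel far from the line loses to a dimmer pixel on the line because its confidence is suppressed by the distance term.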

How Well Does It Work in Real Water?

The authors tested their system in tanks and in a shallow sea at about five meters depth, under lighting ranging from dim indoor levels to intense midday sun with tens of thousands of lux. They scanned objects with precisely known dimensions (a ball and a custom acrylic shape) and compared the measured sizes with ground truth. At distances up to roughly half a meter, the typical error stayed at a fraction of a millimeter even under bright light, and remained within a few tenths of a millimeter at greater distances until the laser line became almost invisible to the eye. Existing methods designed for dark conditions could not reconstruct scenes at these higher light levels at all.

What This Means for Exploring Underwater Sites

In essence, this work shows that accurate 3D mapping of shallow underwater structures does not require bulky high-power lasers or perfectly controlled darkness. By carefully correcting for water’s bending of light, emphasizing the laser’s color, and using a direct calibration that ties pixels to real-world positions, the system can reliably pull a faint blue trace out of noisy, sunlit scenes. Although performance drops in extremely bright conditions and with certain object colors, the approach opens the door to more routine, low-cost scanning of reefs, harbor walls, and submerged ruins, helping scientists and conservators build faithful digital copies of underwater worlds.

Citation: Garai, A., Kumar, S. 3D reconstruction of shallow sea structures using direct system calibration and faint laser line extraction. Sci Rep 16, 9321 (2026). https://doi.org/10.1038/s41598-025-25736-4

Keywords: underwater 3D scanning, laser line reconstruction, shallow sea mapping, point cloud imaging, underwater archaeology