Eye movement benchmark data for smooth-pursuit classification

Why Following Eyes Matters

Every time you read a sentence, watch a soccer game, or follow a firefly in the dark, your eyes perform a complex dance of quick jumps and smooth glides. These tiny movements reveal what we pay attention to and how our brains work, and they are increasingly used to study conditions such as brain injuries and dementia. But computers that analyze eye-tracking data still struggle to tell apart two key kinds of eye movements: steady staring at a still object and smoothly following something that moves. This paper introduces a carefully designed dataset to help researchers train and test better computer methods for telling these movements apart.

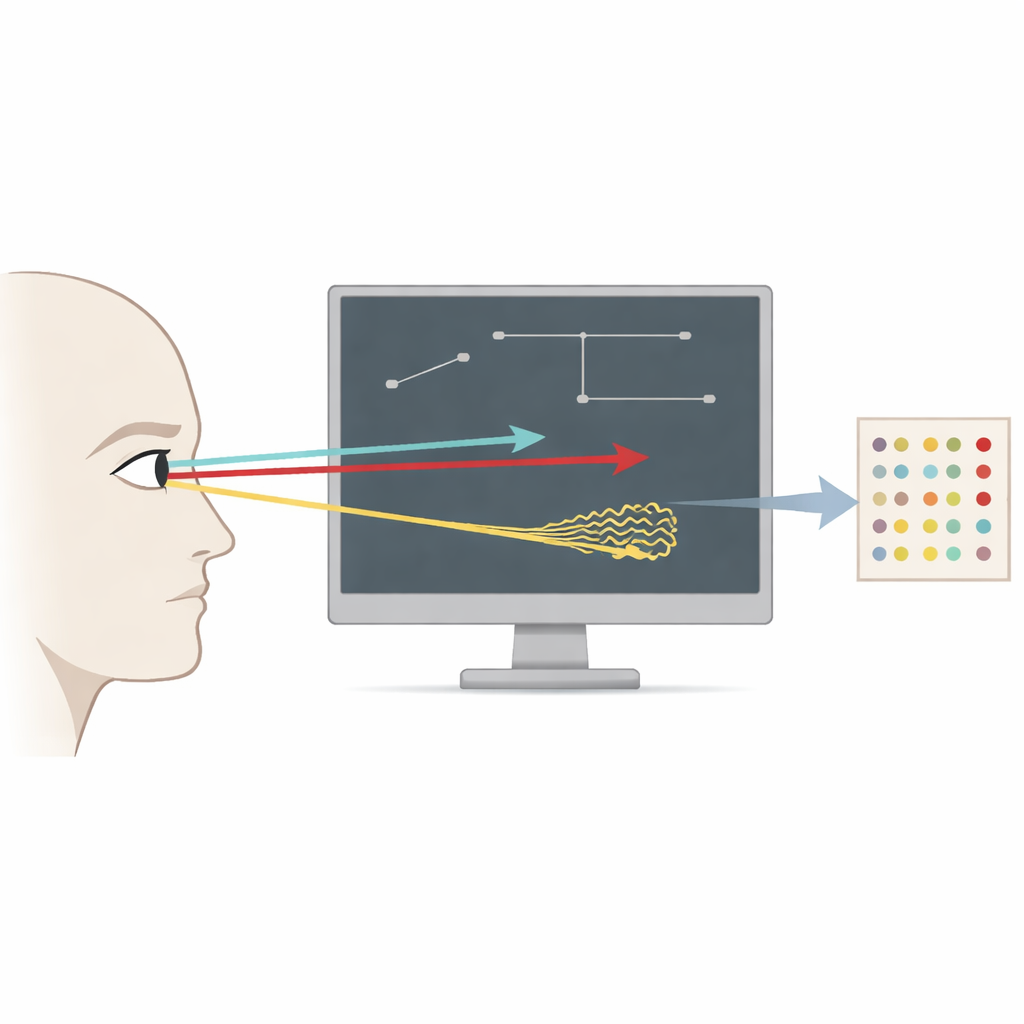

The Challenge of Reading Eye Movements

Eye-trackers record where our eyes point hundreds or even thousands of times per second, but turning those streams of numbers into meaningful events is tricky. There are fast jumps (saccades), steady looks at a spot (fixations), and smooth following of a moving object (smooth pursuits). Fixations and smooth pursuits look surprisingly similar in the raw data because in both cases the eye moves slowly from one point to the next. Human experts often disagree on which is which, and many computer algorithms confuse them as well. That is especially problematic because smooth pursuit performance is an important clue in diagnosing and understanding disorders like schizophrenia, traumatic brain injury, and neurodegenerative diseases.

Designing Clean, Controlled Eye Movements

To tackle this problem, the authors built a highly controlled experiment instead of relying on noisy real-world scenes. Ten university students sat with their heads stabilized in a chin rest, looking at a screen while a single small gray circle moved in different ways on a black background. The researchers created three simple “behaviors” for this circle: a moving circle that glided steadily across the screen, a jumping circle that hopped between fixed spots, and a back-and-forth circle that slid smoothly, then jumped back to start again. Each trial was designed so that only one kind of slow movement (either fixation or smooth pursuit) could occur, together with fast jumps. This clever setup means that long, slow stretches are almost certainly either pure staring or pure following, without both mixed together.
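The three circle behaviors can be pictured as simple functions of time. The sketch below is only an illustration, not the authors' experiment code: the function names, the one-dimensional position along the direction of motion, and the parameters (`speed`, `step`, `dwell`, `period`) are all assumptions made for this example.

```python
import numpy as np

# Hypothetical 1-D target paths (position along the direction of motion).
# Names and parameters are illustrative assumptions, not the authors' code.

def moving_target(t, speed, start=0.0):
    """Glide steadily: position grows linearly with time."""
    return start + speed * np.asarray(t, dtype=float)

def jumping_target(t, step, dwell):
    """Hop between fixed spots: hold still for `dwell` seconds, then jump by `step`."""
    return step * np.floor(np.asarray(t, dtype=float) / dwell)

def back_and_forth_target(t, speed, period):
    """Slide smoothly for `period` seconds, then jump back to the start (a sawtooth)."""
    return speed * np.mod(np.asarray(t, dtype=float), period)
```

In this picture, a trial built from `jumping_target` contains only still stretches and jumps, while trials built from the other two functions contain only smooth motion (plus the reset jump in the sawtooth case), which is the separation the design relies on.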

Careful Measurement and High-Quality Data

The team used a high-speed eye-tracker that recorded the position of the right eye 1,000 times per second while the computer screen refreshed 144 times per second. Targets moved along eight straight directions (up, down, left, right, and the four diagonals) and at three speeds representing slow, medium, and fast following. Each participant completed 144 short trials, adding up to about 24 minutes of data per person and nearly four hours overall. The researchers repeatedly calibrated the eye-tracker, checked how closely the recorded gaze matched the targets, and monitored how often data were missing because of blinks or tracking loss. Aside from a clearly identified set of misaligned trials for one participant, these checks showed that the eye and target positions lined up well and that fixations were stable and precise.
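A quick back-of-the-envelope check shows how these numbers fit together. The figures come from the paragraph above; because the 24 minutes per person is itself a rounded value, the derived numbers should be read as approximations, and the variable names are chosen only for this example.

```python
# Figures taken from the text; 24 minutes per participant is approximate.
SAMPLING_RATE_HZ = 1000          # gaze samples per second
REFRESH_RATE_HZ = 144            # screen frames per second
N_PARTICIPANTS = 10
TRIALS_PER_PARTICIPANT = 144
MINUTES_PER_PARTICIPANT = 24

# Gaze samples recorded during one screen frame (about 6.9).
samples_per_frame = SAMPLING_RATE_HZ / REFRESH_RATE_HZ

# Average trial length implied by the totals (about 10 seconds).
seconds_per_trial = MINUTES_PER_PARTICIPANT * 60 / TRIALS_PER_PARTICIPANT

# Total recording time across all participants (about 4 hours).
total_hours = N_PARTICIPANTS * MINUTES_PER_PARTICIPANT / 60

# Gaze samples per participant at this rate (about 1.44 million).
samples_per_participant = MINUTES_PER_PARTICIPANT * 60 * SAMPLING_RATE_HZ

print(round(samples_per_frame, 2), seconds_per_trial, total_hours, samples_per_participant)
```

So each screen frame is covered by roughly seven gaze samples, and each participant contributes on the order of a million samples.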

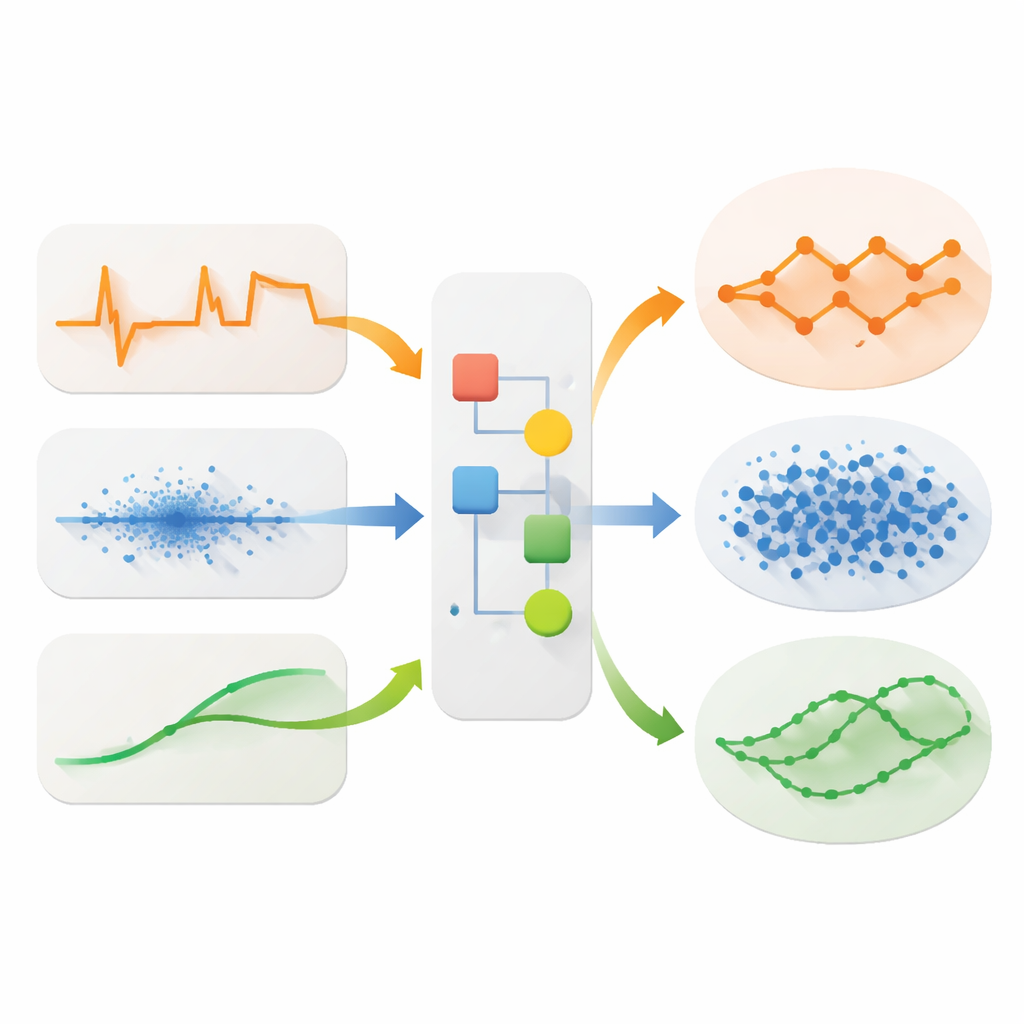

From Raw Traces to Useful Labels

Instead of asking humans to label every moment of data by hand, the authors used the structure of their experiment to guide automatic labeling. First, they cleaned the raw files, removed blinks, and converted positions on the screen into visual angles that better reflect how the eye moves. Then, for each trial, they calculated how quickly the eye position changed over time and derived a cutoff speed tailored to that trial. Movements slower than this cutoff were treated as “slow” events (fixations or pursuits, depending on the trial type), and faster bursts were treated as jumps. Very short events, shorter than about one hundredth of a second, were relabeled to avoid counting tiny glitches as meaningful eye movements. This produced what the authors call “plausible benchmark labels” for fixations, saccades, and smooth pursuits, grounded in both the experimental design and the measured speed of the eye.
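The general idea of speed-based labeling can be sketched in a few lines of Python with NumPy. This is a minimal illustration under stated assumptions, not the authors' companion package: all function names are hypothetical, the screen geometry (`px_per_cm`, `distance_cm`) is made up, the cutoff is simply passed in because this summary does not say how it is derived, and merging short events into the preceding event is just one possible way to relabel them.

```python
import numpy as np

def pixels_to_degrees(pos_px, px_per_cm, distance_cm):
    """Convert offsets from the screen center (pixels) to visual angle (degrees)."""
    pos_cm = np.asarray(pos_px, dtype=float) / px_per_cm
    return np.degrees(np.arctan2(pos_cm, distance_cm))

def gaze_speed(x_deg, y_deg, sampling_rate_hz):
    """Gaze speed in degrees per second from sample-to-sample differences."""
    vx = np.gradient(np.asarray(x_deg, dtype=float)) * sampling_rate_hz
    vy = np.gradient(np.asarray(y_deg, dtype=float)) * sampling_rate_hz
    return np.hypot(vx, vy)

def label_samples(speed, cutoff, slow_label):
    """Below the cutoff: the trial's slow event type; otherwise: saccade.

    `slow_label` is "fixation" or "pursuit", chosen from the trial type.
    `cutoff` is an assumed input; how the authors derive it is not shown here.
    """
    return np.where(np.asarray(speed) < cutoff, slow_label, "saccade")

def relabel_short_events(labels, min_samples):
    """Merge runs shorter than `min_samples` into the preceding run (an assumption)."""
    out = np.array(labels).copy()
    change = np.flatnonzero(out[1:] != out[:-1]) + 1   # indices where the label changes
    starts = np.concatenate(([0], change))
    ends = np.concatenate((change, [len(out)]))
    for start, end in zip(starts, ends):
        if end - start < min_samples and start > 0:
            out[start:end] = out[start - 1]
    return out
```

At 1,000 samples per second, a minimum duration of one hundredth of a second corresponds to `min_samples=10`. Because the trial type already determines whether slow samples are fixations or pursuits, the speed cutoff only has to separate slow movement from fast jumps.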

Tools for the Research Community

To make the dataset widely usable, the authors placed all files on an open online platform and released companion software in Python. Researchers can download the raw recordings, clean versions, information about each participant, and the exact target paths. The companion package includes ready-made functions to download, preprocess, and label the data, as well as plotting tools to visualize trials. Because the experiment code is also available, other labs can recreate the same task and extend the dataset, or explore new ways of incorporating information about where the target should be into their algorithms.

What This Means for Future Eye-Tracking

For a lay reader, the key message is that this work provides a clean testing ground for teaching computers to recognize different kinds of eye movements, especially the subtle act of smoothly tracking motion. By preventing the most easily confused movements from overlapping in the same trial, and by relying on clear speed differences instead of fallible human judgments, the authors offer a solid reference set that others can build on. Over time, better algorithms trained on such data could make eye-tracking a more reliable tool in psychology, neuroscience, and medical diagnostics, helping clinicians and researchers see more clearly how our eyes reflect the workings of the brain.

Citation: Korthals, L., Visser, I. & Kucharský, Š. Eye movement benchmark data for smooth-pursuit classification. Sci Data 13, 375 (2026). https://doi.org/10.1038/s41597-026-06963-4

Keywords: eye tracking, smooth pursuit, saccades, benchmark dataset, gaze classification