Clear Sky Science · en

Maternal-Fetal Ultrasound Video Dataset for End-to-end Intrapartum Biometry and Multi-task Learning

Why measuring birth progress matters

When a baby is being born, doctors and midwives must constantly judge how labor is progressing and whether mother and child are safe. Today, those judgments rely heavily on a doctor’s skill in reading blurry ultrasound images in real time. That takes years of training and can still be slow and subjective. This article presents a new public collection of short ultrasound videos taken during labor, carefully labeled by experts, to help researchers build artificial intelligence systems that can automatically track how far the baby’s head has descended. In the long run, such tools could support safer, more consistent decisions in delivery rooms worldwide.

A new window into labor in real time

The authors focus on a specific kind of scan called intrapartum ultrasound, taken while labor is actually underway. These scans are cheap, widely available, and have the potential to reduce deaths during childbirth, a period when almost half of all maternal and newborn deaths occur. Professional societies have issued detailed guidelines describing which views to capture and which measurements best reflect how the baby is moving through the birth canal. Two of the most important are the angle of progression and the head–symphysis distance, which together describe how far and how fast the baby’s head is advancing. Until now, however, there has been no large public video dataset that shows these views during labor and links them to the measurements that doctors care about.

From raw videos to richly labeled data

To close this gap, the team assembled ultrasound recordings from 774 women in labor, all carrying a single baby in a head‑down position at or beyond full term. The scans came from three major hospitals and three different ultrasound machines, making the data more representative of real‑world practice. Each short clip lasts about two seconds and consists of dozens of frames showing the baby’s head and the mother’s pelvic bone from the side. The researchers converted all videos to a common size, removed any identifying information such as names or dates, and standardized the images so that the physical scale is preserved across devices. This careful preparation means the collection can serve as a fair test bed for new computer programs.
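As a rough illustration of what "preserving physical scale" involves, the sketch below resizes a frame to fit a common canvas without distortion and updates the millimeters-per-pixel value to match. The function name, the 256 × 256 target, and the spacing values are assumptions made for this example; the article does not describe the authors' actual preprocessing code.

```python
def fit_to_canvas(orig_size, target_size, mm_per_pixel):
    """Return (new_size, new_mm_per_pixel) for an aspect-preserving resize.

    orig_size and target_size are (width, height) in pixels.
    All names and values here are hypothetical, for illustration only.
    """
    ow, oh = orig_size
    tw, th = target_size
    # One scale factor for both axes, so anatomy is not stretched.
    scale = min(tw / ow, th / oh)
    new_size = (round(ow * scale), round(oh * scale))
    # Shrinking the image means each pixel now covers more tissue.
    new_spacing = mm_per_pixel / scale
    return new_size, new_spacing


# Hypothetical 800 x 600 frame at 0.2 mm per pixel, fitted into 256 x 256.
size, spacing = fit_to_canvas((800, 600), (256, 256), 0.2)
print(size, spacing)
```

Keeping the updated spacing alongside each clip is what lets a distance measured in pixels be converted back to millimeters, whichever machine produced the scan.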

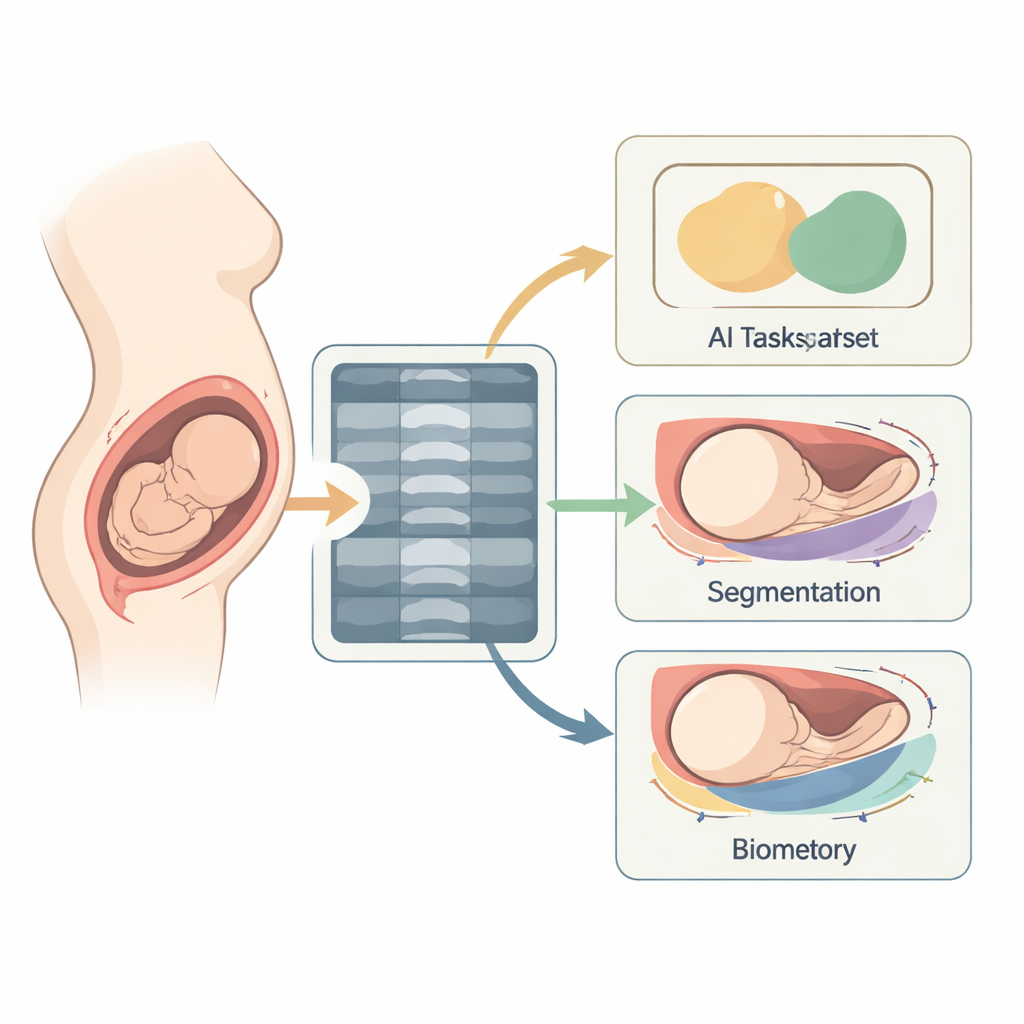

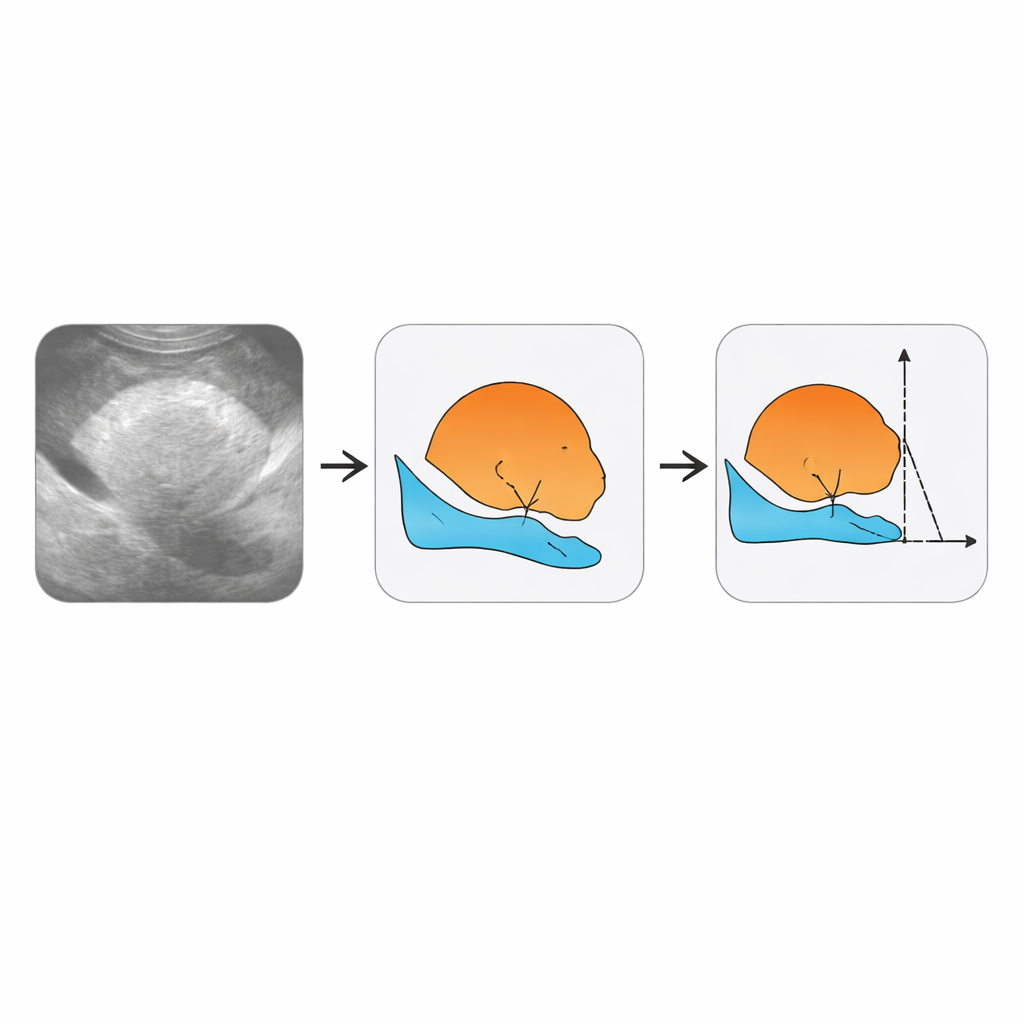

How experts taught the computer what to see

Creating useful training data required much more than saving video files. Experienced ultrasound specialists examined the clips frame by frame. For selected frames, they marked out the outline of the baby’s head and the mother’s pubic bone, creating colored masks that show where each structure lies. They also pinpointed key landmarks along these outlines—four special points that can be used to reconstruct the angle of progression and the distance from the pubic bone to the baby’s head. In addition, they labeled entire videos according to several yes‑or‑no clinical questions, turning each clip into a compact summary of what an automated system should conclude. The authors organized all of this information into clear folders, tables, and coordinate files so that others can easily plug it into their own algorithms.
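To make the link between landmarks and measurements concrete, here is a minimal sketch of how the two quantities could be computed from point coordinates. It assumes, as is common in clinical descriptions, that two landmarks mark the upper and lower ends of the pubic bone, one marks where a line from the lower end touches the baby's skull, and one marks the skull point used for the distance. The article does not say exactly which four points the dataset stores, so the point names, coordinates, and pixel spacing below are hypothetical.

```python
import math


def angle_of_progression(sym_upper, sym_lower, head_tangent):
    """Angle in degrees, measured at sym_lower, between the pubic bone's
    long axis and the line to the tangent point on the fetal skull.
    Points are (x, y) pixel coordinates; the roles are assumed."""
    ax, ay = sym_upper[0] - sym_lower[0], sym_upper[1] - sym_lower[1]
    tx, ty = head_tangent[0] - sym_lower[0], head_tangent[1] - sym_lower[1]
    dot = ax * tx + ay * ty
    cross = ax * ty - ay * tx
    # atan2 of |cross| and dot gives the unsigned angle in [0, 180].
    return math.degrees(math.atan2(abs(cross), dot))


def head_symphysis_distance(sym_lower, head_point, mm_per_pixel):
    """Straight-line distance in millimeters from the lower end of the
    pubic bone to the chosen skull point."""
    return math.dist(sym_lower, head_point) * mm_per_pixel


# Hypothetical landmark coordinates in pixels and an assumed spacing.
upper, lower = (100, 40), (100, 90)
tangent, skull = (160, 150), (130, 130)
print(angle_of_progression(upper, lower, tangent))
print(head_symphysis_distance(lower, skull, mm_per_pixel=0.5))
```

The angle is unsigned, so it does not depend on whether the probe image is mirrored left to right. The distance only makes sense once the pixel spacing of the frame is known.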

Checking that human labels are trustworthy

Because computer models can only be as reliable as the examples they learn from, the team devoted substantial effort to testing how consistently different experts labeled the same videos. Three annotators from the participating hospitals independently reviewed a shared set of 150 videos. The researchers then compared each person’s work with a combined “consensus” standard. For broad decisions—such as whether a frame showed the right view—the agreement was very high. For drawing the outline of the pubic bone, consistency was also strong. Segmenting the baby’s head and deriving exact angle and distance measurements proved more challenging, reflecting the inherent difficulty of tracing faint, shadowed edges in noisy ultrasound images. Even so, the level of agreement was good enough to support meaningful training and testing of new methods.
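One common way to run this kind of comparison on outlines is to build the consensus by pixel-wise majority vote and score each annotator with the Dice overlap, which ranges from 0 (no overlap) to 1 (identical). The sketch below illustrates that idea on tiny toy masks. Both the voting rule and the choice of Dice are assumptions made for this example, since the article does not state which methods the authors used.

```python
def majority_vote(masks):
    """Pixel-wise majority vote over binary masks (lists of rows of 0/1)."""
    n = len(masks)
    rows, cols = len(masks[0]), len(masks[0][0])
    return [
        [1 if 2 * sum(m[r][c] for m in masks) > n else 0 for c in range(cols)]
        for r in range(rows)
    ]


def dice(mask_a, mask_b):
    """Dice overlap between two binary masks of the same shape."""
    overlap = sum(
        a * b for row_a, row_b in zip(mask_a, mask_b) for a, b in zip(row_a, row_b)
    )
    total = sum(map(sum, mask_a)) + sum(map(sum, mask_b))
    # Two empty masks agree perfectly by convention.
    return 1.0 if total == 0 else 2 * overlap / total


# Toy 2 x 2 masks standing in for three annotators' outlines.
annotators = [
    [[1, 1], [0, 0]],
    [[1, 0], [0, 0]],
    [[1, 1], [1, 0]],
]
consensus = majority_vote(annotators)
for mask in annotators:
    print(dice(mask, consensus))
```

With three annotators a majority vote never ties, which makes it a convenient rule for a panel of this size.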

A starting kit for smarter labor monitoring

To help others get started, the authors provide a simple example computer model that first highlights the baby’s head and the mother’s pelvic bone in each frame, and then uses those shapes to estimate the key measurements. Although this baseline system is far from perfect, it shows how the dataset can support “end‑to‑end” approaches that go straight from raw video to clinically relevant numbers. The authors also discuss current limitations, such as the difficulty of handling especially poor‑quality images and the fact that even experts disagree somewhat on where exactly the baby’s head ends. By making the videos and labels freely available, they invite the wider research community to tackle these challenges, with the ultimate goal of more objective, accessible tools to guide decisions during childbirth.

Citation: Niu, M., Bai, J., Gao, Y. et al. Maternal-Fetal Ultrasound Video Dataset for End-to-end Intrapartum Biometry and Multi-task Learning. Sci Data 13, 327 (2026). https://doi.org/10.1038/s41597-026-06900-5

Keywords: intrapartum ultrasound, labor monitoring, fetal head descent, medical imaging AI, clinical video dataset