Clear Sky Science

A Time-Synchronized Multi-Sensor drone dataset acquired from multiple radars and RF receiver

Why watching the skies matters

Drones have quickly moved from toys and film tools to vital machines for delivery, inspection, farming, and more. But the same small aircraft that help us can also be misused for spying, smuggling, or even attacks. Stopping dangerous drones is hard because they are tiny, fast, and often fly in cluttered real-world scenes. This article introduces a new open dataset that helps scientists and engineers build smarter systems to spot, track, and identify drones using their invisible radio fingerprints rather than just what they look or sound like.

Listening to drones with invisible waves

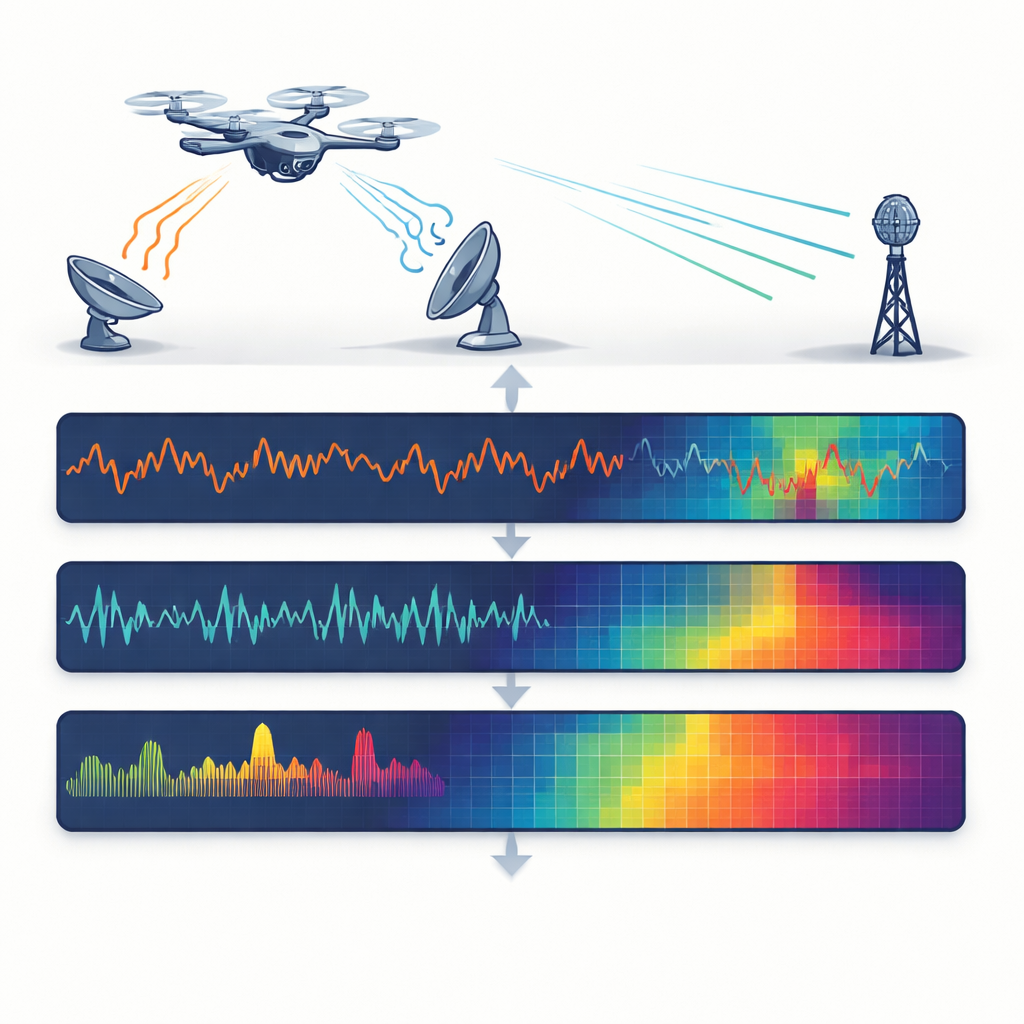

Instead of relying on cameras or microphones, the researchers focus on radio waves, which work day or night and in fog, rain, or glare. They use three different radio-based sensors at once: a continuous-wave (CW) radar that sends a steady tone to sense motion, a frequency-modulated continuous-wave (FMCW) radar that sweeps its frequency to measure both distance and speed, and a radio-frequency (RF) receiver that simply listens for the drone’s own control and video signals. Each sensor sees the drone in a different way (through tiny vibrations of its spinning rotor blades, its changing distance from the sensor, or the structure of its wireless link), much like combining sight, hearing, and touch into a fuller picture.

Building a carefully controlled test range

To create trustworthy data, the team flew four popular commercial drones and placed a simple metal corner reflector as a non-drone reference in an open field free of large buildings. All targets hovered at the same height and faced a cluster of sensors mounted together on tripods, so each device viewed the scene from almost exactly the same angle. The drones were measured at distances from 2 to 30 meters in 2-meter steps, with 500 repeated recordings for every combination of drone type, distance, and sensor. This careful design makes it possible to study how detection changes as a drone moves farther away, and to compare different models that vary in size, weight, and construction.
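To get a feel for the size of this measurement grid, the short sketch below enumerates it. The drone and sensor labels are placeholders invented for illustration (the article names no models or file labels), and the count covers the four drones only, since the article does not say whether the corner reflector was recorded on the same grid.

```python
from itertools import product

# Placeholder labels: the article does not name drone models or sensor tags.
DRONES = ["drone_A", "drone_B", "drone_C", "drone_D"]
SENSORS = ["cw_radar", "fmcw_radar", "rf_receiver"]
DISTANCES_M = list(range(2, 31, 2))  # 2, 4, ..., 30 metres
REPEATS = 500                        # recordings per combination


def recording_plan():
    """Yield one (drone, distance_m, sensor, repeat) tuple per recording."""
    for drone, dist, sensor in product(DRONES, DISTANCES_M, SENSORS):
        for rep in range(REPEATS):
            yield drone, dist, sensor, rep


n_distances = len(DISTANCES_M)
per_sensor = len(DRONES) * n_distances * REPEATS
total = per_sensor * len(SENSORS)
print(n_distances, per_sensor, total)  # prints: 15 30000 90000
```

Under these assumptions, each sensor contributes 30,000 drone recordings spread over 15 distances.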

Making different sensors breathe in unison

A key strength of the dataset is that the three sensors are time-synchronized in software. All devices are driven by a single control program that triggers them together and saves their outputs in lockstep. Each recording from one sensor has a matching partner from the others, aligned by a shared index rather than by complex hardware clocks. For the two radars, the system captures either raw signals or processed maps that show how reflected energy spreads over distance and speed. For the radio receiver, it stores the raw communication signal. This shared timing lets researchers directly fuse information across sensors—correlating a flicker in rotor motion with a burst in the control link, for example—without struggling to line things up afterward.
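Index-based alignment is simple to work with in practice. The sketch below groups recordings by a shared numeric index and keeps only the indices for which all three sensors have a file. The naming pattern `<sensor>_<index>.npy` and the sensor tags `cw`, `fmcw`, and `rf` are assumptions made for illustration, not the dataset's documented layout.

```python
from collections import defaultdict


def group_by_index(filenames):
    """Group names such as 'cw_0007.npy' by their trailing numeric index."""
    groups = defaultdict(dict)
    for name in filenames:
        stem = name.rsplit(".", 1)[0]        # drop the file extension
        sensor, idx = stem.rsplit("_", 1)    # split the sensor tag from the index
        groups[int(idx)][sensor] = name
    return dict(groups)


def complete_triples(groups, sensors=("cw", "fmcw", "rf")):
    """Keep only indices that have a recording from every listed sensor."""
    return {i: g for i, g in sorted(groups.items())
            if all(s in g for s in sensors)}


# Hypothetical file listing: index 1 lacks an FMCW recording and is dropped.
files = ["cw_0000.npy", "fmcw_0000.npy", "rf_0000.npy",
         "cw_0001.npy", "rf_0001.npy"]
triples = complete_triples(group_by_index(files))
```

Because pairing relies only on the index, no timestamp interpolation or clock-drift correction is needed at load time.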

From raw waves to machine-ready pictures

Because modern detection tools often rely on deep learning, the authors also convert the raw measurements into image-like views that computers can easily digest. For the steady-tone radar, they extract the frequency patterns produced by the micro-motions of spinning propellers, known as micro-Doppler signatures, and represent them as simple spectra. For the sweeping radar, they produce colorful distance–speed images that highlight where and how the drone is moving, after trimming away background clutter. For the radio receiver, they compute how power is spread across frequencies, creating fingerprints of each drone’s communication style. Every raw file has a matching image file, so scientists can choose whether to work at signal level or plug directly into standard image-based neural networks.
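Two of these conversions can be illustrated with textbook Fourier processing. The sketch below is a generic version, not the authors' pipeline: it assumes a sweeping-radar frame stored as a complex array of shape (sweeps, samples per sweep), uses Hann windows, and omits the clutter-trimming step mentioned above. All function names and array sizes are illustrative.

```python
import numpy as np


def range_doppler_map(frame):
    """Distance-speed map in dB from a (n_sweeps, n_samples) complex frame."""
    n_sweeps, n_samples = frame.shape
    window = np.hanning(n_sweeps)[:, None] * np.hanning(n_samples)[None, :]
    rng = np.fft.fft(frame * window, axis=1)   # within a sweep -> distance bins
    dop = np.fft.fft(rng, axis=0)              # across sweeps  -> speed bins
    dop = np.fft.fftshift(dop, axes=0)         # put zero speed in the middle row
    return 20.0 * np.log10(np.abs(dop) + 1e-12)


def power_spectrum(samples):
    """Power per frequency bin of a 1-D signal, zero frequency centred."""
    spec = np.fft.fftshift(np.fft.fft(samples))
    return np.abs(spec) ** 2 / len(samples)


# Synthetic check: one ideal target at distance bin 20 and speed bin +5.
M, N = 64, 128
m = np.arange(M)[:, None]
n = np.arange(N)[None, :]
frame = np.exp(2j * np.pi * (20 * n / N + 5 * m / M))
rd_db = range_doppler_map(frame)
peak_row, peak_col = np.unravel_index(np.argmax(rd_db), rd_db.shape)

# Synthetic check: a pure tone at bin 10 of 256 samples.
tone = np.exp(2j * np.pi * 10 * np.arange(256) / 256)
psd = power_spectrum(tone)
```

In the first check the brightest pixel lands at column 20 and row 37 (speed bin 5 offset by the centring shift of 32), which is the behaviour a distance-speed image relies on. Saving such arrays as images would then give the machine-ready views described above.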

Proving that more eyes are better than one

To show that the dataset is not just neat but useful, the team trains a well-known image-recognition network separately on each sensor’s images and then on fused combinations of all three. As expected, the radars struggle more as the drone moves farther away: the reflected signals weaken, and classification accuracy drops with distance. The radio receiver holds up better with range, but some drones share almost identical communication bands and are hard to tell apart with that sensor alone. When the researchers merge the three views into single composite inputs, performance improves across the board, especially for smaller, harder-to-detect drones. This demonstrates that synchronized multi-sensor information can compensate for the blind spots of any one device.
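The article does not say how the three views are merged into a composite input. One plausible scheme, shown below purely as an assumption, rescales each sensor image to the 0-1 range, resizes it to a common grid, and stacks the three as the channels of a single image. The 224 x 224 target size and all function names are illustrative choices.

```python
import numpy as np


def to_unit_range(img):
    """Rescale an image linearly to [0, 1]; a constant image maps to zeros."""
    img = np.asarray(img, dtype=np.float32)
    lo, hi = img.min(), img.max()
    if hi <= lo:
        return np.zeros_like(img)
    return (img - lo) / (hi - lo)


def resize_nearest(img, shape):
    """Nearest-neighbour resize of a 2-D array to (rows, cols)."""
    rows = np.arange(shape[0]) * img.shape[0] // shape[0]
    cols = np.arange(shape[1]) * img.shape[1] // shape[1]
    return img[rows][:, cols]


def fuse_as_channels(cw_img, fmcw_img, rf_img, shape=(224, 224)):
    """Stack three single-channel sensor images into one (H, W, 3) array."""
    channels = [resize_nearest(to_unit_range(x), shape)
                for x in (cw_img, fmcw_img, rf_img)]
    return np.stack(channels, axis=-1)


# Random stand-ins for the three sensor images, each with a different size.
gen = np.random.default_rng(0)
fused = fuse_as_channels(gen.normal(size=(64, 256)),
                         gen.normal(size=(64, 128)),
                         gen.normal(size=(100, 300)))
```

Stacking as channels keeps the composite compatible with standard three-channel image networks. Tiling the images side by side would be an equally reasonable alternative.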

What this means for safer airspace

In plain terms, the authors have built a detailed, public “training ground” where smart algorithms can learn to recognize drones using multiple kinds of radio eyes at once. By releasing both raw signals and ready-to-use images, along with example code, they lower the barrier for others to design detection systems that work reliably in changing conditions and at different distances. Over time, tools built on this dataset could help airports, critical facilities, and city authorities better distinguish friendly drones from suspicious ones, making low-altitude airspace safer without depending solely on cameras or human spotters.

Citation: Han, SK., Jung, YH. A Time-Synchronized Multi-Sensor drone dataset acquired from multiple radars and RF receiver. Sci Data 13, 407 (2026). https://doi.org/10.1038/s41597-026-06802-6

Keywords: drone detection, radar sensing, radio frequency signals, sensor fusion, open dataset