Clear Sky Science

Comprehensive dataset of features describing eye-gaze dynamics across multiple tasks

How Our Eyes Reveal What We Pay Attention To

Every glance you make—from skimming this page to hunting for a friend in a crowd—leaves a hidden trail in the tiny jumps and pauses of your eyes. This article presents a large, carefully prepared collection of those trails from hundreds of volunteers. By sharing this dataset openly, the researchers give scientists, engineers, and even students a powerful way to probe how we see, focus, and search, and to build future tools like better user interfaces or assistive technologies for people who cannot easily communicate.

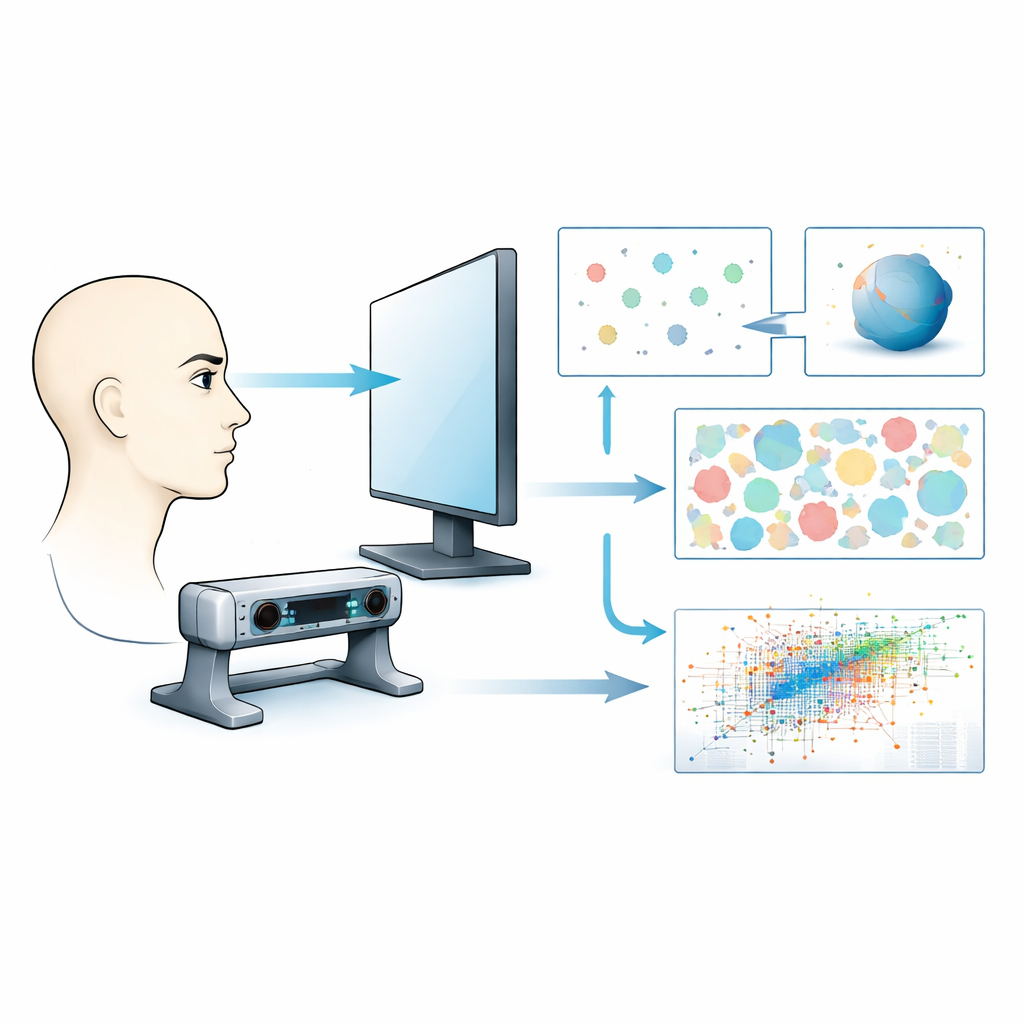

Watching Eyes As They Watch The World

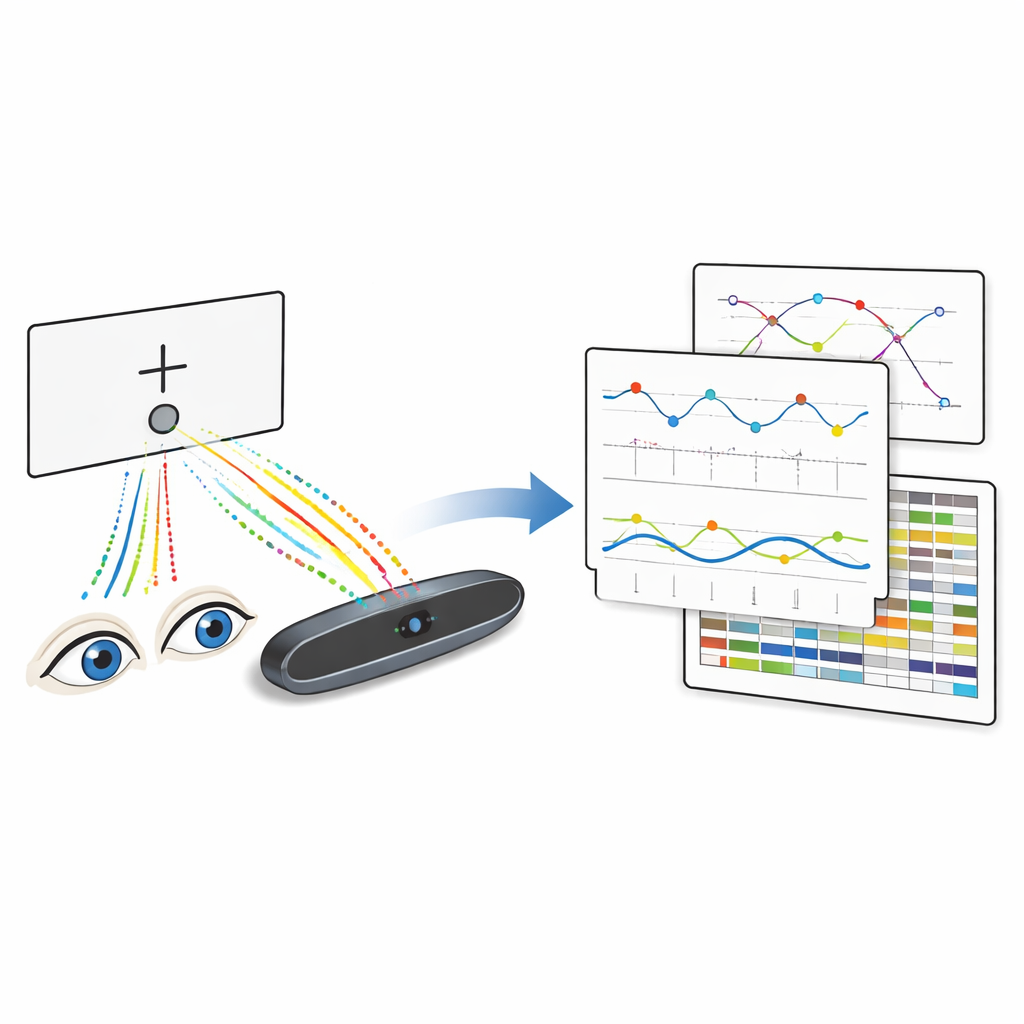

The team recorded eye movements from 251 participants as they completed a range of simple screen-based tasks. Using a high-speed eye-tracker and a gaming monitor, they sampled where each person looked, how their pupils expanded or shrank, and when they blinked, taking thousands of measurements per second. These raw signals were then turned into clean, organized tables that mark each moment as a steady look (a fixation), a rapid jump (a saccade), or a blink. Because the data are anonymized and handled in line with strict Norwegian ethical rules, they can be safely shared with the wider research community.

Short Flashes, Quick Cues, And Tricky Flicker

Several tasks probed what people can notice when things change very quickly on the screen. In the “vanishing saccade” task, a tiny white circle flashed for only a few thousandths of a second to the left or right of a central cross, then disappeared before the eyes could move. Participants had to guess where—if anywhere—it had appeared. By comparing accuracy at different flash durations, the dataset captures how detection falls off as the signal becomes almost too brief for awareness. A related “cued saccade” task asked whether a faint, very short cue could prime people to react faster to a later target appearing in the same place, even when the cue was so quick it might not be consciously noticed. Another “flickering cross” task examined the point at which a rapidly blinking cross stops looking like it flickers at all and instead appears steady, revealing the limits of our visual system’s timing.
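To make the idea of comparing accuracy across flash durations concrete, here is a minimal Python sketch. The trial records, the durations (2, 4, and 8 ms), and the function name are all made up for illustration; they are not values or code from the dataset.

```python
from collections import defaultdict

# Hypothetical trial records: (flash duration in ms, was the response correct?).
# These numbers are invented for illustration and are not taken from the dataset.
TRIALS = [
    (2, False), (2, True), (2, False), (2, False),
    (4, True), (4, False), (4, True), (4, False),
    (8, True), (8, True), (8, True), (8, False),
]

def accuracy_by_duration(trials):
    """Return the proportion of correct responses for each flash duration."""
    counts = defaultdict(lambda: [0, 0])  # duration -> [n_correct, n_trials]
    for duration, correct in trials:
        counts[duration][0] += int(correct)
        counts[duration][1] += 1
    # Sort by duration so the result reads from shortest to longest flash.
    return {d: c / n for d, (c, n) in sorted(counts.items())}

accuracy = accuracy_by_duration(TRIALS)
for duration, proportion in accuracy.items():
    print(f"{duration} ms flash: {proportion:.0%} correct")
```

With real trials, a curve like this would show where detection starts to drop toward guessing as the flash gets shorter.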

When Motion And Meaning Confuse The Brain

Other tasks played with how we interpret complex or ambiguous scenes. In the “rotating ball” task, a ring of dots near the center cross could be seen as spinning one way or the other, even though the physical stimulus never changed. Participants first reported the direction they saw, then tried to will it to reverse while keeping their eyes fixed. Their success or failure, captured in the eye data, sheds light on how the brain can flip between different interpretations of the same input. The dataset also includes free viewing of random colored pixel patterns (images with no objects or story), where gaze is driven mainly by raw contrast and color, and “Where’s Waldo?”-style crowded scenes, where viewers deliberately search for hidden characters and objects.

From Raw Signals To Ready-To-Use Tables

Behind the scenes, all recordings were converted from the eye-tracker’s proprietary format by an automated pipeline. This software turns the raw streams of numbers into standard comma-separated files, one per person and per task, with consistent names that encode key details of each trial. Each row in these tables gives the time, the left and right eye positions, the pupil sizes, and a simple code for what the eyes were doing: blink, fixation, or saccade. Additional message markers indicate when a trial started, when a stimulus appeared, or when an image was removed. The authors also checked calibration quality to confirm that gaze points landed within a small fraction of a degree of their intended targets, giving users confidence that the positions are accurate enough for fine-grained analysis.
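As a rough illustration of how such a table might be used, the Python sketch below groups consecutive rows that share an event code into single events with a start, an end, and a duration. The column names (`time_ms`, `event`, and so on), the event labels, the sample values, and the 1 ms sampling interval are assumptions made for this example; the dataset's actual schema may differ.

```python
import csv
import io
from itertools import groupby

# Hypothetical excerpt of one per-participant, per-task table.
# Column names, labels, and values are assumptions, not the dataset's real schema.
SAMPLE_CSV = """time_ms,left_x,left_y,right_x,right_y,left_pupil,right_pupil,event
0,960.1,540.2,961.0,539.8,1201,1198,fixation
1,960.3,540.0,961.2,539.9,1202,1199,fixation
2,960.2,540.1,961.1,540.0,1202,1200,fixation
3,1010.5,541.0,1011.2,540.7,1203,1200,saccade
4,1090.8,542.3,1091.5,541.9,1203,1201,saccade
5,1150.0,543.0,1150.9,542.6,1204,1201,fixation
6,1150.2,543.1,1151.0,542.7,1204,1202,fixation
"""

def segment_events(rows, sample_interval_ms=1.0):
    """Merge runs of consecutive samples with the same event code into events."""
    events = []
    for label, group in groupby(rows, key=lambda row: row["event"]):
        samples = list(group)
        start = float(samples[0]["time_ms"])
        end = float(samples[-1]["time_ms"])
        events.append({
            "event": label,
            "start_ms": start,
            "end_ms": end,
            # Count the last sample's own interval so a one-sample event is not 0 ms.
            "duration_ms": end - start + sample_interval_ms,
            "n_samples": len(samples),
        })
    return events

rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
events = segment_events(rows)
for event in events:
    print(event["event"], event["start_ms"], event["duration_ms"])
```

To read a real file instead of the inline sample, the same function could be fed rows from `csv.DictReader(open(path, newline=""))`, after adjusting the column names to match the published tables.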

Why This Shared Eye Data Matters

To a non-specialist, this work may sound like a technical exercise in file conversion, but its impact is broader. The collection brings together high-quality, precisely timed eye movement records across many classic psychological tasks and more natural viewing situations. Because the data are open, standardized, and well documented, researchers can test new theories of attention, build machine-learning models that predict where people will look, or design gaze-controlled tools for users with limited mobility, without having to run large experiments themselves. In essence, the paper turns fleeting eye movements into a lasting public resource that helps us better understand how seeing, attention, and decision-making unfold from moment to moment.

Citation: Mathema, R., Nav, S.M., Bhandari, S. et al. Comprehensive dataset of features describing eye-gaze dynamics across multiple tasks. Sci Data 13, 376 (2026). https://doi.org/10.1038/s41597-026-06754-x

Keywords: eye tracking, visual attention, gaze dynamics, cognitive science, open dataset