Clear Sky Science · en

A benchmark dataset for text line segmentation in palm leaf documents

Saving Stories Written on Leaves

Palm leaf manuscripts are among the oldest surviving records of life, science, religion, and art in South and Southeast Asia. Many of these brittle leaves are now fading, cracking, and decaying with age, putting centuries of knowledge at risk. This paper presents LeafOCR-Line, a carefully built digital dataset that helps computers locate lines of writing on damaged palm leaves more accurately, supporting efforts to preserve and share this fragile heritage with the world.

Why Ancient Leaves Are Hard to Read

Reading a palm leaf manuscript is not as simple as scanning a modern printed page. The writing is often slanted, squeezed into tight spaces, or broken by punch holes traditionally used to bind the leaves. Age adds stains, fungal spots, tears, and fading ink. Some of these marks look confusingly similar to letters, while parts of real letters may be missing or barely visible. In languages like Malayalam, used for many of these texts, letters are full of loops and stacked marks that can overlap from one line to the next. For a computer vision system that tries to locate each line of writing, this messy, overlapped layout is especially challenging.

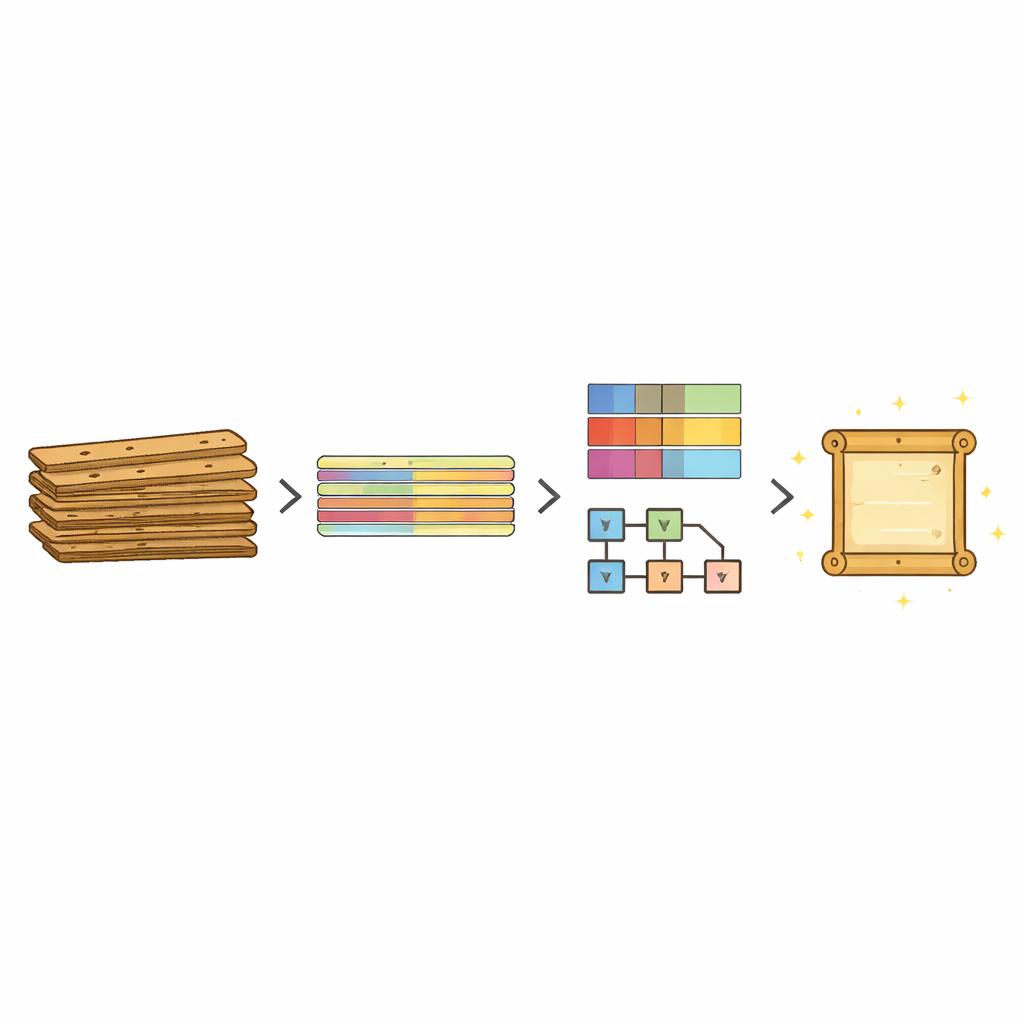

From Physical Leaves to a Digital Benchmark

The authors set out to create a large, realistic benchmark dataset focused on one crucial step in the digitization chain: separating each line of text from the background and from neighboring lines. They gathered 20 bundles of Malayalam palm leaf manuscripts from a public online collection, covering works written between roughly the years 1000 and 1800. After extracting nearly 3,000 page images and automatically cropping out the dark backgrounds, they worked with the leaf regions only. The cropped leaves vary widely in size. Each contains three to twelve lines of text and may include one or two punch holes, irregular spacing, and diverse handwriting styles that reflect different authors and time periods.

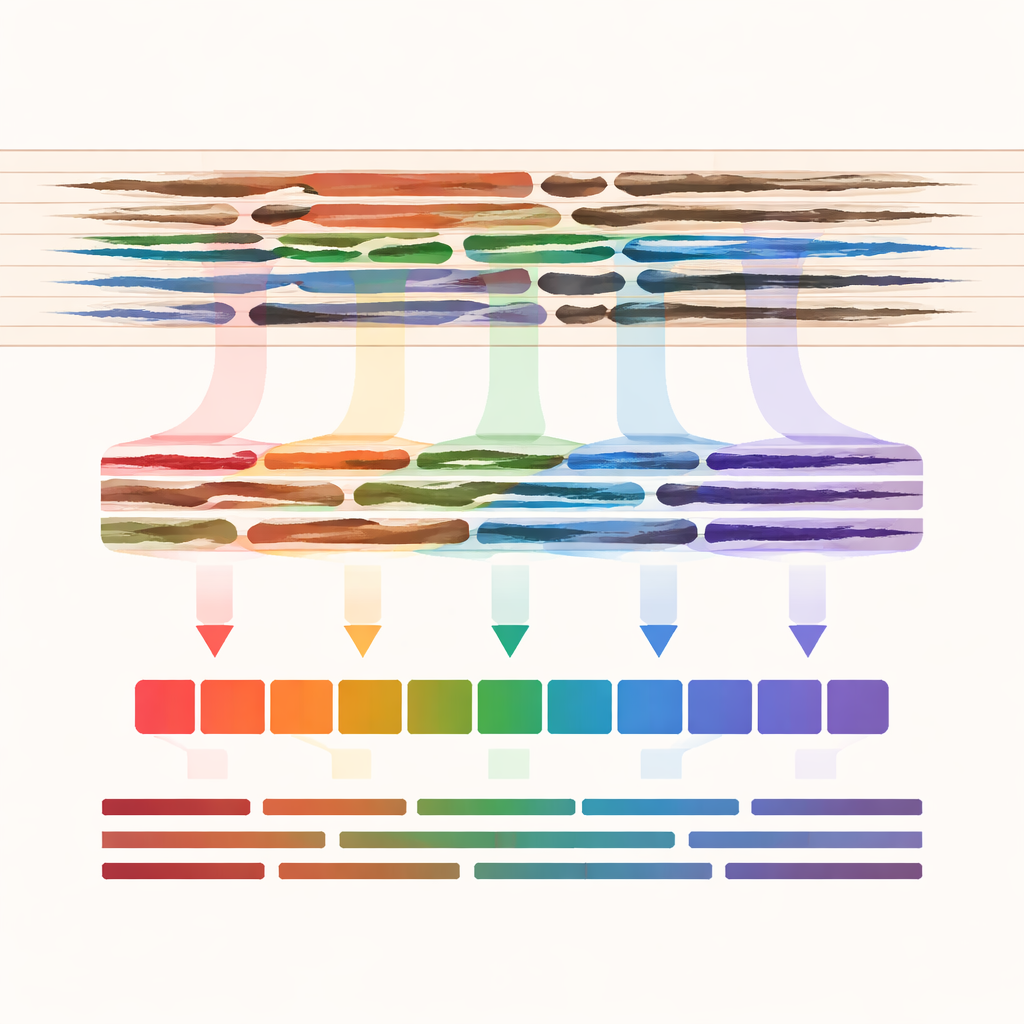

Sorting Damage and Tracing Each Line

Because different levels of damage demand different processing strategies, every image was assigned to one of three quality levels: less deteriorated, moderately deteriorated, or highly deteriorated. This grading drew on a prior, objective assessment method that analyzes visual clarity, contrast, and physical condition. The main innovation of LeafOCR-Line lies in how the lines of writing are marked. Rather than drawing simple rectangles, which often slice through letters that extend above or below a line, the team used flexible polygon outlines that closely follow the actual curved shape of each line.
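The difference between rectangle and polygon labels can be illustrated with a small sketch. It is not the authors' annotation tooling: the function names, the toy outline, and the 8 by 4 pixel grid are all hypothetical, chosen only to show that a polygon covers a slanted line far more tightly than its bounding rectangle.

```python
def point_in_polygon(x, y, polygon):
    """Even-odd ray casting: True if (x, y) lies inside the polygon."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does a horizontal ray starting at (x, y) cross this edge?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def polygon_mask(width, height, polygon):
    """Rasterize a polygon into a binary mask, sampling pixel centres."""
    return [
        [1 if point_in_polygon(col + 0.5, row + 0.5, polygon) else 0
         for col in range(width)]
        for row in range(height)
    ]


def bounding_box_mask(width, height, polygon):
    """Binary mask of the axis-aligned rectangle enclosing the polygon."""
    xs = [p[0] for p in polygon]
    ys = [p[1] for p in polygon]
    x0, x1, y0, y1 = min(xs), max(xs), min(ys), max(ys)
    return [
        [1 if x0 <= col + 0.5 <= x1 and y0 <= row + 0.5 <= y1 else 0
         for col in range(width)]
        for row in range(height)
    ]


# Hypothetical outline of one slanted text line (illustrative only).
line_outline = [(0, 0), (8, 2), (8, 4), (0, 2)]

poly = polygon_mask(8, 4, line_outline)
box = bounding_box_mask(8, 4, line_outline)
poly_area = sum(map(sum, poly))
box_area = sum(map(sum, box))
print(poly_area, box_area)
```

In this toy case the rectangle covers twice as many pixels as the polygon. On a real leaf, that extra area is where parts of neighboring lines would be swept into the label.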

What the Dataset Contains

In total, LeafOCR-Line provides 1,710 palm leaf images, each paired with a matching mask image that highlights its text lines. The collection is split into training, validation, and test subsets with similar proportions of the three quality levels: about half of the images are moderately deteriorated, while the rest are split roughly evenly between the less deteriorated and highly deteriorated levels. From these 1,710 leaves, researchers can extract more than 10,000 individual line images. Additional files record, for each image, its damage level and source manuscript, with links back to the original online repository. This structure makes it easier to compare methods fairly and to design systems that adapt to varying degrees of damage.
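A split that keeps the same mix of quality levels in every subset is usually called a stratified split. The sketch below shows one way to produce one. It is an assumption-laden illustration, not the authors' procedure: the 70/15/15 fractions, the `stratified_split` helper, and the toy records are all hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(records, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split (image_id, quality_level) records into train/val/test so that
    each subset keeps roughly the same mix of quality levels.
    The default fractions are illustrative, not taken from the paper."""
    by_level = defaultdict(list)
    for image_id, level in records:
        by_level[level].append(image_id)

    rng = random.Random(seed)
    splits = {"train": [], "val": [], "test": []}
    for level, ids in sorted(by_level.items()):
        rng.shuffle(ids)  # shuffle within each quality level
        n_train = round(len(ids) * fractions[0])
        n_val = round(len(ids) * fractions[1])
        splits["train"] += [(i, level) for i in ids[:n_train]]
        splits["val"] += [(i, level) for i in ids[n_train:n_train + n_val]]
        splits["test"] += [(i, level) for i in ids[n_train + n_val:]]
    return splits


# Toy records (made up): half "moderate", a quarter each "less" and "high".
records = (
    [(f"img_{k:03d}", "moderate") for k in range(40)]
    + [(f"img_{k:03d}", "less") for k in range(40, 60)]
    + [(f"img_{k:03d}", "high") for k in range(60, 80)]
)

splits = stratified_split(records)
for name, items in splits.items():
    share = sum(1 for _, lvl in items if lvl == "moderate") / len(items)
    print(name, len(items), f"moderate share = {share:.2f}")
```

Because the shuffle and the cut happen inside each quality level, every subset inherits the overall proportions, which is what makes per-level comparisons between methods meaningful.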

How Well Today’s Algorithms Cope

To show that the dataset is both challenging and useful, the authors trained and tested a broad set of modern image segmentation models, ranging from classic encoder–decoder networks to newer transformer-based designs. They measured how closely each model’s predicted line regions matched the human-made masks. All models could segment lines reasonably well, but one approach, called DeepLabV3, stood out. It was especially effective at capturing thin, curved lines and maintaining continuity even in heavily damaged leaves, though small errors remained where lines lay very close together. Other popular models such as U-Net and LinkNet also performed strongly but slightly less consistently on the worst cases, while some transformer-based and pyramid-style networks struggled with fine details.
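This summary does not name the scores used to compare predicted and human-made masks. Two common choices for segmentation are intersection over union (IoU) and the Dice coefficient, and the sketch below assumes those. The function name and the tiny masks are hypothetical.

```python
def iou_and_dice(pred, truth):
    """Compute IoU and Dice for two binary masks given as lists of 0/1 rows."""
    inter = union = pred_sum = truth_sum = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            inter += p & t      # pixel marked in both masks
            union += p | t      # pixel marked in at least one mask
            pred_sum += p
            truth_sum += t
    # Two empty masks agree perfectly, so score them as 1.0.
    iou = inter / union if union else 1.0
    total = pred_sum + truth_sum
    dice = 2 * inter / total if total else 1.0
    return iou, dice


# Toy masks (made up): the prediction misses one pixel of the line
# and leaks one pixel into the row below.
truth = [
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]
pred = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 1, 0],
]

iou, dice = iou_and_dice(pred, truth)
print(f"IoU = {iou:.2f}, Dice = {dice:.2f}")
```

Both scores fall when a prediction merges two closely spaced lines or breaks a thin one, which is why errors between tightly packed lines show up clearly in this kind of evaluation.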

From One Script to Many, and Why It Matters

Although LeafOCR-Line contains only Malayalam script, the shapes and layout of its letters resemble those of neighboring scripts such as Tamil, Tigalari, and Grantha. The authors demonstrated that a model trained on their dataset can segment lines from these related scripts as well, suggesting that the same data can support broader digitization efforts across several languages. For non-specialists, the main message is straightforward: LeafOCR-Line offers a robust, public foundation for building and testing algorithms that can “see” lines of text on damaged palm leaves. This, in turn, helps archivists, librarians, and communities turn fragile, fading strips of plant material into searchable, shareable digital archives that keep cultural memory alive for future generations.

Citation: Sivan, R., Pati, P.B. A benchmark dataset for text line segmentation in palm leaf documents. Sci Data 13, 424 (2026). https://doi.org/10.1038/s41597-026-06718-1

Keywords: palm leaf manuscripts, text line segmentation, document digitization, Malayalam script, heritage preservation