Clear Sky Science

A comprehensive EEG dataset for investigating visual touch perception

Why watching touch matters

Imagine feeling a twinge in your own hand when you see someone else being touched, or even hurt. Many of us have had this experience, and for some people it is so strong that it feels almost like real contact. This study introduces a rich new public dataset that lets scientists examine how the brain responds when we only see, but do not physically feel, touch. By making these brain recordings and questionnaires freely available, the authors hope to speed up research on empathy, social connection, and how screen-based interactions might partially replace real-life contact.

Looking at touch instead of feeling it

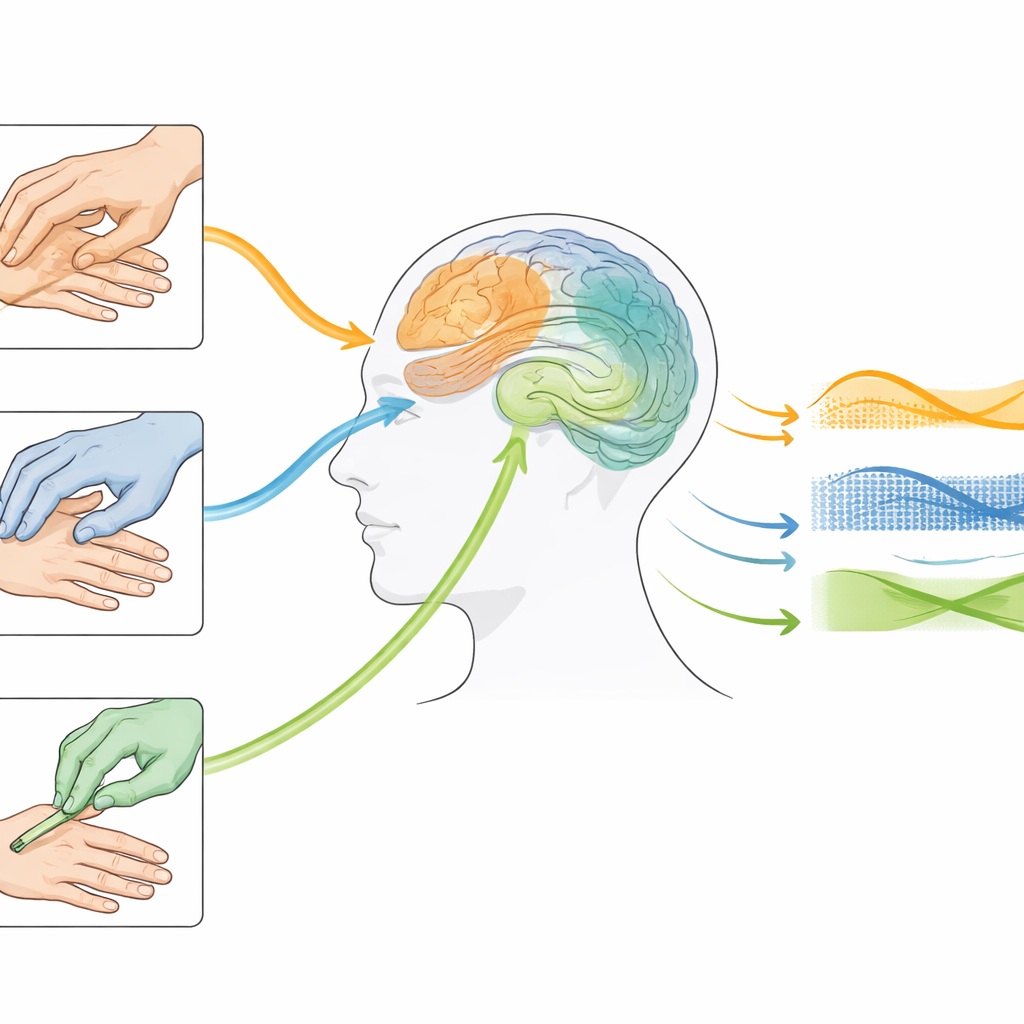

Touch is usually thought of as a skin-to-nerve experience, yet we constantly learn about touch by watching others. When you see a comforting pat on the shoulder or a sharp poke with a pointed object, your brain reacts even though your own skin is untouched. Earlier work suggested that watching touch first activates areas that handle visual scenes, and then engages regions that normally deal with real bodily sensations and feelings. However, most past studies were small, used very simple pictures, and rarely shared their data. This made it hard to capture the richness of everyday touch or to compare results across labs.

A large and detailed viewing experiment

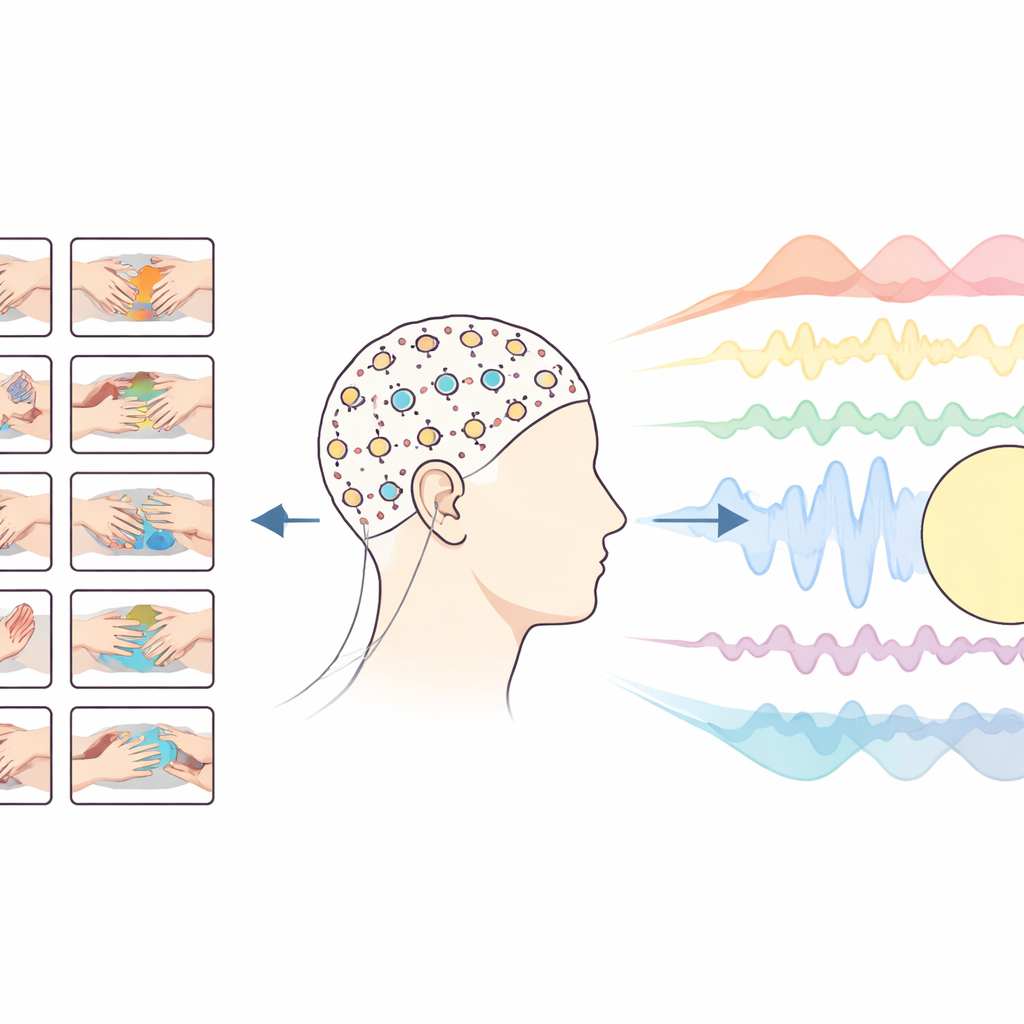

To close this gap, the authors recorded brain activity from 80 adults using electroencephalography (EEG), which measures tiny electrical signals at the scalp. Participants watched short, close-up videos of one hand touching another. Some clips showed direct skin contact, such as stroking or pressing; others involved simple objects, such as a brush, a hammer, or a piece of fabric, between the hands. The team carefully trimmed all videos to the same length and kept the appearance of the hands and background consistent, so that differences in brain activity would mainly reflect the type and feel of the touch rather than unrelated visual details.

Different angles on the same touch

Each of the 90 original touch videos was flipped to create four orientations, crossing which hand was touched (left or right) with whether the view looked more like one’s own hands (self-like) or someone else’s (other-like). This produced 360 distinct clips (90 × 4), and each participant saw every clip eight times, for a total of 2,880 trials (360 × 8) in a session lasting under an hour. Brief pauses between videos gave the full brain response to each clip time to unfold. To keep participants alert, special target clips showed a hand touching a simple white block instead of another hand, and volunteers silently counted how often these targets appeared. Their high counting accuracy indicated that they paid close attention throughout the task.
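The design arithmetic can be checked with a short Python sketch. It builds one entry per (video, touched hand, viewpoint) combination and repeats the full set eight times. The per-repeat shuffling, the names `build_clip_list` and `build_trial_sequence`, and the omission of the white-block target trials are illustrative assumptions, not the authors' actual presentation code. The last step uses "under an hour" only as an upper bound on the average time per trial.

```python
import random

# Design numbers as reported in the summary.
N_SOURCE_VIDEOS = 90
REPEATS = 8
SESSION_LIMIT_S = 60 * 60  # "under an hour", used only as an upper bound

# The four orientations: 2 touched hands x 2 viewpoints.
HANDS = ("left", "right")
VIEWPOINTS = ("self-like", "other-like")


def build_clip_list(n_videos=N_SOURCE_VIDEOS):
    """One entry per distinct clip: every (video, hand, viewpoint) combination."""
    return [(v, h, p) for v in range(n_videos) for h in HANDS for p in VIEWPOINTS]


def build_trial_sequence(clips, repeats=REPEATS, seed=0):
    """Hypothetical ordering: each repeat is a fresh shuffle of all clips.

    Target (white-block) trials are not modelled here.
    """
    rng = random.Random(seed)
    trials = []
    for _ in range(repeats):
        block = list(clips)
        rng.shuffle(block)
        trials.extend(block)
    return trials


clips = build_clip_list()
trials = build_trial_sequence(clips)
# Upper bound on the average time per trial (video plus pause) implied by the session length.
max_seconds_per_trial = SESSION_LIMIT_S / len(trials)

print(len(clips), len(trials), max_seconds_per_trial)  # prints: 360 2880 1.25
```

The bound shows how tight the schedule is: 2,880 trials in under 3,600 seconds leaves at most 1.25 seconds per trial on average, including the pause.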

Brain signals and personal traits

The researchers did not just record brain waves; they also gathered self-report measures that capture how people differ in social and sensory experiences. Participants completed short questionnaires about empathy, their tendency to see things from others’ points of view, and how strongly they feel touch when only watching it. One survey focused on “mirror-touch synaesthesia,” a rare but striking trait in which seeing someone else touched can trigger a clear, localized sensation on one’s own body. Together, these brain and questionnaire data let future researchers test whether people who are more empathic or more sensitive to vicarious touch show distinct neural signatures when they view the same videos.

What the first analyses reveal

As a check of data quality, the team applied multivariate pattern-analysis (decoding) methods to the EEG signals. They asked whether a computer classifier, looking only at the brain activity, could tell what kind of touch was being viewed. The EEG signals distinguished very quickly whether the touch was seen from a self-like or other-like angle, with differences emerging about a tenth of a second (roughly 100 milliseconds) after the video started. Slightly later, at around three-tenths of a second (roughly 300 milliseconds), the signals also carried information about the material involved and about whether the clip looked pleasant or unpleasant. These timing patterns suggest that the brain quickly sorts out whose body is being touched and then refines the sensory and emotional details.
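To make the decoding idea concrete, here is a minimal Python sketch of time-resolved decoding on simulated data, not the real recordings. A simple nearest-class-mean classifier is trained separately at each time point and scored with 5-fold cross-validation. Accuracy near 50% means the signal carries no information about the condition, and higher accuracy means it does. The channel count, number of time points, effect size, onset index, and choice of classifier are all assumptions for illustration. The authors' actual pipeline, including classifier type and cross-validation scheme, may differ.

```python
import random
from statistics import mean


def simulate_epochs(n_per_class=40, n_channels=8, n_times=6, onset=2, effect=1.0, seed=0):
    """Simulated EEG (assumption): epochs[i][t][c] is trial i, time point t, channel c.

    Class 1 (e.g. "other-like") differs from class 0 ("self-like") only from
    time index `onset` onward; the index is arbitrary, not a real latency.
    """
    rng = random.Random(seed)
    epochs, labels = [], []
    for label in (0, 1):
        for _ in range(n_per_class):
            trial = []
            for t in range(n_times):
                shift = effect if (label == 1 and t >= onset) else 0.0
                trial.append([rng.gauss(shift, 1.0) for _ in range(n_channels)])
            epochs.append(trial)
            labels.append(label)
    return epochs, labels


def centroid(vectors):
    """Channel-wise mean of a list of equal-length vectors."""
    return [mean(column) for column in zip(*vectors)]


def sq_dist(a, b):
    """Squared Euclidean distance between two channel patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def decode_timecourse(epochs, labels, n_folds=5):
    """Cross-validated nearest-centroid accuracy at each time point."""
    n_trials, n_times = len(epochs), len(epochs[0])
    classes = sorted(set(labels))
    # Interleaved folds: trial i goes to fold i % n_folds, so each fold has both classes.
    folds = [list(range(k, n_trials, n_folds)) for k in range(n_folds)]
    accuracies = []
    for t in range(n_times):
        correct = 0
        for test_idx in folds:
            held_out = set(test_idx)
            train = [i for i in range(n_trials) if i not in held_out]
            # Fit: one mean channel pattern per class, from training trials only.
            cents = {c: centroid([epochs[i][t] for i in train if labels[i] == c])
                     for c in classes}
            # Predict: assign each held-out trial to the nearest class mean.
            for i in test_idx:
                pred = min(classes, key=lambda c: sq_dist(epochs[i][t], cents[c]))
                correct += int(pred == labels[i])
        accuracies.append(correct / n_trials)
    return accuracies


epochs, labels = simulate_epochs()
for t, acc in enumerate(decode_timecourse(epochs, labels)):
    print(f"time index {t}: accuracy {acc:.2f}")
```

In this simulation, accuracy should hover around chance before the onset index and rise well above it afterward. The time point at which the rise begins is the kind of latency the summary reports for real brain data. Fitting the class means only on training trials keeps the held-out trials unseen, which is what makes above-chance accuracy meaningful.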

A shared resource for studying social feeling

In plain terms, this work does not claim to explain how empathy or touch perception works; instead, it delivers a shared toolkit for others to use. The open dataset links carefully controlled touch videos, detailed ratings of how those videos feel, high-density brain recordings from many participants, and measures of social traits. Researchers can now ask fine-grained questions about how we sense others’ experiences through sight alone, how this varies from person to person, and how such processes might be strengthened or disrupted. In an age in which more of our social lives play out on screens, understanding how watching touch shapes connection and comfort could prove increasingly important.

Citation: Smit, S., Ramírez-Haro, A., Varlet, M. et al. A comprehensive EEG dataset for investigating visual touch perception. Sci Data 13, 381 (2026). https://doi.org/10.1038/s41597-026-06714-5

Keywords: visual touch, empathy, EEG dataset, vicarious sensation, social neuroscience