Clear Sky Science · en

A comprehensive IMU dataset for evaluating sensor layouts in human activity and intensity recognition

Why your fitness tracker cares where it sits

Fitness watches and step counters promise to track everything from your daily walk to your gym workout. But beneath those sleek straps lies a surprisingly tricky design question: where on the body should we put the sensors so that they see enough of our movement without turning us into wired-up robots? This study introduces a rich new dataset that helps scientists answer exactly that, showing how different wearable layouts can read what we are doing and how hard we are working.

Many trackers, one big blind spot

Human activity recognition is the technology that lets devices infer whether you are sitting, walking, running, or cycling from motion data. Cameras can do this too, but body-worn sensors are better suited to long-term, privacy-preserving use in homes, clinics, and everyday life. However, most existing datasets for this research place only a few sensors on select body parts, such as a phone in the pocket or a single band on the wrist. That limited view makes it hard to study an important trade-off: how many sensors, and in which locations, are really needed to recognize activities and their intensity accurately while remaining comfortable and practical to wear?

Building a full-body motion map

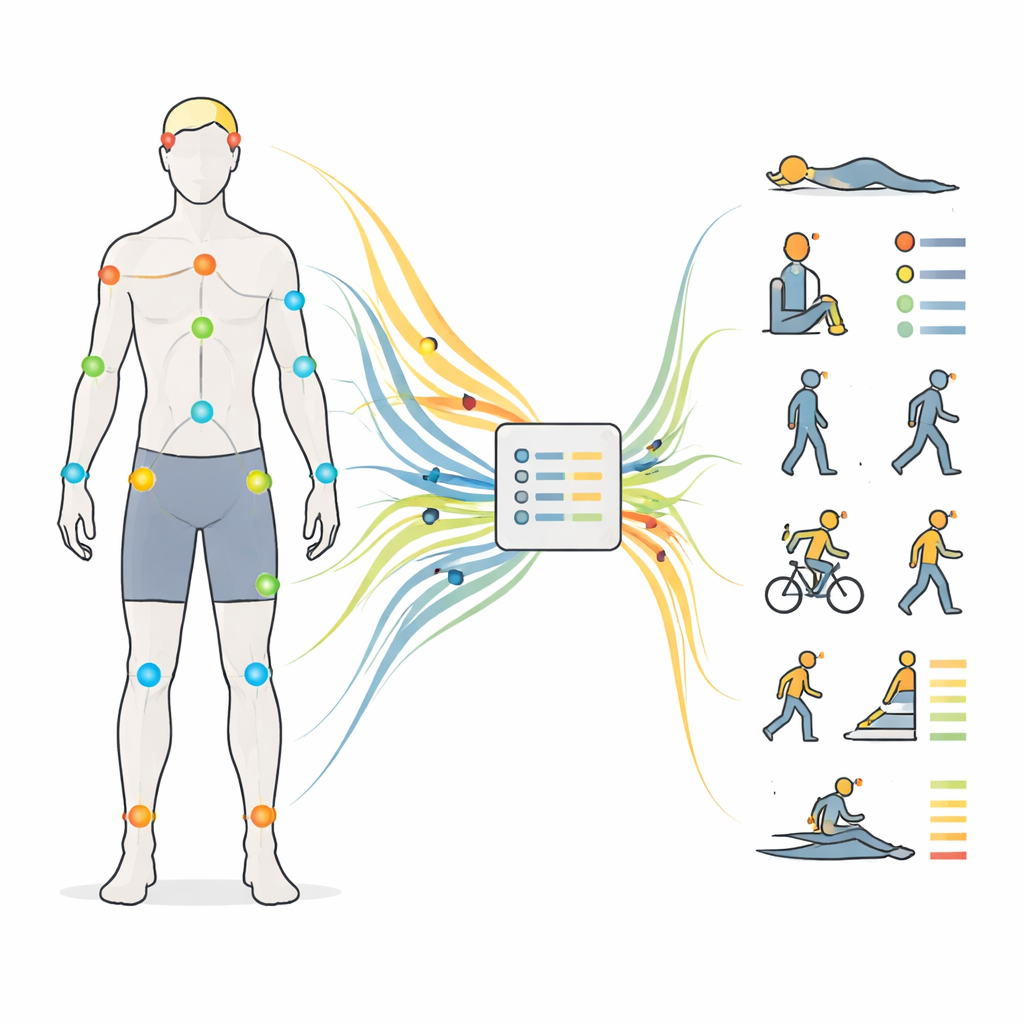

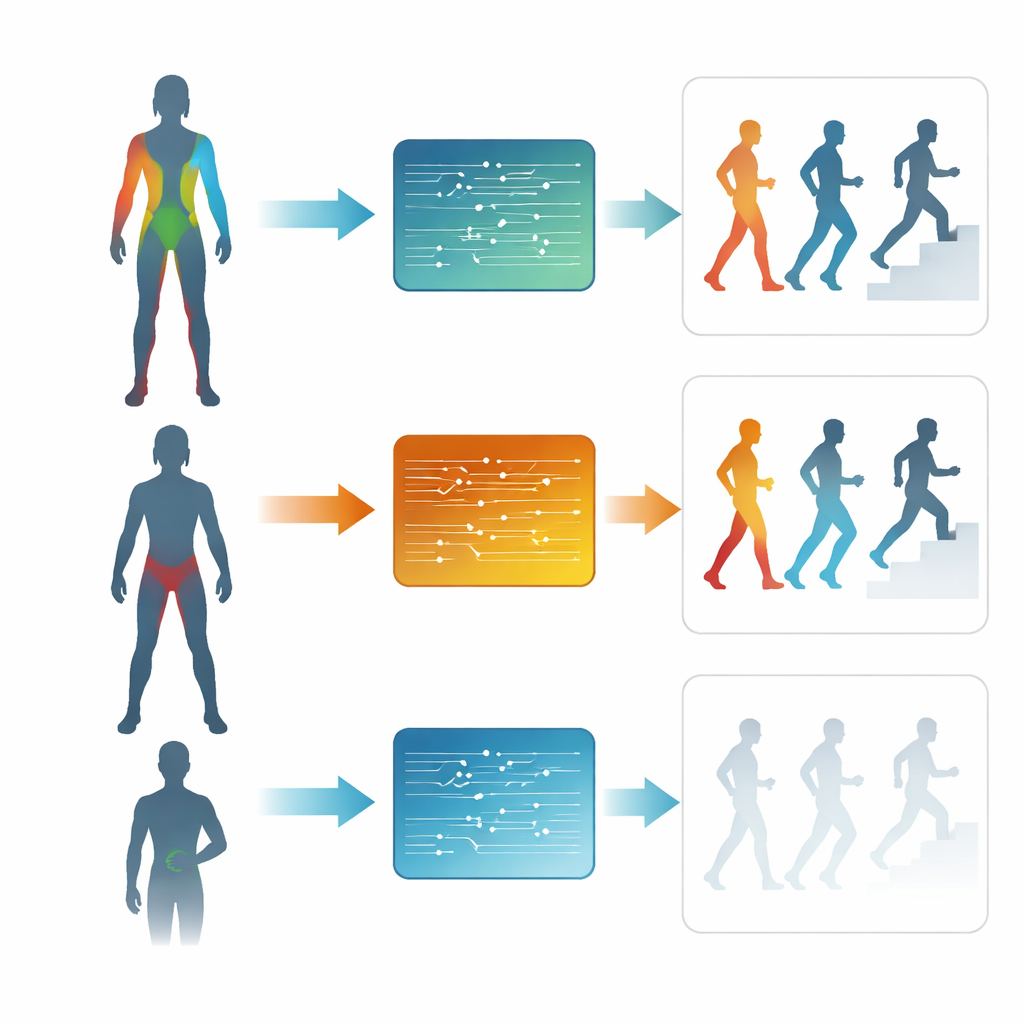

To close this gap, the researchers collected motion data from 30 healthy young adults as they performed 12 common activities, including lying, sitting, standing, several walking speeds, stair climbing, cycling, running, jumping, and rowing. Each person wore 17 small inertial measurement units (IMUs) distributed from head to feet, with placements including the head, upper back, lower back, shoulders, arms, wrists, thighs, shanks, and feet. These units recorded how each body segment moved in three dimensions, 60 times per second (60 Hz), in a consistent global coordinate system. The team also logged basic body measurements, such as height and limb lengths, and carefully labeled both the type of activity and its effort level, from sedentary to vigorous, based on standard energy-expenditure tables.
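Energy-expenditure tables typically express effort in METs (metabolic equivalents, multiples of resting energy use), and effort levels are then read off fixed cut-offs. The sketch below assumes the commonly used thresholds of 1.5, 3 and 6 METs; this summary does not state the paper's exact thresholds, and the MET values in `EXAMPLE_METS` are hypothetical placeholders rather than the dataset's labels.

```python
from enum import Enum


class Intensity(Enum):
    SEDENTARY = 0
    LIGHT = 1
    MODERATE = 2
    VIGOROUS = 3


def intensity_from_met(met: float) -> Intensity:
    """Map a MET value to one of four effort levels.

    Assumed cut-offs (a common convention, not confirmed for this dataset):
    sedentary <= 1.5, light < 3.0, moderate < 6.0, vigorous >= 6.0.
    """
    if met <= 1.5:
        return Intensity.SEDENTARY
    if met < 3.0:
        return Intensity.LIGHT
    if met < 6.0:
        return Intensity.MODERATE
    return Intensity.VIGOROUS


# Hypothetical MET values, for illustration only (not the paper's labels).
EXAMPLE_METS = {"lying": 1.0, "slow walking": 2.8, "brisk walking": 4.3, "running": 9.8}

for activity, met in EXAMPLE_METS.items():
    print(f"{activity}: {met} MET -> {intensity_from_met(met).name}")
```

Because the levels come from fixed thresholds, the same activity can only change class if its assigned MET value crosses a cut-off, which keeps the intensity labels reproducible across participants.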

From raw motion to recognizable patterns

Once the data were collected, the signals were cut into short, overlapping time windows ranging from half a second to 10 seconds. For the traditional machine learning models, the team distilled each window into sets of handcrafted features that describe how the signals behave over time and frequency, such as their averages, variability, and dominant rhythms. They then trained four widely used models, two classic approaches and two deep learning networks, on two tasks: distinguishing the 12 activities, and grouping them into four effort levels. All training and testing were done subject-wise: each person's data appeared in either the training set or the test set, never both, so the models had to learn general patterns rather than memorize an individual's style of movement.
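The windowing, feature extraction, and subject-wise split described above can be sketched with the Python standard library alone. This is an illustration under stated assumptions, not the authors' pipeline: the 50% overlap, the three features, and the simple 80/20 hold-out split are choices made here (the paper may use cross-validation and a much richer feature set), and the input is a synthetic 2 Hz sine wave standing in for one IMU axis.

```python
import cmath
import math
import random
import statistics

FS = 60  # sampling rate in Hz, as stated for this dataset


def sliding_windows(signal, window_s, overlap=0.5, fs=FS):
    """Split a 1-D signal into overlapping windows (overlap fraction is an assumption)."""
    size = int(round(window_s * fs))
    step = max(1, int(round(size * (1 - overlap))))
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, step)]


def dominant_frequency(window, fs=FS):
    """Frequency in Hz of the strongest non-DC bin, using a naive DFT."""
    n = len(window)
    mean = statistics.fmean(window)
    centered = [x - mean for x in window]
    best_k, best_mag = 0, -1.0
    for k in range(1, n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(centered))
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    return best_k * fs / n


def window_features(window):
    """A few illustrative handcrafted features (average, variability, rhythm)."""
    return {
        "mean": statistics.fmean(window),
        "std": statistics.pstdev(window),
        "dominant_hz": dominant_frequency(window),
    }


def subject_wise_split(subject_ids, test_fraction=0.2, seed=0):
    """Assign whole subjects to train or test so no person appears in both."""
    subjects = sorted(set(subject_ids))
    random.Random(seed).shuffle(subjects)
    n_test = max(1, round(len(subjects) * test_fraction))
    test = set(subjects[:n_test])
    return set(subjects) - test, test


# Synthetic 10 s signal with a 2 Hz "gait-like" rhythm, standing in for one axis.
sig = [math.sin(2 * math.pi * 2.0 * t / FS) for t in range(10 * FS)]
wins = sliding_windows(sig, window_s=2.0)
feats = window_features(wins[0])
train, test = subject_wise_split(range(1, 31))  # 30 participants
print(len(wins), feats, len(train), len(test))
```

Keeping subjects intact is the key design choice: splitting at the window level would place near-identical neighboring windows from the same person in both sets and inflate accuracy. Note also that a 2-second window at 60 Hz holds 120 samples, so the dominant-rhythm feature resolves frequencies in steps of 60/120 = 0.5 Hz; shorter windows give coarser steps.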

What really matters: time and placement

The results show that, with well-chosen features, classic models recognize activities with around 96–97% accuracy and classify effort levels even more reliably. Deep learning models trained directly on raw signals perform nearly as well, especially on shorter time windows. Across all approaches, windows of about 2–5 seconds strike the best balance between quick response and reliable classification: long enough to capture the rhythm of walking or rowing, yet short enough for real-time feedback.

When it comes to sensor placement, the findings are striking. A layout focused on the lower body (hips, thighs, shanks, and feet) often matches or even surpasses full-body coverage, particularly for judging intensity. A minimal three-sensor setup on the lower back, thigh, and shank still exceeds 90% accuracy, whereas single-sensor setups, especially at the wrist, perform noticeably worse.
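Comparing layouts amounts to feeding the same model different subsets of sensors. A minimal sketch of that channel-selection step, assuming each sensor yields three channels per sample; the sensor keys in `LAYOUTS` are hypothetical names that follow this summary's wording, not the dataset's actual file format.

```python
FS = 60  # samples per second, as stated for this dataset

# Hypothetical sensor keys following this summary's wording; the
# dataset's real naming and file layout may differ.
LAYOUTS = {
    "lower_body": ["hips", "l_thigh", "r_thigh", "l_shank", "r_shank", "l_foot", "r_foot"],
    "minimal_3": ["lower_back", "r_thigh", "r_shank"],
    "wrist_only": ["r_wrist"],
}


def select_layout(recording, layout):
    """Concatenate, per sample, the channels of the sensors in `layout`.

    `recording` maps a sensor name to a list of per-sample channel tuples
    (assumed here to be 3-axis values). Sensors outside the layout are ignored.
    """
    missing = [s for s in layout if s not in recording]
    if missing:
        raise KeyError(f"sensors not in recording: {missing}")
    n_samples = len(recording[layout[0]])
    return [
        tuple(value for sensor in layout for value in recording[sensor][i])
        for i in range(n_samples)
    ]


# Synthetic 2-second recording (120 samples) with a constant reading per sensor.
all_sensors = sorted({s for names in LAYOUTS.values() for s in names})
recording = {s: [(0.0, 0.0, 9.81)] * (2 * FS) for s in all_sensors}

for name, layout in LAYOUTS.items():
    frames = select_layout(recording, layout)
    print(f"{name}: {len(layout)} sensors -> {len(frames[0])} channels x {len(frames)} samples")
```

Each layout's frames would then pass through the same windowing, features, and subject-wise evaluation, so that accuracy differences reflect placement rather than changes to the pipeline.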

Designing smarter, leaner wearables

Results from this new dataset suggest that more sensors are not always better: for everyday movements dominated by the legs, a compact, well-chosen cluster of sensors can rival far more complex systems. That insight can guide the design of future wearables that are lighter, cheaper, and easier to use, yet still reliably track both what people are doing and how hard they are working. By making the full dataset and code publicly available, the authors provide a testbed for refining sensor layouts, exploring new algorithms, and eventually extending these tools to older adults, patients, and more varied real-world settings.

Citation: Feng, M., Zhang, Q. & Fang, H. A comprehensive IMU dataset for evaluating sensor layouts in human activity and intensity recognition. Sci Data 13, 317 (2026). https://doi.org/10.1038/s41597-026-06710-9

Keywords: wearable sensors, human activity recognition, inertial measurement units, sensor placement, physical activity intensity