Clear Sky Science

An EEG dataset for handwriting imagery decoding of Chinese character strokes and Pinyin single vowels

Reimagining Writing Without Moving a Muscle

For people who lose the ability to write after a stroke or injury, the simple act of jotting down a note can become impossible. Brain‑computer interfaces aim to bridge this gap by turning thoughts directly into text or movement. Until now, the most successful systems have relied on brain implants—powerful but invasive. This study takes an important step toward a safer alternative, releasing the first open collection of brainwave recordings of people imagining handwriting Chinese character strokes and Pinyin vowels, paving the way for future non‑invasive “thought‑to‑text” tools.

Why Brain Signals for Writing Matter

Handwriting is a remarkably efficient way to communicate: it is fast, compact, and familiar to almost everyone. Many brain‑computer interface efforts have focused on large, simple movements such as reaching or grasping, or on spelling by selecting letters one by one with a mental “cursor.” Impressive work with implanted electrodes has already shown that decoding imagined handwriting is possible at speeds approaching everyday typing. But brain surgery is not a realistic option for most patients, and long‑term stability of implants remains a concern. A non‑invasive approach using scalp electrodes to record brainwaves could be used widely in clinics, homes, and rehabilitation centers—if scientists can reliably read the faint, noisy signals associated with imagined pen strokes.

Designing a Rich Brainwave Library

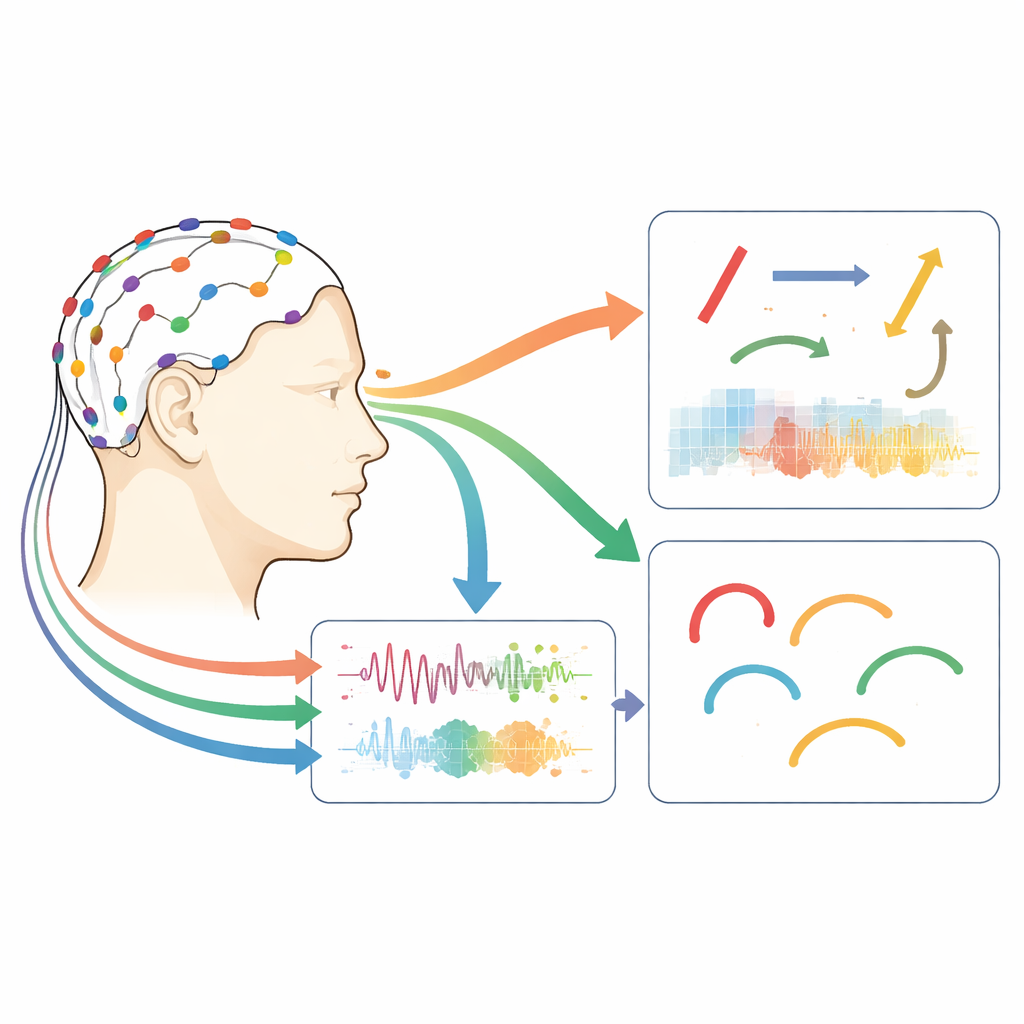

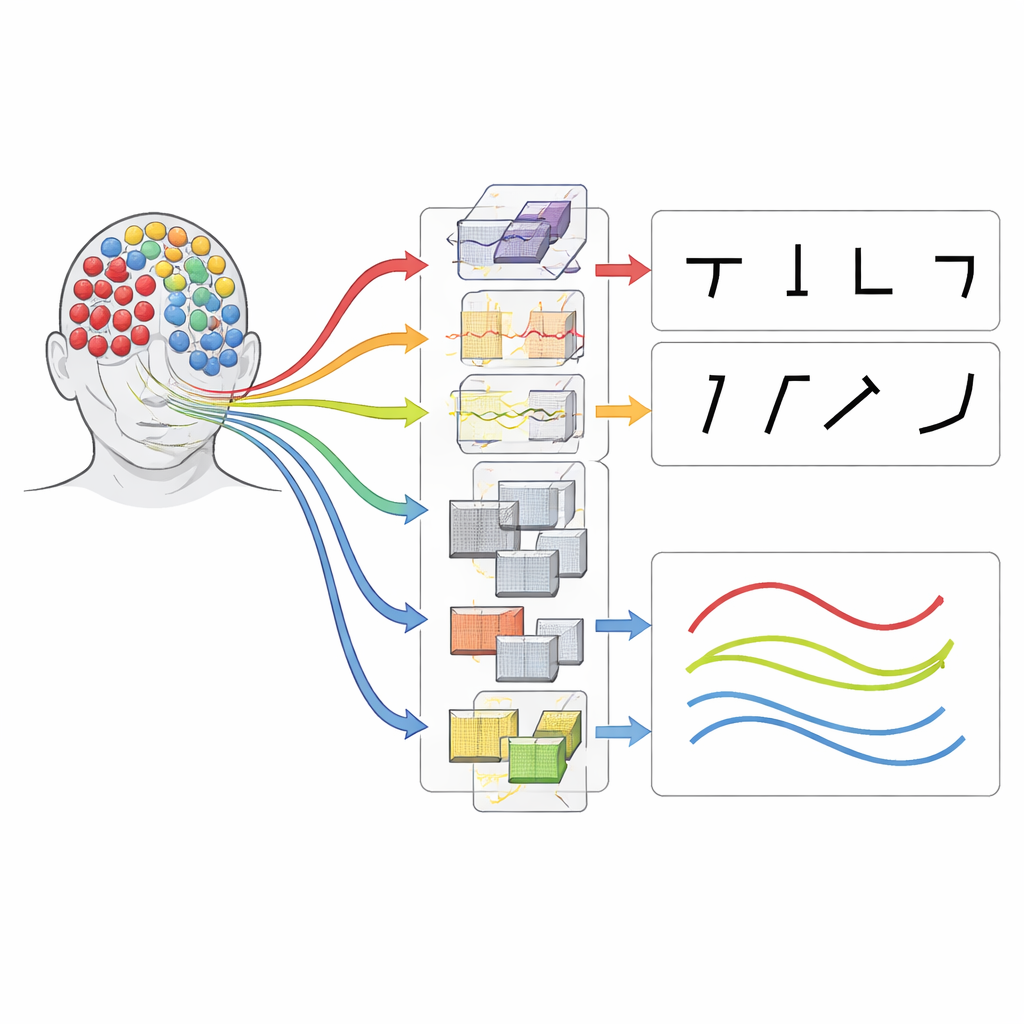

To tackle this challenge, the researchers recruited 21 healthy right‑handed adults and recorded their brain activity using a cap with 32 sensors. Each person took part in two sessions held at least a day apart, providing a way to test how stable the signals are over time. The team used two carefully planned mental tasks. In the first, volunteers imagined writing five basic strokes used to build Chinese characters—simple lines and curves that, in combination, can form almost any character. In the second, they imagined writing six single vowels from Hanyu Pinyin, which represent familiar rounded and hooked letter‑like shapes. Each trial began with a short visual animation of the stroke or vowel to remind participants of the movement, followed by a period when the screen went black and they silently imagined tracing the shape once in their mind.
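
The design numbers above can be cross-checked against the 18,480 four-second trials that the dataset reports in total. The short sketch below does that arithmetic. It assumes every participant completed both sessions and that trials were split evenly across the 11 imagined shapes; the article does not state a per-shape trial count, so the 40 it arrives at is an inference, not a reported figure.

```python
# Figures taken from the article.
participants = 21
sessions = 2
n_strokes, n_vowels = 5, 6
total_trials = 18_480
trial_seconds = 4

# Assumption: all participants finished both sessions, with an equal
# number of trials for each of the 11 imagined shapes.
recordings = participants * sessions
classes = n_strokes + n_vowels

trials_per_recording, leftover_a = divmod(total_trials, recordings)
trials_per_class, leftover_b = divmod(trials_per_recording, classes)
imagery_hours = total_trials * trial_seconds / 3600

print(trials_per_recording)     # 440 trials per participant per session
print(trials_per_class)         # 40 trials per shape per session
print(round(imagery_hours, 1))  # about 20.5 hours of imagined writing
```

Both divisions come out with no remainder, which is at least consistent with a balanced design.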

From Raw Brainwaves to Decodable Patterns

Across both tasks and sessions, the study produced 18,480 four‑second imagination trials, a large and consistently structured dataset by current brain‑computer interface standards. Signals were recorded at a high sampling rate, then organized according to an international standard for brain data so that other researchers can analyze them easily. The shared files preserve the raw recordings, and the authors also describe and release example processing code. In their own tests, they filtered the signals, repaired faulty electrode channels, reduced the data size, and normalized each channel before training a compact deep‑learning model called EEGNet. This model is designed to detect both where in the brain and when in time important patterns occur, making it well suited to the brief bursts of activity that accompany imagined pen movements.
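
To make the four processing steps concrete, here is a minimal sketch of what such a pipeline could look like for a single trial. It is not the authors' released code. The sampling rate, the 1 to 40 Hz band, the downsampling factor, and the function names are all hypothetical choices made for illustration, and the channel repair is a crude stand-in: real pipelines usually interpolate a bad electrode from its neighbours on the scalp.

```python
import numpy as np


def bandpass_fft(x, fs, low_hz, high_hz):
    """Zero every frequency bin outside [low_hz, high_hz] (a simple brick-wall filter)."""
    spectrum = np.fft.rfft(x, axis=-1)
    freqs = np.fft.rfftfreq(x.shape[-1], d=1.0 / fs)
    spectrum[..., (freqs < low_hz) | (freqs > high_hz)] = 0.0
    return np.fft.irfft(spectrum, n=x.shape[-1], axis=-1)


def repair_channels(x, bad_idx):
    """Replace each bad channel with the mean of the good ones (crude stand-in)."""
    x = x.copy()
    good = np.setdiff1d(np.arange(x.shape[0]), bad_idx)
    x[bad_idx] = x[good].mean(axis=0)
    return x


def preprocess(trial, fs, bad_idx=(), band=(1.0, 40.0), factor=4):
    """trial: array of shape (n_channels, n_samples) holding one imagination trial."""
    bad = list(bad_idx)
    x = repair_channels(trial, bad) if bad else trial.copy()
    x = bandpass_fft(x, fs, *band)   # the upper cutoff also guards against aliasing
    x = x[:, ::factor]               # reduce data size: keep every `factor`-th sample
    mean = x.mean(axis=1, keepdims=True)
    std = x.std(axis=1, keepdims=True)
    return (x - mean) / (std + 1e-12)  # z-score each channel separately


# Synthetic stand-in for one 4-second, 32-channel trial.
rng = np.random.default_rng(0)
fs = 1000                            # hypothetical sampling rate in Hz
trial = rng.standard_normal((32, 4 * fs))
trial[5] = 0.0                       # simulate a dead electrode
clean = preprocess(trial, fs, bad_idx=[5])
print(clean.shape)                   # (32, 1000)
```

The output keeps all 32 channels but has a quarter of the original samples, and each channel ends up with zero mean and unit variance, which is the kind of input a compact network such as EEGNet is typically trained on.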

How Well Can Thoughts of Writing Be Read?

Using EEGNet, the team asked how accurately a computer could tell which stroke or vowel a person was imagining. When training and testing were done within the same recording session, average accuracies were well above chance (20% for five options, about 17% for six): over 70% for the five‑stroke task and about 67% for the six‑vowel task, with some individuals exceeding 80%. More importantly for real‑world use, models trained on one session and tested on the other still performed well, at about 63% for strokes and 60% for vowels, showing that the brain’s patterns for these mental actions are fairly stable over time. People with prior experience using brain‑computer interfaces tended to achieve higher accuracies, hinting that users can learn to produce clearer, more consistent brain signals. The researchers also found that high‑performing participants showed more focused activity in brain regions linked to hand control and spatial planning, while low performers had more scattered patterns, suggesting potential targets for training or feedback.
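
Because the two tasks have different numbers of options, their raw accuracies are not directly comparable: guessing at random is right one time in five for strokes but only one time in six for vowels. The sketch below puts both on a common scale where 0 means chance and 1 means perfect. It assumes balanced classes and uses the rounded averages quoted above, so the results are approximate; the helper names are illustrative and do not come from the paper.

```python
def chance_level(n_classes):
    """Expected accuracy of random guessing when all classes are equally likely."""
    return 1.0 / n_classes


def chance_corrected(accuracy, n_classes):
    """Rescale accuracy so that 0 is chance level and 1 is perfect decoding."""
    chance = chance_level(n_classes)
    return (accuracy - chance) / (1.0 - chance)


# Rounded averages quoted in the article ("over 70%", "about 67%", and so on).
reported = {
    "strokes": {"n_classes": 5, "within_session": 0.70, "cross_session": 0.63},
    "vowels": {"n_classes": 6, "within_session": 0.67, "cross_session": 0.60},
}

summary = {}
for task, r in reported.items():
    k = r["n_classes"]
    summary[task] = {
        "chance": chance_level(k),
        "within_session": chance_corrected(r["within_session"], k),
        "cross_session": chance_corrected(r["cross_session"], k),
    }
    print(task, {name: round(value, 3) for name, value in summary[task].items()})
```

On this scale the two tasks look closer than the raw percentages suggest: roughly 0.6 for both within a session, and a little above 0.5 for both across sessions.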

What This Means for Future Communication Aids

Instead of presenting a finished gadget, this work offers a carefully built foundation: an openly available, richly annotated collection of brain recordings from imagined handwriting in Chinese. By focusing on both the building blocks of characters (strokes) and the flowing shapes of vowels, the dataset captures different sides of fine motor control and planning. The results show that even with non‑invasive scalp recordings, computers can distinguish between multiple imagined writing movements at rates well above chance and largely maintain that performance across days. For patients who cannot move or speak, future systems built on this resource may eventually allow them to “write” sentences simply by picturing the strokes and shapes of letters in their mind.

Citation: Wang, F., Chen, Y., Wang, P. et al. An EEG dataset for handwriting imagery decoding of Chinese character strokes and Pinyin single vowels. Sci Data 13, 332 (2026). https://doi.org/10.1038/s41597-026-06708-3

Keywords: brain-computer interface, electroencephalography, handwriting imagery, Chinese characters, Pinyin vowels