Clear Sky Science

A Chain-of-thought Reasoning Breast Ultrasound Dataset Covering All Histopathology Categories

Why this research matters

Breast cancer screening increasingly relies on ultrasound, especially for younger women and in places where mammography is less available. Yet even the best artificial intelligence (AI) tools for reading these scans often behave like black boxes, offering a verdict—benign or malignant—without showing how they arrived there. This paper introduces BUS-CoT, a new, openly available breast ultrasound dataset designed not just to help AI spot cancer, but to teach it to “think out loud” in a way that mirrors how expert radiologists reason through difficult cases.

From blurry scans to structured clues

Ultrasound images are noisy and difficult to interpret, even for specialists. Human experts do not simply glance at a scan and jump to a diagnosis; they look for a chain of visual clues—whether a lump is oval or irregular, whether its borders are smooth or spiky, whether it casts a shadow, and whether tiny bright spots hint at calcifications. These clues are then weighed together with standardized rules, such as the BI-RADS system, to estimate the chance that a lesion is cancerous and to decide whether a biopsy is needed. Existing AI systems usually skip this step-by-step reasoning, going straight from pixels to a prediction, which makes their decisions hard to trust and difficult to apply to unusual or rare cases.

A rich new collection of real-world cases

The BUS-CoT dataset tackles these problems by assembling 11,439 breast ultrasound images from 11,850 lesions in 4,838 patients, drawn from publications, open datasets, and online case repositories across multiple continents and ultrasound machine types. Crucially, the collection spans all 99 breast histopathology categories defined by the World Health Organization, from common benign lumps like fibroadenomas to rare and aggressive cancers. This broad coverage addresses a major weakness of earlier datasets, which tend to miss rare diseases altogether, leaving AI systems poorly prepared for exactly the kinds of cases where doctors are most likely to struggle.

Teaching machines to follow a reasoning trail

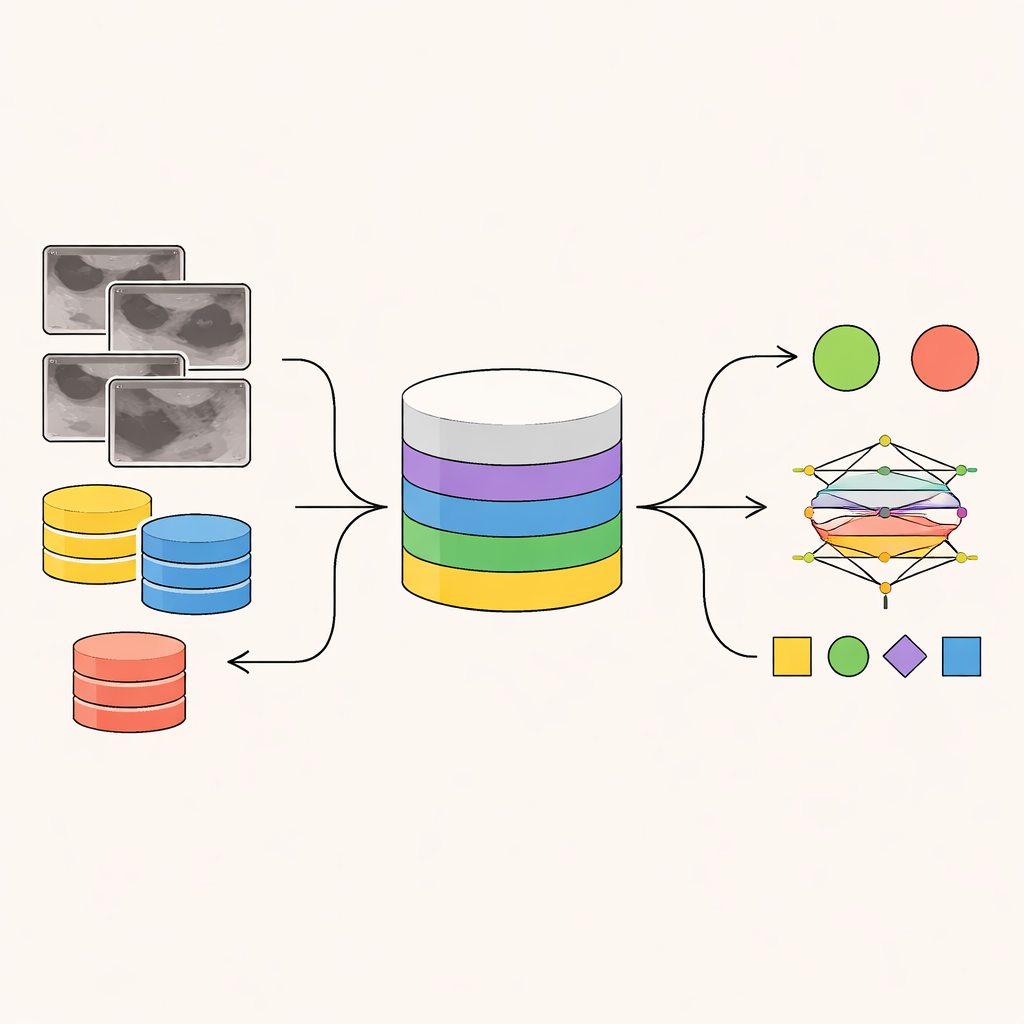

Beyond raw images, BUS-CoT provides multiple layers of expert annotation. Radiologists first record basic observations: whether a mass is present, whether there are calcifications, and where the lesion lies. They then annotate detailed visual features—shape, margins, internal echo patterns, and more—before assigning BI-RADS categories and linking these imaging findings to confirmed pathology from tissue samples. Finally, they convert this structured information into a narrative chain-of-thought: a short step-by-step explanation that connects what is seen on the scan to why a particular diagnosis is likely. Unlike automatically generated text, these reasoning chains are crafted and verified by experienced breast imaging specialists, preserving real clinical logic that models can learn from.
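To make the layering concrete, here is a minimal Python sketch of how one lesion's annotations might be held in a single record and laid out as ordered reasoning steps. The class name, field names, and wording of the steps are all assumptions made for illustration; they are not the dataset's actual schema. The template function only shows how the layers stack up. In BUS-CoT itself, the reasoning chains are written and verified by specialists rather than produced by a template.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LesionAnnotation:
    """Hypothetical record; field names are illustrative, not the dataset's schema."""
    # Layer 1: basic observations
    mass_present: bool
    calcifications: bool
    location: str
    # Layer 2: detailed visual features
    shape: str
    margin: str
    echo_pattern: str
    # Layer 3: standardized assessment and confirmed pathology
    birads: str
    pathology: Optional[str] = None  # left as None when no tissue result is recorded


def to_chain_of_thought(a: LesionAnnotation) -> str:
    """Lay out the annotation layers as a short, ordered narrative."""
    steps = [
        f"Step 1 (observation): {'a mass is' if a.mass_present else 'no mass is'} "
        f"seen in the {a.location}; calcifications are "
        f"{'present' if a.calcifications else 'absent'}.",
        f"Step 2 (features): the shape is {a.shape}, the margin is {a.margin}, "
        f"and the echo pattern is {a.echo_pattern}.",
        f"Step 3 (assessment): these findings correspond to BI-RADS {a.birads}.",
    ]
    if a.pathology is not None:
        steps.append(f"Step 4 (pathology): tissue sampling confirmed {a.pathology}.")
    return "\n".join(steps)


# Made-up example values, not taken from the dataset.
example = LesionAnnotation(
    mass_present=True,
    calcifications=False,
    location="upper outer quadrant of the left breast",
    shape="oval",
    margin="circumscribed",
    echo_pattern="hypoechoic",
    birads="3",
    pathology="fibroadenoma",
)
print(to_chain_of_thought(example))
```

Keeping the structured fields separate from the narrative means the same record can serve both a conventional classifier, which reads the labels, and a vision–language model, which learns from the text.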

Putting the dataset to the test

To show what this resource can do, the authors trained a range of modern image and vision–language models on BUS-CoT, focusing on a curated high-quality subset of 5,163 lesion-centered images. Traditional image networks learned to classify lesions as benign or malignant, while an advanced vision–language model was trained to both view the image and generate a reasoning chain before giving its answer. When the model was forced to reason in this structured way, its accuracy improved, especially for ambiguous cases where benign and malignant lesions look alike. In other words, guiding the model to “walk through” the same visual clues that radiologists use helped it make better, safer decisions.
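As a rough illustration of how a model that reasons before answering could be scored on the benign-versus-malignant task, the Python sketch below pulls the final verdict out of generated text and computes accuracy. It assumes the model ends its output with a line such as "Final answer: malignant"; that format, the function names, and the toy strings are all hypothetical and are not described in the paper.

```python
import re
from typing import Optional, Sequence

# Assumed output convention: the reasoning chain ends with "Final answer: <label>".
_ANSWER = re.compile(r"final answer\s*:\s*(benign|malignant)", re.IGNORECASE)


def extract_label(generated: str) -> Optional[str]:
    """Return the last stated verdict in lowercase, or None if none is found."""
    matches = _ANSWER.findall(generated)
    return matches[-1].lower() if matches else None


def accuracy(outputs: Sequence[str], truths: Sequence[str]) -> float:
    """Fraction of outputs whose extracted verdict matches the true label.

    An output with no recognizable verdict counts as incorrect.
    """
    if len(outputs) != len(truths):
        raise ValueError("outputs and truths must have the same length")
    if not truths:
        raise ValueError("at least one case is required")
    correct = sum(extract_label(o) == t for o, t in zip(outputs, truths))
    return correct / len(truths)


# Toy strings invented for this example; they are not model outputs from the study.
outputs = [
    "Oval shape, circumscribed margin. Final answer: benign",
    "Irregular shape, spiculated margin, posterior shadowing. Final answer: malignant",
    "The margin is indistinct and the findings are inconclusive.",
]
truths = ["benign", "malignant", "malignant"]
print(f"accuracy = {accuracy(outputs, truths):.2f}")
```

Counting a missing verdict as an error is a deliberate choice here: a model that reasons at length but never commits to an answer should not be rewarded for it.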

How this work can shape future care

For patients and clinicians, the promise of BUS-CoT lies in AI tools that not only match human accuracy but also explain themselves in a clinically meaningful manner. By pairing thousands of ultrasound images with carefully documented reasoning and covering the full spectrum of breast tissue diagnoses—even rare ones—this dataset lays the groundwork for AI systems that can handle tough edge cases and justify their recommendations. Although it does not yet include broader clinical information such as genetics or medical history, BUS-CoT is a major step toward more transparent, trustworthy ultrasound-based diagnosis, where machines can act less like mysterious oracles and more like diligent junior colleagues whose thought processes can be inspected and refined.

Citation: Yu, H., Li, Y., Niu, Z. et al. A Chain-of-thought Reasoning Breast Ultrasound Dataset Covering All Histopathology Categories. Sci Data 13, 370 (2026). https://doi.org/10.1038/s41597-026-06702-9

Keywords: breast ultrasound, medical imaging AI, explainable AI, breast cancer diagnosis, clinical datasets