Clear Sky Science

Towards Automated Reporting: A Bronchoscopy Report Dataset for Enhancing Multimodality Large Language Models

Smarter Help for Lung Doctors

When doctors look inside the airways with a tiny camera, they learn a lot about a patient’s lungs—but turning what they see into clear, detailed reports takes time and experience. This study introduces a new, carefully built collection of real bronchoscopy images and reports designed to teach advanced AI systems how to help with that writing. For patients, this could someday mean faster, more consistent reports and fewer chances for important details to be missed.

Why Looking Inside the Lungs Matters

Bronchoscopy is a procedure in which a thin tube with a camera is guided into the airways to inspect the windpipe and branching tubes of the lungs. It helps doctors detect problems such as inflammation, infection, tumors, or bleeding, and can also guide treatments like removing foreign objects or placing tiny supports to keep airways open. Afterward, the doctor must describe what was seen in a formal report, which becomes part of the patient’s medical record and guides treatment decisions. Writing these reports is detailed, repetitive work that depends heavily on the doctor’s training and memory.

Why Existing Data Was Not Enough

In recent years, powerful AI models that can handle both images and text have made progress in reading medical scans and drafting reports. However, for bronchoscopy, the available data used to train such systems has been narrow and incomplete. Earlier datasets often covered only a few tasks—such as spotting a tumor or marking the position of the camera—while ignoring many everyday findings like mucus, mild bleeding, or swelling that doctors routinely describe. Some collections were also private, small, or focused only on simple yes-or-no decisions, making them poor teachers for an AI that needs to write rich, humanlike descriptions of what the camera sees.

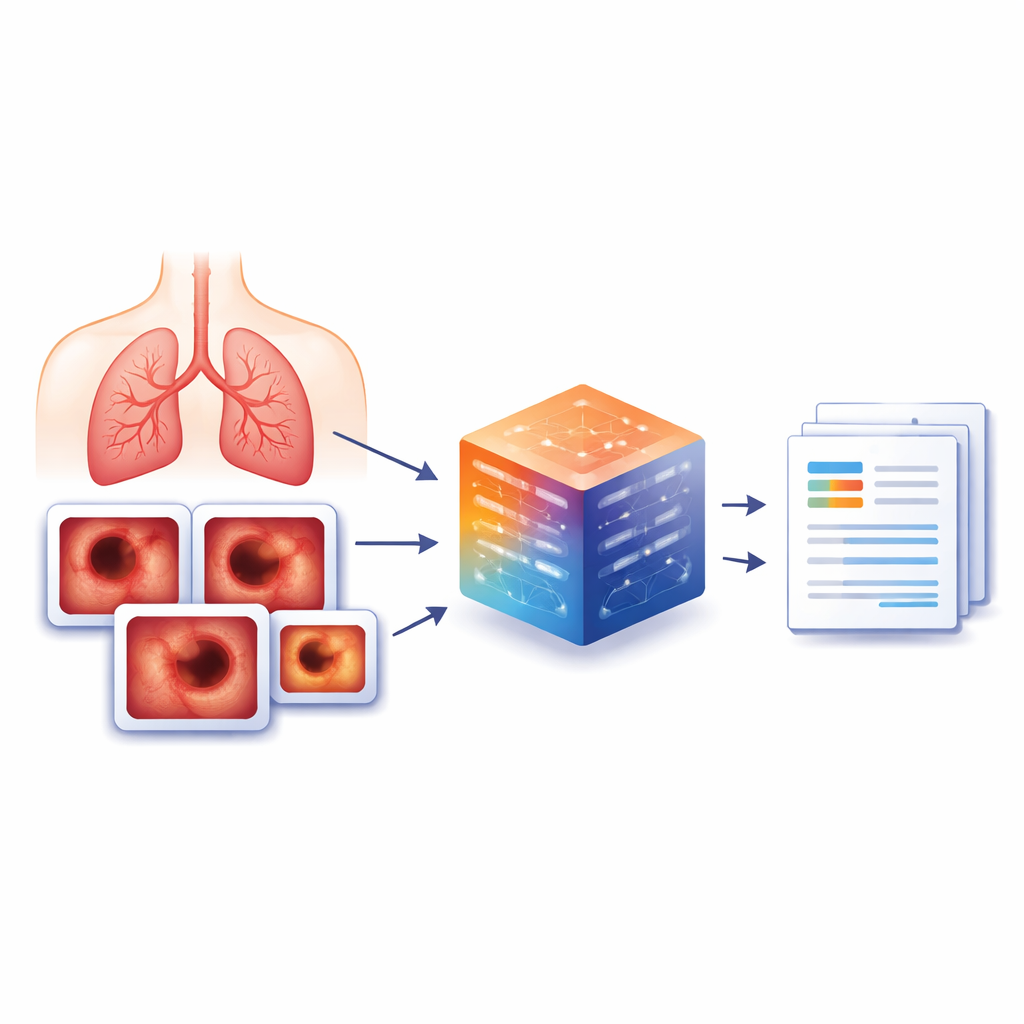

Building a Richer Picture Library

To close this gap, the authors created BERD, a new bronchoscopy examination report dataset built from real procedures at a large hospital in China. From 8,477 bronchoscopies done between 2022 and 2023, they selected 3,692 representative patient cases and 6,330 key images that doctors had marked as especially informative. For each image, trained clinicians linked it to precise written descriptions of what was visible, such as tumors, swelling, deposits, or normal tissue. When an image showed no problem, they used a simple standard phrase like “It is normal” to keep the data consistent. Personal details were removed, and the original Chinese reports were translated into English with a locally run language model to protect privacy.

How Experts and AI Worked Together

Beyond plain descriptions, the team also wanted each image to be tagged with one or more medical categories—like “tumor,” “congestion,” or “edema”—so that AI models could learn both to label and to describe findings. To do this efficiently, senior bronchoscopy specialists first defined a detailed list of categories based on medical guidelines. A locally deployed language model then scanned the text captions to suggest which categories applied to each image. Human experts carefully checked and corrected these suggestions, keeping final control over the medical quality. The result is a finely annotated resource where every image is tied to a clear description, anatomical location, and expert-confirmed labels, all organized in simple files that researchers can use directly.
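
For readers who want a concrete picture, the suggest-then-verify workflow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' pipeline: the field names, the file name, and the keyword list are hypothetical, and a simple keyword match stands in for the locally deployed language model that made the real suggestions.

```python
from dataclasses import dataclass, field

# Hypothetical keywords; in the study the category list was defined by
# senior bronchoscopy specialists, and this table is only an assumption.
CATEGORY_KEYWORDS = {
    "tumor": ("tumor", "neoplasm", "mass"),
    "congestion": ("congestion", "congested"),
    "edema": ("edema", "swelling", "swollen"),
}


@dataclass
class ImageRecord:
    """One annotated image; all field names are illustrative assumptions."""
    image_file: str
    location: str                 # anatomical location of the view
    caption: str                  # written description of the finding
    suggested: list[str] = field(default_factory=list)
    confirmed: list[str] = field(default_factory=list)


def suggest_labels(caption: str) -> list[str]:
    """Stand-in for the language model: propose categories from the caption."""
    text = caption.lower()
    return sorted(
        category
        for category, keywords in CATEGORY_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    )


def expert_review(record: ImageRecord, corrections=None) -> ImageRecord:
    """The human expert has the final say: corrections replace suggestions."""
    if corrections is not None:
        record.confirmed = sorted(corrections)
    else:
        record.confirmed = list(record.suggested)
    return record


record = ImageRecord(
    image_file="example_0001.png",        # hypothetical file name
    location="right main bronchus",
    caption="Mucosal congestion and swelling are visible.",
)
record.suggested = suggest_labels(record.caption)
expert_review(record, corrections={"congestion"})
```

The design point is that the automated step only fills the `suggested` field, while `confirmed` is written solely by the review step, mirroring the way the experts kept final control over the labels.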

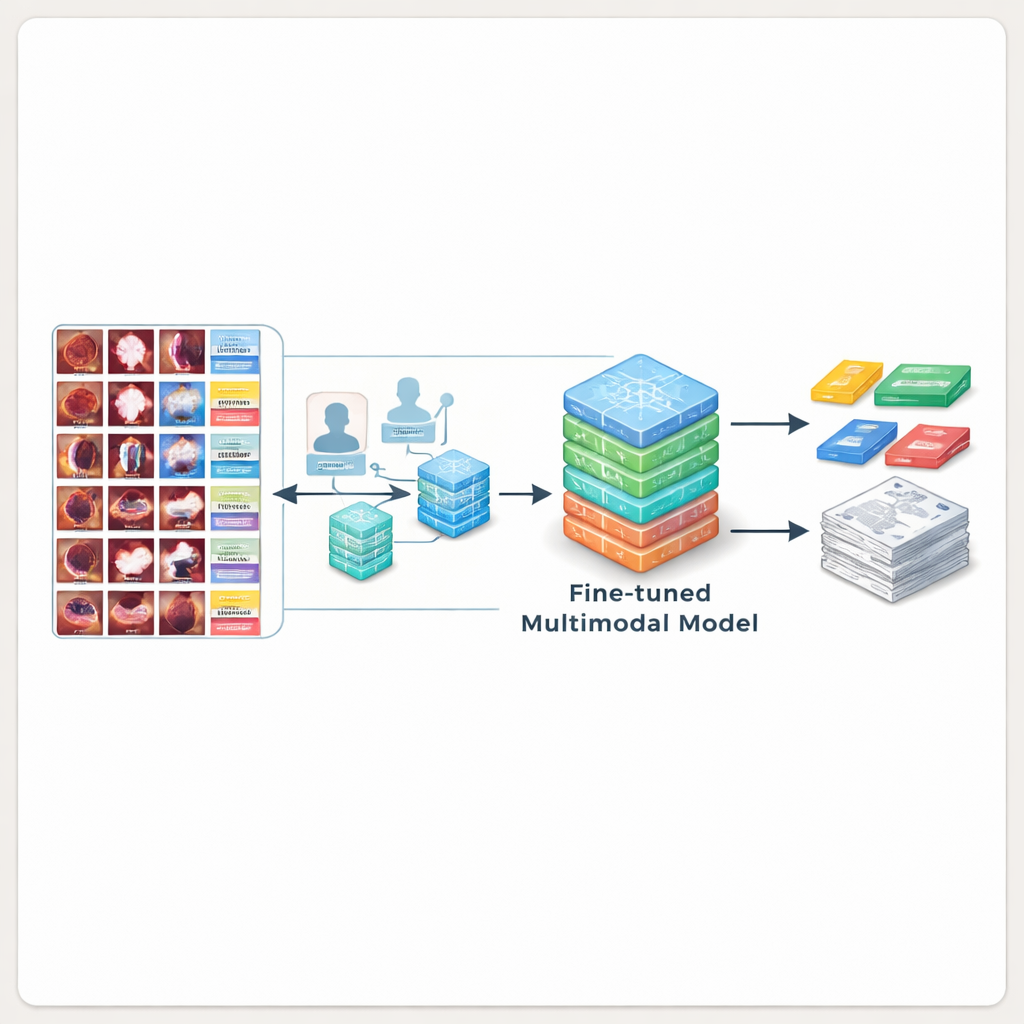

Teaching AI to Write Better Reports

To show that BERD is truly useful, the researchers used it to train several leading multimodal AI models. First, they tested general-purpose and medical AI systems that had never seen bronchoscopy images before. These models often misunderstood what they saw, missing tumors or inventing details, and scored poorly when compared with expert-written text. The team then fine-tuned open-source models on the BERD images and captions. After this extra training, the best model produced descriptions that matched expert wording much more closely and were judged acceptable by clinicians over 80% of the time—meaning the AI-generated text could often be dropped directly into a real report with minimal editing.

What This Means for Future Care

In plain terms, this work provides the missing “training library” that AI systems need to become reliable assistants for bronchoscopy reporting. Although the data come from a single hospital and some numerical details were intentionally removed to avoid misleading the models, the dataset is public, well documented, and large enough to set a new standard for this field. As researchers build on BERD, patients may eventually benefit from quicker, more uniform bronchoscopy reports, giving doctors more time to focus on decisions and treatment rather than paperwork.

Citation: Luo, X., Huang, X., Liang, X. et al. Towards Automated Reporting: A Bronchoscopy Report Dataset for Enhancing Multimodality Large Language Models. Sci Data 13, 339 (2026). https://doi.org/10.1038/s41597-026-06692-8

Keywords: bronchoscopy, medical imaging, clinical reports, multimodal AI, medical datasets