Clear Sky Science

SPICE-HL3: Single-Photon, Inertial, and Stereo Camera dataset for Exploration of High-Latitude Lunar Landscapes

Why Moon Shadows Matter for Robots

Future missions to the Moon’s polar regions hope to tap frozen water and other resources, but these areas are also some of the most visually confusing places in the Solar System. Long, moving shadows, blinding glare, and near-total darkness can easily fool a robot’s cameras. This paper introduces SPICE‑HL3, a new open dataset created in an indoor “piece of the Moon” that lets scientists around the world test how robots see and navigate in these harsh polar conditions, including with a cutting‑edge single‑photon camera that can quite literally see in the dark.

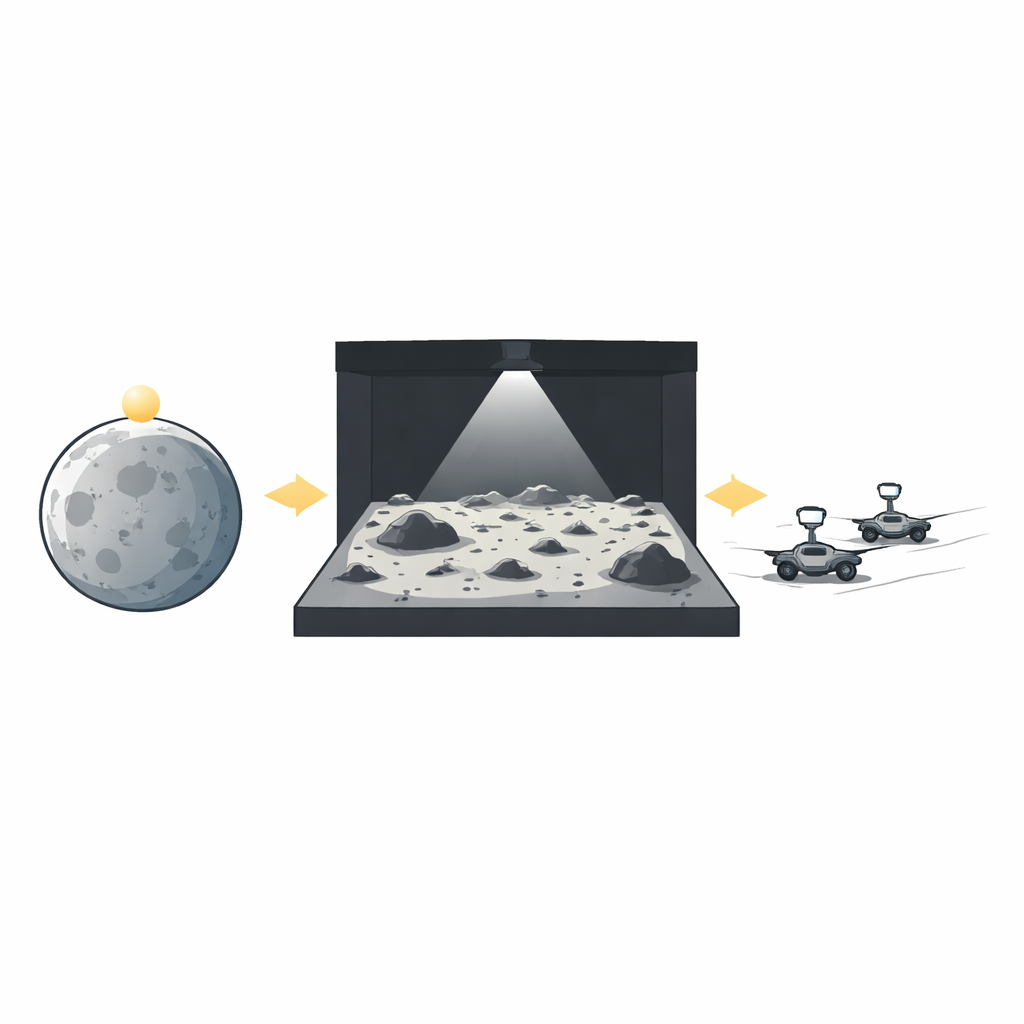

Building a Piece of the Lunar Poles on Earth

Because real data from the Moon’s poles are scarce and expensive to obtain, the team built a controlled test bed called LunaLab at the University of Luxembourg. It is an 11‑by‑8‑meter indoor landscape filled with coarse basalt gravel, rocks, and craters, surrounded by black walls and ceilings to mimic the Moon’s light‑absorbing, airless environment. A powerful, movable spotlight imitates the Sun sitting very low on the horizon, producing long, sharp shadows and huge brightness differences between sunlit slopes and pitch‑black crater interiors. By changing the lamp’s position and output, the researchers reproduced four distinct lighting regimes—reference, noon, dawn/dusk, and night—similar to what a rover would experience over a full lunar day near the poles.

Rovers, Sensors, and a Camera that Counts Single Photons

The dataset was collected using two small wheeled rovers that carried different combinations of cameras and motion sensors. One rover hosted a conventional monochrome camera and a novel single‑photon avalanche diode (SPAD) camera; the other carried a stereo color‑plus‑depth camera with a built‑in motion sensor. Both rovers logged wheel rotation and inertial data, while an overhead motion‑capture system tracked their true positions with sub‑millimeter accuracy. The SPAD camera is the standout technology: instead of measuring light as a smooth intensity value, each pixel reports whether it detected individual photons, with extremely high speed and sensitivity. By combining many of these ultra‑fast binary snapshots, the system can reconstruct images that retain detail even in very dim or extremely high‑contrast scenes where regular cameras tend to blur or saturate.
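The idea of combining many binary snapshots into one image can be sketched in a few lines. The following is a minimal illustration, not the authors' reconstruction pipeline. It assumes a textbook model in which photon arrivals are Poisson-distributed and each pixel reports at most one detection per frame, so the fraction of frames in which a pixel fires, p, relates to the mean photon count per frame by p = 1 - exp(-flux). The function names and the simulated scene are hypothetical.

```python
import numpy as np


def reconstruct_from_binary_frames(frames):
    """Estimate per-pixel photon flux from a stack of 1-bit frames.

    frames: array of shape (T, H, W) holding 0 (no detection) or 1 (detection).
    Returns an (H, W) array of estimated mean photons per frame.
    """
    frames = np.asarray(frames, dtype=np.float64)
    n_frames = frames.shape[0]
    # Fraction of frames in which each pixel fired.
    p = frames.mean(axis=0)
    # Pixels that fired in every frame would give log(0); cap them just below 1.
    p = np.clip(p, 0.0, 1.0 - 0.5 / n_frames)
    # Invert p = 1 - exp(-flux), the assumed Poisson detection model.
    return -np.log1p(-p)


def simulate_binary_frames(flux, n_frames, seed=0):
    """Hypothetical helper: draw 1-bit frames for a known flux map."""
    rng = np.random.default_rng(seed)
    flux = np.asarray(flux, dtype=np.float64)
    p_detect = 1.0 - np.exp(-flux)
    draws = rng.random((n_frames,) + flux.shape)
    return (draws < p_detect).astype(np.uint8)


if __name__ == "__main__":
    # A tiny scene mixing a very dark pixel with a bright one.
    scene = np.array([[0.01, 0.5], [2.0, 0.0]])
    stack = simulate_binary_frames(scene, n_frames=20000)
    print(reconstruct_from_binary_frames(stack))
```

Because the estimate comes from counting detections rather than integrating charge, the same stack of frames can describe both the dim and the bright pixel, which is the intuition behind the high-contrast behavior described above.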

Capturing Lunar‑Like Drives in Many Flavors

To give researchers a rich testing ground, the authors designed seven types of rover paths. These range from long, stop‑and‑go traverses that imitate cautious planetary driving to short, continuous runs in different directions relative to the artificial Sun (toward it, away from it, and sideways), as well as tight on‑the‑spot turns. They repeated these paths at slow walking speeds and again ten times faster, under multiple lighting conditions, sometimes with rover headlights on and sometimes off. In total, SPICE‑HL3 contains 88 time‑synchronized sequences, almost 1.3 million images, and matching motion and ground‑truth data. The images span static scenes ideal for careful analysis and fast sequences that stress motion blur and exposure control. Everything is packed into a clearly organized file structure, with calibration files that describe exactly how each camera and sensor is oriented and how their clocks line up in time.
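Working with time‑synchronized sequences usually means pairing each image with the closest ground‑truth sample. The sketch below shows one common way to do that with NumPy. It does not reflect the dataset's actual file layout or tooling: the function name, the timestamp arrays, and the tolerance are all hypothetical, and it assumes both streams already share one clock and that the pose timestamps are sorted with at least two entries.

```python
import numpy as np


def match_nearest(image_t, pose_t, max_gap):
    """Index of the nearest pose timestamp for each image timestamp.

    image_t: image timestamps in seconds.
    pose_t:  ground-truth timestamps in seconds, sorted ascending, length >= 2.
    max_gap: largest acceptable time difference in seconds.
    Returns an integer array; -1 marks images with no pose within max_gap.
    """
    image_t = np.asarray(image_t, dtype=np.float64)
    pose_t = np.asarray(pose_t, dtype=np.float64)
    # First pose at or after each image time, kept inside valid index range.
    right = np.clip(np.searchsorted(pose_t, image_t), 1, len(pose_t) - 1)
    left = right - 1
    # Choose whichever neighbor is closer in time.
    pick_right = (pose_t[right] - image_t) < (image_t - pose_t[left])
    idx = np.where(pick_right, right, left)
    gap = np.abs(pose_t[idx] - image_t)
    return np.where(gap <= max_gap, idx, -1)


if __name__ == "__main__":
    poses = [0.0, 0.1, 0.2, 0.3]
    images = [0.04, 0.16, 0.31, 1.0]
    print(match_nearest(images, poses, max_gap=0.05))
```

Rejecting matches beyond a tolerance keeps images recorded during a tracking dropout from being silently paired with a stale position.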

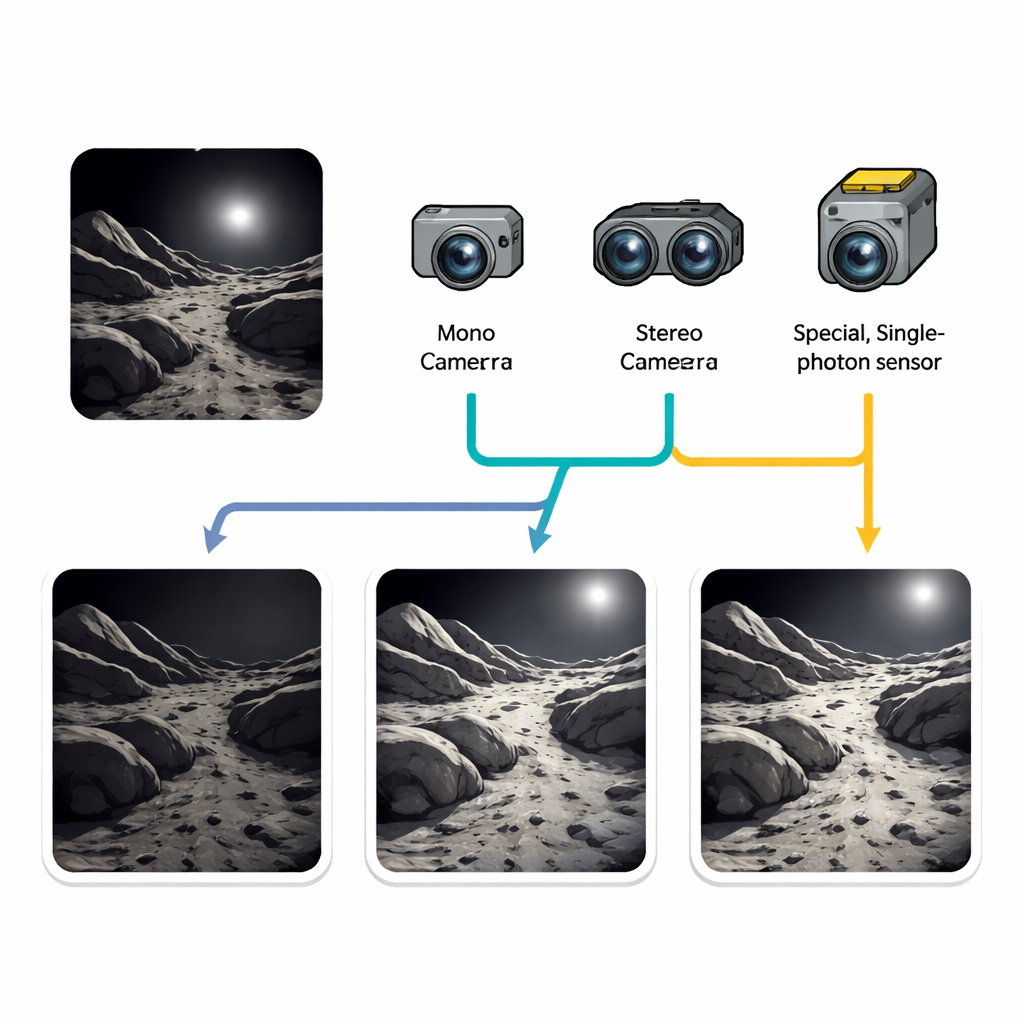

Putting Single‑Photon Vision to the Test

Beyond simply releasing data, the team checked the quality and usefulness of the recorded images. They compared how the SPAD, the monochrome camera, and the stereo camera handled some of the toughest visual situations: dusk and night drives, and runs where the rover faced directly into the “Sun.” Using simple image‑quality measures and visual inspection, they found that the single‑photon camera consistently preserved structure in both bright and shadowed regions, maintained a wide range of brightness levels, and remained stable across a variety of conditions. Conventional cameras performed well when the scene was well lit, but either lost detail in very dark areas or blew out highlights near the light source. The authors also verified that common mapping and localization software could successfully process the dataset, confirming that timestamps, calibrations, and formats are robust enough for real robotics research.
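The summary does not name the image‑quality measures the team used. As an illustration only, the sketch below computes three generic statistics that capture the behaviors described above: the share of pixels crushed to black, the share blown out to white, and the histogram entropy as a rough indicator of how many brightness levels an image actually uses. The function name, the thresholds, and the 8‑bit default are assumptions.

```python
import numpy as np


def exposure_stats(img, bit_depth=8, low_frac=0.02, high_frac=0.98):
    """Simple exposure statistics for a grayscale image.

    img: 2-D array of pixel values in [0, 2**bit_depth - 1].
    low_frac / high_frac: assumed thresholds (fractions of full scale)
    below or above which a pixel counts as crushed or blown out.
    """
    img = np.asarray(img, dtype=np.float64)
    norm = img / (2 ** bit_depth - 1)
    dark = float(np.mean(norm <= low_frac))
    bright = float(np.mean(norm >= high_frac))
    # Shannon entropy of the 256-bin intensity histogram, in bits.
    hist, _ = np.histogram(norm, bins=256, range=(0.0, 1.0))
    prob = hist[hist > 0] / hist.sum()
    entropy = float(-(prob * np.log2(prob)).sum())
    return {
        "dark_fraction": dark,
        "bright_fraction": bright,
        "entropy_bits": entropy,
    }


if __name__ == "__main__":
    ramp = np.arange(256, dtype=np.uint8).reshape(16, 16)
    print(exposure_stats(ramp))
```

Under these assumptions, an image that loses detail in shadows shows a high dark fraction, one that saturates near the light source shows a high bright fraction, and an image that preserves structure in both regions keeps both fractions low with higher entropy.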

Limits, Caveats, and Why This Matters

Although LunaLab cannot perfectly reproduce the tiny dust grains and subtle light‑scattering effects of true lunar soil, and some unintended infrared glow from the motion‑capture system crept into the darkest scenes, the authors argue that SPICE‑HL3 still represents a demanding “worst‑case” optical environment for rover vision. For engineers and scientists preparing missions to the Moon’s poles—or designing robots for any dim, high‑contrast environment—the dataset offers a rare, publicly available benchmark. It makes it possible to fairly compare new camera technologies like SPAD sensors against traditional systems, improve navigation and mapping algorithms, and ultimately help ensure that future rovers can keep moving safely through the Moon’s shifting shadows instead of being stranded in the dark.

Citation: Rodríguez-Martínez, D., van der Meer, D., Song, J. et al. SPICE-HL3: Single-Photon, Inertial, and Stereo Camera dataset for Exploration of High-Latitude Lunar Landscapes. Sci Data 13, 374 (2026). https://doi.org/10.1038/s41597-026-06668-8

Keywords: lunar robotics, planetary navigation, single-photon imaging, robot vision datasets, extreme lighting